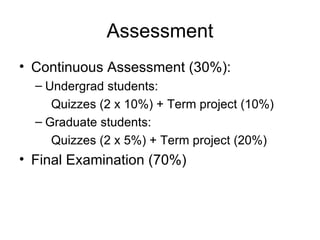

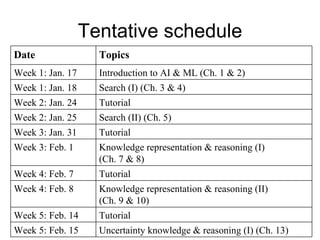

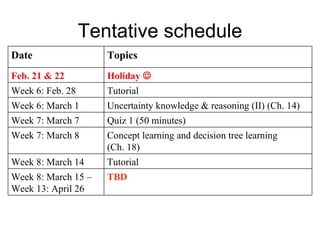

This document provides an overview of the COMP3170 Artificial Intelligence and Machine Learning course at Hong Kong Baptist University. It includes information about the course lecturers and textbook, assessment details, a tentative schedule of topics and dates, and a brief description of the content to be covered each week.

![Rational agents. An agent is an entity that perceives and acts. This course is about designing rational agents. Abstractly, an agent is a function from percept histories to actions: f : P* → A. For any given class of environments and tasks, we seek the agent (or class of agents) with the best performance. Caveat: computational limitations make perfect rationality unachievable → design the best program for the given machine resources.](https://image.slidesharecdn.com/introduction2082/85/Introduction-12-320.jpg)

![Agents and environments. The agent function maps from percept histories to actions: f : P* → A. The agent program runs on the physical architecture to produce f. agent = architecture + program.](https://image.slidesharecdn.com/introduction2082/85/Introduction-20-320.jpg)
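The distinction between the agent function and the agent program can be made concrete with a small sketch. The class below is a hypothetical table-driven agent program: it stores the percept history and looks the whole history up in a table, so the table plays the role of the agent function from percept histories to actions. The class name, the `(location, status)` percept encoding, and the table entries are assumptions made for illustration, not taken from the slides.

```python
from typing import Dict, List, Tuple

# Assumed encoding: a percept is a (location, status) pair, e.g. ("A", "Dirty").
Percept = Tuple[str, str]
Action = str


class TableDrivenAgent:
    """Agent program that implements an agent function given as a lookup table."""

    def __init__(self, table: Dict[Tuple[Percept, ...], Action], default: Action = "NoOp"):
        self.table = table                  # the agent function, keyed by percept history
        self.default = default              # action used when the history is not in the table
        self.percepts: List[Percept] = []   # percept history seen so far

    def __call__(self, percept: Percept) -> Action:
        # Extend the history, then map the entire history to an action.
        self.percepts.append(percept)
        return self.table.get(tuple(self.percepts), self.default)


# A tiny, illustrative table covering only a few short histories.
table = {
    (("A", "Dirty"),): "Suck",
    (("A", "Clean"),): "Right",
    (("A", "Dirty"), ("A", "Clean")): "Right",
}

agent = TableDrivenAgent(table)
first = agent(("A", "Dirty"))    # history of length 1
second = agent(("A", "Clean"))   # history of length 2
third = agent(("B", "Dirty"))    # history of length 3, not in the table
print(first, second, third)
```

The table needs one entry per possible percept history, so it grows very quickly with the length of the history. That is one way to see why the slides stress designing the best program for the available machine resources.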

![Vacuum-cleaner world. Percepts: location and contents, e.g., [A, Dirty]. Actions: Left, Right, Suck, NoOp.](https://image.slidesharecdn.com/introduction2082/85/Introduction-21-320.jpg)
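A minimal sketch of an agent for this world, assuming two squares named A and B (the slide only shows A in its example percept) and a simple reflex rule that looks at the current percept alone. The function names and the simulation loop are hypothetical and only meant to show how percepts turn into actions.

```python
from typing import Dict, List, Tuple


def reflex_vacuum_agent(percept: Tuple[str, str]) -> str:
    """Pick an action from the current percept only (no history)."""
    location, status = percept
    if status == "Dirty":
        return "Suck"
    # Assumption: the world has exactly two squares, A on the left and B on the right.
    return "Right" if location == "A" else "Left"


def run(world: Dict[str, str], location: str, steps: int) -> Tuple[List[str], Dict[str, str]]:
    """Simulate the agent for a fixed number of steps and record its actions."""
    world = dict(world)  # copy so the caller's dictionary is not modified
    actions = []
    for _ in range(steps):
        action = reflex_vacuum_agent((location, world[location]))
        actions.append(action)
        if action == "Suck":
            world[location] = "Clean"
        elif action == "Right":
            location = "B"
        elif action == "Left":
            location = "A"
    return actions, world


actions, final_world = run({"A": "Dirty", "B": "Dirty"}, "A", 4)
print(actions)      # ['Suck', 'Right', 'Suck', 'Left']
print(final_world)  # {'A': 'Clean', 'B': 'Clean'}
```

This particular rule never chooses `NoOp`, so the agent keeps moving back and forth once both squares are clean. An agent that tracked which squares it had already cleaned could stop instead, at the cost of keeping some internal state.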