Incremental Sense Weight Training for In-depth Interpretation of Contextualized Word Embeddings

•

0 likes•42 views

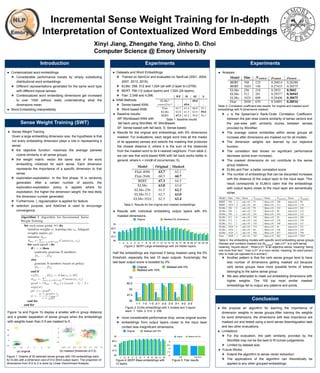

AAAI Conference on Artificial Intelligence: Student Abstract and Poster Program. Authors: Xinyi Jiang, Zhengzhe Yang, Jinho D. Choi. Year: 2020. https://arxiv.org/abs/1911.01623

Recommended

Dynamic pooling and unfolding recursive autoencoders for paraphrase detection

Paper survey of "Dynamic pooling and unfolding recursive autoencoders for paraphrase detection"

Deep Implicit Layers: Learning Structured Problems with Neural Networks

How to combine algorithm and deep learning?

Machine learning by using python By: Professor Lili Saghafi

Machine learning is the kind of programming which gives computers the capability to automatically learn from data without being explicitly programmed.

This means in other words that these programs change their behavior by learning from data.

In this course we will cover various aspects of machine learning

Of course, everything will be related to Python. So it is Machine Learning by using Python.

What is the best programming language for machine learning?

Python is clearly one of the top players!

k-nearest Neighbor Classifier

Neural networks

Neural Networks from Scratch in Python

Neural Network in Python using NumPy

Dropout Neural Networks

Neural Networks with Scikit

Machine Learning with Scikit and Python

Naive Bayes Classifier

Introduction into Text Classification using Naive Bayes and Python

Quality Prediction in Fingerprint Compression

A new algorithm for fingerprint compression based on sparse representation is introduced. First, a dictionary is constructed by sparse combination of a set of fingerprint patches. A dictionary can be designed either by selecting one from a prespecified set or by adapting one to a set of training signals. In this paper, we use the K-SVD algorithm to construct the dictionary. After the dictionary is computed, the image is quantized, filtered, and encoded. The resulting image may be of one of three qualities: Good, Bad, or Ugly (the GBU problem). In this paper, we overcome the GBU problem by predicting the quality of the image.

Machine Learning and Data Mining: 13 Nearest Neighbor and Bayesian Classifiers

Course "Machine Learning and Data Mining" for the degree of Computer Engineering at the Politecnico di Milano. This lecture introduces nearest neighbor and Bayesian classifiers

K-Nearest Neighbor Classifier

K-nearest neighbor is one of the most commonly used classifiers based on lazy learning. It is also among the most commonly used methods in recommendation systems and document similarity measures. It mainly uses Euclidean distance to measure the similarity between two data points.

Machine Learning Lecture 2 Basics

This presentation is part of an ML course and deals with some basic concepts, such as the different types of learning, definitions of classification and regression, and decision surfaces. The slide set also outlines the Perceptron learning algorithm as a starter for the more complex models that follow in the rest of the course.

Knowledge distillation deeplab

Compress a neural network using the knowledge distillation technique, reducing the parameter count enough to run on embedded devices

L05 language model_part2

Discusses the concept of language models in natural language processing. N-gram models and Markov chains are discussed. Smoothing techniques such as add-1 smoothing, interpolation, and discounting methods are addressed.

MATLAB Code + Description : Real-Time Object Motion Detection and Tracking

This file contains a simple description of a computer vision project I created as an undergraduate student in 2014 to detect object motion and track whatever is moving.

The MATLAB code of the system is also available in the document.

Find me on:

AFCIT

http://www.afcit.xyz

YouTube

https://www.youtube.com/channel/UCuewOYbBXH5gwhfOrQOZOdw

Google Plus

https://plus.google.com/u/0/+AhmedGadIT

SlideShare

https://www.slideshare.net/AhmedGadFCIT

LinkedIn

https://www.linkedin.com/in/ahmedfgad/

ResearchGate

https://www.researchgate.net/profile/Ahmed_Gad13

Academia

https://www.academia.edu/

Google Scholar

https://scholar.google.com.eg/citations?user=r07tjocAAAAJ&hl=en

Mendeley

https://www.mendeley.com/profiles/ahmed-gad12/

ORCID

https://orcid.org/0000-0003-1978-8574

StackOverFlow

http://stackoverflow.com/users/5426539/ahmed-gad

Twitter

https://twitter.com/ahmedfgad

Facebook

https://www.facebook.com/ahmed.f.gadd

Pinterest

https://www.pinterest.com/ahmedfgad/

Derivation of Convolutional Neural Network (ConvNet) from Fully Connected Net...

In image analysis, convolutional neural networks (CNNs or ConvNets for short) are more time- and memory-efficient than fully connected (FC) networks. But why? What are the advantages of ConvNets over FC networks in image analysis? How is a ConvNet derived from FC networks? Where did the term convolution in CNNs come from? These questions are answered in this article.

Image analysis has a number of challenges such as classification, object detection, recognition, description, etc. If an image classifier, for example, is to be created, it should be able to work with a high accuracy even with variations such as occlusion, illumination changes, viewing angles, and others. The traditional pipeline of image classification with its main step of feature engineering is not suitable for working in rich environments. Even experts in the field won’t be able to give a single or a group of features that are able to reach high accuracy under different variations. Motivated by this problem, the idea of feature learning came out. The suitable features to work with images are learned automatically. This is the reason why artificial neural networks (ANNs) are one of the robust ways of image analysis. Based on a learning algorithm such as gradient descent (GD), ANN learns the image features automatically. The raw image is applied to the ANN and ANN is responsible for generating the features describing it.

-Reference

Aghdam, Hamed Habibi, and Elnaz Jahani Heravi. Guide to Convolutional Neural Networks: A Practical Application to Traffic-Sign Detection and Classification. Springer, 2017.

Clustering part 1

Basic clustering algorithms and methods - describing hierarchical clustering method and k-means clustering method.

Data Science - Part VII - Cluster Analysis

This lecture provides an overview of clustering techniques, including K-Means, Hierarchical Clustering, and Gaussian Mixture Models. We will go through some methods of calibration and diagnostics and then apply the technique on a recognizable dataset.

Customer Segmentation using Clustering

Introduction to customer segmentation using hierarchical and k-means clustering. Including some practice with R

K-Nearest Neighbor (KNN)

Machine learning algorithm (KNN) for classification and regression.

Lazy learning, competitive and instance-based learning.

Fuzzy neural networks

The fuzzy soft set approach plays a crucial role in decision making by using the Dempster–Shafer theory of evidence. First, the uncertain degrees of several parameters are obtained via grey relational analysis, which is applied to calculate the grey mean relational degree. Second, mass functions of the different independent choices with several parameters are given according to the uncertain degree. Last, to aggregate the choices into a collective choice, Dempster’s rule of evidence combination is utilized. This soft-computing-based method has been applied to a decision-making problem.

Multi-task Learning for Dense Prediction Tasks in Computer Vision

Presenting multi-task learning techniques for dense prediction tasks in computer vision

Mca2020 advanced data structure

Dear students get fully solved assignments

Send your semester & Specialization name to our mail id :

help.mbaassignments@gmail.com

or

call us at : 08263069601

Neural collaborative filtering - Presentation

25 May 2017, Sungkyunkwan University Data Mining Lab, in-lab presentation, 이현성

Catching co occurrence information using word2vec-inspired matrix factorization

Lab presentation material

Improving the performance of matrix factorization models using item co-occurrence

Distilling Linguistic Context for Language Model Compression

A computationally expensive and memory intensive neural network lies behind the recent success of language representation learning. Knowledge distillation, a major technique for deploying such a vast language model in resource-scarce environments, transfers the knowledge on individual word representations learned without restrictions. In this paper, inspired by the recent observations that language representations are relatively positioned and have more semantic knowledge as a whole, we present a new knowledge distillation objective for language representation learning that transfers the contextual knowledge via two types of relationships across representations: Word Relation and Layer Transforming Relation. Unlike other recent distillation techniques for the language models, our contextual distillation does not have any restrictions on architectural changes between teacher and student. We validate the effectiveness of our method on challenging benchmarks of language understanding tasks, not only in architectures of various sizes, but also in combination with DynaBERT, the recently proposed adaptive size pruning method.

Similar to Incremental Sense Weight Training for In-depth Interpretation of Contextualized Word Embeddings

Evolving Reinforcement Learning Algorithms, JD. Co-Reyes et al, 2021

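I summarized and presented the paper Evolving Reinforcement Learning Algorithms at an RL paper review study group. The paper designs a language for expressing the loss functions of value-based, model-free RL agents and proposes loss functions that are better optimized than the existing DQN. I hope it is helpful to many people.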

End-to-end sequence labeling via bi-directional LSTM-CNNs-CRF

PyTorch tutorial for end-to-end sequence labeling via bi-directional LSTM-CNNs-CRF

Intrinsic and Extrinsic Evaluations of Word Embeddings

In this paper, we first analyze the semantic composition of word embeddings by cross-referencing their clusters with the manual lexical database, WordNet. We then evaluate a variety of word embedding approaches by comparing their contributions to two NLP tasks. Our experiments show that the word embedding clusters give high correlations to the synonym and hyponym sets in WordNet, and give 0.88% and 0.17% absolute improvements in accuracy to named entity recognition and part-of-speech tagging, respectively.

Word embedding

Word embedding, Vector space model, language modelling, Neural language model, Word2Vec, GloVe, fastText, ELMo, BERT, DistilBERT, RoBERTa, SBERT, Transformer, Attention

TextRank: Bringing Order into Texts

This is a short presentation that explains the famous TextRank papers that used graphs to produce summaries and document indices (keywords).

Link to paper : https://web.eecs.umich.edu/~mihalcea/papers/mihalcea.emnlp04.pdf

Supervised WSD Using Master- Slave Voting Technique

IOSR Journal of Computer Engineering (IOSR-JCE) is a double blind peer reviewed International Journal that provides rapid publication (within a month) of articles in all areas of computer engineering and its applications. The journal welcomes publications of high quality papers on theoretical developments and practical applications in computer technology. Original research papers, state-of-the-art reviews, and high quality technical notes are invited for publications.

Deep Reinforcement Learning with Distributional Semantic Rewards for Abstract...

Deep reinforcement learning (RL) has been a commonly-used strategy for the abstractive summarization task to address both the exposure bias and non-differentiable task issues. However, the conventional reward ROUGE-L simply looks for exact n-grams matches between candidates and annotated references, which inevitably makes the generated sentences repetitive and incoherent. In this paper, we explore the practicability of utilizing the distributional semantics to measure the matching degrees. Our proposed distributional semantics reward has distinct superiority in capturing the lexical and compositional diversity of natural language.

sentiment analysis

Application of several machine learning algorithms for sentiment analysis and comparison of results.

Neural Network in Knowledge Bases

In this talk given in DSR lab I discuss the applications of Neural Networks in Knowledge Base expansion and inference.

Comparative Analysis of Transformer Based Pre-Trained NLP Models

BERT, RoBERTa, and ALBERT pre-trained NLP models for multi-class sentiment analysis on a non-benchmark dataset.

IRE Semantic Annotation of Documents

Semantic annotation is done by first representing words and documents in the vector space model using Word2Vec and Doc2Vec implementations. The vectors are then used as features to train a classifier, producing a model that can classify a document into ACM classification tree categories with the help of the Wikipedia corpus.

Project Presentation: https://youtu.be/706HJteh1xc

Project Webpage: http://rohitsakala.github.io/semanticAnnotationAcmCategories/

Source Code: https://github.com/rohitsakala/semanticAnnotationAcmCategories

References:

Quoc V. Le and Tomas Mikolov, "Distributed Representations of Sentences and Documents", ICML, 2014

Networks and Natural Language Processing

This presentation is a briefing of a paper about Networks and Natural Language Processing. It describes many graph based methods and algorithms that help in syntactic parsing, lexical semantics and other applications.

A Joint Many-Task Model: Growing a Neural Network for Multiple NLP Tasks

More from Jinho Choi

Adaptation of Multilingual Transformer Encoder for Robust Enhanced Universal ...

IWPT 2020

Han He and Jinho D. Choi

https://www.aclweb.org/anthology/2020.iwpt-1.19/

Analysis of Hierarchical Multi-Content Text Classification Model on B-SHARP D...

AACL 2020

Renxuan Albert Li, Ihab Hajjar, Felicia Goldstein, Jinho D. Choi

https://www.aclweb.org/anthology/2020.aacl-main.38/

Competence-Level Prediction and Resume & Job Description Matching Using Conte...

EMNLP 2020

Changmao Li, Elaine Fisher, Rebecca Thomas, Steve Pittard, Vicki Hertzberg, Jinho D. Choi

https://www.aclweb.org/anthology/2020.emnlp-main.679/

Transformers to Learn Hierarchical Contexts in Multiparty Dialogue for Span-b...

ACL 2020

Changmao Li and Jinho D. Choi

https://www.aclweb.org/anthology/2020.acl-main.505/

The Myth of Higher-Order Inference in Coreference Resolution

EMNLP 2020

Liyan Xu, Jinho D. Choi

https://www.aclweb.org/anthology/2020.emnlp-main.686/

Noise Pollution in Hospital Readmission Prediction: Long Document Classificat...

BioNLP 2020

Liyan Xu, Julien Hogan, Rachel E. Patzer, Jinho D. Choi

https://www.aclweb.org/anthology/2020.bionlp-1.10/

CS329 - WordNet Similarities

CS|QTM|LING329: Computational Linguistics

https://github.com/emory-courses/cs329

CS329 - Lexical Relations

CS|QTM|LING329: Computational Linguistics

https://github.com/emory-courses/cs329

Automatic Knowledge Base Expansion for Dialogue Management

Emory NLP Weekly

- Date: 11/4/2020

- Presenter: James Finch (PhD student)

- https://www.linkedin.com/events/6711788100151975936/

Attention is All You Need for AMR Parsing

Emory NLP Weekly

- Date: 10/28/2020

- Presenter: Han He (PhD student)

- https://www.linkedin.com/events/6711787130047217664/

Graph-to-Text Generation and its Applications to Dialogue

Emory NLP Weekly

- Date: 10/15/2020

- Presenter: Sarah Finch (PhD student)

https://www.linkedin.com/events/6711785730856767488/

Real-time Coreference Resolution for Dialogue Understanding

Emory NLP Weekly

- Date: 10/21/2020

- Presenter: Liyan Xu (PhD student)

https://www.linkedin.com/events/6711786162941374464/

Multi-modal Embedding Learning for Early Detection of Alzheimer's Disease

Emory NLP Weekly

- Date: 9/23/2020

- Presenter: Ran Xu (BS student)

https://www.linkedin.com/events/6711762540579446784/

Building Widely-Interpretable Semantic Networks for Dialogue Contexts

Emory NLP Weekly

- Date: 9/16/2020

- Presenter: Lydia Feng (BS student)

https://www.linkedin.com/events/6711760000370515968/

How to make Emora talk about Sports Intelligently

Emory NLP Weekly

- Date: 9/2/2020

- Presenter: Sonny Xu (BS/MS student)

https://www.linkedin.com/events/6711696381951770624/


From Siloed Products to Connected Ecosystem: Building a Sustainable and Scala...

From Siloed Products to Connected Ecosystem: Building a Sustainable and Scalable Platform by VP of Product, The New York Times

Connector Corner: Automate dynamic content and events by pushing a button

Here is something new! In our next Connector Corner webinar, we will demonstrate how you can use a single workflow to:

Create a campaign using Mailchimp with merge tags/fields

Send an interactive Slack channel message (using buttons)

Have the message received by managers and peers along with a test email for review

But there’s more:

In a second workflow supporting the same use case, you’ll see:

Your campaign sent to target colleagues for approval

If the “Approve” button is clicked, a Jira/Zendesk ticket is created for the marketing design team

But—if the “Reject” button is pushed, colleagues will be alerted via Slack message

Join us to learn more about this new, human-in-the-loop capability, brought to you by Integration Service connectors.

And...

Speakers:

Akshay Agnihotri, Product Manager

Charlie Greenberg, Host

GenAISummit 2024 May 28 Sri Ambati Keynote: AGI Belongs to The Community in O...

“AGI should be open source and in the public domain at the service of humanity and the planet.”

JMeter webinar - integration with InfluxDB and Grafana

Watch this recorded webinar about real-time monitoring of application performance. See how to integrate Apache JMeter, the open-source leader in performance testing, with InfluxDB, the open-source time-series database, and Grafana, the open-source analytics and visualization application.

In this webinar, we will review the benefits of leveraging InfluxDB and Grafana when executing load tests and demonstrate how these tools are used to visualize performance metrics.

Length: 30 minutes

Session Overview

-------------------------------------------

During this webinar, we will cover the following topics while demonstrating the integrations of JMeter, InfluxDB and Grafana:

- What out-of-the-box solutions are available for real-time monitoring JMeter tests?

- What are the benefits of integrating InfluxDB and Grafana into the load testing stack?

- Which features are provided by Grafana?

- Demonstration of InfluxDB and Grafana using a practice web application

To view the webinar recording, go to:

https://www.rttsweb.com/jmeter-integration-webinar

When stars align: studies in data quality, knowledge graphs, and machine lear...

Keynote at DQMLKG workshop at the 21st European Semantic Web Conference 2024

Designing Great Products: The Power of Design and Leadership by Chief Designe...

Designing Great Products: The Power of Design and Leadership by Chief Designer, Beats by Dr Dre

Leading Change strategies and insights for effective change management pdf 1.pdf

Leading Change strategies and insights for effective change management pdf 1.pdf

Key Trends Shaping the Future of Infrastructure.pdf

Keynote at DIGIT West Expo, Glasgow on 29 May 2024.

Cheryl Hung, ochery.com

Sr Director, Infrastructure Ecosystem, Arm.

The key trends across hardware, cloud and open-source; exploring how these areas are likely to mature and develop over the short and long-term, and then considering how organisations can position themselves to adapt and thrive.

Recently uploaded (20)

FIDO Alliance Osaka Seminar: Passkeys and the Road Ahead.pdf

FIDO Alliance Osaka Seminar: Passkeys and the Road Ahead.pdf

Mission to Decommission: Importance of Decommissioning Products to Increase E...

Mission to Decommission: Importance of Decommissioning Products to Increase E...

Dev Dives: Train smarter, not harder – active learning and UiPath LLMs for do...

Dev Dives: Train smarter, not harder – active learning and UiPath LLMs for do...

To Graph or Not to Graph Knowledge Graph Architectures and LLMs

To Graph or Not to Graph Knowledge Graph Architectures and LLMs

FIDO Alliance Osaka Seminar: The WebAuthn API and Discoverable Credentials.pdf

FIDO Alliance Osaka Seminar: The WebAuthn API and Discoverable Credentials.pdf

De-mystifying Zero to One: Design Informed Techniques for Greenfield Innovati...

De-mystifying Zero to One: Design Informed Techniques for Greenfield Innovati...

Knowledge engineering: from people to machines and back

Knowledge engineering: from people to machines and back

Epistemic Interaction - tuning interfaces to provide information for AI support

Epistemic Interaction - tuning interfaces to provide information for AI support

Accelerate your Kubernetes clusters with Varnish Caching

Accelerate your Kubernetes clusters with Varnish Caching

From Siloed Products to Connected Ecosystem: Building a Sustainable and Scala...

From Siloed Products to Connected Ecosystem: Building a Sustainable and Scala...

Connector Corner: Automate dynamic content and events by pushing a button

Connector Corner: Automate dynamic content and events by pushing a button

GenAISummit 2024 May 28 Sri Ambati Keynote: AGI Belongs to The Community in O...

GenAISummit 2024 May 28 Sri Ambati Keynote: AGI Belongs to The Community in O...

JMeter webinar - integration with InfluxDB and Grafana

JMeter webinar - integration with InfluxDB and Grafana

When stars align: studies in data quality, knowledge graphs, and machine lear...

When stars align: studies in data quality, knowledge graphs, and machine lear...

Designing Great Products: The Power of Design and Leadership by Chief Designe...

Designing Great Products: The Power of Design and Leadership by Chief Designe...

Leading Change strategies and insights for effective change management pdf 1.pdf

Leading Change strategies and insights for effective change management pdf 1.pdf

Key Trends Shaping the Future of Infrastructure.pdf

Key Trends Shaping the Future of Infrastructure.pdf

Incremental Sense Weight Training for In-depth Interpretation of Contextualized Word Embeddings

Xinyi Jiang, Zhengzhe Yang, Jinho D. Choi
Computer Science @ Emory University

Introduction

● Contextualized word embeddings
❖ Considerable performance boosts from simply substituting them for distributional word embeddings.
❖ Different representations are generated for the same word type with different topical senses.
❖ Contextualized word embedding dimensions have grown to over 1,000 without a real understanding of what the dimensions mean.
● Word Embedding Interpretability

Experiments

● Datasets and Word Embeddings
❖ Trained on SemCor and evaluated on Senseval/SemEval (2001, 2004, 2007, 2013, 2015).
❖ ELMo: 256, 512 and 1,024 (all with 2-layer bi-LSTM).
❖ BERT: 768 (12 output layers) and 1,024 (24 layers).
❖ Flair: 2,048 and 4,096.
● KNN Methods
❖ Sense-based KNN.
❖ Word-based KNN.
● Baseline results. WF: word-based KNN with fallback using WordNet. W: word-based. SF: sense-based with fallback. S: sense-based.
● Results for the original embeddings and for embeddings with 5% of dimensions masked. For evaluation, each target word tries all the masks of the senses it appears with and selects the masking that produces the smallest distance d, where d is the sum of the distances from the masked word to its k nearest neighbors and k = min(# of occurrences, 5). Table 2 shows that word-based KNN with fallback works better in general.
● Results with individual embedding output layers with 5% of dimensions masked. Half of the embeddings improve when masked using the 5% threshold, especially the last 10 layer outputs. Surprisingly, the last layer output score is boosted by 3%.
❖ more considerable performance drop, worse original scores
❖ embeddings from output layers closer to the input layer contain fewer insignificant dimensions.

Sense Weight Training (SWT)

● Sense Weight Training: given a large embedding dimension size, the hypothesis is that not every embedding dimension plays a role in representing a sense.
❖ The objective function: maximize the average pairwise cosine similarity in all sense groups.
❖ The weight matrix: a vector of the same size as the word embedding is initialized for each sense. Each entry represents the importance of a specific dimension to that sense.
❖ Exploration-exploitation: in the first phase, N is randomly generated. After a certain number of epochs, the exploration-exploitation policy is applied where, for exploitation, the higher the dimension weight, the less likely that dimension number is to be generated.
❖ Furthermore, L1 regularization is applied for feature selection, and AdaGrad is used to encourage convergence.
Figure 1a and Figure 1b display a smaller within-group distance and a greater separation of sense groups when the embedding dimensions with weights lower than 0.5 are masked to 0.

Experiments

● Analysis
❖ ρ is Spearman's rank-order correlation coefficient between the pairwise cosine similarity of sense vectors and the pairwise path similarity scores between senses provided by WordNet.
❖ The average cosine similarities within sense groups all increase after dimensions are masked out, for all models.
❖ The dimension weights are learned by our objective function.
❖ The correlation test shows no significant performance decrease (some scores even increase).
❖ The masked dimensions do not contribute to the sense group relations.
❖ ELMo and Flair: a better correlation score.
❖ The number of embedding dimensions that can be discarded increases with the distance of the output layer from the input layer. This result corresponds to ELMo's claim that the embeddings with output layers closer to the input layer are semantically richer.
❖ Another pattern is that verb sense groups tend to have fewer dimensions masked out, because verb sense groups have more possible forms of tokens belonging to the same sense group.
❖ We also attempted to mask out embedding dimensions with higher weights. The top 100 most similar masked embeddings fail to show any patterns or points.

Conclusion

● We propose an algorithm for learning the importance of dimension weights in sense groups. After training the weights for word dimensions, the dimensions with less importance are masked out and tested using a word sense disambiguation task and two other evaluations.
● Limitations:
❖ For the evaluation, the path similarity provided by WordNet may not be the best fit to human judgements.
❖ Limited by dataset size.
● Future Work:
❖ Extend the algorithm to sense vector extraction.
❖ The algorithm can theoretically be applied to any other grouped embeddings.

Table 1: Baseline results.
Table 2: Results for the original and masked embeddings.
Figure 1: Graphs of 20 selected sense groups with 100 embeddings each for ELMo with a dimension size of 512 (third output layer). The projection of dimensions from 512 to 2 is done by Linear Discriminant Analysis. (a) original; (b) masked (threshold of 0.5).
Figure 2: BERT-Large embeddings with 24 hidden layers.
Figure 3: ELMo embeddings with 3 models and 3 layers each. 1: 1024. 2: 512. 3: 256.
Figure 4: BERT-Base embeddings with 12 layers.
Figure 5: Flair results.
Table 3: Correlation coefficient test results for original and masked word embeddings with N dimensions masked.
Table 4: The embedding models with specific word embedding sense groups (Sense) and numbers masked out (Nmasked): "ask.v.01" is a verb sense, meaning "inquire about"; "three.s.01" is an adjective sense, meaning "being one more than two"; "man.n.01" is a noun sense, meaning "an adult person who is male (as opposed to a woman)".
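
The masking step and the objective quantity described under Sense Weight Training can be illustrated with a small sketch. It only shows how zeroing dimensions whose learned weight is below 0.5 changes the average pairwise cosine similarity of one sense group; it does not train the weights. The function names, the three-dimensional toy vectors, and the weight values are all made up for illustration and are not taken from the poster.

```python
import math
from itertools import combinations

def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)

def apply_mask(vec, weights, threshold=0.5):
    """Set to 0 every dimension whose sense weight is below the threshold."""
    return [x if w >= threshold else 0.0 for x, w in zip(vec, weights)]

def avg_pairwise_cosine(group):
    """Average cosine similarity over all unordered pairs in one sense group."""
    pairs = list(combinations(group, 2))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)

# Toy sense group (hypothetical): the third dimension is noisy for this sense.
group = [
    [1.0, 0.9, 2.0],
    [0.9, 1.0, -2.0],
    [1.1, 1.0, 0.5],
]
# Hypothetical learned weights for this sense: one weight per embedding dimension.
weights = [0.9, 0.8, 0.1]

masked_group = [apply_mask(v, weights) for v in group]
before = avg_pairwise_cosine(group)
after = avg_pairwise_cosine(masked_group)
print(f"average pairwise cosine: before={before:.3f}, after={after:.3f}")
```

In this toy case the within-group similarity rises once the low-weight dimension is removed, which is the effect the poster reports for Figure 1.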
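
The masked KNN evaluation described under Experiments (try the mask of every candidate sense, keep the one with the smallest summed distance d to the k nearest neighbors) might look roughly like the sketch below. Several details are assumptions, since the poster does not specify them: Euclidean distance is used, the neighbors are masked with the same sense mask as the target, k is capped by the number of training examples for that sense, and the sense labels and vectors are toy values.

```python
import math

def mask(vec, weights, threshold=0.5):
    """Zero out dimensions whose sense weight is below the threshold."""
    return [x if w >= threshold else 0.0 for x, w in zip(vec, weights)]

def euclidean(u, v):
    """Euclidean distance (an assumed metric; the poster only says 'distance')."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def knn_distance_sum(target, neighbors, k_max=5):
    """d = sum of distances to the k nearest neighbors, k = min(# of occurrences, 5)."""
    k = min(len(neighbors), k_max)
    dists = sorted(euclidean(target, n) for n in neighbors)
    return sum(dists[:k])

def pick_sense(target, train_by_sense, weights_by_sense):
    """Try each candidate sense's mask and return the sense with the smallest d."""
    best_sense, best_d = None, math.inf
    for sense, examples in train_by_sense.items():
        w = weights_by_sense[sense]
        masked_target = mask(target, w)
        masked_examples = [mask(e, w) for e in examples]
        d = knn_distance_sum(masked_target, masked_examples)
        if d < best_d:
            best_sense, best_d = sense, d
    return best_sense, best_d

# Hypothetical training embeddings and sense weights for one ambiguous word.
train_by_sense = {
    "sense_a": [[1.0, 0.0, 5.0], [1.1, 0.1, -5.0]],
    "sense_b": [[3.0, 1.0, 1.0], [-3.0, 1.1, 0.9]],
}
weights_by_sense = {
    "sense_a": [0.9, 0.9, 0.1],
    "sense_b": [0.1, 0.9, 0.9],
}

sense, d = pick_sense([1.0, 0.05, 0.0], train_by_sense, weights_by_sense)
print(sense, round(d, 3))
```

One design caveat of this simplified version: a sense with fewer examples or more masked dimensions tends to produce a smaller d, so a real evaluation would need to account for that.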