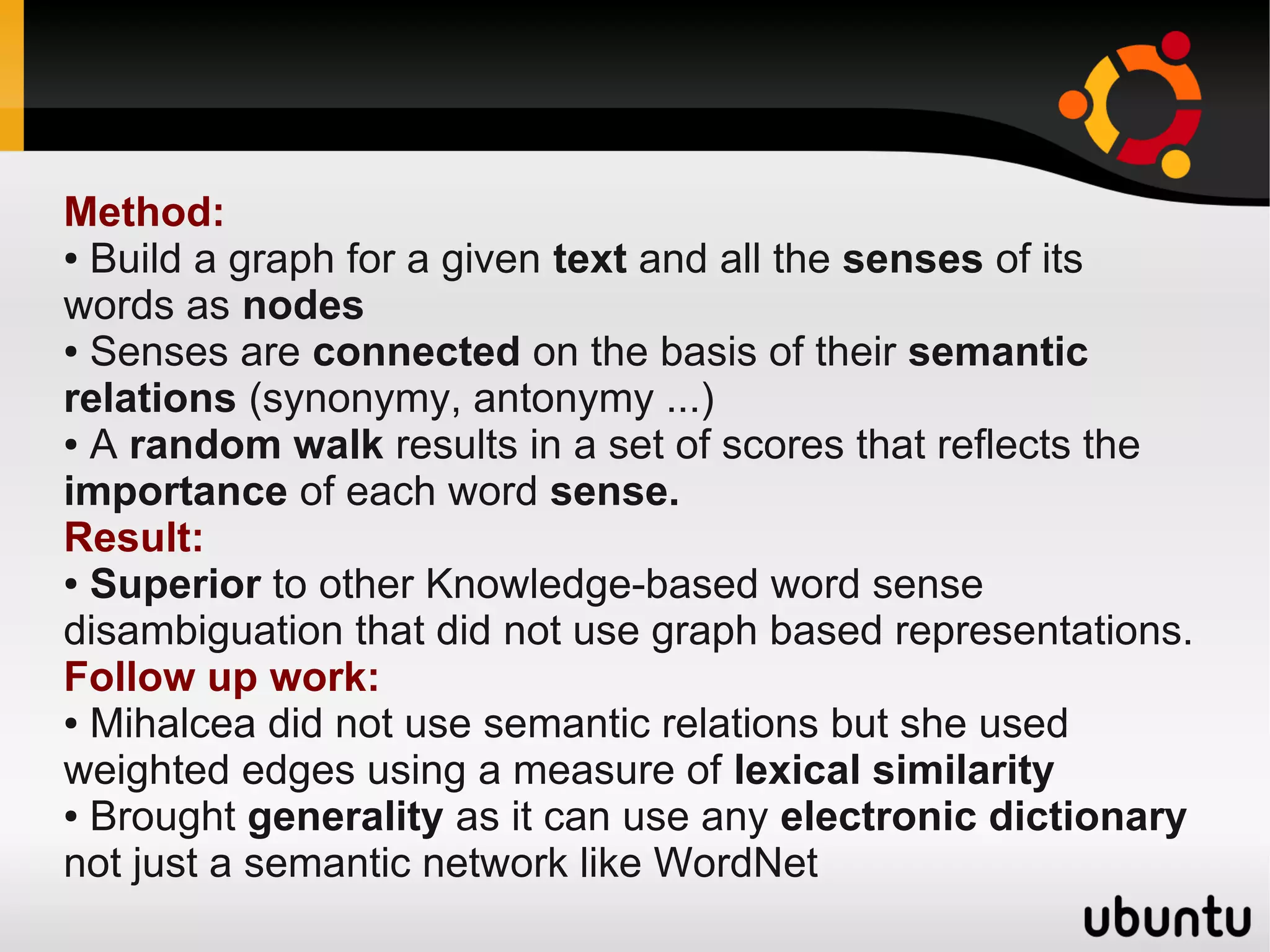

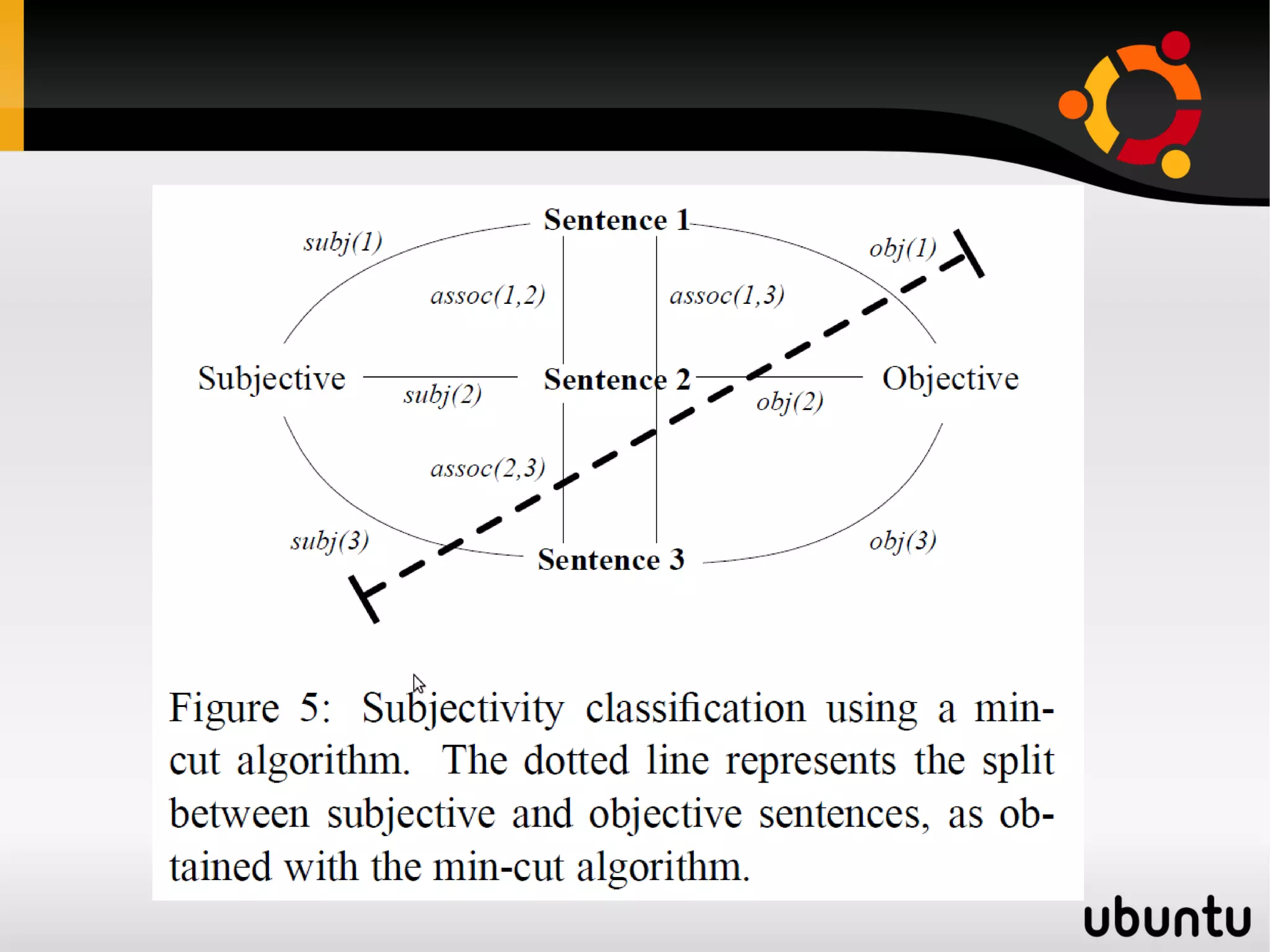

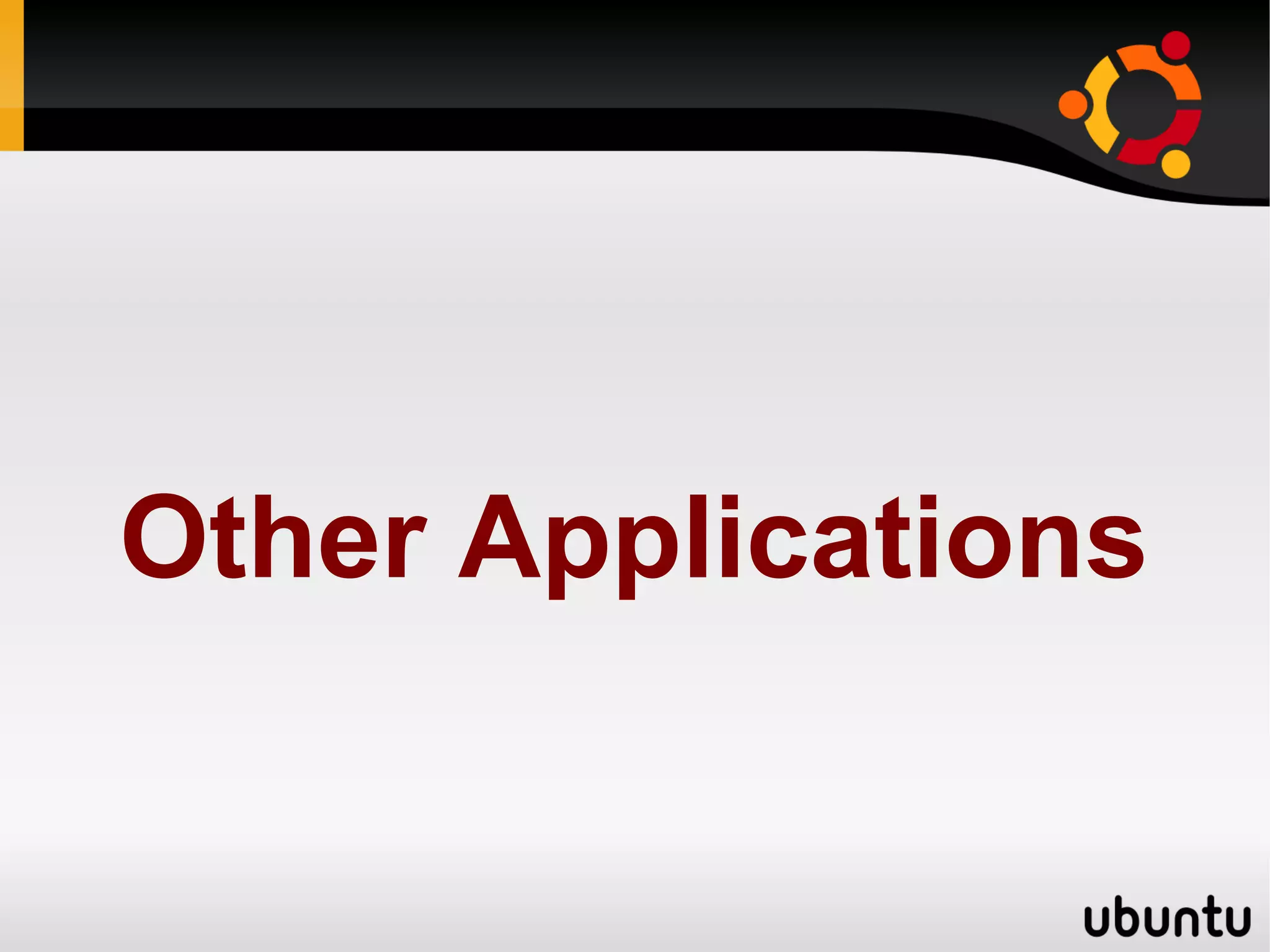

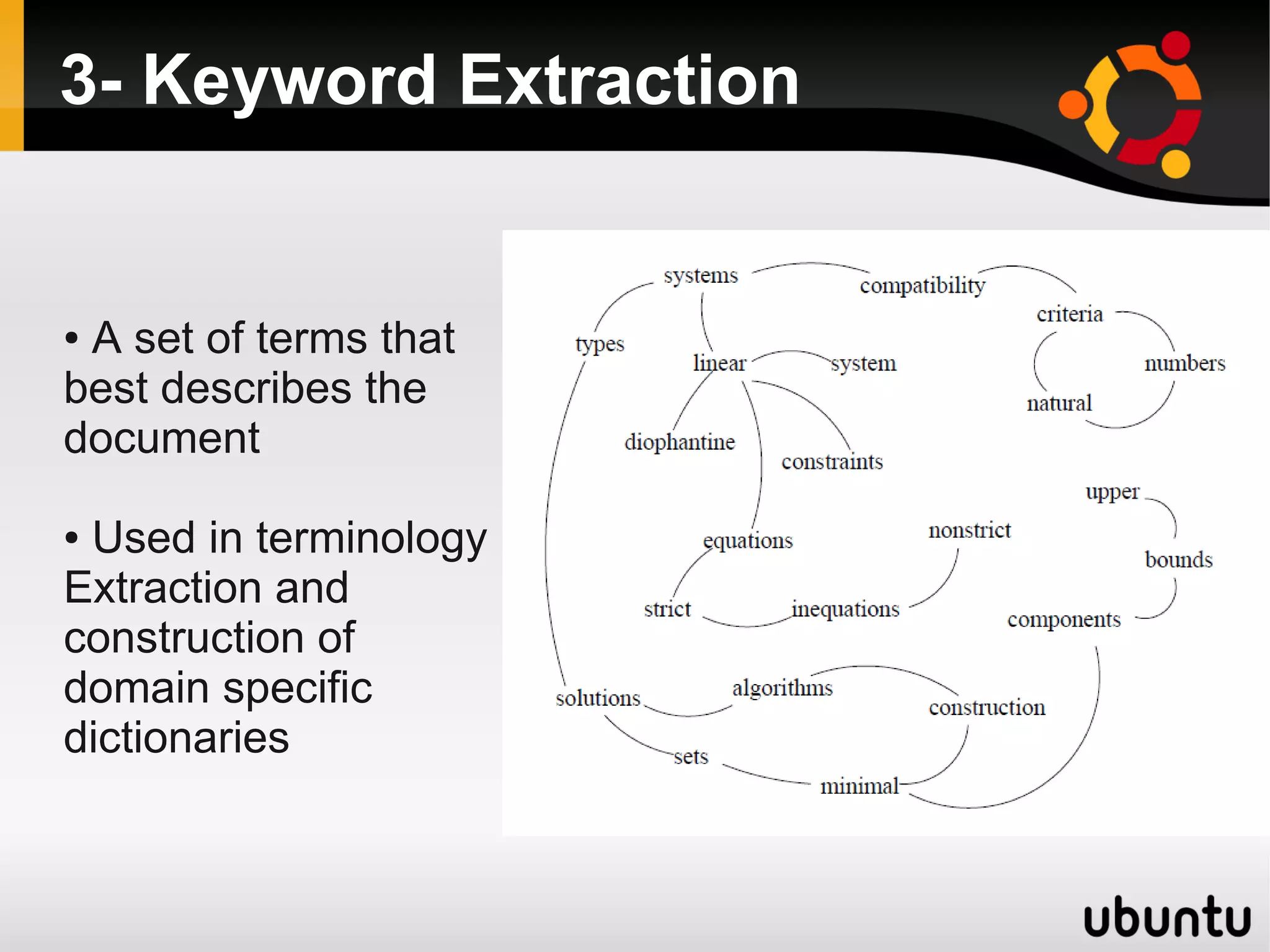

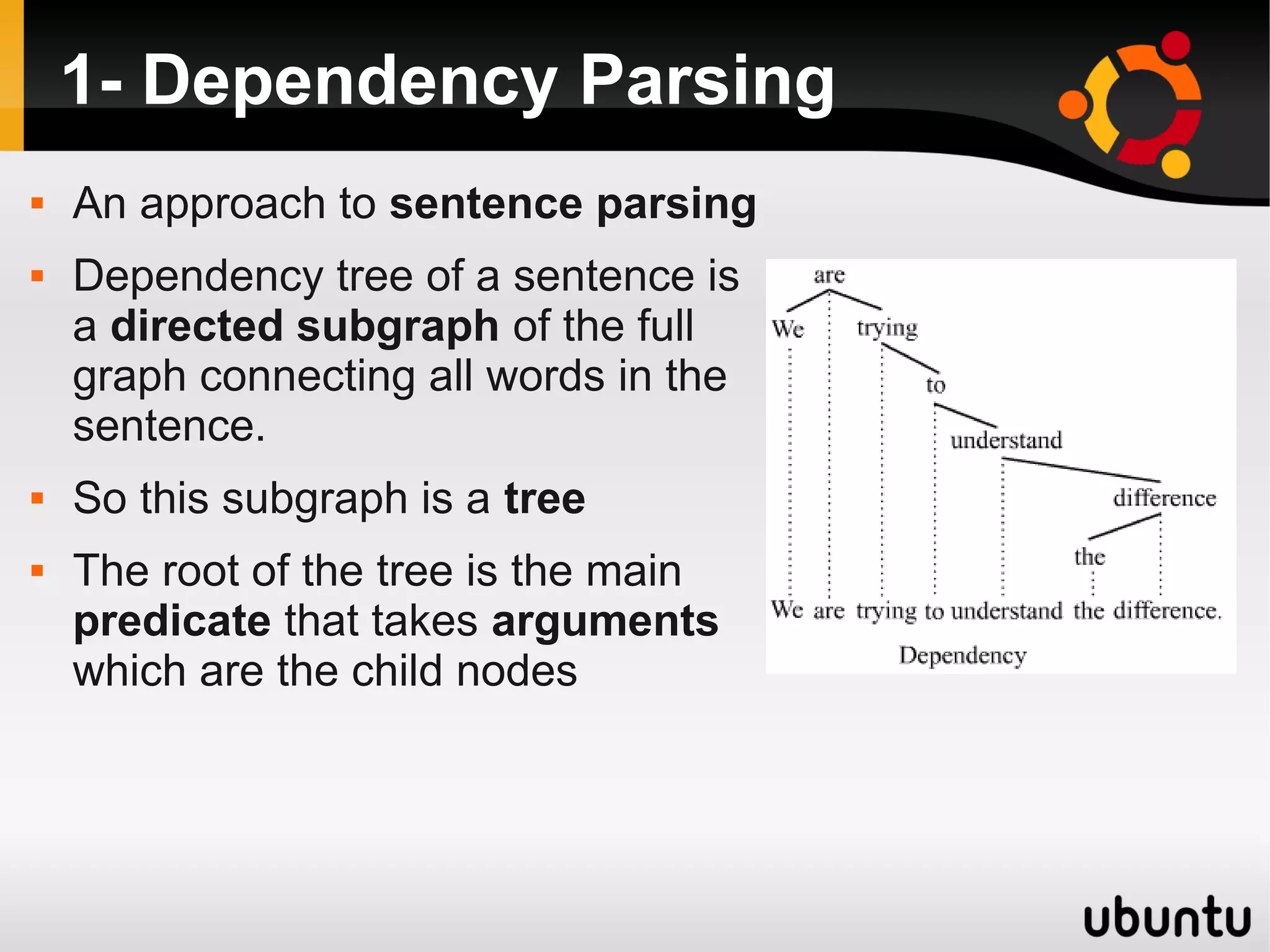

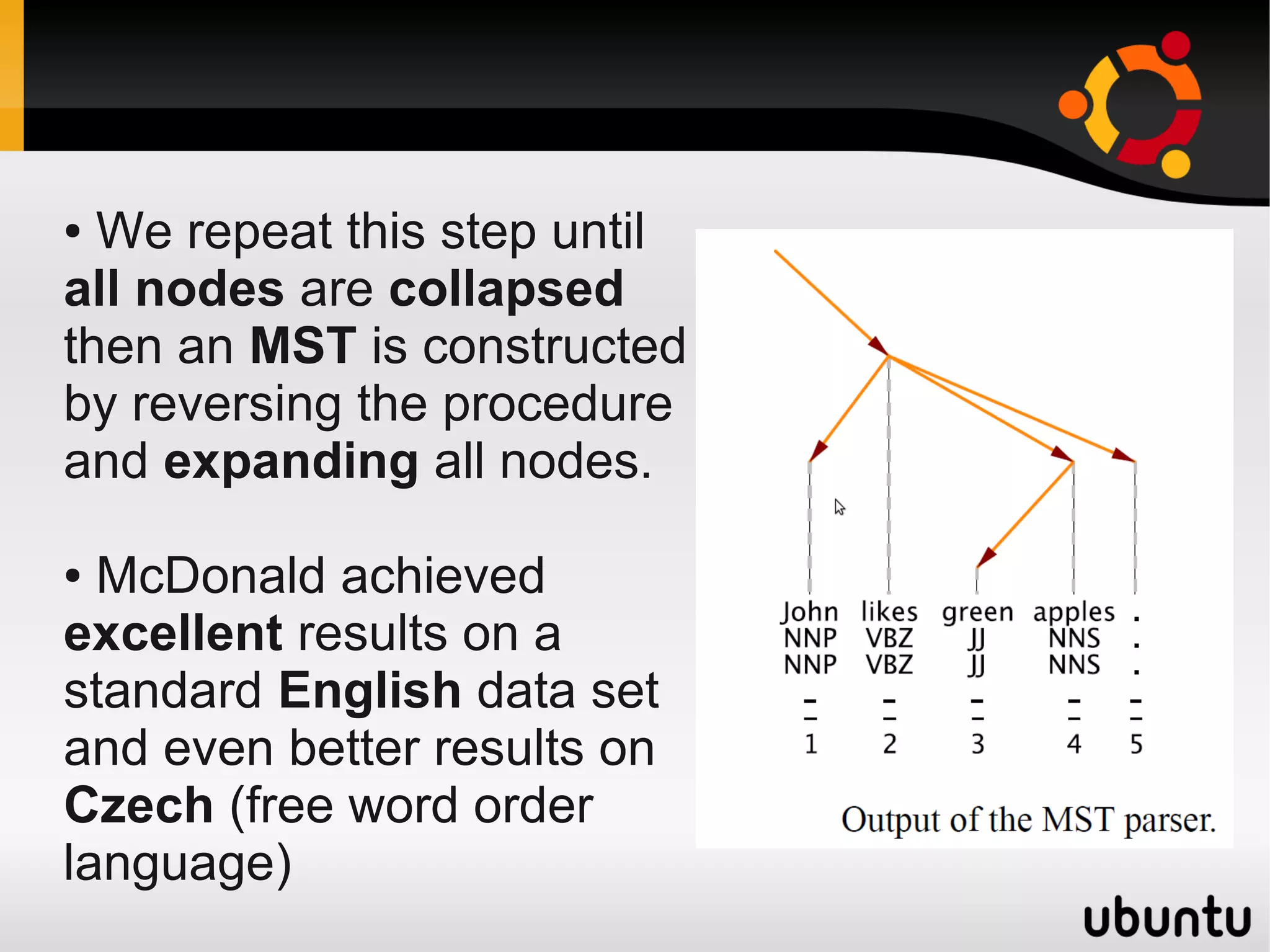

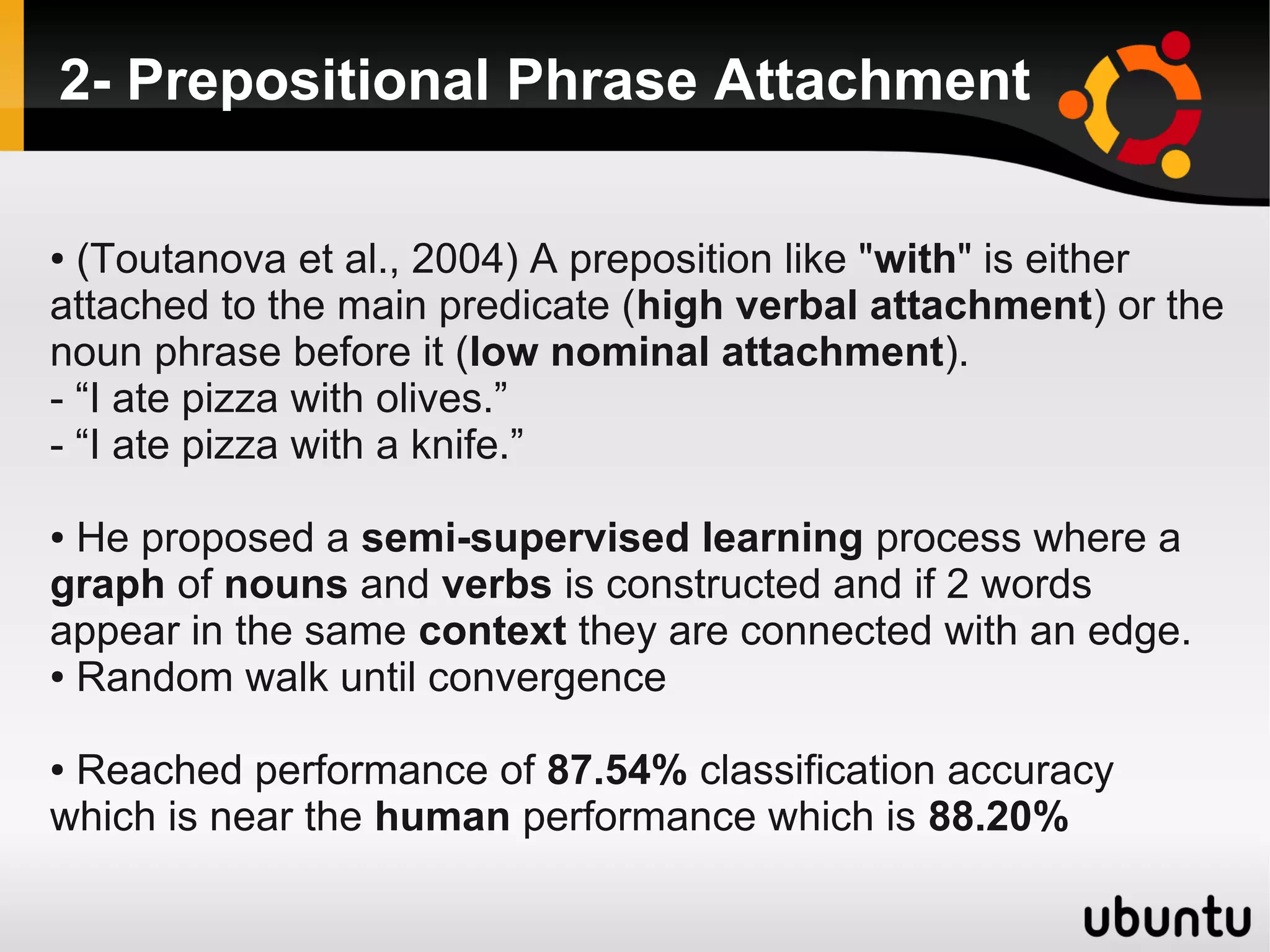

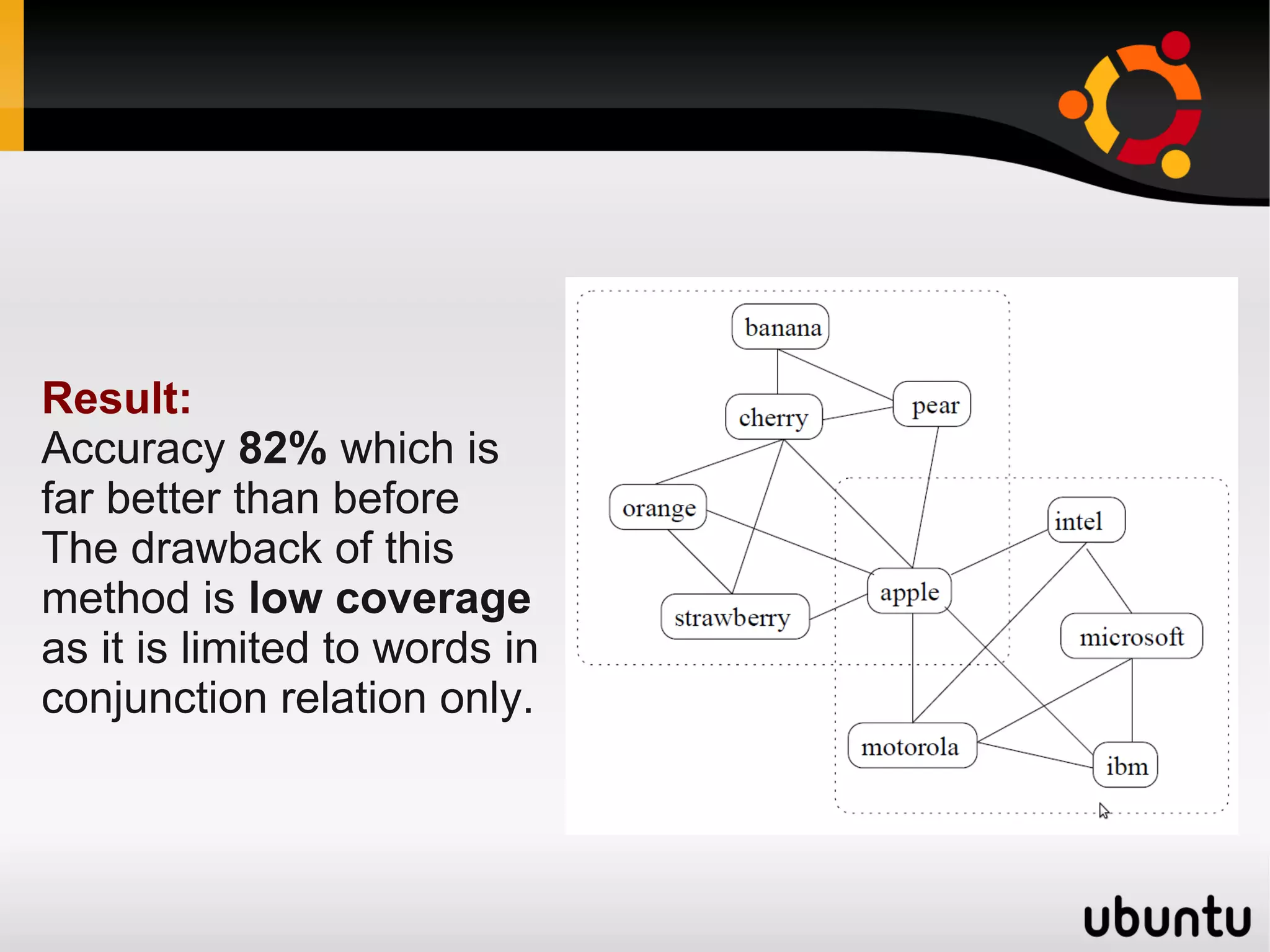

The document discusses the application of graphs in various Natural Language Processing (NLP) tasks such as text summarization, syntactic parsing, and word sense disambiguation. It highlights methods involving dependency parsing, lexical semantics, sentiment analysis, and other NLP applications utilizing graph structures. The document also references notable research and algorithms that enhance performance in these areas, demonstrating the effectiveness of graph-based approaches in understanding and processing language.

![Slide: Lexical Network Properties (Ferrer-i-Cancho and Solé, 2001)](https://image.slidesharecdn.com/networksandnlp-120111140940-phpapp01/75/Networks-and-Natural-Language-Processing-15-2048.jpg)

1- Lexical Networks [continued]

b- Lexical Network Properties (Ferrer-i-Cancho and Solé, 2001)

Goal:

● Observe the properties of lexical networks

Method:

● Build a co-occurrence network in which words are nodes, linked by an edge if they appear in the same sentence at a distance of at most 2 words.

● Half a million nodes with over 10 million edges

Result:

● Small-world effect: 2-3 jumps can connect any 2 words

● The distribution of node degree is scale-free
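The method on this slide can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`build_cooccurrence_network`, `degree_distribution`, `average_path_length`) are hypothetical, tokenization is a naive whitespace split, and "distance of 2 words at most" is read as word positions differing by 1 or 2 within the same sentence.

```python
from collections import defaultdict, deque


def build_cooccurrence_network(sentences, max_distance=2):
    """Link two words if they occur in the same sentence at most
    max_distance positions apart (assumed reading of the slide)."""
    graph = defaultdict(set)
    for sentence in sentences:
        words = sentence.lower().split()  # naive tokenization (assumption)
        for i, word in enumerate(words):
            graph[word]  # register the node even if it stays isolated
            for j in range(i + 1, min(i + max_distance + 1, len(words))):
                if words[j] != word:  # skip self-loops
                    graph[word].add(words[j])
                    graph[words[j]].add(word)
    return dict(graph)


def degree_distribution(graph):
    """Map each degree value to the number of nodes that have it."""
    counts = defaultdict(int)
    for neighbors in graph.values():
        counts[len(neighbors)] += 1
    return dict(counts)


def average_path_length(graph):
    """Mean shortest-path length over connected ordered pairs,
    computed with a breadth-first search from every node."""
    total, pairs = 0, 0
    for source in graph:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for neighbor in graph[node]:
                if neighbor not in dist:
                    dist[neighbor] = dist[node] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs if pairs else 0.0
```

On a large corpus, a small average path length would correspond to the small-world effect reported on the slide, and plotting the degree distribution on log-log axes is one common way to check for scale-free behavior. The all-pairs breadth-first search here is only practical for small graphs; a network with half a million nodes would need sampling or a dedicated graph library.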