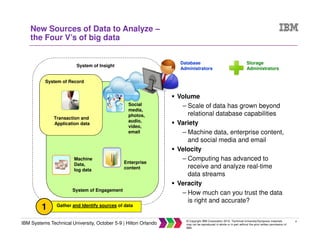

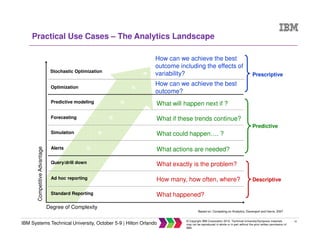

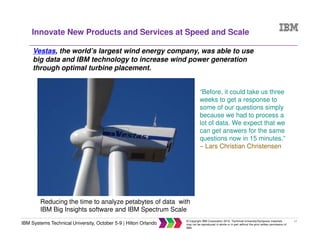

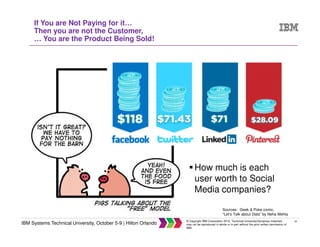

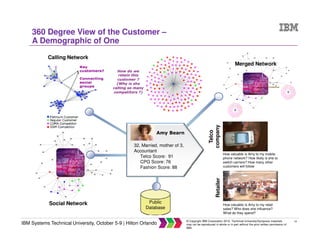

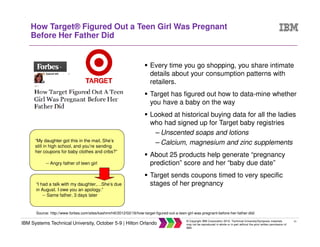

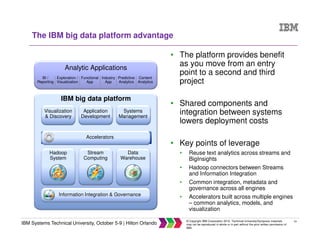

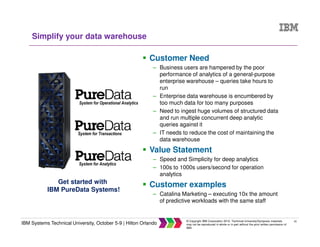

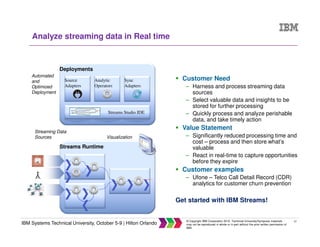

The document discusses big data concepts: what big data is, how the amount and types of data have changed over time, and the four V's of big data (volume, variety, velocity, and veracity). It gives practical big data use cases from companies such as Vestas and Target. It also outlines IBM's big data analytics platform and how it can help with tasks such as simplifying the data warehouse, analyzing streaming data in real time, and exploiting instrumented assets.