Overview of Bibliometrics - IAP Course version 1.1

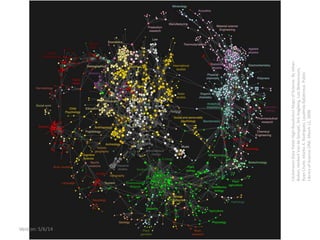

- 1. Overview of Citation Analysis Clickstream Data Yields High-Resolution Maps of Science. By Johan Bollen, Herbert Van de Sompel, Aric Hagberg, Luis Bettencourt, Ryan Chute, Marko A. Rodriguez, Lyudmila Balakireva. Public Library of Science ONE, March 11, 2009. Version: 4/30/15

- 2. Overview of Citation Analysis Micah Altman Director of Research MIT Libraries Prepared for IAPril MIT April 2014

- 3. DISCLAIMER These opinions are my own; they are not the opinions of MIT, Brookings, any of the project funders, nor (with the exception of co-authored previously published work) my collaborators. Secondary disclaimer: “It’s tough to make predictions, especially about the future!” -- Attributed to Woody Allen, Yogi Berra, Niels Bohr, Vint Cerf, Winston Churchill, Confucius, Disreali [sic], Freeman Dyson, Cecil B. Demille, Albert Einstein, Enrico Fermi, Edgar R. Fiedler, Bob Fourer, Sam Goldwyn, Allan Lamport, Groucho Marx, Dan Quayle, George Bernard Shaw, Casey Stengel, Will Rogers, M. Taub, Mark Twain, Kerr L. White, etc.

- 4. Collaborators & Co-Conspirators • Thanks to… – Sean Thomas, Program Manager for Scholarly Repository Services and Product Manager of DSpace@MIT – Michael Noga – Peter Cohn – Courtney Crummett

- 5. Related Work • K. Smith-Yoshimura, et al., 2014, Registering Researchers in Authority Files, OCLC Research. • Liz Allen, Jo Scott, Amy Brand, Marjorie M.K. Hlava, Micah Altman 2014, Beyond authorship: recognising the contributions to research; Nature. • Data Synthesis Task Group. 2014. Joint Principles for Data Citation. • CODATA Data Citation Task Group, 2013. Out of Cite, Out of Mind: The Current State of Practice, Policy and Technology for Data Citation. Data Science Journal. 2013;12:1–75. Slides and reprints available from: informatics.mit.edu

- 6. The MIT libraries provide support for all researchers at MIT: • Research consulting, including: bibliographic information management; literature searches; subject-specific consultation • Data management, including: data management plan consulting; data archiving; metadata creation • Data acquisition and analysis, including: database licensing; statistical software training; GIS consulting, analysis & data collection • Scholarly publishing: open access publication & licensing libraries.mit.edu

- 7. Roadmap • Background • Metrics • Data • Tools • Data Processing • Hacking • Resources

- 8. Background (Why?) (What?) (Which?)

- 9. What are bibliometrics? (simple definition) Bibliometrics are measures of scholarly outputs.

- 10. Scholarly output affects reputation, ranking, and funding of the discipline, institution, and individual scholar • “We initially use bibliometric analysis to look at the top institutions, by publications and citation count for the past ten years…” • “Universities are ranked by several indicators of academic or research performance, including… highly cited researchers…” • “Citations… are the best understood and most widely accepted measure of research strength.”

- 11. Then Source: Clarke, Beverly L. "Multiple authorship trends in scientific papers." Science 143.3608 (1964): 822-824.

- 12. Now

- 13. Now is More

- 14. What are bibliometrics? (Extended Definition) • Analysis of characteristics of/relationships among research/scholarly outputs/publications – Analysis includes: lists, descriptive statistics, visualization, inference – Outputs include: grants, articles, books, databases, software, patents

- 15. Which questions are bibliometrics being used to answer? Some examples: • What are the most influential journals in a particular field? • How influential is this scholar? • Where is interdisciplinary research occurring? • Which groups of people effectively collaborate? • Which institutions are using funding most productively?

- 16. Data (Leading Databases) (Subject-Specific) (MIT Internal) (Selection)

- 17. Google Scholar Data Sources • Unspecified coverage, but… • Wide coverage of books, preprints, conference proceedings, non-English work, working papers, patents, institutional repositories Built-in Metrics • Journal H-Index • Author Profiles – Total & Five-Year Counts – I-10 index and H-index – Yearly citations • Limited filtering scholar.google.com

- 18. Scopus Data • Frequently updated/current • Covers journal articles published after 1995 • Wide disciplinary coverage • Includes theses and patents, and citations from these • Includes some institutional repositories • Commercial Metrics • Citation lists & counts • Author impact & articles – Statistics – Metrics – Graphs • Journal impact – Statistics – Metrics – Graphs scopus.com

- 19. Web of Science Data • Journal coverage after 1899 • Many conference proceedings since 1990 • Many books since 2005 • Limited coverage of non-English works • Doesn’t index institutional repositories and e-print servers • Commercial Metrics • Citation lists & counts • Author impact & articles – Statistics – Metrics – Graphs • Journal impact – Statistics – Metrics – Graphs apps.webofknowledge.com

- 20. Major Subject Specific Catalogs With Citation Metrics • SciFinder: chemical abstracts scifinder.cas.org • PsycInfo: psychological literature www.apa.org/pubs/databases/psycinfo/ • Business Source Complete: business articles www.ebscohost.com/academic/business-source-complete • arXiv: physics, mathematics, nonlinear sciences, computer science, quantitative biology, quantitative finance, statistics (integrates w/NASA-ADS and INSPIRE) arxiv.org • MathSciNet: Mathematical Reviews. Computes collaboration distances. www.ams.org/mathscinet/ • IEEE Digital Library: content published by the IEEE, including citing references • USPTO: find patents that are cited by/cite others uspto.gov/patft/ • ACM Digital Library: full text and citations of ACM articles and proceedings dl.acm.org VERA: owens.mit.edu/sfx_local/az/mit_db

- 21. APIs for Scholarly Resources What are APIs? • Application programming interfaces (APIs) are tools used to expose raw data, query interfaces, or other functions to other software applications • Typically more flexible than interactive interfaces Challenges • Requires programming • Requires data manipulation and reorganization • Variety of interfaces, coverage, results and terms of service Source: libguides.mit.edu/apis

      | API | What it does |
      |---|---|
      | arXiv API | Gives programmatic access to all of the arXiv data, search and linking facilities |
      | BioMed Central API | Retrieves: 1) BMC Latest Articles; 2) BMC Editors picks; 3) Data on article subscription and access; 4) Bibliographic search data |
      | DVN (Dataverse Network) API for Data Sharing | Allows programmatic access to data and metadata in the Dataverse Network, which includes the Harvard Dataverse Network, MIT Libraries-purchased data, and data deposited in other Dataverse Network repositories. Two modules exist: Metadata/Search and Data Access. |
      | Digital Public Library of America (DPLA) API | Allows programmatic access to metadata in DPLA collections, including partner data from Harvard, New York Public Library, ARTstor, and others. |
      | IEEE Xplore XML Search API | Allows IEEE customers and 3rd parties such as federated search vendors to query the IEEE Xplore content repository and retrieve results for manipulation and presentation on local web interfaces |
      | JSTOR Data for Research | Not a true API, but allows computational analysis and selection of JSTOR's scholarly journal and primary resource collections. Includes tools for faceted searching and filtering, text analysis, topic modeling, data extraction, and visualization. |
      | Nature Blogs API | Blog tracking and indexing service; tracks Nature blogs and other third-party science blogs |
      | Nature OpenSearch API | Bibliographic search service for Nature content |
      | NLM APIs | NLM offers 21 different APIs for accessing various NLM databases. |
      | ORCID API | Queries and searches the ORCID researcher identifier system and obtains researcher profile data |
      | PLoS Article-Level Metrics API | Retrieves article-level metrics (including usage statistics, citation counts, and social networking activity) for articles published in PLOS journals and articles added to PLOS Hubs: Biodiversity |
      | PLoS Search API | Allows PLoS content to be queried using the 23 terms in the PLoS search, for integration into web, desktop, or mobile applications |
      | PubMed E-Utilities API | Set of 8 server-side programs for searching 38 NCBI Entrez databases of biomedical literature and data |
      | Scopus Integration | Scopus Document Search API displays search results on a website. Scopus Cited-By Count API displays the cited-by count for a publication as an image on a website. |
      | Springer Images API | Provides images and related text for over 300,000 free images available on Springer Images. |
      | Springer Metadata API | Provides metadata for over 5 million online documents (e.g. journal articles, book chapters, protocols). |
      | Springer Open Access API | Provides metadata, full-text content, and images for over 80,000 open access articles from BioMed Central and SpringerOpen journals. |
      | STAT!Ref OpenSearch API | Bibliographic search service for displaying syndicated results on a website. |
      | Web of Science Web Services | Bibliographic search service. Allows automatic, real-time querying of records. Primarily for populating an institutional repository. |
      | World Bank Indicators | Provides access to nine World Bank statistical databases: |
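
As a rough illustration of the "requires programming" point, the sketch below builds paged request URLs for the arXiv API listed on this slide. It only constructs the URLs and makes no network calls. The helper name `arxiv_query_urls`, the page size, and the example search string are illustrative assumptions, not part of the original slides.

```python
from urllib.parse import urlencode

# Query endpoint of the arXiv API.
ARXIV_API = "http://export.arxiv.org/api/query"


def arxiv_query_urls(search_query, total, page_size=100):
    """Yield one request URL per page of results (hypothetical helper).

    No request is sent here; the caller decides how and when to fetch.
    """
    for start in range(0, total, page_size):
        params = {
            "search_query": search_query,
            "start": start,
            # The last page may be shorter than page_size.
            "max_results": min(page_size, total - start),
        }
        yield ARXIV_API + "?" + urlencode(params)


# Example: 250 results requested in pages of 100, 100, and 50.
urls = list(arxiv_query_urls('all:"citation analysis"', total=250))
for url in urls:
    print(url)
```

Each service in the table has its own parameters, limits, and terms of service, so a builder like this would need to be adapted per API.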

- 22. Full (text) Monte APIs Why use Full Text? • Named-entity extraction for relationship analysis: e.g. acknowledged people, funders, grants, institutions, software, datasets • Named-entity extraction for subject analysis: e.g. locations, reagents, genes, chemicals • Topic extraction and clustering Primary Sources for Full-Text • CrossRef TDM API: tdmsupport.crossref.org/researchers/ • arXiv bulk data: arxiv.org/help/bulk_data_s3 • PLOS API: api.plos.org • PubMed API: – www.ncbi.nlm.nih.gov/pmc/tools/oai/ – www.ncbi.nlm.nih.gov/books/NBK25501/ • OpenAIRE: api.openaire.eu

- 23. Using APIs Choosing tools • Recommend Python or R • Many resources such as PubMed, Dataverse, and arXiv are accessible through the OAI-PMH protocol • More in tools section and resources section Example: Harvesting arXiv with pyoai

          from oaipmh.client import Client
          from oaipmh.metadata import MetadataRegistry
          from lxml import etree

          URL = 'http://export.arxiv.org/oai2'

          registry = MetadataRegistry()


          class Reader(object):
              """Return each record's metadata element as pretty-printed XML text."""

              def __call__(self, element):
                  return etree.tostring(element, pretty_print=True, encoding='unicode')


          # Use the reader above for Dublin Core (oai_dc) records.
          registry.registerReader('oai_dc', Reader())
          client = Client(URL, registry)

          # Each record is a (header, metadata, about) tuple.
          for count, record in enumerate(client.listRecords(metadataPrefix='oai_dc')):
              header = record[0]
              metadata = record[1] or ''  # deleted records carry no metadata
              print(header.identifier())
              print(metadata)
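
Once records are harvested over OAI-PMH, the Dublin Core XML still has to be turned into fields for analysis. The sketch below parses a small response using only the standard library. The `SAMPLE` document is made up for illustration (it is not real arXiv output), and `parse_records` is a hypothetical helper name; the namespace URIs are the standard OAI-PMH and Dublin Core ones.

```python
import xml.etree.ElementTree as ET

# Standard namespaces used in OAI-PMH responses carrying oai_dc metadata.
NS = {
    "oai": "http://www.openarchives.org/OAI/2.0/",
    "oai_dc": "http://www.openarchives.org/OAI/2.0/oai_dc/",
    "dc": "http://purl.org/dc/elements/1.1/",
}

# Made-up sample response, for illustration only.
SAMPLE = """<OAI-PMH xmlns="http://www.openarchives.org/OAI/2.0/">
  <ListRecords>
    <record>
      <header>
        <identifier>oai:example.org:0001</identifier>
        <datestamp>2014-04-01</datestamp>
      </header>
      <metadata>
        <oai_dc:dc xmlns:oai_dc="http://www.openarchives.org/OAI/2.0/oai_dc/"
                   xmlns:dc="http://purl.org/dc/elements/1.1/">
          <dc:title>A made-up paper title</dc:title>
          <dc:creator>Doe, Jane</dc:creator>
          <dc:creator>Roe, Richard</dc:creator>
        </oai_dc:dc>
      </metadata>
    </record>
  </ListRecords>
</OAI-PMH>"""


def parse_records(xml_text):
    """Extract identifier, titles, and creators from each OAI-PMH record."""
    root = ET.fromstring(xml_text)
    records = []
    for rec in root.iterfind(".//oai:record", NS):
        records.append({
            "id": rec.findtext("oai:header/oai:identifier", namespaces=NS),
            "titles": [e.text for e in rec.iterfind(".//dc:title", NS)],
            "creators": [e.text for e in rec.iterfind(".//dc:creator", NS)],
        })
    return records


recs = parse_records(SAMPLE)
print(recs)
```

The list of creators per record is the kind of input the co-authorship analysis later in the deck starts from.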

- 24. MIT Internal Data Institute Data (Restricted Use) • IS&T Data Warehouse: data from administrative systems, e.g. MIT people, organizations, grants and awards ist.mit.edu/warehouse • Office of the Provost – Institutional Research: provides analytical and research support to the Provost, academic departments, research laboratories and centers web.mit.edu/ir/ Libraries Data • DSpace@MIT: lists of publications in DSpace by author/department dspace.mit.edu • Barton: lists of MIT theses by author/advisor library.mit.edu

- 25. Comparing Databases Coverage • Years • Disciplines • Publishers/sources • Venue – journals/conferences/working papers/IR/personal web sites • Documentation of coverage • Completeness Characteristics • Internal vs. external • Free vs. fee-based • API vs. interactive • Open data vs. restrictive licensed • Structured vs. unstructured • Full text vs. metadata

- 26. Selecting a Database • Free, quick, and useful → Google Scholar • Extract data for further simple analysis → Scholarometer (Google Scholar extract), Scopus, WOS • More complete coverage → use multiple databases • Specialized subject/single article → disciplinary database/API • Extract data for network analysis, text mining → API (Scale: Free & Easy → $$ and/or programmatic)

- 27. Measures (Article metrics) (Author Impact) (Journal Impact) (Collaboration) (Network Analysis)

- 28. Article Metrics: Overview What are article-level metrics? • Measures on specific published articles • Typically used in construction of literature reviews; or as building blocks for other measures Common measures • Citations list • Citation counts • References • Captures/bookmarks • Downloads • Mentions • Likes • Views • Readers sparc.arl.org/sites/default/files/sparc-alm-primer.pdf

- 29. Article Metrics: Using Google Scholar Steps 1. Go to scholar.google.com 2. Search (Full Text + Metadata) – Unstructured keyword search OR – “Advanced” fielded search 3. Sort – by relevance OR – by date 4. Filter – By Date range AND/OR – By Corpus (case law, patents) Results • Number of citations to the article indexed by Google Scholar • List of citing articles • Article text (sometimes)

- 30. Article Metrics: Example – Google Scholar

- 31. Article Metrics: Altmetrics Types • Captures/bookmarks • Downloads • Mentions • Likes • Views • Readers Sources • Social media • Reference management (e.g. CiteULike, Mendeley) • Indexes/searches (e.g. Scopus) Sources • PLOS article metrics article-level-metrics.plos.org • Plum Analytics plumanalytics.com • ImpactStory impactstory.org

- 32. Article Metrics: Database Comparison

      | | Google Scholar, Scopus, WOS | PLOS (PLOS articles only) | PlumX |
      |---|---|---|---|
      | Coverage | Wide variety | PLOS articles | Wide variety |
      | Measures | Citation count, citation list | Citation count, citation list, views, downloads, mentions, bookmarks, comments | Citation count, citation list, views, downloads, mentions, bookmarks, comments |

- 33. ‘Impact’ Factors: Overview What are impact factors? • Descriptive statistics • Usually based on citations • Commonly treated as a proxy for the level of influence of an article, person, or journal Common measures • ISI Journal Impact Factor: The frequency with which the “average article” has been cited in a particular year. It is based on the most recent two years of citations. It is only supplied for journals indexed by ISI in the Web of Science. • Article Citation Count: Total number of citations received from other articles to target article. • H-Index: The maximum number of articles h such that each has received at least h citations libraries.mit.edu/scholarly/publishing/impact-factors/
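
The measures defined above are simple enough to compute by hand from a list of citation counts. The following is a minimal sketch, not any database's official implementation: the function names and the sample numbers are illustrative assumptions, and the i10-index (papers with at least 10 citations) is the Google Scholar measure mentioned earlier in the deck.

```python
def h_index(citations):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


def i10_index(citations):
    """Number of papers with at least 10 citations."""
    return sum(1 for c in citations if c >= 10)


def two_year_impact_factor(cites_this_year, items_prev_two_years):
    """Citations received this year to items from the previous two years,
    divided by the number of citable items published in those two years."""
    return cites_this_year / items_prev_two_years


# Made-up citation counts for one author's seven papers.
papers = [25, 8, 5, 3, 3, 1, 0]

print("total citations:", sum(papers))  # 45
print("h-index:", h_index(papers))      # 3
print("i10-index:", i10_index(papers))  # 1
# Made-up journal: 300 citations this year to 120 items from the prior two years.
print("impact factor:", two_year_impact_factor(300, 120))  # 2.5
```

Different databases will report different values for the same author or journal, because each counts citations only within its own coverage.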

- 34. Author Impact: Example – Google Scholar

- 35. Author Impact: Example – Exporting Data with Scholarometer

- 36. Author Impact: Example – Web of Science

- 37. Author Impact: Database Comparison

      | | Google Scholar | Scholar + Scholarometer | Scopus | Web of Science |
      |---|---|---|---|---|
      | Select any author | Only w/profiles | Yes | Yes | Yes |
      | Export data | No | Yes | Yes | Yes |
      | Exclude articles | No | Yes | Yes | Yes |
      | Metrics | H-index, I10, num cites | H-index, I10, num cites | H-index, … | H-index |
      | Visualization | Minimal | Minimal | Yes | Yes |
      | Longitudinal | Minimal | Minimal | Yes | Yes |

- 38. Journal Impact: Using Online Services Scholar 1. Go to scholar.google.com 2. Click on METRICS 3. Google rank and journal h-5 factor displayed 4. Filter by country & field Scopus • Go to scopus.com • Click on Journal Analyzer • Select journal • Select statistics Web of Science 1. Go to admin-apps.webofknowledge.com/JCR/ 2. Select field and year + SUBMIT 3. Select subject + SUBMIT

- 39. Journal Impact: Example – Google Scholar

- 40. Journal Impact: Example – Web of Science

- 41. Journal Impact: Example – Scopus

- 42. Journal Impact: Database Comparison

      | | Google Scholar | Scopus | Web of Science |
      |---|---|---|---|
      | Journals covered | Top 100 ranked in each language | Mostly English-language | Many (selected) journals |
      | Metrics | H5, median | Many | Impact factor, many others |
      | Visualization | No | Yes | Yes |
      | Longitudinal analysis | No | Yes | Yes |
      | Discipline rankings | No | No | Yes |

- 43. Network Analysis What is network analysis? • Study of objects and interactions modeled as an induced network (or graph) • Units of observation form nodes • Relationships form edges Common measures • Community detection – Modularity – Clustering – Clique • Centrality – Betweenness – Degree – Closeness • Diameter • Visualization
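
To make a few of these measures concrete, here is a minimal pure-Python sketch that computes degree centrality, closeness centrality, and diameter on a tiny undirected co-authorship graph. The edge list and function names are made up for illustration, the closeness calculation assumes a connected graph, and in practice a library such as NetworkX (listed in the tools section) would normally be used instead.

```python
from collections import deque


def build_graph(edges):
    """Undirected graph as a dict mapping each node to its set of neighbors."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def bfs_distances(graph, source):
    """Shortest path length (in edges) from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def degree_centrality(graph):
    """Fraction of the other nodes each node is directly connected to."""
    n = len(graph)
    return {v: len(nbrs) / (n - 1) for v, nbrs in graph.items()}


def closeness_centrality(graph):
    """(n - 1) divided by the sum of distances to all other nodes.

    Assumes the graph is connected.
    """
    n = len(graph)
    return {v: (n - 1) / sum(bfs_distances(graph, v).values()) for v in graph}


def diameter(graph):
    """Longest shortest path between any pair of nodes (connected graph)."""
    return max(max(bfs_distances(graph, v).values()) for v in graph)


# Made-up co-authorship edges: nodes are authors, edges are joint papers.
graph = build_graph([("A", "B"), ("B", "C"), ("C", "D"), ("B", "D")])
degree = degree_centrality(graph)
closeness = closeness_centrality(graph)
print(degree)
print(closeness)
print(diameter(graph))
```

In this toy graph author B is connected to everyone, so B has the highest degree and closeness scores.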

- 44. Network Analysis: Example – CitNetExplorer

- 45. Network Analysis: Example – CitNetExplorer 1. Use WOS to locate records 2. Add records to “marked list” 3. Click “marked list” 4. Check “cited references” 5. Save to other file formats 6. Select Windows tab-delimited 7. Open in CitNetExplorer

- 46. CoAuthorship Analysis Example – Using R and JSTOR – Part 1

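- 47. CoAuthorship Analysis Example – Using R and JSTOR – Part 2

          # Shell: keep only the first 11 comma-separated columns
          % cut -d"," -f 1-11 citations.CSV > areastudies2003.csv

          # R: read the citation records into a data frame
          # (assumes the trimmed file from the cut step is the intended input)
          R> areastudies.df <- read.table(file="areastudies2003.csv", row.names=NULL,
                                          sep=",", quote="", stringsAsFactors=FALSE, header=TRUE)
          # Split each author field into a vector of names (tab separator assumed)
          R> authorList <- strsplit(areastudies.df$author, perl=TRUE, split="\t")
          # Distribution of the number of authors per paper
          R> plot(table(sapply(authorList, length)))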

- 48. CoAuthorship Analysis Example – Using R and JSTOR – Part 3

          # Build, for every author, the set of names appearing on their papers
          createCoauthorlist <- function(pl) {
            coauthors <- list()
            # Merge the authors of one paper into the entry for author `co`
            updateCoauthor <- function(co, paperAuthors) {
              tmp <- unlist(coauthors[co])
              tmp <- union(tmp, unlist(paperAuthors))
              coauthors[[co]] <<- tmp
            }
            sapply(pl, function(x) sapply(x, function(y) updateCoauthor(y, x)))
            return(coauthors)
          }

          R> coa <- createCoauthorlist(authorList)
          # Distribution of the number of names linked to each author
          R> plot(table(sapply(coa, length)))

      Note: Results are biased downward if a sample of records is used!
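
For readers working in Python rather than R, the same co-author bookkeeping can be sketched as below. This is an analogue under stated assumptions, not a translation of the slide's exact behavior: the sample `papers` list is made up, `coauthor_sets` is a hypothetical helper name, and unlike the R version it leaves each author out of their own co-author set.

```python
from collections import Counter, defaultdict


def coauthor_sets(author_lists):
    """Map each author to the set of distinct people they have published with."""
    coauthors = defaultdict(set)
    for authors in author_lists:
        for author in authors:
            # Everyone else on the paper is a co-author of `author`.
            coauthors[author].update(a for a in authors if a != author)
    return coauthors


# Made-up author lists, one per paper.
papers = [["Ann", "Bob"], ["Ann", "Cy", "Dee"], ["Eve"]]

coauthors = coauthor_sets(papers)

# Distribution of authors per paper (what Part 2 plots).
authors_per_paper = Counter(len(p) for p in papers)
# Distribution of distinct co-authors per author (what Part 3 plots).
size_distribution = Counter(len(s) for s in coauthors.values())

print(dict(coauthors))
print(authors_per_paper)
print(size_distribution)
```

As the slide's note says, counts like these understate collaboration when only a sample of an author's papers is included.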

- 49. Variations: Retrieving Authors from PLOS

          library(rplos)
          options(PlosApiKey = "YOURKEY")

          # Page through PLOS search results in batches until none remain
          fetchPlosResults <- function(qstring, fstring, start = 0) {
            moreResults <- TRUE
            results.df <- NULL
            batStart <- start
            batSize <- 999
            while (moreResults) {
              tmp.df <- try(silent = TRUE,
                            searchplos(terms = "*:*", toquery = qstring, fields = fstring,
                                       start = batStart, limit = batSize))
              if (inherits(tmp.df, "try-error")) {
                moreResults <- FALSE
              } else if (is.null(dim(tmp.df))) {
                moreResults <- FALSE
              } else if (dim(tmp.df)[1] == 0) {
                moreResults <- FALSE
              } else {
                # Keep the first batch as-is; merge later batches into it
                results.df <- if (is.null(results.df)) tmp.df else merge(tmp.df, results.df, all = TRUE)
                batStart <- batStart + batSize
                cat(paste(batStart, date(), "\n"))              # progress message
                save(results.df, file = "/tmp/plosTMP.RData")   # checkpoint
              }
            }
            return(results.df)
          }

          plosRes.df <- fetchPlosResults(
            qstring = 'publication_date:[2012-01-01T00:00:00Z TO 2012-12-31T23:59:59Z]',
            fstring = "id,author,journal,publication_date,subject,subject_level_1,references,article_type"
          )

- 50. Limitations Limitations of data • Citation differs systematically from sharing, reading, or ‘use’ • Relationships signaled by citation are heterogeneous: citations may indicate evidentiary support, definitions, disagreement, kudos,… • Cited objects are heterogeneous: e.g. journals include letters, comments, reviews and original research • Databases may have limited or inconsistent coverage of publishers, fields, years, types of publications (e.g. conference proceedings), or types of objects (databases, software, books, articles) • Some types of objects are often used without being cited Limitations of measures • Most measures are vulnerable to self-citation and other sorts of manipulation • Most measures are descriptive estimates; they are not forecasts or causal inferences • Few studies of the external validity of measures • Few studies on error and bias in estimators

- 51. Tools (Built-in tools) (Analysis tools)

- 52. Built-in Tools • Database portals have built-in tools: Google Scholar; Scholarometer; Web of Science … • Typical restrictions of built-in tools – Single database – Number of records – Usually single-author/single journal metrics – Lacks statistical forecasting/causal models – Limited data-cleaning options – Simple visualizations

- 53. External Tools Feature sets • Data retrieval • Data processing (next section) • Core statistics • Visualization • Exploratory network analysis • Network modeling Choosing a tool • Open vs. closed source • Free vs. commercial • GUI vs. CLI • Scalability • Single Platform/Multi-Platform • Feature Set • Maintenance/support

- 54. Publish or Perish • Automatic data retrieval – MS Academic Search – Google Scholar • Standard single-author metrics – Total number of papers and total number of citations – Average citations per paper, citations per author, papers per author, and citations per year – Hirsch's h-index and related parameters and variations • Data export to CSV www.harzing.com/pop.htm

- 55. Scholarometer Data • Google Scholar • Crowd-sourced tags (disciplines) – available through API • Data export to CSV Metrics • Single/combined author citation count/h-index rank • Discipline rank • Author network visualization • Discipline network visualization scholarometer.indiana.edu

- 56. Pajek Analysis • Network visualization • Supports complex networks: multi-relational, longitudinal, 2-mode • Layout control • Clustering • Community detection pajek.imfm.si Source: www.public.asu.edu/~majansse/pubs/SupplementIHDP.htm

- 57. CitNetExplorer Features • Citation/bibliometric specific tool • Web of Science import • Pajek export • Large networks (millions of publications) • Simple network visualizations • Network measures: connected components, clusters, core publications … citnetexplorer.nl

- 58. CiteSpace Features • Citation/bibliometric tool • Import from WOS, arXiv, NSF, ADS, PubMed • Export to CSV, GraphML, Pajek • Time slicing • Network measures: connected components, clusters, core publications … • Topic clustering cluster.cis.drexel.edu/~cchen/citespace

- 59. SciMat Features • Workflow support • Network visualization • Data processing and cleanup • Longitudinal analysis • Metrics: h-index sci2s.ugr.es/scimat/

- 60. Gephi Analysis • Network graphs & layout • Dynamic filtering (including time-sliders) • Clustering • SNA: betweenness, closeness, diameter, PageRank, HITS,… • Community detection (modularity) gephi.org

- 61. Sci2Tool Analysis and Visualization • Temporal – burst detection • Geospatial • Topical • Networks – trees and graphs Additional Benefits • Parsers for citation data • Bibliometric analysis tools • Portable output files • Direct connections to R and Gephi http://sci2.cns.iu.edu

- 62. Command-Line Tools Using Python • SciPy: scientific data processing, statistics, visualization scipy.org • NLTK: text processing and analysis nltk.org • NetworkX: network measures (descriptive) networkx.github.io • Bibtools: parse WOS data, and identify communities of cocitation www.sebastian-grauwin.com/?page_id=492 • PythonOAI: retrieve bibliographic metadata from OAI sources, such as arXiv pypi.python.org/pypi/pyoai/ Using R • tm: simple text processing and analysis cran.r-project.org/web/packages/tm/ • StatNet: network measures (descriptive); social network analysis (forecasting, causal); visualization statnet.org • Citan: citation analysis cran.r-project.org/web/packages/CITAN • Rplos: retrieve citation data from PLOS http://cran.r-project.org/web/packages/rplos/ • Rmendeley: retrieve citation data from Mendeley http://ropensci.org/packages/rmendeley.html • RISmed: retrieve data from NCBI http://cran.r-project.org/web/packages/RISmed/index.html • OAIHarvester: retrieve data from OAI-PMH sources cran.r-project.org/web/packages/OAIHarvester/ Web integration for interactive visualization: d3js.org

- 63. Characteristics of Tools • Built-in vs. external • Free vs. fee-based • Command line vs. interactive • Open source vs. closed source • Domain – Data extraction, retrieval, integration – Data cleaning and manipulation – Network visualization – Advanced measures – Statistical analysis

- 64. Choosing tools • Simple standard impact → built-in database tools; Publish or Perish; Scholarometer • Messy data → OpenRefine + … • Network analysis measures – Network measures → Sci2, SciMat, Pajek – Visualizations → Gephi, Pajek, CitNet, SciMat • Need to estimate complex statistical (predictive, statistical) models → R • Need maximum software flexibility, integration with software → Python (Scale: Quick Start → Power Tools)

- 65. Data Processing (reorganizing data) (cleaning data) (matching names)

- 66. Open Refine • Spreadsheet/database combination – Ease of use of spreadsheets – Reporting and manipulative power of databases • Filters, facets, and clustering – Allow granular overview of what’s in your data – Easily see occurrence distribution of values – Easily make global corrections • Supports both row-level and record-level (multi-row) operations openrefine.org

- 67. Open Refine – Reorganize Data Reorganizing Data • Splitting/joining multi-valued cells • Transposing rows/columns • Supports logic-based transformation – Google Refine Expression Language (GREL) – Clojure – Jython openrefine.org

- 68. Open Refine – Cleaning Data Cleaning Data • Duplicate detection • Common data transformations – Trimming whitespace – Normalizing text case • Cluster/edit for matching and normalization Additional Benefits • Perform mass edits efficiently • Revision history allows for roll-back to earlier state • Transformations recorded as JSON – Portable for future data sets • Browser-based openrefine.org
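
The cleaning steps listed above (trimming whitespace, normalizing case, clustering variants) can be approximated in a few lines of Python. The sketch below is a simplified take on key-collision ("fingerprint") clustering and is not OpenRefine's actual implementation; the function names and sample values are illustrative assumptions.

```python
import string
from collections import defaultdict


def fingerprint(value):
    """Normalize a string into a key that ignores case, punctuation,
    surrounding whitespace, word order, and repeated words."""
    value = value.strip().lower()
    value = value.translate(str.maketrans("", "", string.punctuation))
    tokens = sorted(set(value.split()))
    return " ".join(tokens)


def cluster(values):
    """Group values sharing a fingerprint; keep groups with real variants."""
    groups = defaultdict(list)
    for value in values:
        groups[fingerprint(value)].append(value)
    return [group for group in groups.values() if len(set(group)) > 1]


# Made-up institution names with inconsistent formatting.
names = ["MIT Libraries", "mit libraries ", "Libraries, MIT", "Harvard Library"]

clusters = cluster(names)
print(clusters)
```

Each cluster is a candidate for a single mass edit; a person should still review the groups, since distinct entities can collide on the same key.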

- 69. Open Refine – Matching Names Matching names • Create filters to navigate larger datasets • Create facets to see all unique values/occurrences • Auto-detect variant entries • Cluster/edit for matching and normalization • Reconciliation services against external data for normalization/aggregation openrefine.org

- 70. Name Disambiguation Methods • Dictionary-based entity matching • Phonetic matching • Rules-based linkage • Probability-based linking – Edit distance – Fellegi-Sunter algorithm – Machine learning Tools • Febrl sourceforge.net/projects/febrl/ • RecordLinkage (for R) cran.r-project.org/web/packages/RecordLinkage/ • Link-King (for SAS) the-link-king.com Source: en.wikipedia.org/wiki/Record_linkage
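
Edit distance, one of the methods listed above, counts the single-character insertions, deletions, and substitutions needed to turn one string into another. Here is a minimal sketch of Levenshtein distance with a length-normalized similarity score; the 0.85 threshold, the helper names, and the sample names are illustrative assumptions rather than recommended settings.

```python
def edit_distance(a, b):
    """Levenshtein distance between strings a and b (dynamic programming)."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, char_a in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            curr.append(min(
                prev[j] + 1,         # delete char_a
                curr[j - 1] + 1,     # insert char_b
                prev[j - 1] + cost,  # substitute (or match)
            ))
        prev = curr
    return prev[-1]


def similarity(a, b):
    """1.0 for identical strings, approaching 0.0 as they diverge."""
    longest = max(len(a), len(b)) or 1
    return 1 - edit_distance(a, b) / longest


def likely_same(a, b, threshold=0.85):
    """Crude match rule: case-insensitive similarity above a threshold."""
    return similarity(a.lower(), b.lower()) >= threshold


print(edit_distance("kitten", "sitting"))             # 3
print(likely_same("Altman, Micah", "Altman, Mica"))   # True
print(likely_same("Altman, Micah", "Allen, Liz"))     # False
```

A fixed threshold like this will both miss true matches (initials vs. full names) and merge distinct people with similar names, which is why the probabilistic and identifier-based approaches on the surrounding slides exist.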

- 71. Matching Names – Author Identifiers What are Author Identifiers? • Author identifiers give you a way to reliably and unambiguously connect your name(s) with your work throughout your career, including your papers, data, biographical information, etc. This can be helpful in a number of ways: • Provides a means to distinguish between you and other authors with identical or similar names. • Links together all of your works even if you have used different names over the course of your career. • Makes it easy for others (grant funders, other researchers etc.) to find your research output. • Ensures that your work is clearly attributed to you. Getting started with ORCID... • ORCID (Open Researcher and Contributor ID) is a non-proprietary, non-profit community-based registry of research identifiers. • Links authors to their datasets and other works in addition to articles. • Authors can control what information in their ORCID profile they share. Only the ORCID ID is automatically shared. (See their privacy policy.) • It is easy to import research output from other sources (including ResearcherID, Scopus Author ID, and DataCite Metadata Store) to your ORCID profile. (See ORCID's import works page.) • Many organizations and publishers have created integrations with ORCID including Nature Publishing Group, Elsevier, and the American Physical Society. • Free, private, 30-second registration: orcid.org/register libguides.mit.edu/content.php?pid=573578&sid=4729602

- 72. Bibliometric Hacking

- 73. Sharing, Creativity, Collaboration, Clarity Associated with Citation Impact • Collaboration/team science increases impact • Open access associated with substantially higher citations • Self-citation in moderation is associated with reinforced impact • Sharing data is associated with higher citation rates • Publishing regularly is associated with much higher impact • Citation measures only one type of use: you can collect evidence and measure others • Use clear titles, and meaningful keywords and abstracts • Mainstream social media, especially Twitter, can indicate broader use • Creativity matters

- 74. Not-so-positive findings (Image: Daniel Shechtman’s lab notebook providing initial evidence of quasicrystals) • Null results are less likely to be submitted and published → submit all your results • Publication bias leads to overestimates of effects/significance in many fields • Many data sharing and replication policies are not followed → share even when you are not forced to • Good science may not pass peer review → be persistent • Much research is not replicable → make yours replicable • Many publications are not cited • Multidisciplinary work is less cited • Edited volumes are not well cited → think carefully about publication venue, significance of research • Retraction rates in scientific journals have substantially increased • Author order is overemphasized in evaluation → discuss authorship early, use other ways of describing contributions and distributing credit • Delays in peer review and publishing are frequent and important → track your submissions, and politely but actively manage delays • Not enough time spent on research → develop a research habit, and build research into your schedule

- 75. From 10 Simple Rules … Graduate Students • Share your scientific success with the world Postdoctoral Positions • Negotiate first authorship before you start. Getting Published • If you do not write well in the English language, take lessons early • Become a reviewer early in your career. • Decide early on where to try to publish your paper. • Quality (of journals) is everything. Building Reputation • Think Before You Act • Do not ignore criticism • Do not ignore people • Diligently check everything you publish • Always declare conflicts of interest • Do your share for the community • Do not commit to tasks you cannot complete • Do not write poor reviews • Do not write references for people who do not deserve it • Never plagiarize, or doctor your data Sources: Bourne, Philip E. "Ten simple rules for getting published." PLoS Computational Biology 1, no. 5 (2005): e57; Gu, Jenny, and Philip E. Bourne. "Ten simple rules for graduate students." PLoS Computational Biology 3, no. 11 (2007): e229; Bourne, Philip E., and Virginia Barbour. "Ten simple rules for building and maintaining a scientific reputation." PLoS Computational Biology 7, no. 6 (2011): e1002108; Bourne, Philip E., and Iddo Friedberg. "Ten simple rules for selecting a postdoctoral position." PLoS Computational Biology 2, no. 11 (2006): e121.

- 76. Self Experimentation*: 10 Simple Steps • Identify yourself -- register for: 1. An identifier – ORCID 2. Information hubs: ORCID; LinkedIn; your own domain name, forwarded to LinkedIn; SlideShare 3. Communication channels: Twitter, LinkedIn • Describe yourself 4. Write and share a 1-paragraph bio 5. Describe your research program in 2 paragraphs 6. Create a CV [Post these on your LinkedIn and ORCID profiles] • Share 7. Share (on Twitter & LinkedIn) news about something you did or published; an upcoming event in which you will participate; interesting news and publications in your field 8. Make writing, data, publications, and software available as Open Access (through your institutional repository, SlideShare, FigShare, Dataverse) • Monitor …check and record these things regularly, but not too frequently (once a month) -- and no need to react or adjust immediately 9. Set up tracking – Google Scholar, Google Alerts 10. Find your Klout score, H-index *Question: How do you tell an extroverted researcher? Answer: When she talks, she looks down at your shoes.

- 77. Resources (Readings) (Software) (Data) (Glossary)

- 78. Recommended Reading • Data Processing – General – Getting Started: programminghistorian.org/lessons/cleaning-data-with-openrefine – References: Verborgh, Ruben, and Max De Wilde. Using OpenRefine. Packt Publishing Ltd, 2013. – Tutorials: github.com/OpenRefine/OpenRefine/wiki/External-Resources • Data Processing – Dealing with Names – Getting Started -- author identifiers guide: libguides.mit.edu/content.php?pid=573578&sid=4729602 – References: Winkler 2012; Name Matching and Record Linkages, U.S. Census http://www.census.gov/srd/papers/pdf/rr93-8.pdf

- 79. Recommended Reading (Continued) • Bibliometric Analysis – Tutorials: Anne-Wil Harzing, 2011, The Publish or Perish Book, Part 3: Doing bibliometric research with Google Scholar, Tarma software press; Wouter De Nooy, et al., 2011, Exploratory Social Network Analysis with Pajek, 2nd Edition, Cambridge University Press; author identifiers guide: libguides.mit.edu/content.php?pid=573578&sid=4729602; article level metrics: sparc.arl.org/sites/default/files/sparc-alm-primer.pdf – References: Eric D. Kolaczyk, 2009, Statistical Analysis of Network Data: Methods and Models, Springer.

- 80. Available Databases & APIs • Scholarly APIs: libguides.mit.edu/apis • Google Scholar: scholar.google.com • Scopus: scopus.com • Web of Science: admin-apps.webofknowledge.com • Author identifiers: libguides.mit.edu/content.php?pid=573578&sid=4729602 • List of MIT-licensed Databases: owens.mit.edu/sfx_local/az/mit_db • Altmetrics: – PLOS article metrics: article-level-metrics.plos.org – Plum Analytics: plumanalytics.com – ImpactStory: impactstory.org

- 81. Additional Selected Tools • OpenRefine: openrefine.org • Publish or Perish: www.harzing.com/pop.htm • Scholarometer: scholarometer.indiana.edu • CitNetExplorer: citnetexplorer.nl • CiteSpace: cluster.cis.drexel.edu/~cchen/citespace • Gephi: gephi.org • Sci2: sci2.cns.iu.edu • Pajek: pajek.imfm.si • SciMAT: sci2s.ugr.es/scimat/ • R Packages: – tm: cran.r-project.org/web/packages/tm/ – StatNet: statnet.org – CITAN: cran.r-project.org/web/packages/CITAN – Rplos: cran.r-project.org/web/packages/rplos/ – Rmendeley: ropensci.org/packages/rmendeley.html – RISmed: cran.r-project.org/web/packages/RISmed – OAIHarvester: cran.r-project.org/web/packages/OAIHarvester/l • Python Packages: – scipy: scipy.org – NLTK: nltk.org – networkx: networkx.github.io – bibtools: www.sebastian-grauwin.com/?page_id=492 – pyOAI: pypi.python.org/pypi/pyoai/

- 82. Glossary of Metrics • Author H-Index: The largest number h such that the author has h articles that have each received at least h citations. • Centrality: A measure of the importance of a node in the network, based on a selected abstract model of influence or flow across the network. Centrality measures include degree centrality (number of connections); closeness centrality (distance of the node to other nodes in the network); betweenness centrality (proportion of information that must pass through the node to go from one part of the network to another). • (ISI Journal) Impact Factor: The frequency with which the “average article” in a journal has been cited in a particular year. It is based on citations in that year to articles the journal published in the preceding two years. It is only supplied for journals indexed by ISI in the Web of Science. • Clustering: Methods that partition n observations into k clusters based on the characteristics of the objects. Clusters are defined either by a set of heuristics for forming the clusters, or according to a solution concept that the clusters will satisfy. One common algorithm, K-Means, assigns each observation to one of a fixed number K of clusters such that each observation belongs to the cluster whose mean value is closest to that of the observation. • Network community structure measures: The detection of highly interconnected groups of nodes within a network. Methods include hierarchical clustering; information maximization; modularity; clique detection. • Network Diameter: The greatest distance between any two nodes in the network: $\max_{i,j}\left(\min\left(\mathrm{distance}(i,j)\right)\right)$. • Page Rank: A family of iteratively calculated recursive impact factors in which citations from other journals are weighted by the impact of those journals.
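
Three of the glossary entries (H-index, impact factor, network diameter) are simple enough to compute by hand, and a small sketch may make the definitions more concrete. The Python below is illustrative only and is not taken from the slides: the function names and the adjacency-list input format are assumptions, the impact factor uses the standard two-year formula (citations in a year to items from the previous two years, divided by the number of citable items from those two years), and the diameter assumes a connected, undirected, unweighted network.

```python
from collections import deque


def h_index(citations):
    """Largest h such that h articles each have at least h citations."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


def impact_factor(citations_this_year, items_prior_two_years):
    """Two-year impact factor (standard formula, assumed here).

    citations_this_year: citations received this year by items the
        journal published in the previous two years.
    items_prior_two_years: number of citable items published in
        those two years.
    """
    if items_prior_two_years <= 0:
        raise ValueError("need at least one citable item")
    return citations_this_year / items_prior_two_years


def shortest_path_lengths(graph, source):
    """Breadth-first search: hop counts from source to each reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def diameter(graph):
    """Greatest shortest-path distance between any two nodes.

    graph: dict mapping each node to a list of its neighbors
        (hypothetical input format; undirected and unweighted).
    """
    longest = 0
    for node in graph:
        dist = shortest_path_lengths(graph, node)
        if len(dist) != len(graph):
            raise ValueError("graph is not connected")
        longest = max(longest, max(dist.values()))
    return longest


if __name__ == "__main__":
    print(h_index([10, 8, 5, 4, 3]))
    print(impact_factor(150, 60))
    chain = {"a": ["b"], "b": ["a", "c"], "c": ["b", "d"], "d": ["c"]}
    print(diameter(chain))
```

For real citation networks, the tools listed in the deck (for example networkx or Pajek) are a better fit than hand-rolled code; the sketch is meant only to show what each number measures.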

- 83. Questions? E-mail: escience@mit.edu Web: informatics.mit.edu

Editor's Notes

- This work, by Micah Altman (http://redistricting.info), is licensed under the Creative Commons Attribution-Share Alike 3.0 United States License. To view a copy of this license, visit http://creativecommons.org/licenses/by-sa/3.0/us/ or send a letter to Creative Commons, 171 Second Street, Suite 300, San Francisco, California, 94105, USA.

- The structure and design of digital storage systems is a cornerstone of digital preservation. To better understand ongoing storage practices of organizations committed to digital preservation, the National Digital Stewardship Alliance conducted a survey of member organizations. This talk discusses findings from this survey, common gaps, and trends in this area. (I also have a little fun highlighting the hidden assumptions underlying Amazon Glacier's reliability claims. For more on that see this earlier post: http://drmaltman.wordpress.com/2012/11/15/amazons-creeping-glacier-and-digital-preservation )

- 5 Minutes

- First, some background and the problem statement. These are three university rankings issued annually, rankings that are of particular importance to research libraries. Some universities even incorporate raising their ranking in their strategic plans. All three use citations as a factor in determining the rankings, which skews the results towards universities focusing on the sciences rather than the humanities. It also puts added weight on authors of journal articles, who are usually not represented in national authority files.

- 5 Minutes

- Who is that dashing fellow?

- Hmm… same corpus, but different estimates of impact… note the different methods of classification. Customizable: can exclude articles from the estimate. Note that the export format will require cleanup of the “Note” field to calculate other measures.

- Who is that dashing fellow?

- Google Scholar is quite limited both in the journals covered by its metrics and in the metrics available.

- 5 Minutes

- Self-citation: acts as impact reinforcement, and is not illegitimate [van Raan 2008]. Collaboration: collaborations are responsible for a disproportionate portion of impact [Wuchty, et al. 2007]. Regularity: strong association between being part of the very high impact group and publishing at least 3 times/year [Ioannidis, et al. 2014]. Open access: generally associated with higher citations [Eysenbach 2006; Norris, et al. 2008]; for a possible interaction with higher impact journals, see [Koler-Povh, et al. 2014]; open access publishing of ETDs does not obstruct publication as a print book (based on publisher surveys) [Seamans 2013]. Sharing data: see CODATA 2013 for a review. Citation measures one type of use: downloads are imperfectly correlated with citations, are typically much larger, and vary by discipline [Bollen et al. 2005; Gorraiz et al. 2013]; applied research impact correlates only weakly with journal impact [Sutherland, et al. 2011]. Clear titles: papers with compound titles are more highly cited [Fatemah, et al. 2014]; pleasant titles and mild humor are OK, but avoid primarily humorous titles [Sagi & Yechiam 2008]. Clear keywords and abstract: papers with keywords distinct from titles are more highly cited [Fatemah, et al. 2014]. Mainstream social media: see Bornmann 2014a,b on the value of Twitter for measuring complementary impact. Creativity: [Dewett & DeNisi 2004].

- Null results are less likely to be submitted and published [Franco, et al. 2014; Hopewell et al. 2009; and see CODATA 2013 for a review]. Publication bias leads to overestimates of effects/significance in many fields [Fanelli 2012]. Many data sharing and replication policies are not followed [see CODATA 2013 for a review]. Good science may not pass peer review [Peters & Ceci 1982; Gans & Shepherd 1994; Ginther, et al. 2011]. Much research is not replicable [see CODATA 2013 for a review]. Many publications are not cited at all [Hamilton 1991; Samuels 2011]. Edited volumes are not well cited: the evidence is preliminary but is reinforced by broad anecdotal evidence that edited volumes are typically down-weighted in tenure review [Bishop 2012]. Retraction rates in scientific journals have substantially increased [Brembs et al. 2013 – check CODATA]. Author ordering weighs too heavily in evaluation, so be conscious of it [Einav & Yariv 2006]. Multidisciplinary work is cited less [Levitt & Thelwall 2008]. Editorial delays are frequent, and important [Greenburg 2004; Ioannidis 1998]. Not enough time is spent on research [> 75% of associate profs spend < 13 hours/week on research and writing, Hurtado 2012].

- 5 Minutes