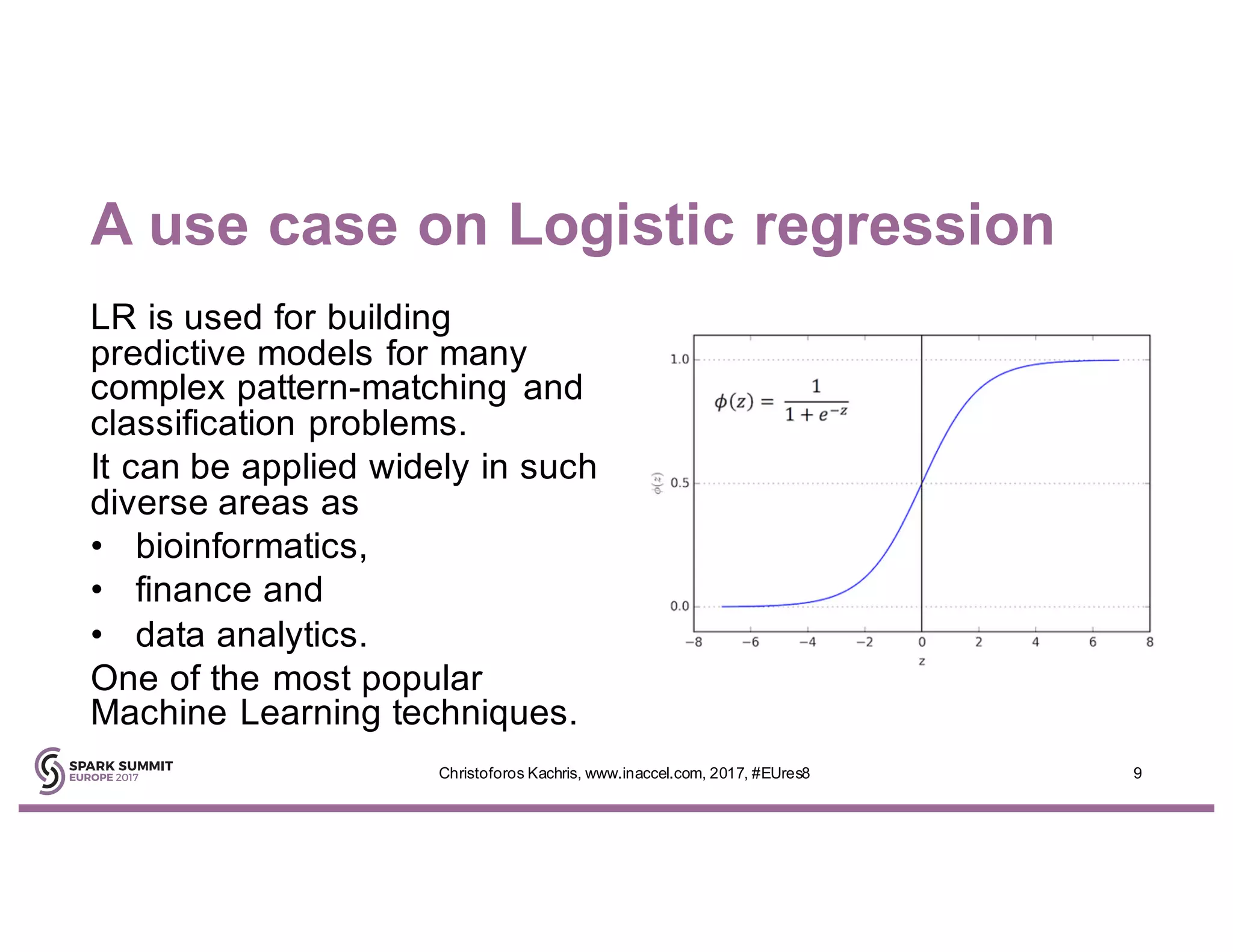

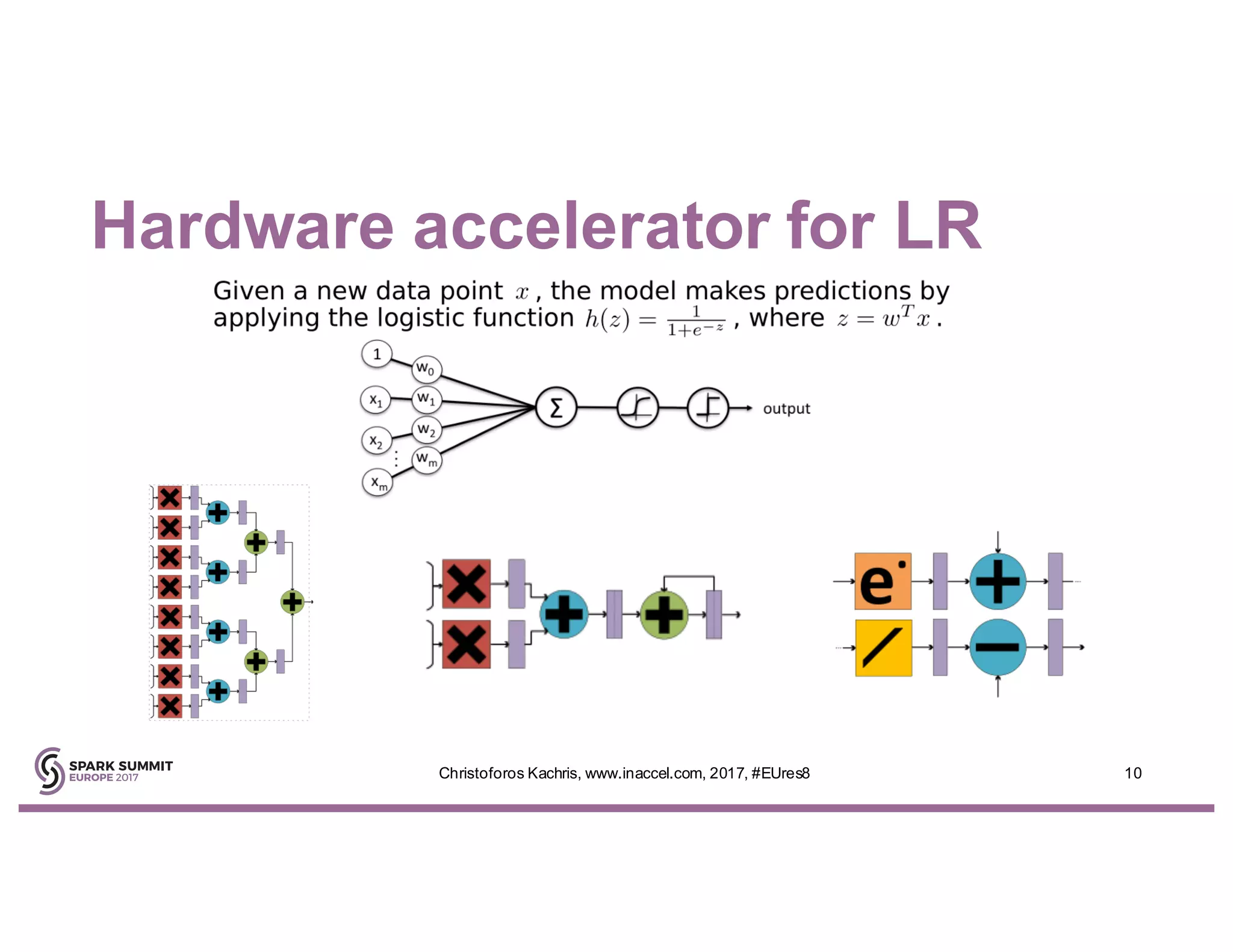

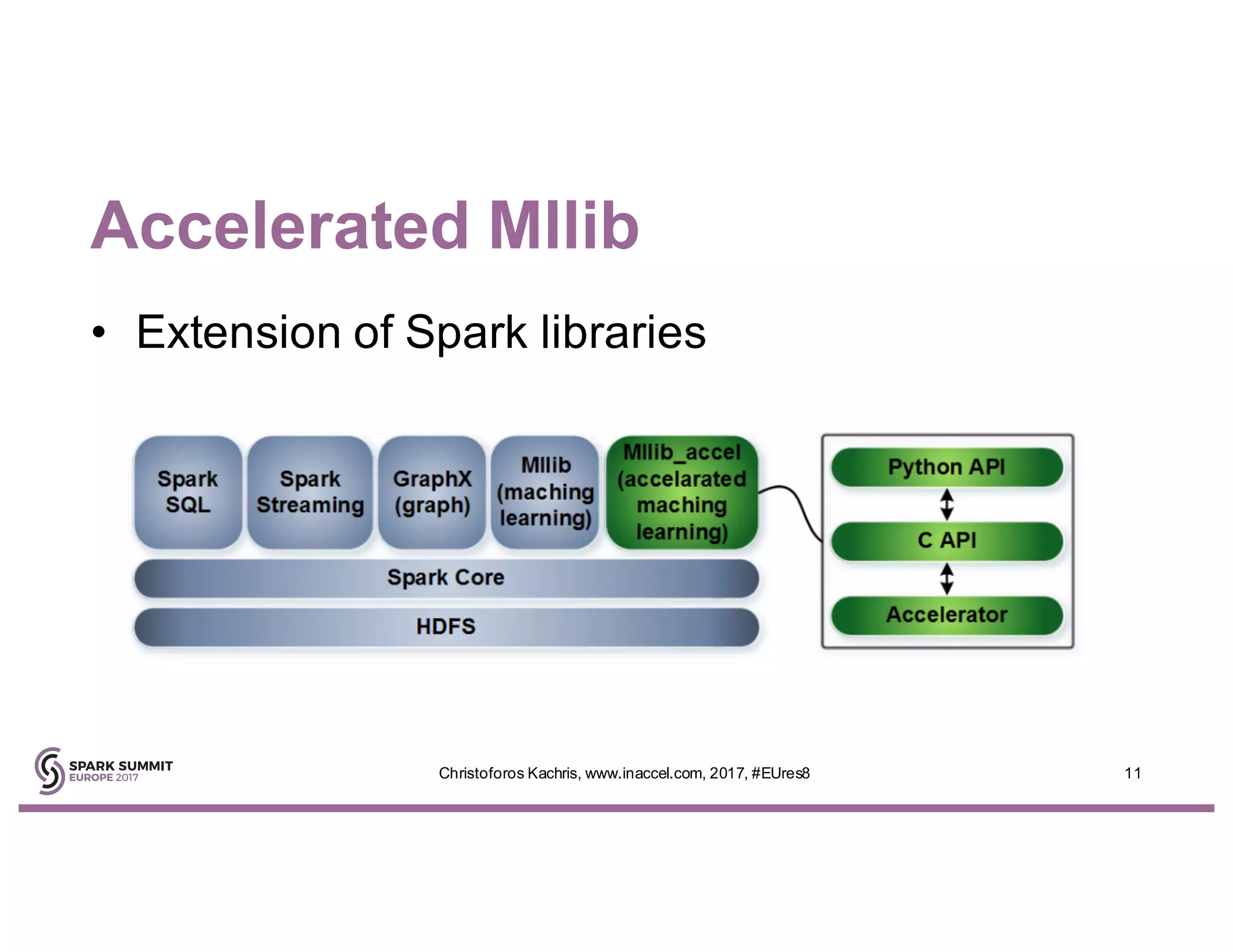

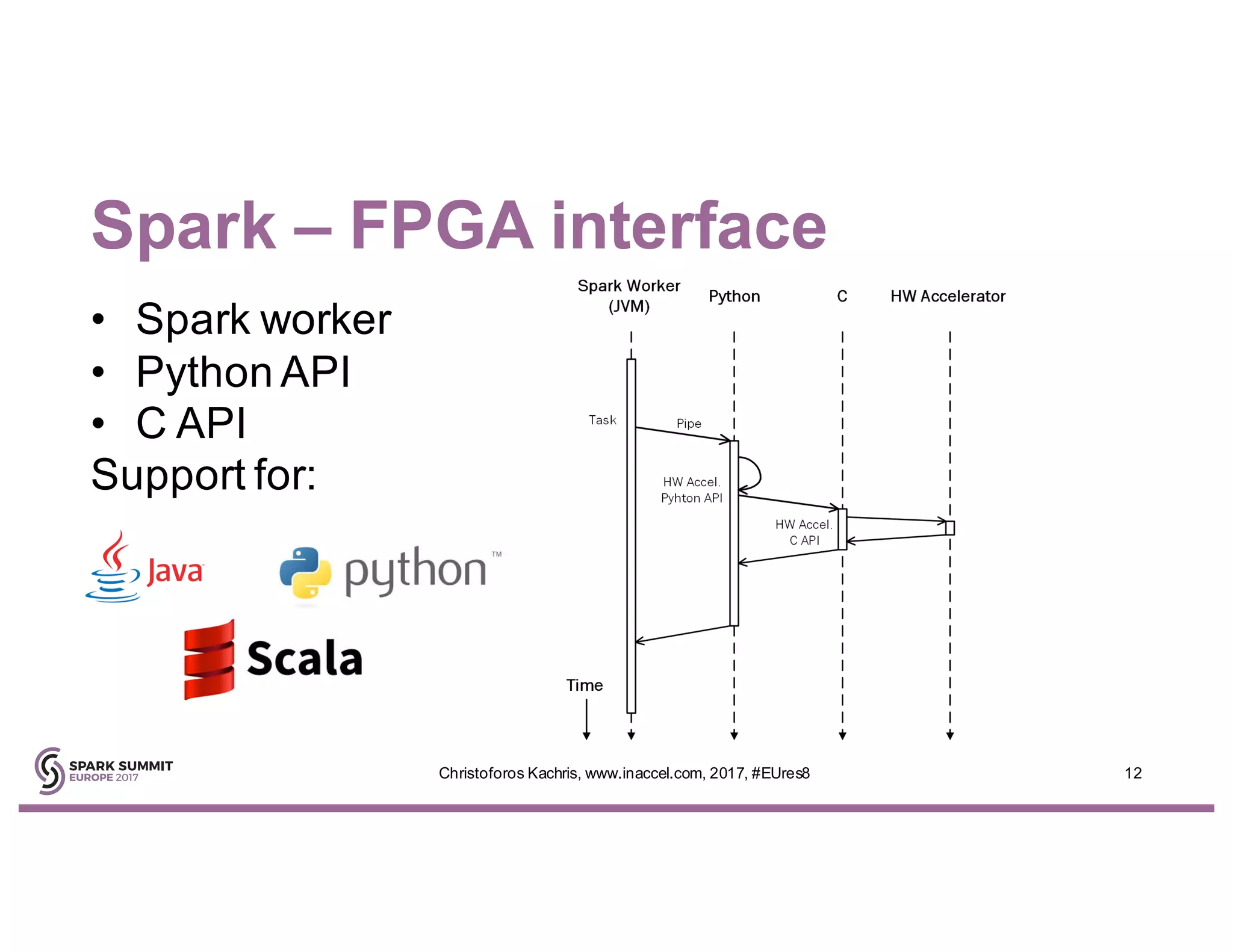

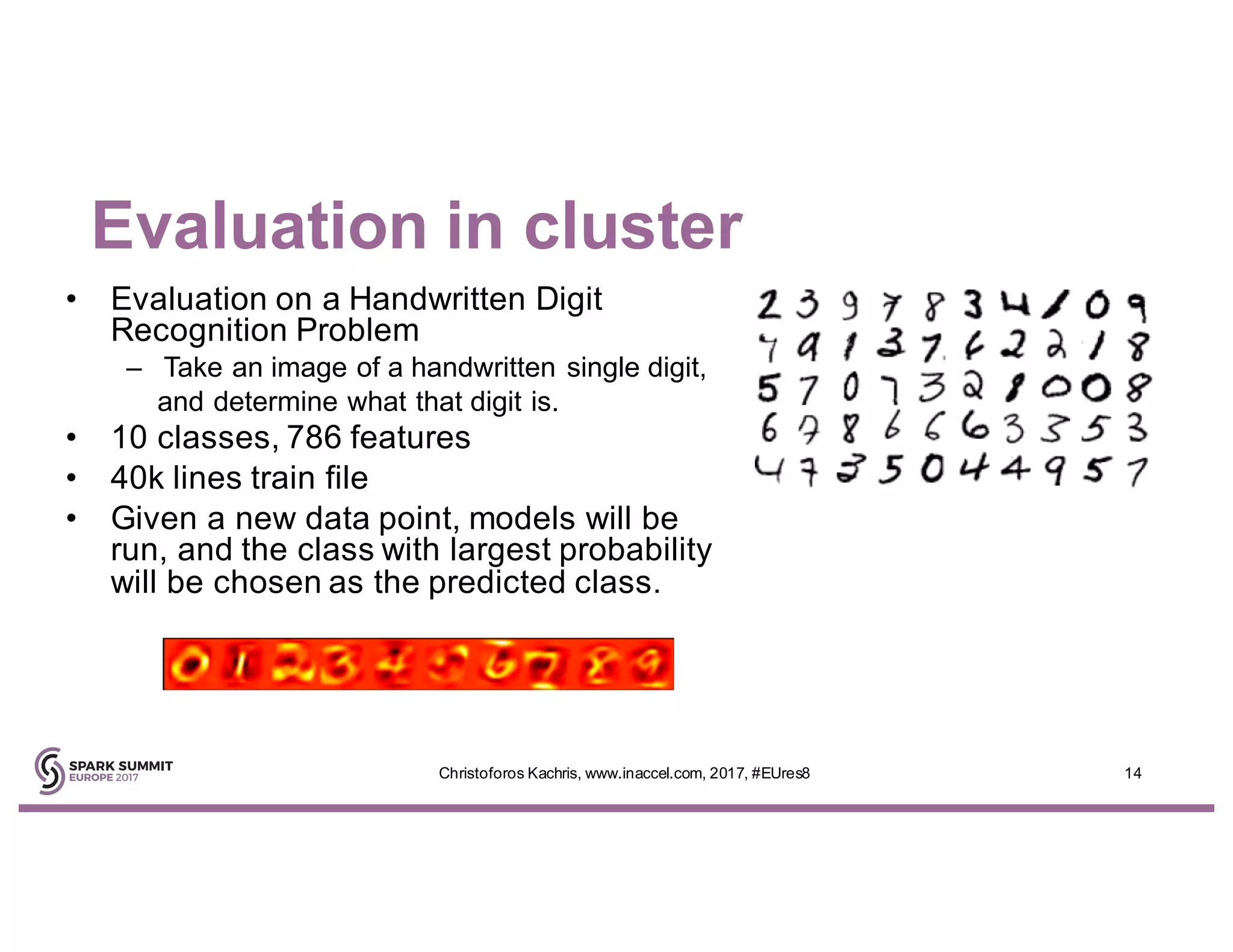

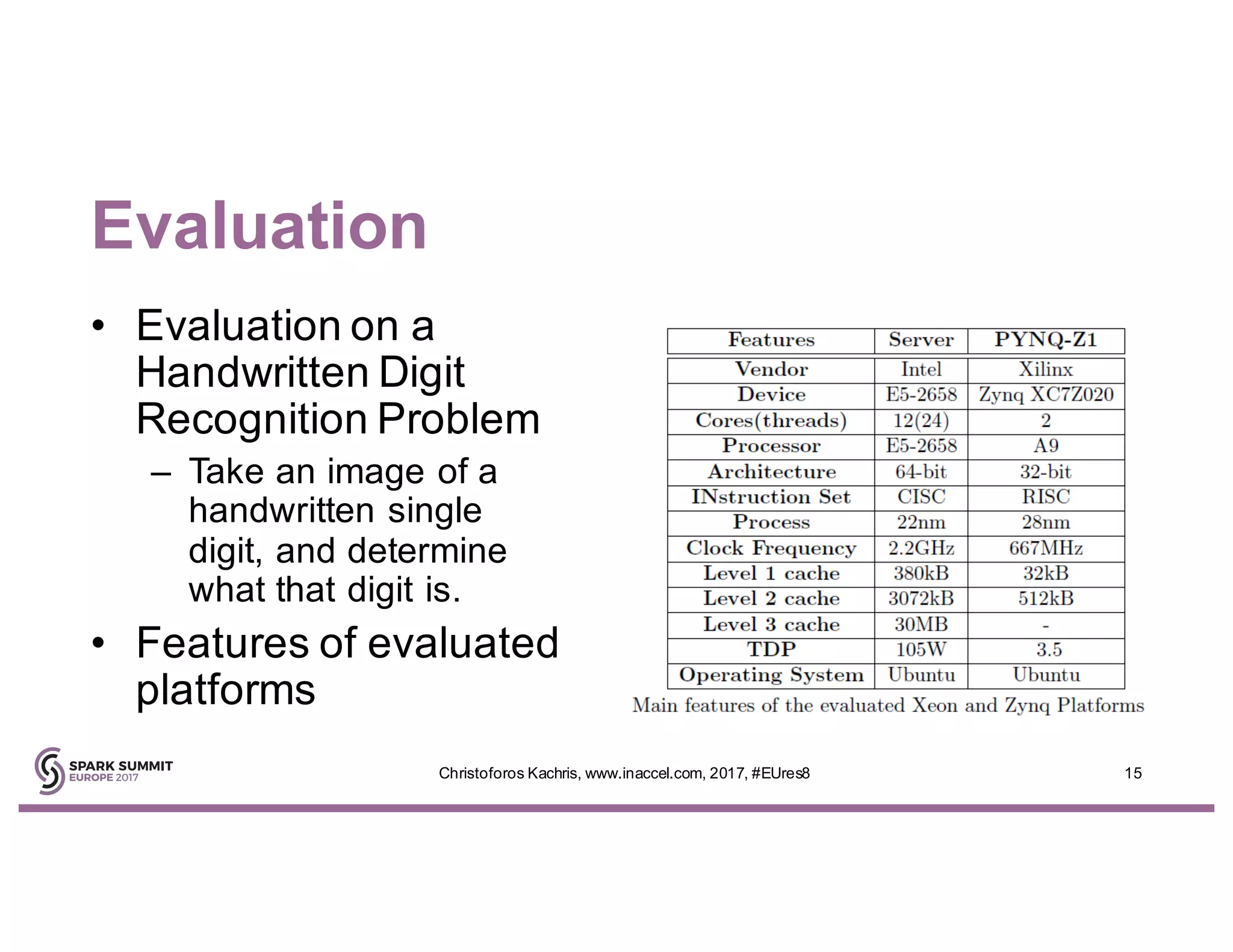

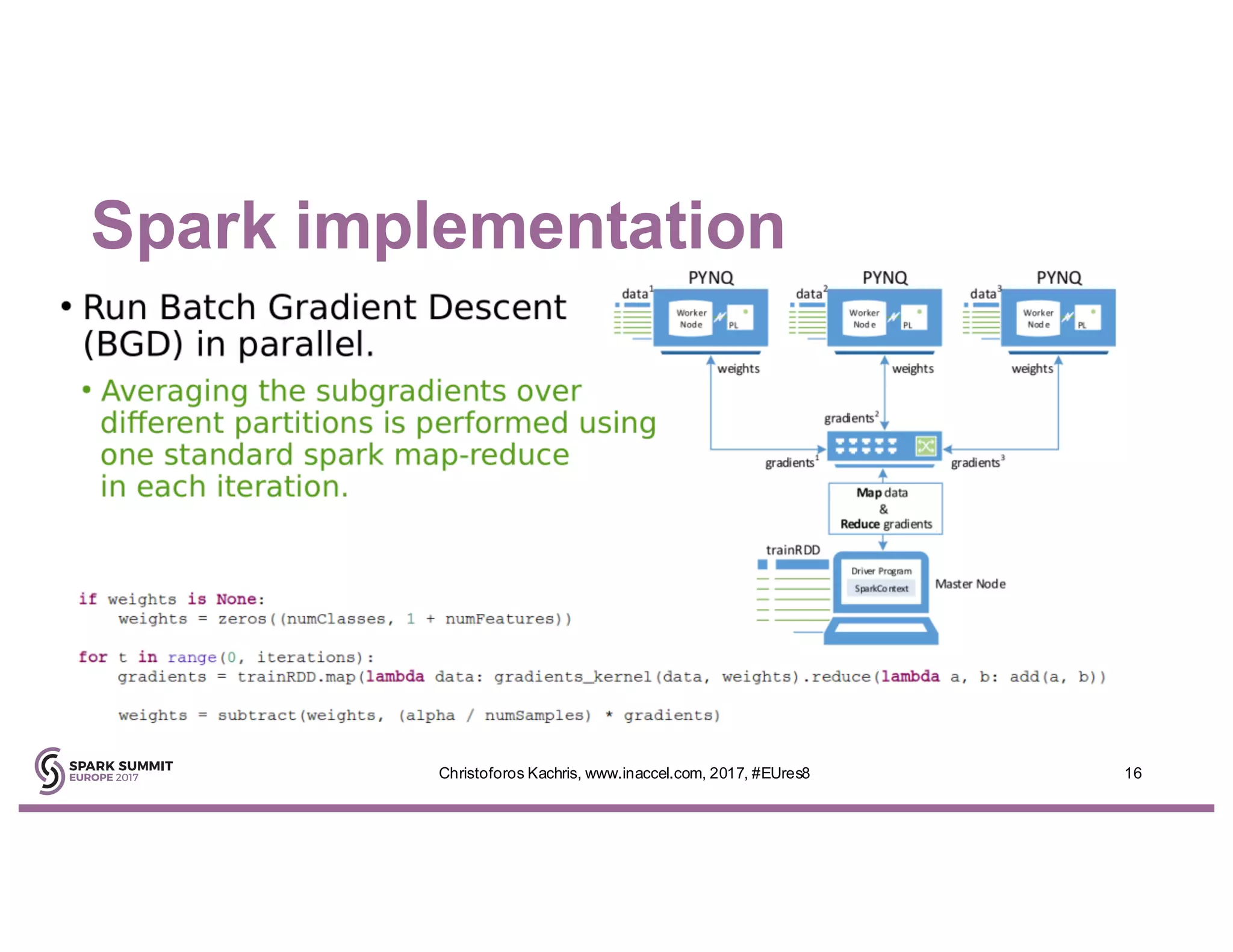

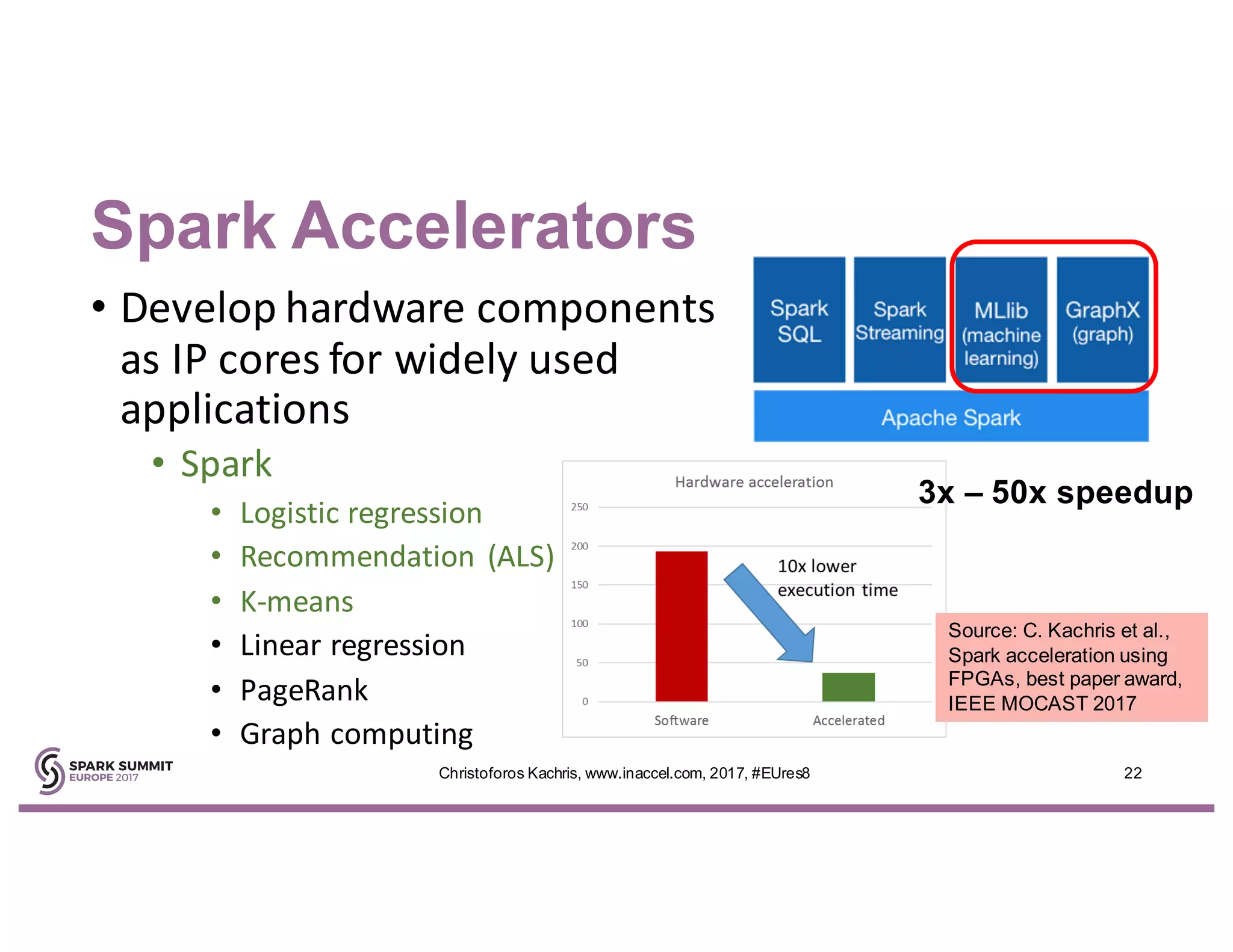

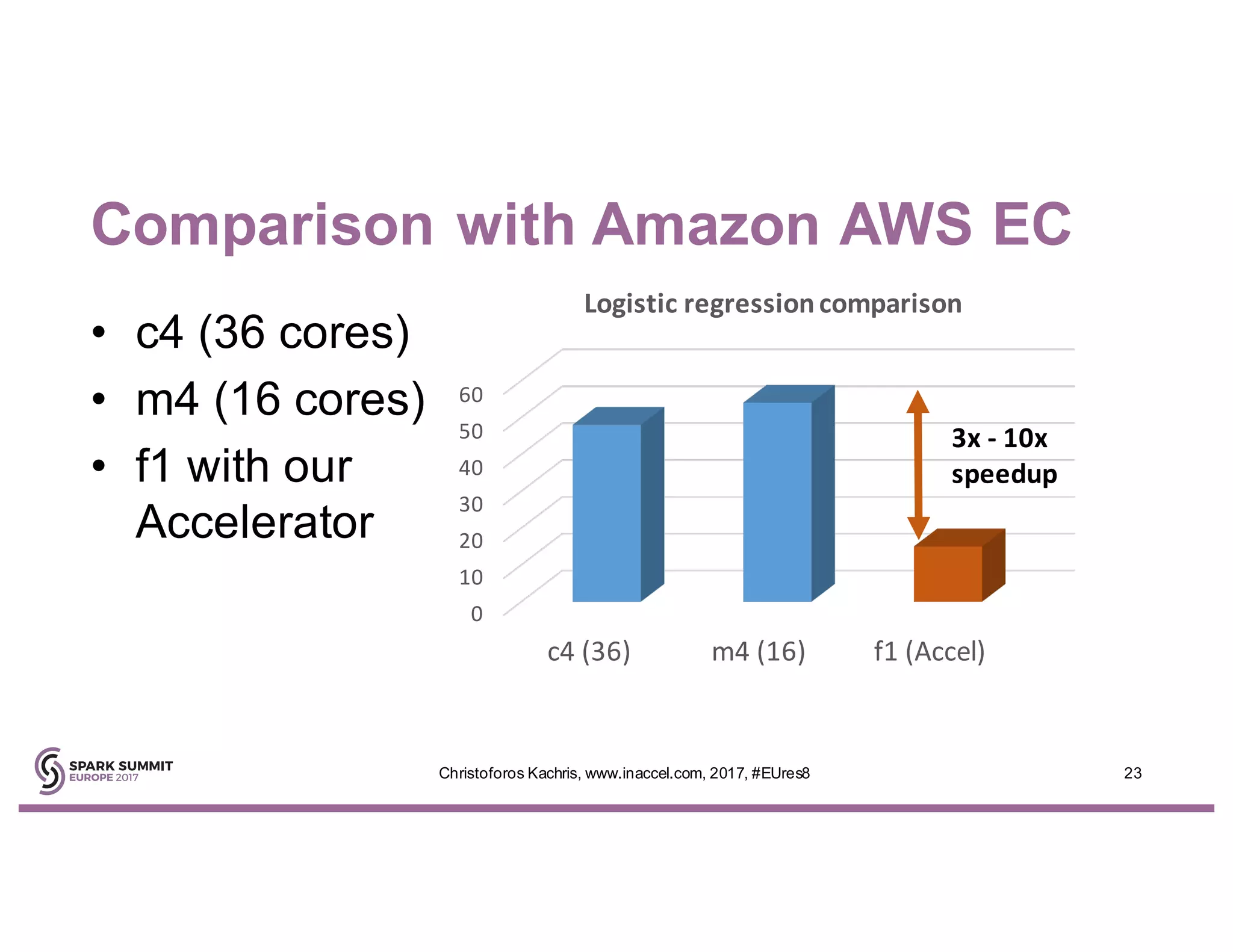

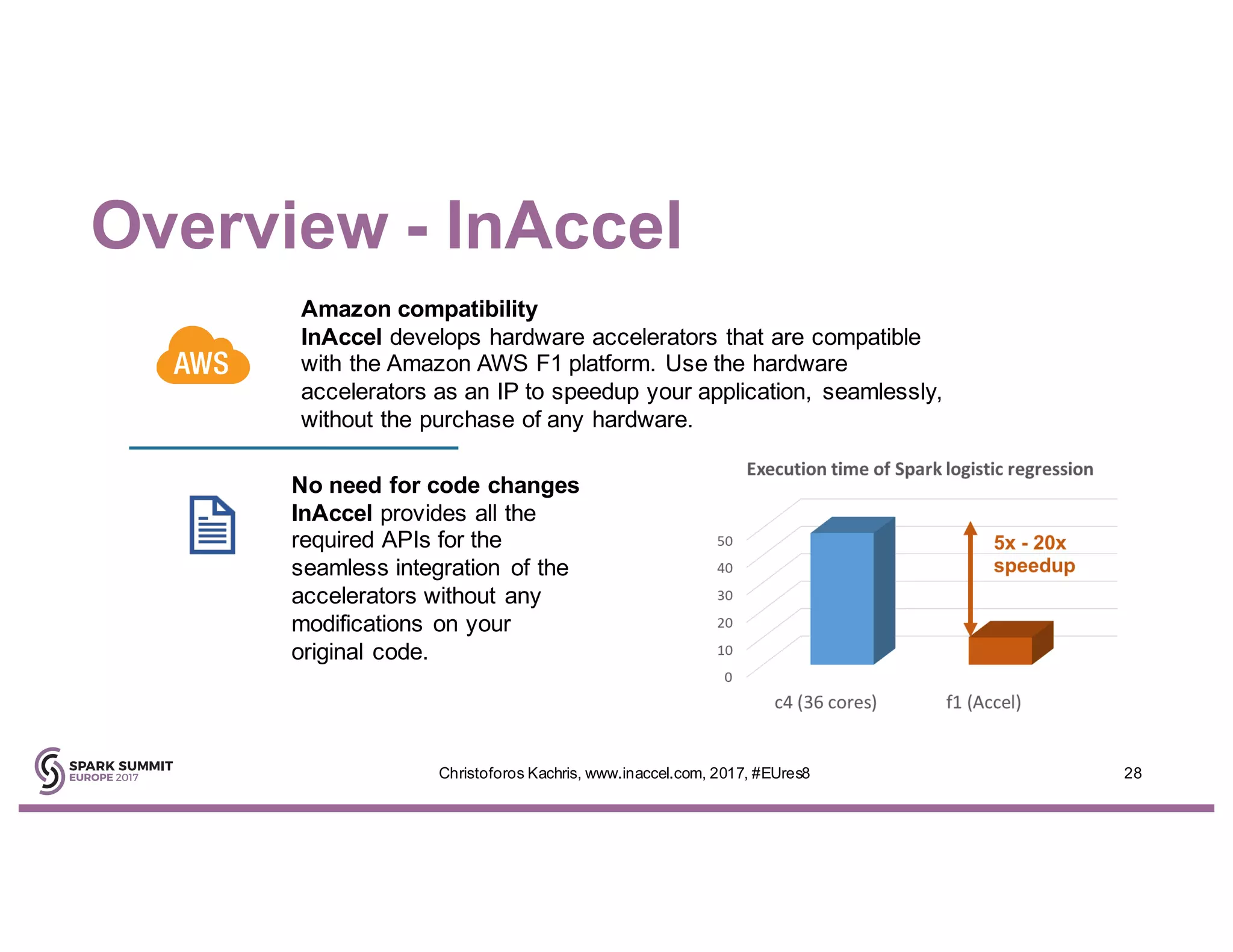

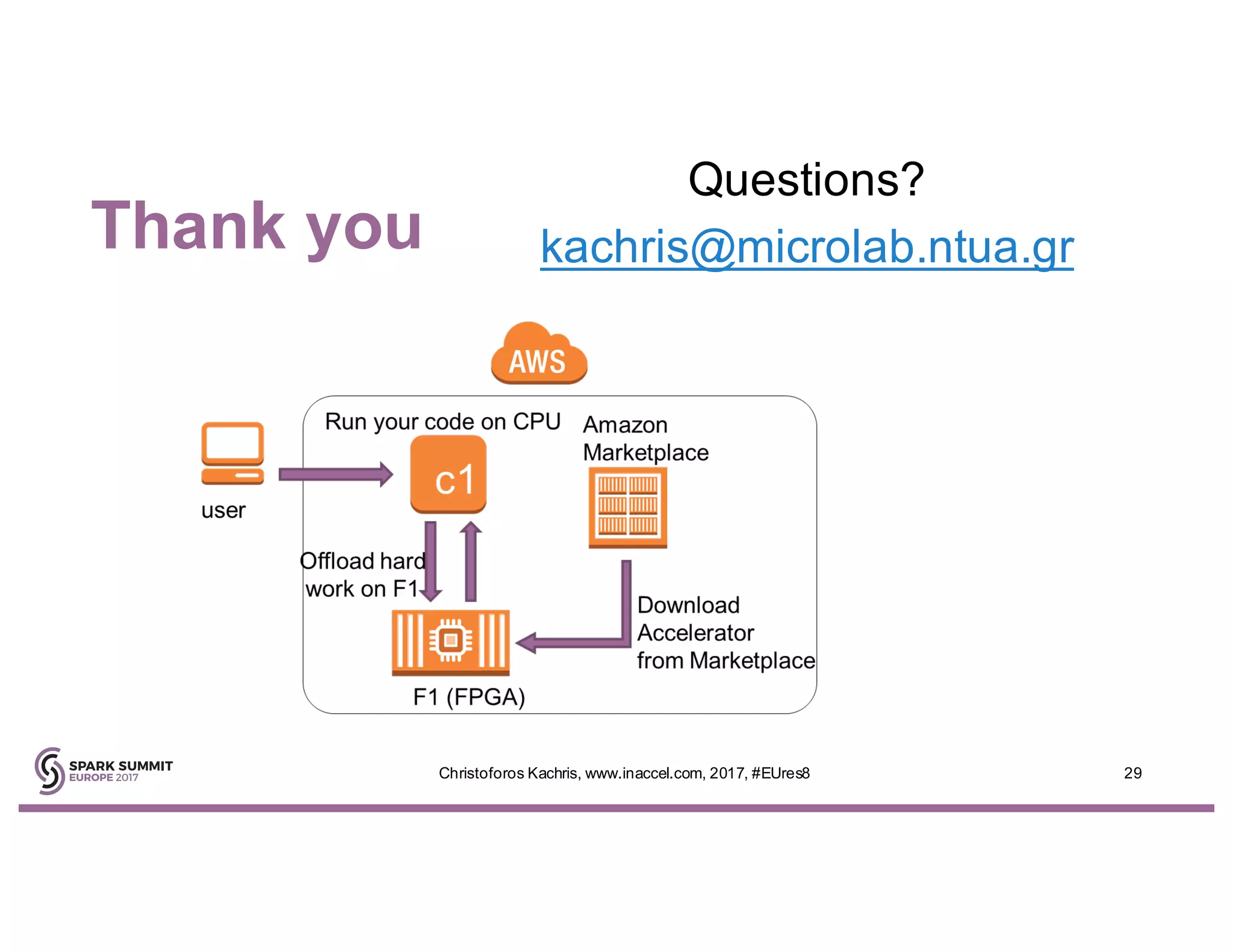

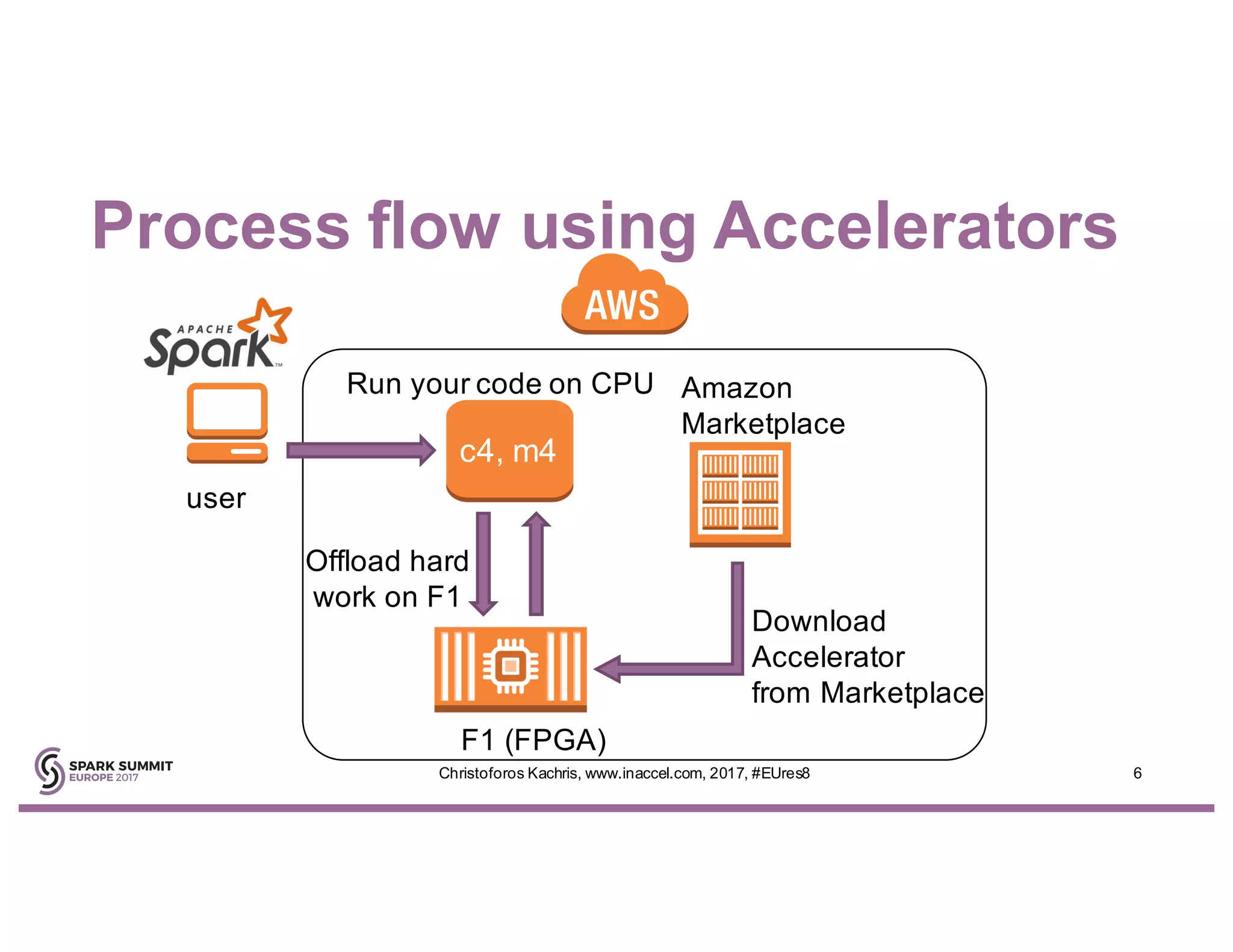

This presentation describes how FPGA accelerators can speed up Apache Spark applications in the cloud, focusing on machine learning workloads such as logistic regression and digit recognition. It argues that pairing CPUs with FPGAs gives better efficiency and speedup than CPU-only setups, and reports significant performance gains. InAccel's hardware accelerators integrate with Apache Spark and Amazon AWS, so users can accelerate their applications without extensive code modifications.
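To make the logistic regression case concrete, here is a minimal sketch of the map/reduce structure of one gradient-descent step. It uses plain Python lists to stand in for Spark partitions and calls no Spark or InAccel API. The function names (`partial_gradient`, `gradient_step`) are hypothetical, and the idea that the per-partition gradient is the part worth offloading is an assumption for illustration, not something the summary states.

```python
import math
from functools import reduce
from typing import List, Sequence, Tuple

# One training point: (features, label), with label in {0.0, 1.0}.
Point = Tuple[Sequence[float], float]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def partial_gradient(partition: Sequence[Point], weights: Sequence[float]) -> List[float]:
    """Sum of per-point gradients for one partition.

    Assumption: this dense, regular loop is the kind of kernel an
    accelerator could take over; the rest stays on the CPU.
    """
    grad = [0.0] * len(weights)
    for features, label in partition:
        prediction = sigmoid(sum(w * x for w, x in zip(weights, features)))
        error = prediction - label
        for j, x in enumerate(features):
            grad[j] += error * x
    return grad


def add_vectors(a: Sequence[float], b: Sequence[float]) -> List[float]:
    return [x + y for x, y in zip(a, b)]


def gradient_step(partitions: Sequence[Sequence[Point]],
                  weights: Sequence[float],
                  learning_rate: float) -> List[float]:
    """One batch gradient-descent step over all partitions."""
    partials = [partial_gradient(p, weights) for p in partitions]  # "map"
    total = reduce(add_vectors, partials)                          # "reduce"
    n = sum(len(p) for p in partitions)
    return [w - learning_rate * g / n for w, g in zip(weights, total)]


if __name__ == "__main__":
    # Toy data, two partitions of one point each.
    toy = [[([1.0, 0.0], 1.0)], [([1.0, 1.0], 0.0)]]
    print(gradient_step(toy, [0.0, 0.0], learning_rate=1.0))
```

In this shape, swapping the body of `partial_gradient` for a call into an accelerator would leave the surrounding map and reduce logic unchanged, which is one way "no extensive code modifications" could play out.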

![Flexibility versus Performance

Christoforos Kachris, www.inaccel.com, 2017, #EUres8

CPU: High flexibility, lower efficiency

Accelerators: High throughput, higher efficiency

GPUs and FPGAs can provide massive parallelism and higher efficiency than CPUs for certain categories of applications

[Source: Amazon AWS f1]](https://image.slidesharecdn.com/l28christoforoskachris-171031223004/75/Hardware-Acceleration-of-Apache-Spark-on-Energy-Efficient-FPGAs-with-Christoforos-Kachris-4-2048.jpg)

![Hardware Acceleration

5Christoforos Kachris, www.inaccel.com, 2017, #EUres8

module fi lter1 ( clock, rst, st rm_in, out)

for (i =0; i<N UMUNITS ; i=i+1 )

always @(posed ge cloc k)

intege r i,j; //index for lo ops

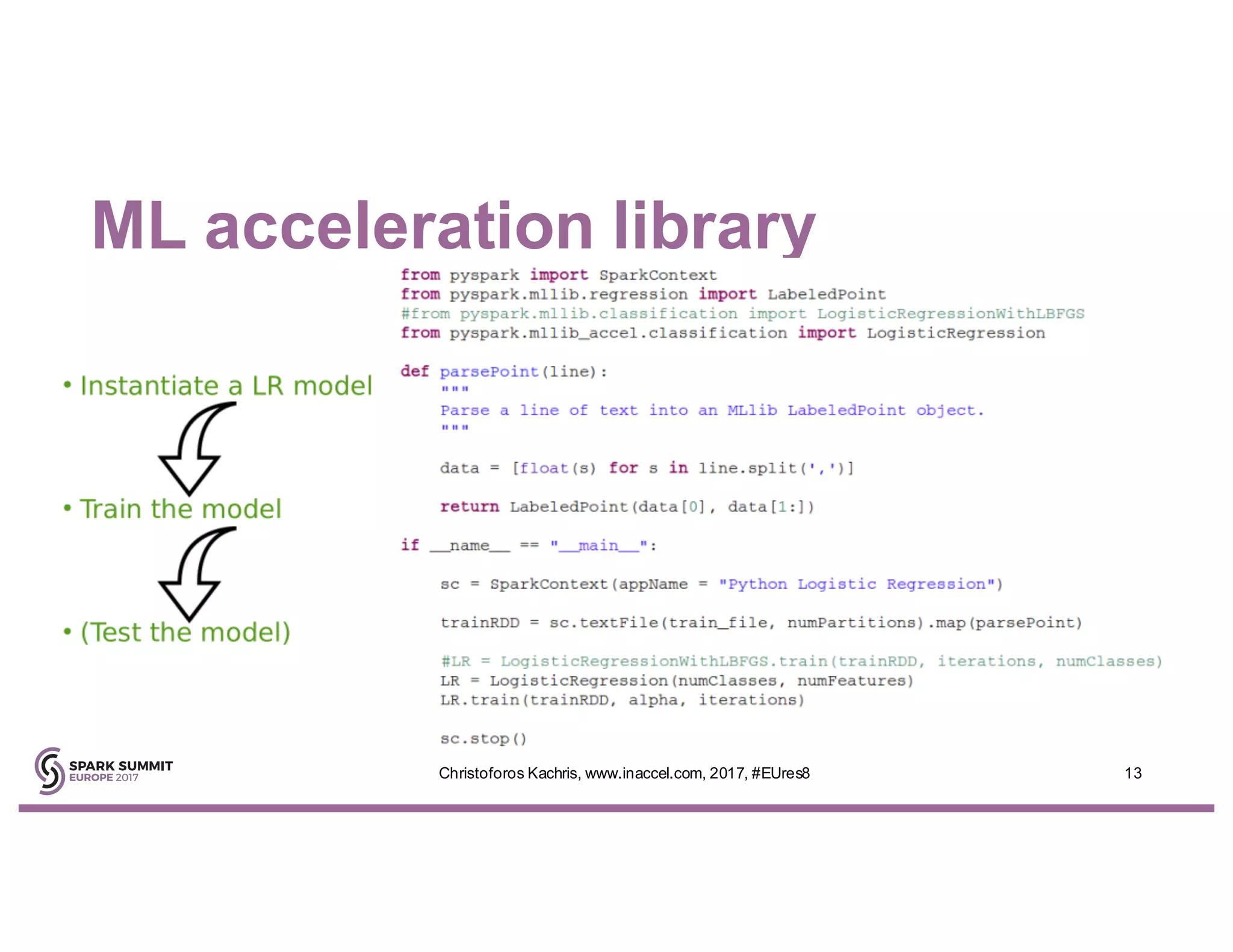

tm p_kerne l[j] = k[i*OFF SETX];

FPGA handles compute-

intensive, deeply pipelined,

hardware-accelerated

operations

CPU handles the rest

application

[Source: Amazon AWS f1]](https://image.slidesharecdn.com/l28christoforoskachris-171031223004/75/Hardware-Acceleration-of-Apache-Spark-on-Energy-Efficient-FPGAs-with-Christoforos-Kachris-5-2048.jpg)
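The split described on this slide (FPGA for the compute-intensive part, CPU for the rest) bounds the end-to-end gain according to Amdahl's law. The sketch below is a worked illustration of that formula only. The function name and the input values are made up for the example and are not measurements from the talk.

```python
def overall_speedup(offloaded_fraction: float, kernel_speedup: float) -> float:
    """Amdahl's law: end-to-end speedup when only part of the runtime is accelerated.

    offloaded_fraction: share of the original runtime moved to the FPGA (0..1).
    kernel_speedup: how much faster that share runs on the FPGA.
    """
    if not 0.0 <= offloaded_fraction <= 1.0:
        raise ValueError("offloaded_fraction must be between 0 and 1")
    if kernel_speedup <= 0.0:
        raise ValueError("kernel_speedup must be positive")
    cpu_part = 1.0 - offloaded_fraction               # runtime that stays on the CPU
    fpga_part = offloaded_fraction / kernel_speedup   # accelerated runtime
    return 1.0 / (cpu_part + fpga_part)


if __name__ == "__main__":
    # Illustrative inputs only: a hypothetical 10x kernel speedup.
    for fraction in (0.5, 0.9, 0.99):
        print(f"offloaded={fraction:.2f} -> {overall_speedup(fraction, 10.0):.2f}x")
```

The takeaway is that the CPU-side remainder dominates quickly: the larger the share of runtime that the FPGA kernel covers, the closer the application gets to the kernel's own speedup.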

![Amazon Resources

Amazon Machine Image (AMI)

Amazon FPGA Image (AFI)

EC2 F1 Instance

CPU Application on F1

DDR-4 Attached Memory (label repeated eight times in the diagram)

FPGA Link

PCIe

DDR Controllers

Launch Instance and Load AFI

[Source: Amazon AWS f1]](https://image.slidesharecdn.com/l28christoforoskachris-171031223004/75/Hardware-Acceleration-of-Apache-Spark-on-Energy-Efficient-FPGAs-with-Christoforos-Kachris-7-2048.jpg)
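The "Launch Instance and Load AFI" step can be sketched as follows. The slide does not name any tooling, so this assumes the FPGA management tools from the AWS FPGA SDK (`fpga-load-local-image`, `fpga-describe-local-image`) are installed on the F1 instance. The helper names are hypothetical, the AFI id is a placeholder, and the code only builds the command lines without running them.

```python
import shlex
from typing import List


def load_afi_command(agfi_id: str, slot: int = 0) -> List[str]:
    """Build the argv that loads an AFI into an FPGA slot on an F1 instance."""
    if not agfi_id.startswith("agfi-"):
        raise ValueError("expected a global AFI id starting with 'agfi-'")
    if slot < 0:
        raise ValueError("slot must be non-negative")
    return ["sudo", "fpga-load-local-image", "-S", str(slot), "-I", agfi_id]


def describe_slot_command(slot: int = 0) -> List[str]:
    """Build the argv that reports which AFI is loaded in a slot."""
    if slot < 0:
        raise ValueError("slot must be non-negative")
    return ["sudo", "fpga-describe-local-image", "-S", str(slot), "-H"]


if __name__ == "__main__":
    placeholder_id = "agfi-0123456789abcdef0"  # hypothetical id, not a real image
    print(shlex.join(load_afi_command(placeholder_id)))
    print(shlex.join(describe_slot_command()))
```

On a real instance the resulting lists could be passed to `subprocess.run`; they are kept as plain data here so the sketch runs anywhere.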

![FPGAs in Amazon AWS

Amazon EC2 FPGA Deployment via Marketplace

Amazon Machine Image (AMI)

Amazon FPGA Image (AFI)

AFI is secured, encrypted, dynamically loaded into the FPGA - can’t be copied or downloaded

Customers

AWS Marketplace

[Source: Amazon AWS f1]](https://image.slidesharecdn.com/l28christoforoskachris-171031223004/75/Hardware-Acceleration-of-Apache-Spark-on-Energy-Efficient-FPGAs-with-Christoforos-Kachris-8-2048.jpg)