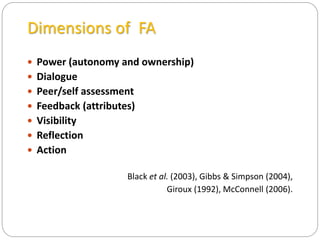

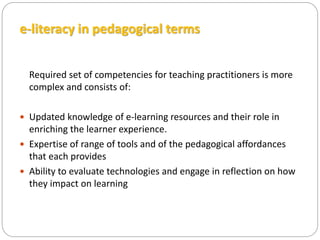

The seminar presents a conceptual model for formative assessment in open and distance learning (ODL), emphasizing how learning technologies and dialogue can be integrated to improve student engagement. It examines different types of assessment, the purposes they serve, and how technology affects the ways feedback is given and received, highlighting both the challenges involved and the diversity of practice across institutions. The findings suggest that effective formative assessment can enhance e-learning by making feedback central to the learning process and empowering students to take charge of their own learning.