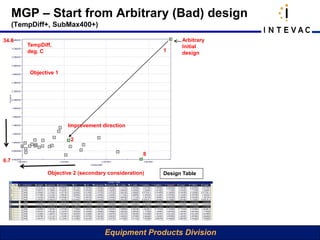

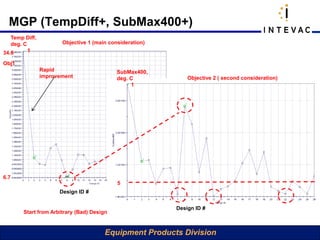

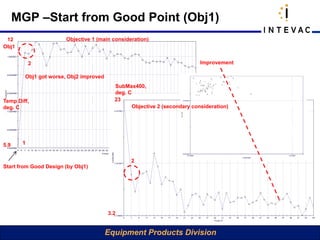

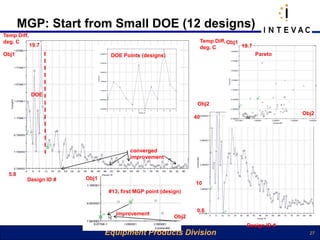

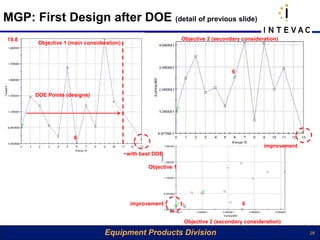

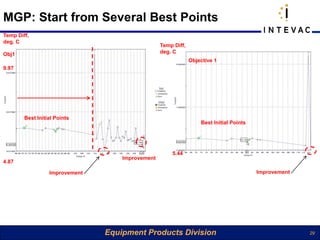

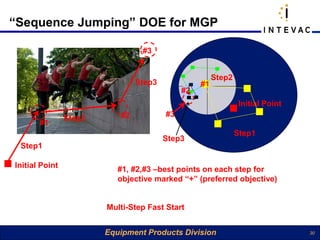

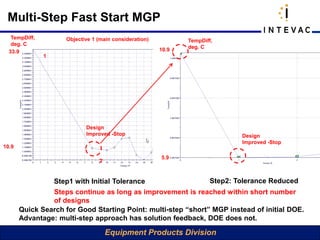

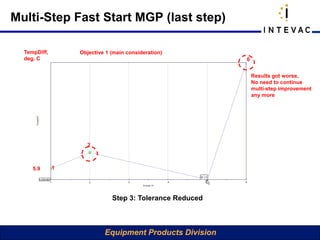

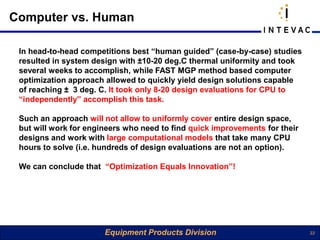

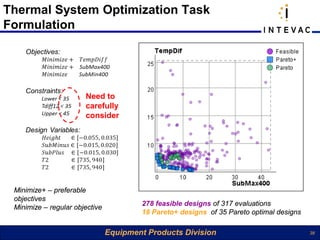

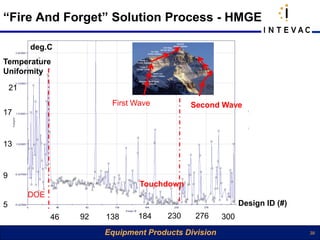

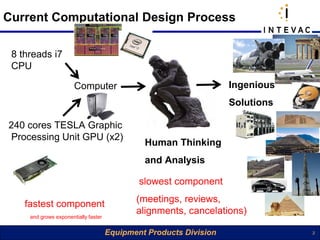

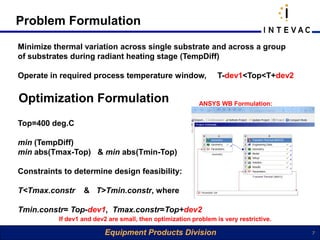

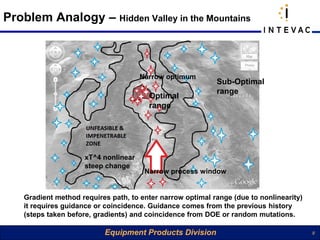

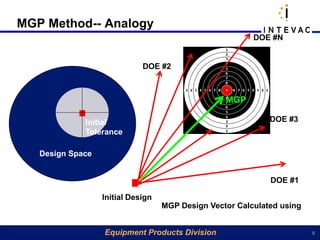

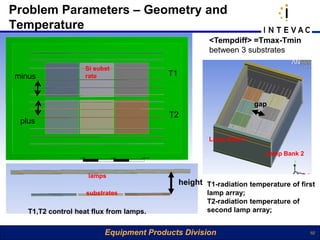

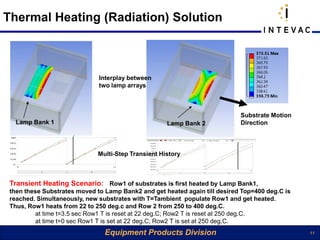

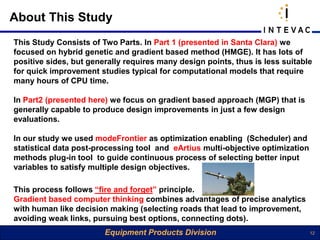

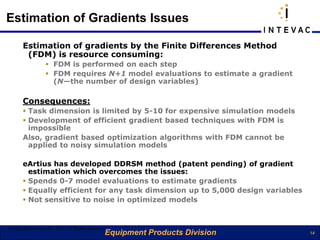

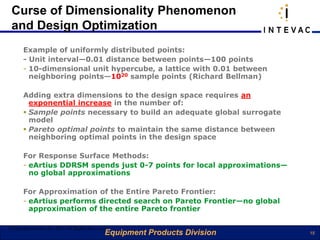

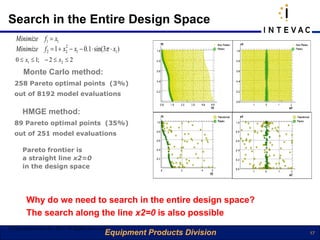

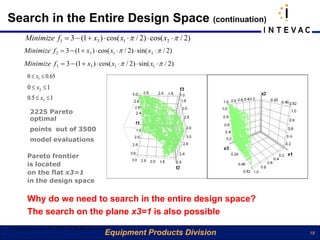

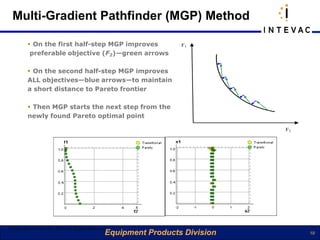

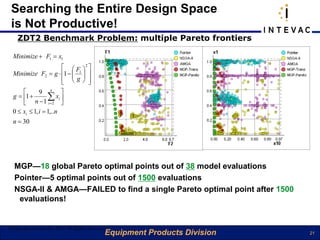

The document presents a fast design optimization strategy for radiative heat transfer based on a gradient-based method that performs well under tight CPU time constraints. It shows that the Multi-Gradient Pathfinder (MGP) method produces design improvements efficiently, and it points out the limitations of traditional multi-objective optimization algorithms, namely low computational efficiency and the curse of dimensionality. The study also shows that computer optimization outperforms human-guided design, achieving better results in fewer design evaluations.

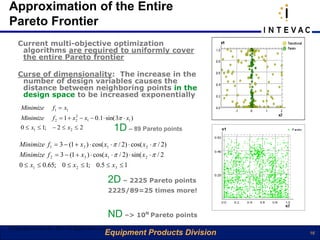

![Searching the Entire Design Space

is Not Productive!

g = 1 + 10 ⋅ (n − 1) + ( x2 + x3 + ... + xn ) − 10 ⋅ [cos(4πx2 ) + cos(4πx3 ) + ... + cos(4πxn )], n = 10

2 2 2

h = 1 − F1 / g − (F1 / g ) ⋅ sin(10πF1 ); [ X ] ∈ [0;1]

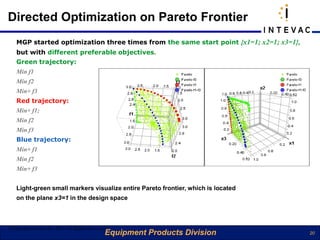

Minimize + F1 = x1 MGP spent 185 evaluations, and found exact solutions

Minimize F2 = g ⋅ h Pointer, NSGA-II, AMGA spent 2000 evaluations each, and failed

©Copyright eArtius Inc 2011 All Rights Reserved

Equipment Products Division 22](https://image.slidesharecdn.com/fastoptimizationintevacoct63final-13178794989585-phpapp01-111006004359-phpapp01/85/Fast-Optimization-Intevac-22-320.jpg)
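
The benchmark on this slide can be written down in a few lines, which makes it easier to see why a blind search over all ten variables is expensive: the cosine sum in g creates many local minima in x2..xn. The following is a minimal sketch of the two objectives as they are transcribed on the slide. The function names (`g`, `h`, `objectives`) are illustrative and not taken from the slides, and h is coded exactly as transcribed; if the original slide had a square root over the first F1/g term (as the standard ZDT3 benchmark does), only the marked line would change.

```python
import math


def g(x):
    """g term from the slide; depends only on x2..xn (x[1:] here)."""
    n = len(x)
    tail = x[1:]
    squares = sum(v * v for v in tail)
    cosines = sum(math.cos(4 * math.pi * v) for v in tail)
    return 1 + 10 * (n - 1) + squares - 10 * cosines


def h(f1, g_val):
    """h term as transcribed on the slide."""
    ratio = f1 / g_val
    # Assumption: no square root on the first ratio term, following the
    # transcription. With a square root this would be math.sqrt(ratio).
    return 1 - ratio - ratio * math.sin(10 * math.pi * f1)


def objectives(x):
    """Return (F1, F2) for a design vector x with every x_i in [0, 1]."""
    f1 = x[0]
    g_val = g(x)
    return f1, g_val * h(f1, g_val)


if __name__ == "__main__":
    # With x2..xn = 0 the g term collapses to 1, so F2 reduces to h(F1, 1).
    print(objectives([0.0] * 10))
    print(objectives([0.5] + [0.0] * 9))
```

Setting x2..xn to zero gives g = 1, so along that slice only x1 changes the trade-off between F1 and F2.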