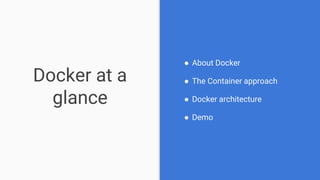

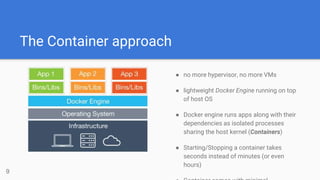

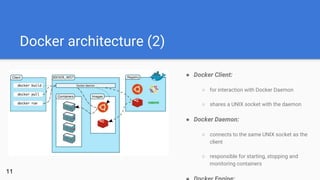

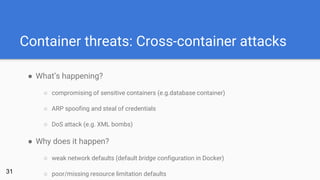

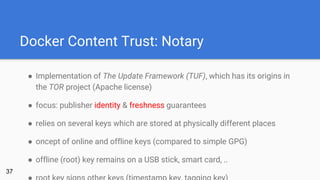

The document explores the security aspects of Docker containers compared to traditional virtual machines, highlighting Docker's lightweight architecture and isolation mechanisms. It addresses concerns regarding container security, discussing user namespaces, capabilities, and mandatory access control as potential solutions to vulnerabilities. Ultimately, it raises questions about the future of Docker's security and the inherent risks tied to its reliance on the host operating system's security features.
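Two of the mechanisms named above can be observed from inside a container through `/proc`. The `CapEff` line of `/proc/self/status` is a hexadecimal capability bitmask, and `/proc/self/uid_map` lists the user namespace ID mapping as `inside outside length` triples. The Python sketch below decodes both. It rests on a few assumptions. The name table is a partial excerpt of the bit numbers in `<linux/capability.h>`. `0xa80425fb` is the mask commonly observed for Docker's default capability set, although the exact set may differ between Docker versions. The mapping `0 100000 65536` is a hypothetical but typical `userns-remap` range. The helper names `decode_caps` and `map_id` are illustrative only.

```python
"""Sketch: decode two isolation mechanisms visible in /proc (illustrative helpers)."""
from typing import Optional, Set

# Partial excerpt of capability bit numbers from <linux/capability.h>.
CAP_NAMES = {
    0: "CAP_CHOWN",
    1: "CAP_DAC_OVERRIDE",
    2: "CAP_DAC_READ_SEARCH",
    3: "CAP_FOWNER",
    4: "CAP_FSETID",
    5: "CAP_KILL",
    6: "CAP_SETGID",
    7: "CAP_SETUID",
    8: "CAP_SETPCAP",
    10: "CAP_NET_BIND_SERVICE",
    12: "CAP_NET_ADMIN",
    13: "CAP_NET_RAW",
    18: "CAP_SYS_CHROOT",
    19: "CAP_SYS_PTRACE",
    21: "CAP_SYS_ADMIN",
    27: "CAP_MKNOD",
    29: "CAP_AUDIT_WRITE",
    31: "CAP_SETFCAP",
}


def decode_caps(mask: int) -> Set[str]:
    """Return the names of the capability bits set in `mask` (e.g. CapEff)."""
    return {
        # Bits missing from the partial table are reported by number.
        CAP_NAMES.get(bit, f"CAP_#{bit}")
        for bit in range(mask.bit_length())
        if (mask >> bit) & 1
    }


def map_id(uid_map: str, inside_id: int) -> Optional[int]:
    """Translate an ID inside a user namespace to the corresponding host ID.

    Each line of /proc/<pid>/uid_map holds three fields:
    first ID inside the namespace, first ID outside, length of the range.
    """
    for line in uid_map.strip().splitlines():
        inside_start, outside_start, length = map(int, line.split())
        if inside_start <= inside_id < inside_start + length:
            return outside_start + (inside_id - inside_start)
    # The ID has no mapping in this namespace.
    return None


if __name__ == "__main__":
    # Mask commonly observed with Docker's default capability set (assumption).
    print(sorted(decode_caps(0xA80425FB)))
    # Hypothetical userns-remap range: container UID 0 maps to host UID 100000.
    print(map_id("0 100000 65536", 0))  # 100000
```

With such a mapping, a process running as root inside the container holds only an unprivileged UID on the host, which is the protection user namespaces are meant to add. Dropping a capability with `docker run --cap-drop` clears the corresponding bit in the mask.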

![Docker CLI AuthN/AuthZ: [This page is intentionally left blank]. Ok, there’s still TLS ..](https://image.slidesharecdn.com/dockersecurity-161023114852/85/Exploring-Docker-Security-33-320.jpg)
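The TLS the slide refers to is Docker's documented way to protect a remote daemon socket: a daemon started with `--tlsverify` accepts only clients that present a certificate signed by its trusted CA, and the client in turn verifies the daemon. The Python sketch below builds such a mutually authenticated client context. It is an illustration rather than Docker's own implementation (the Docker CLI is written in Go). It assumes the conventional file names `ca.pem`, `cert.pem` and `key.pem` found under `DOCKER_CERT_PATH` or `~/.docker`, and the helper name `docker_tls_context` is hypothetical.

```python
"""Sketch: a mutual-TLS client context in the style of `docker --tlsverify`."""
import ssl
from pathlib import Path
from typing import Optional


def docker_tls_context(cert_dir: Optional[Path] = None) -> ssl.SSLContext:
    """Build a client SSLContext for talking to a TLS-protected Docker daemon.

    Assumes the conventional file names ca.pem, cert.pem and key.pem.
    If cert_dir is None, the system CA store is used and no client
    certificate is loaded (the daemon would then reject us under --tlsverify).
    """
    cafile = str(cert_dir / "ca.pem") if cert_dir else None
    # Verify the daemon's certificate chain and hostname against the CA.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=cafile)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_dir:
        # Client certificate and key that the daemon checks under --tlsverify.
        ctx.load_cert_chain(str(cert_dir / "cert.pem"), str(cert_dir / "key.pem"))
    return ctx
```

To use it, connect a socket to the daemon (port 2376 is the conventional TLS port) and wrap it with `ctx.wrap_socket(sock, server_hostname=...)`. Requiring `CERT_REQUIRED` and hostname checking on the client side matters as much as `--tlsverify` on the daemon side, since either half alone leaves the channel open to impersonation.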
