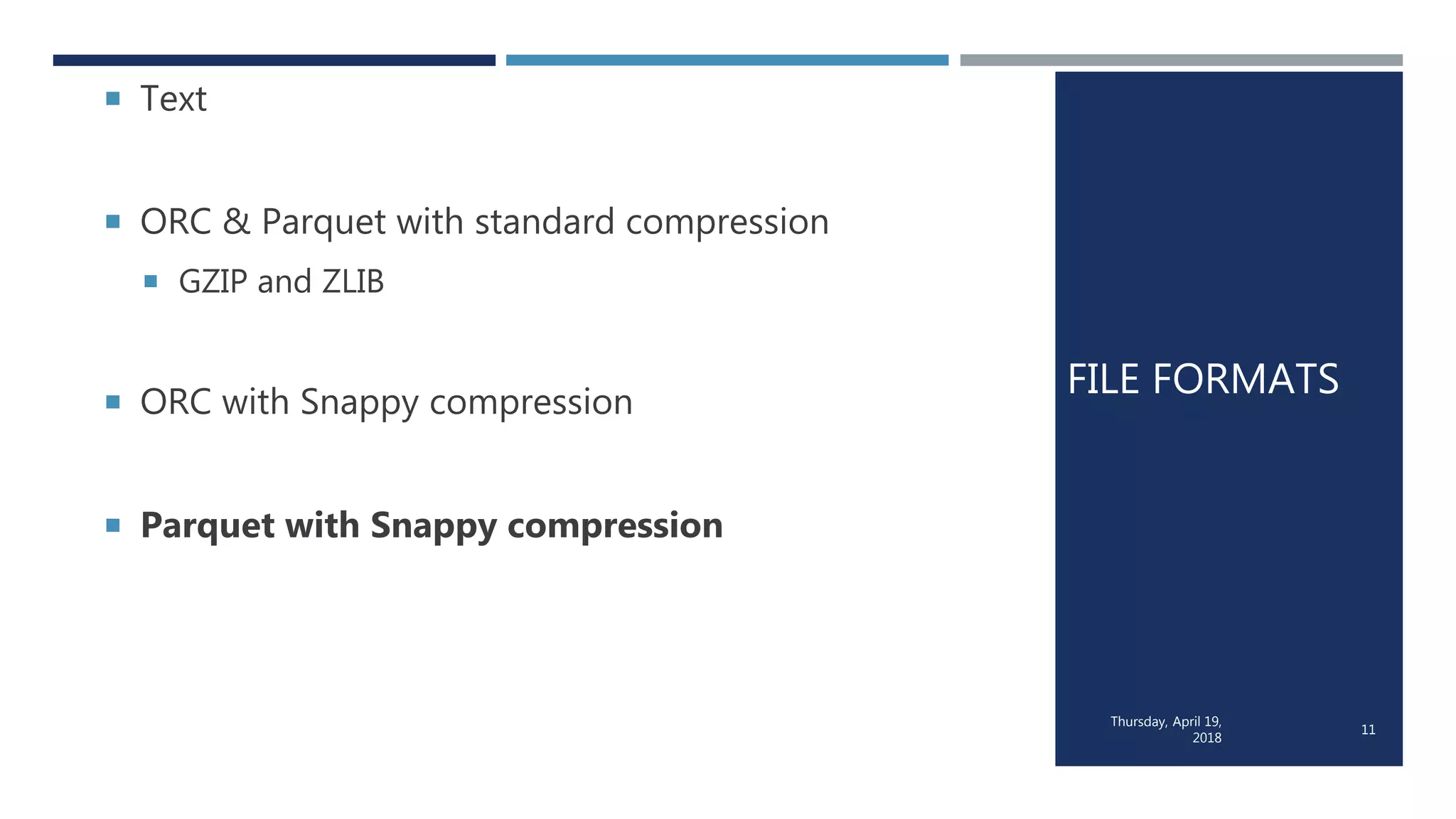

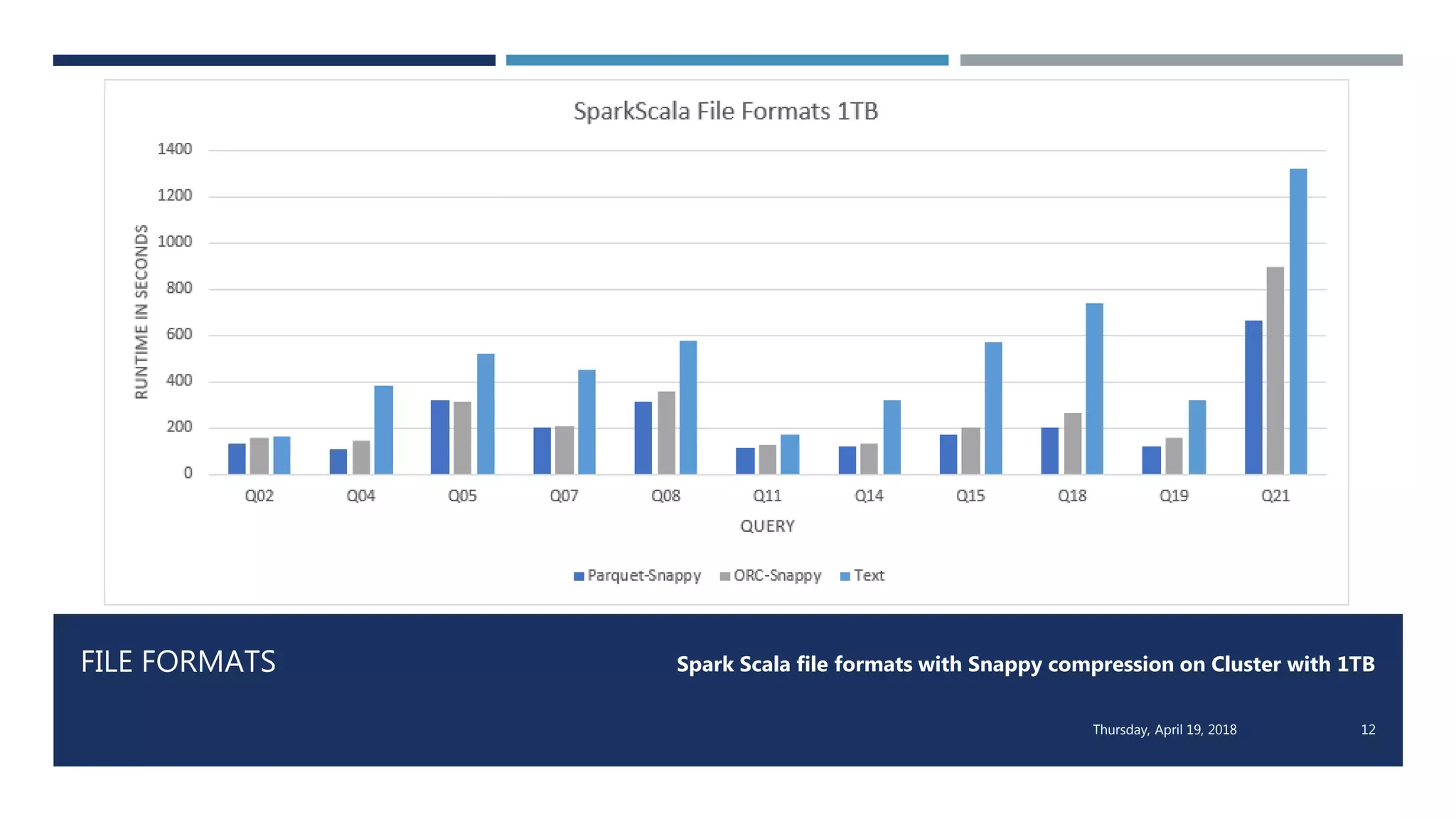

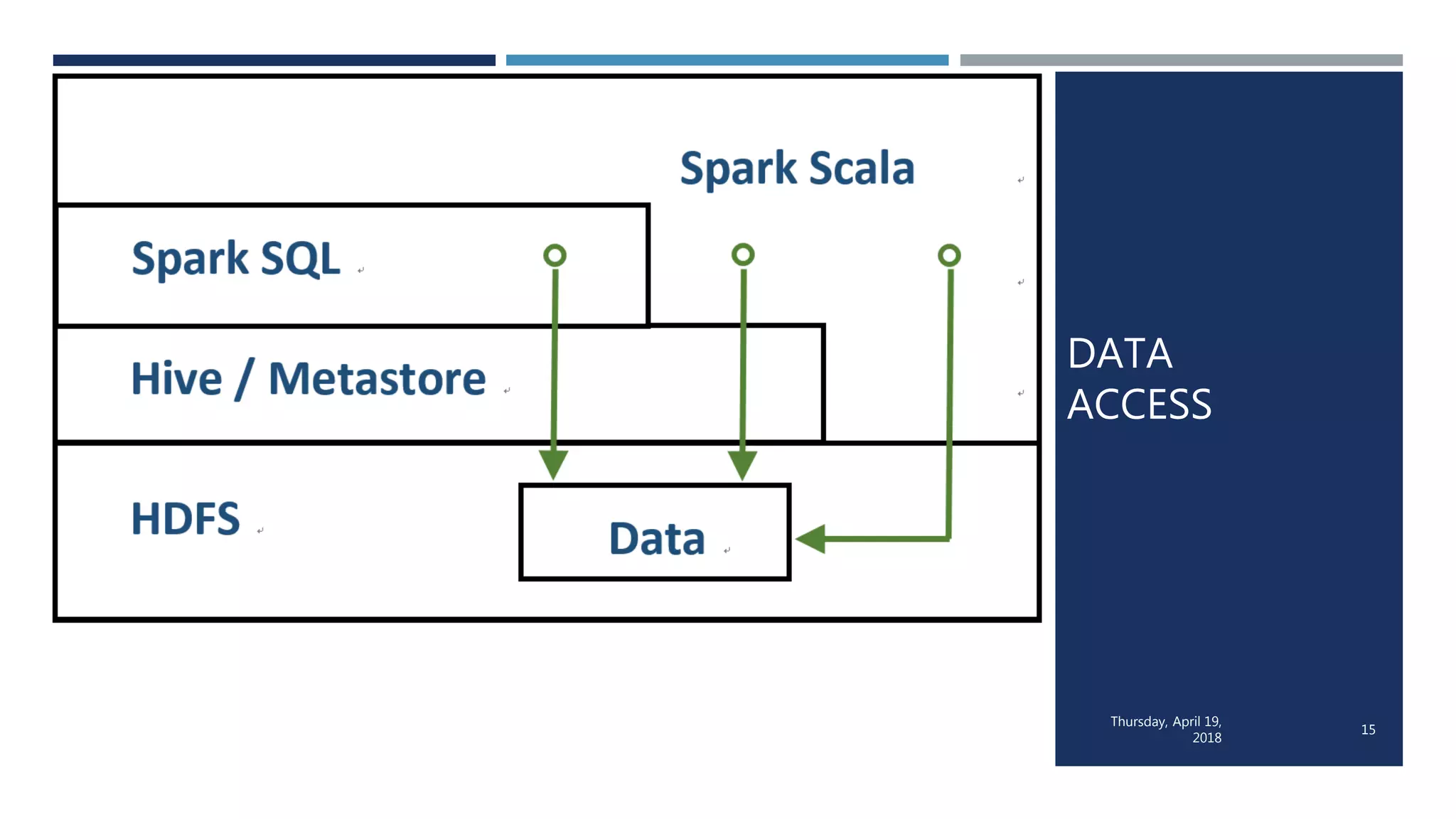

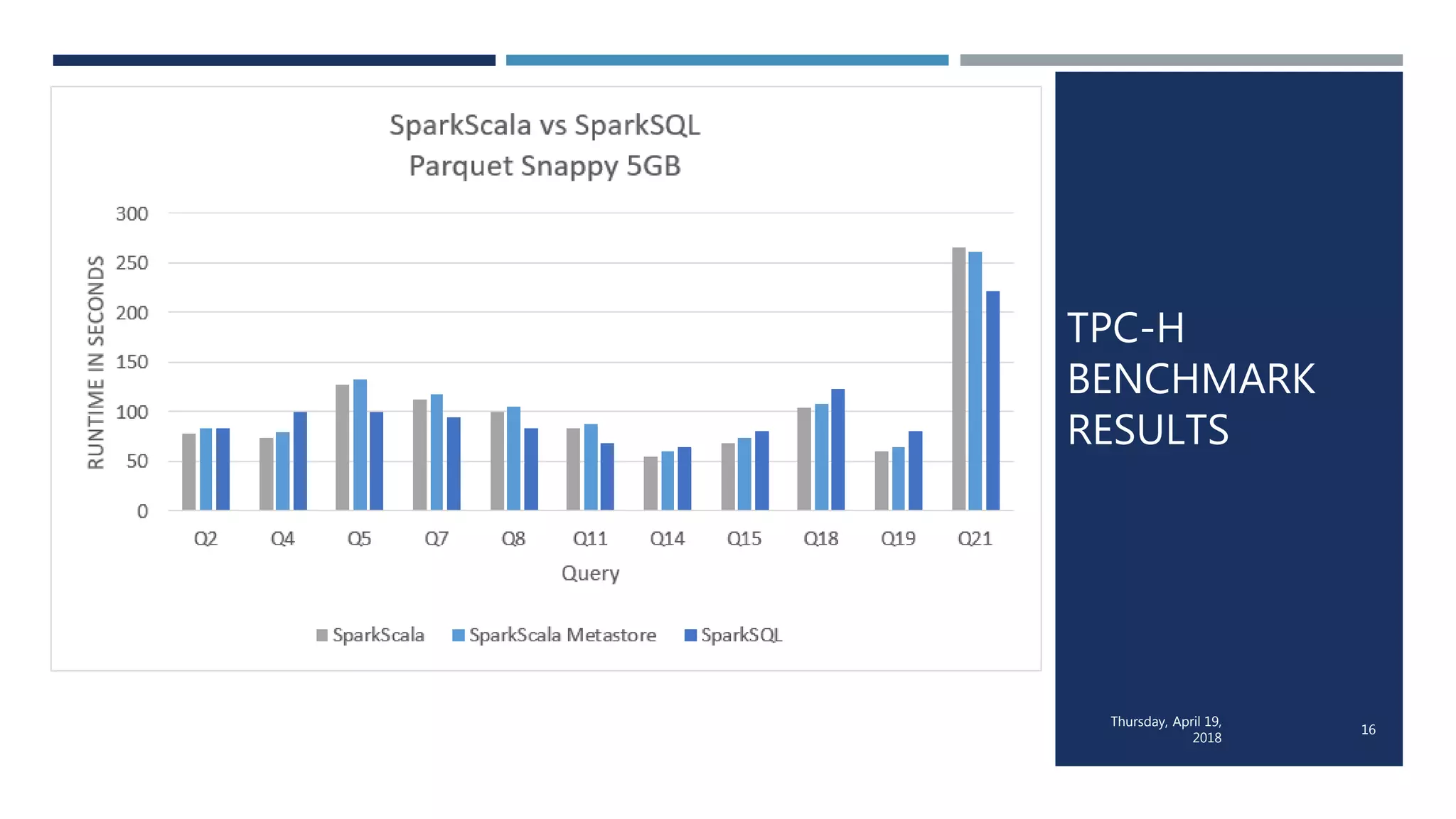

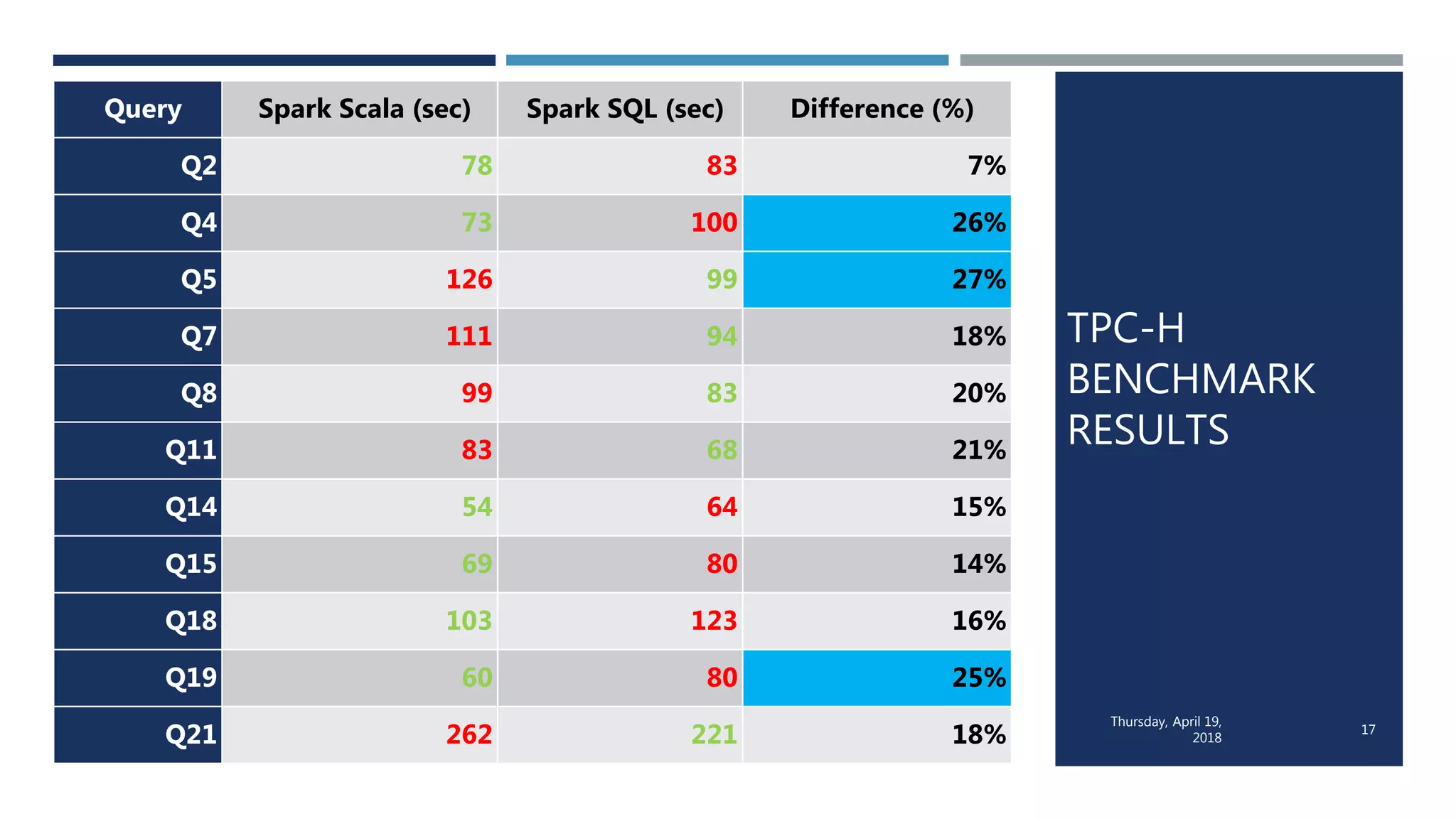

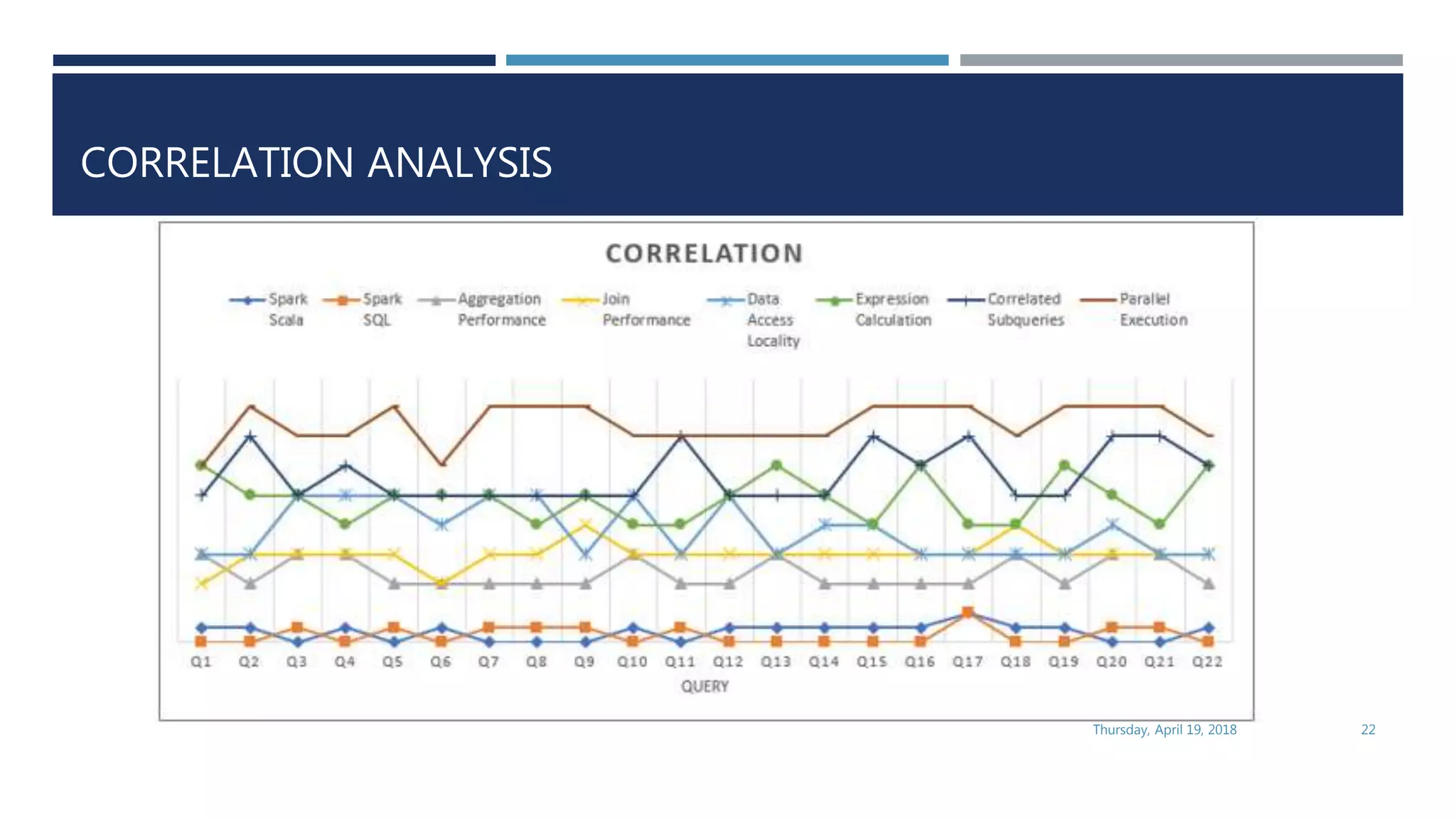

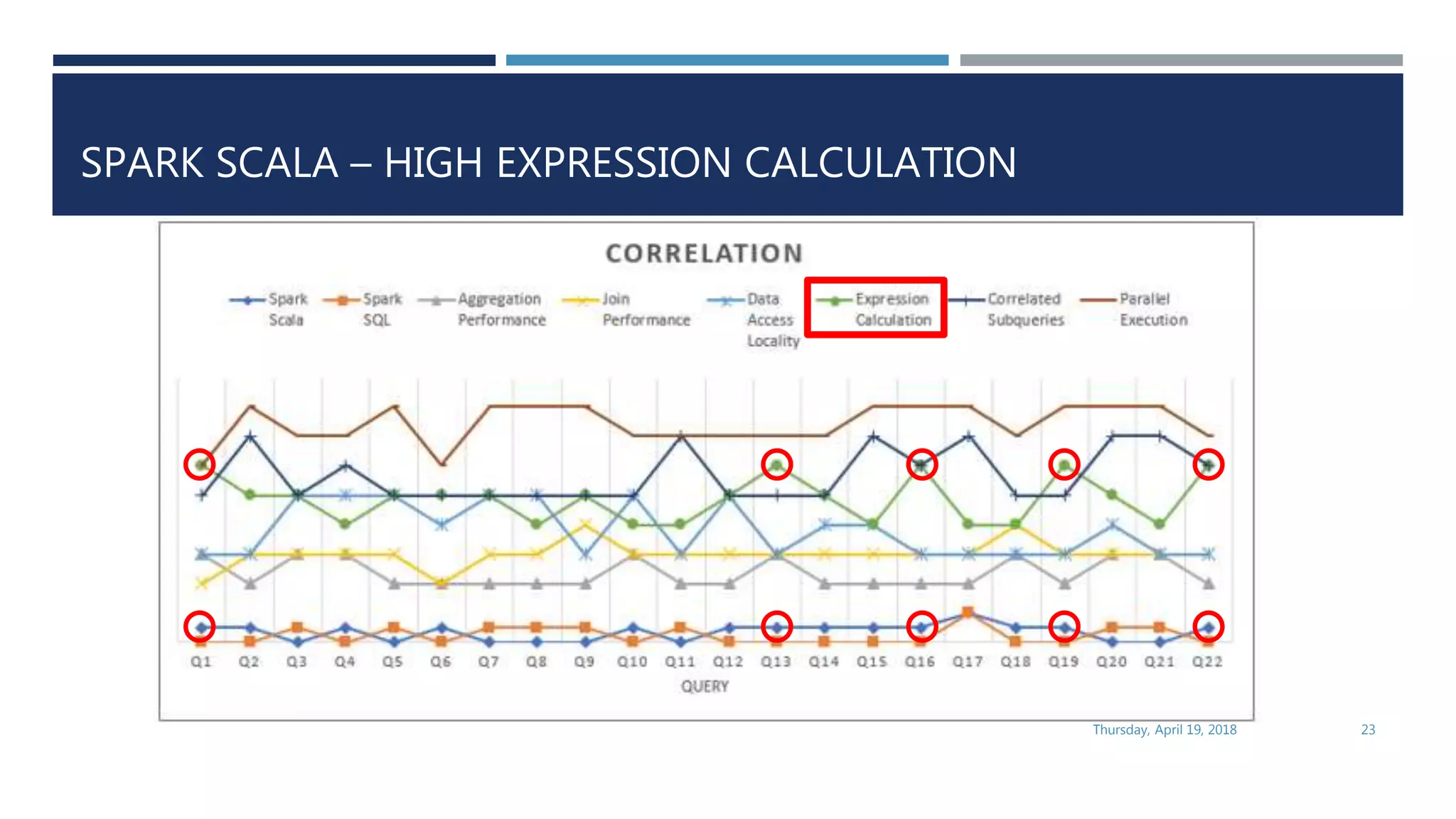

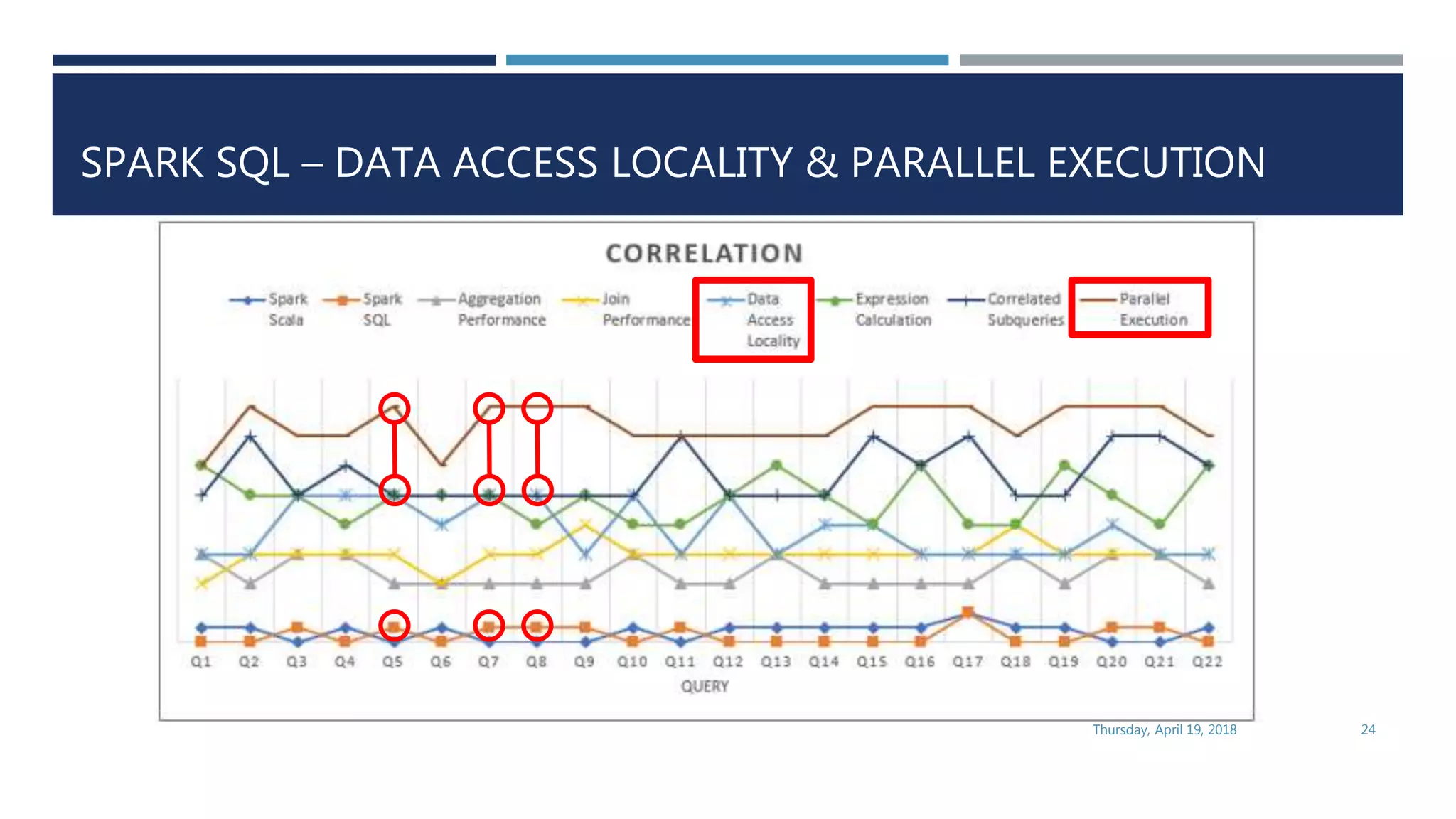

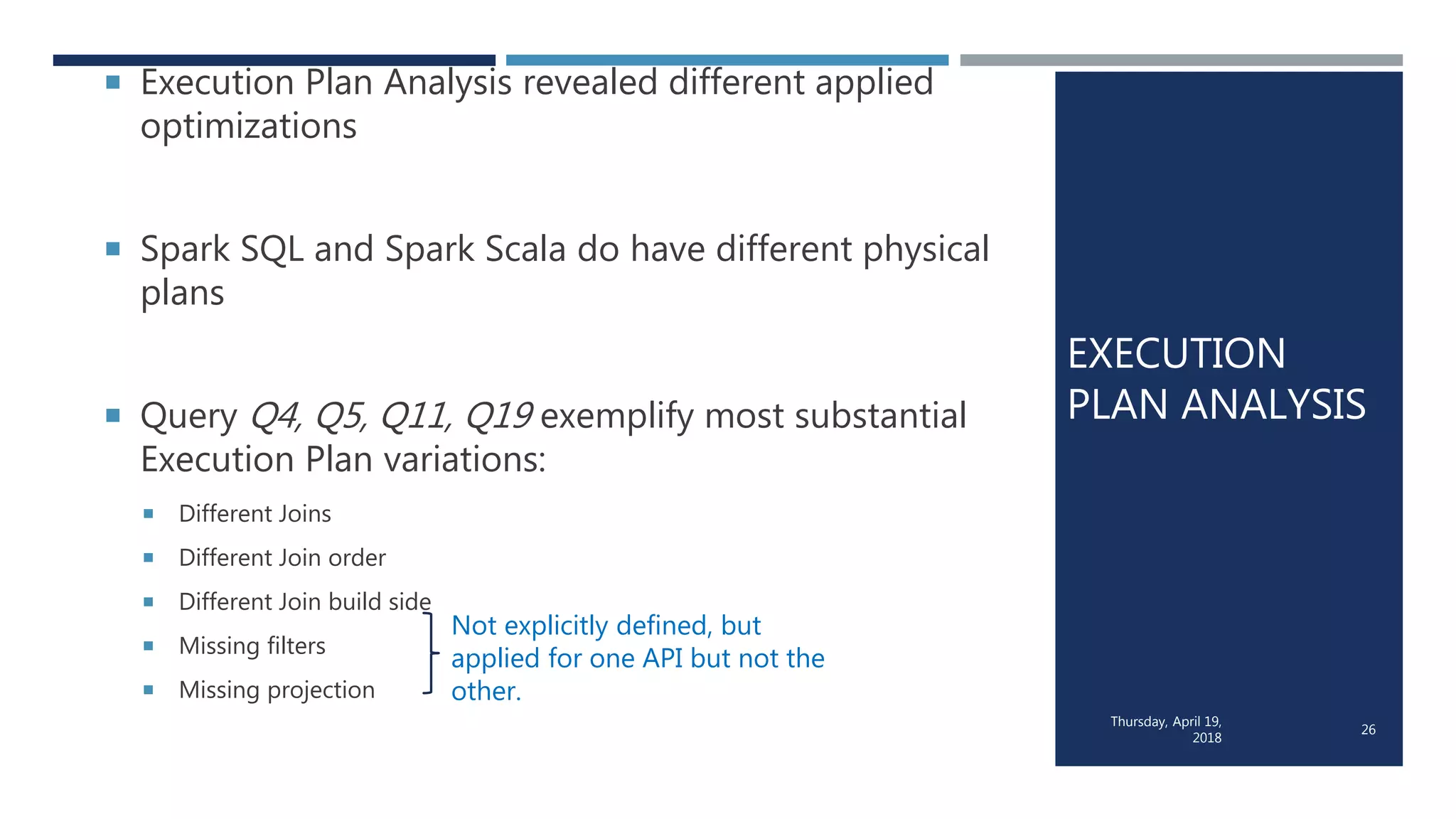

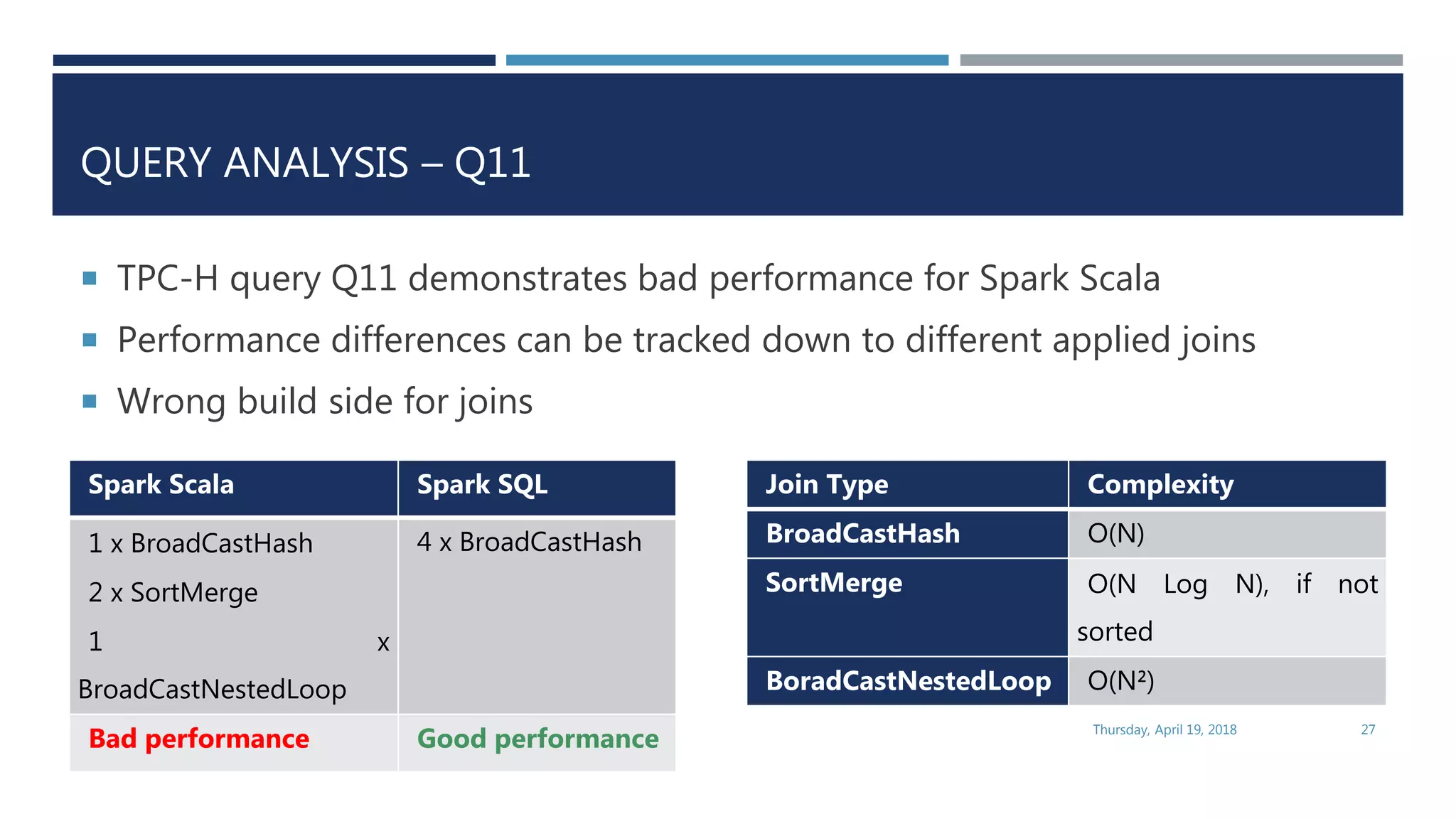

This document evaluates Apache Spark performance on the TPC-H benchmark, comparing Spark SQL with the Spark Scala API. The key findings are that switching APIs can improve performance by up to 30%, and that Parquet with Snappy compression offers the best performance. The Hive metastore adds some overhead and does not significantly improve performance.