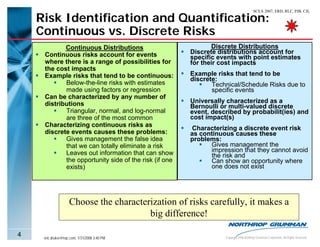

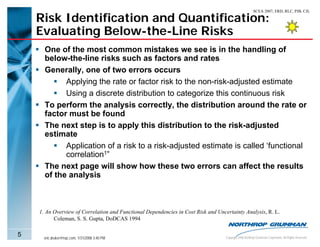

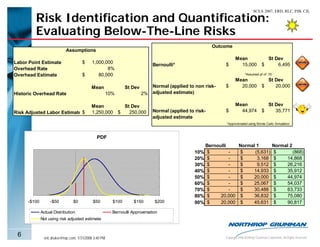

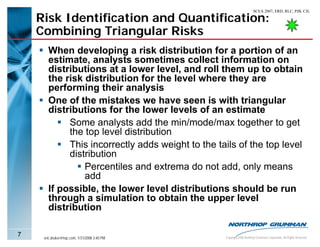

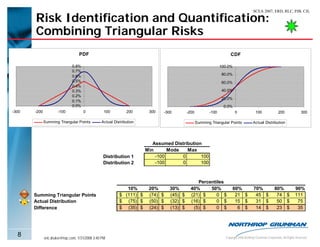

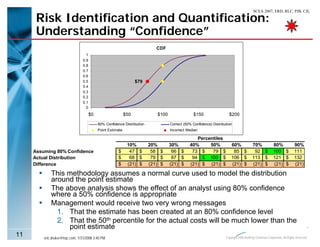

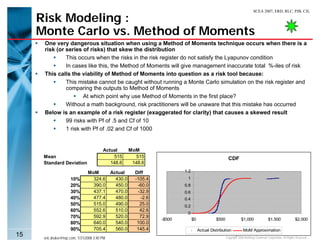

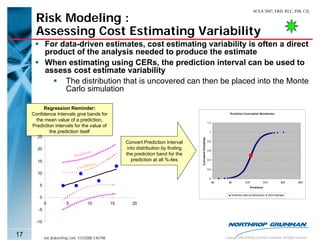

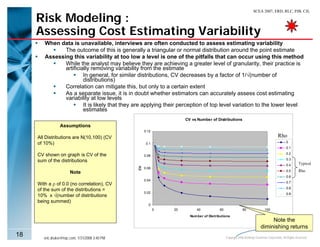

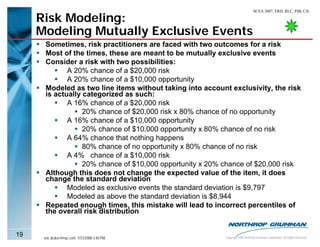

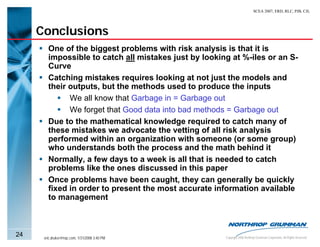

The document discusses common pitfalls in popular risk analysis methodologies. It identifies mistakes in risk identification and quantification, such as characterizing a risk as continuous when it is discrete, or vice versa. It also covers errors in modeling below-the-line risks, in combining triangular distributions, and in interpreting what "confidence" means in an estimate. The aim is to explain these issues and show how they can produce inaccurate risk analyses that mislead management.
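
Two of the pitfalls named above can be illustrated with a small Monte Carlo sketch. This is only an illustration under stated assumptions, not the document's own method: the 20% probability, the 100-unit impact, the three triangular cost items, and the function names `percentile` and `simulate` are all hypothetical. The sketch also assumes the items are independent, and it takes "combining triangular distributions" to mean adding per-item percentiles, which is one common form of the mistake.

```python
import random
import statistics


def percentile(samples, q):
    """Return the q-th percentile (0 < q < 100) by rank on the sorted data."""
    ordered = sorted(samples)
    index = min(len(ordered) - 1, int(q / 100 * len(ordered)))
    return ordered[index]


def simulate(n_trials=20_000, seed=1):
    rng = random.Random(seed)

    # Hypothetical discrete risk: 20% chance of a 100-unit cost, else no cost.
    discrete = [100.0 if rng.random() < 0.2 else 0.0 for _ in range(n_trials)]

    # Hypothetical independent cost items, each given as (low, high, mode).
    items = [(10, 30, 15), (20, 60, 30), (5, 25, 10)]
    draws = [
        [rng.triangular(low, high, mode) for _ in range(n_trials)]
        for low, high, mode in items
    ]
    # Total cost per trial: add the items trial by trial.
    totals = [sum(trial) for trial in zip(*draws)]

    return {
        # The mean of the discrete risk is a value that never actually occurs.
        "discrete_mean": statistics.fmean(discrete),
        "discrete_p50": percentile(discrete, 50),
        "discrete_p90": percentile(discrete, 90),
        # Adding per-item 80th percentiles is not the 80th percentile of the total.
        "sum_of_item_p80": sum(percentile(d, 80) for d in draws),
        "p80_of_total": percentile(totals, 80),
    }


if __name__ == "__main__":
    for name, value in simulate().items():
        print(f"{name}: {value:.1f}")
```

In this sketch the discrete risk costs either 0 or 100 in any given trial, so treating it as a continuous quantity centered near its mean describes an outcome that cannot happen. For the triangular items, the sum of the individual 80th percentiles comes out higher than the 80th percentile of the simulated total, because independent items rarely all run high at the same time.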