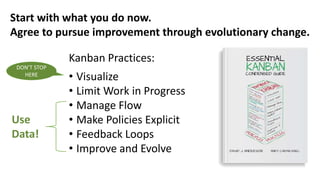

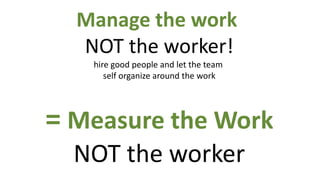

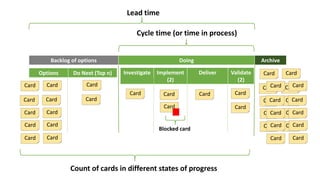

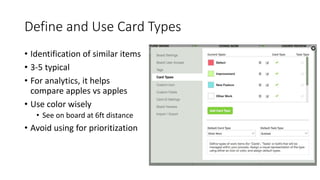

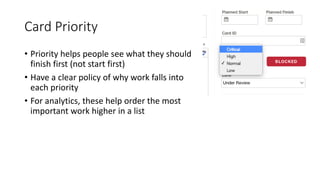

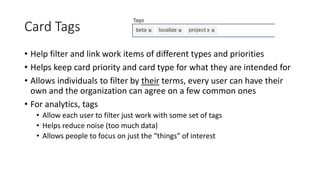

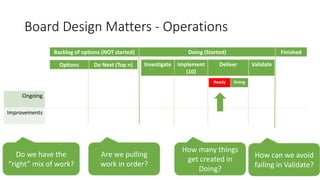

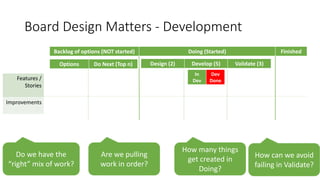

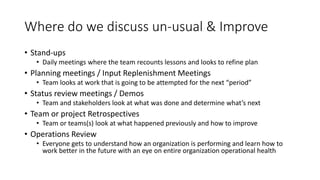

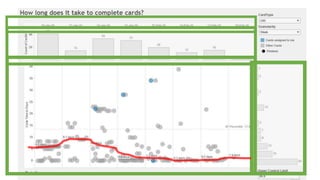

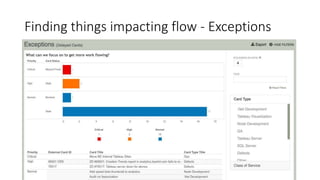

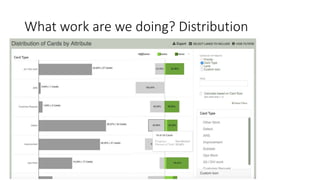

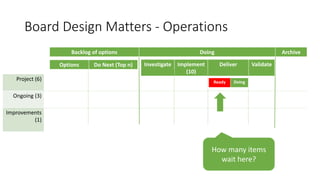

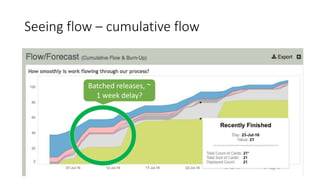

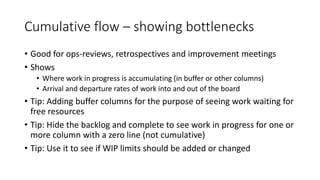

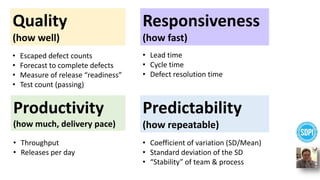

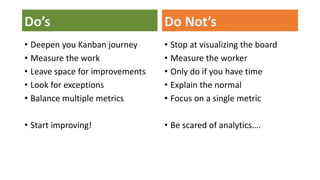

The document discusses strategies for driving continuous improvement using data, emphasizing the importance of metrics in managing workflow and responsiveness. It highlights key Kanban practices such as visualizing work, limiting work in progress, and setting explicit policies for prioritizing tasks. It also includes board design ideas, analytical insights, and actionable steps teams can take to optimize their processes and improve efficiency.