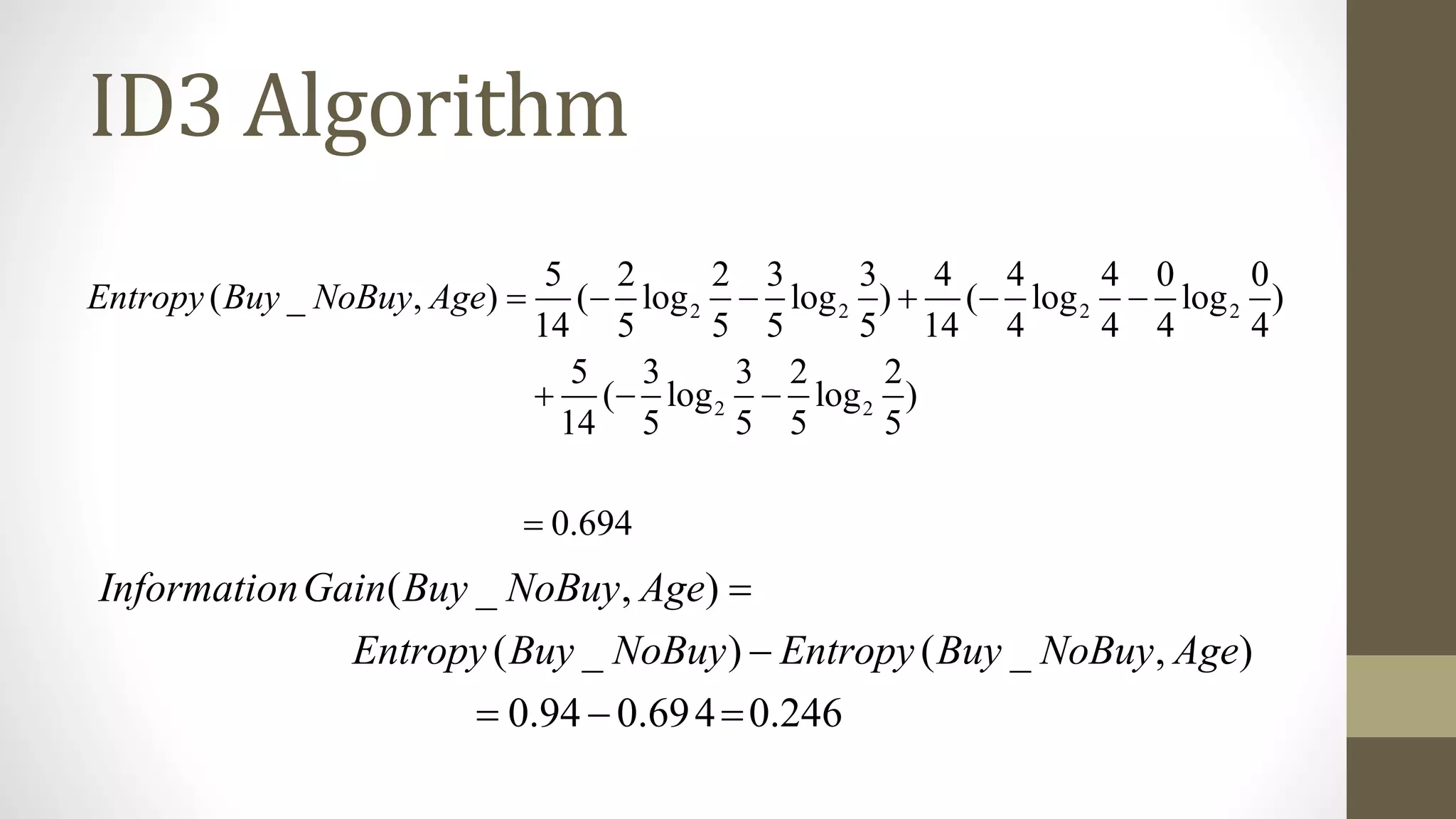

This document describes the random forest algorithm for classification. A random forest builds many decision trees, each trained on a random sample of the training data and restricted to a random subset of the attributes. Each tree votes for a class, and the class with the most votes across all trees is the forest's prediction. The document outlines four steps for building a random forest: 1) draw bootstrap samples of the data (sampling with replacement) to create one training set per tree, 2) select a random subset of attributes at each node and choose the split from that subset, 3) grow each tree, and 4) aggregate the trees by majority vote. An example applies random forest to predict whether a person will buy a computer based on age, income, student status, and credit rating.
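
The four steps can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the document's own implementation: the function names (`grow_tree`, `fit_forest`, `predict_forest`), the use of Gini impurity as the split criterion, the multiway split on categorical values, and the handful of toy rows are all hypothetical choices made for the example. The rows are invented and are not the document's data.

```python
import random
from collections import Counter

def gini(labels):
    """Gini impurity of a list of class labels (assumed split criterion)."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def majority(labels):
    """Most common label in a list."""
    return Counter(labels).most_common(1)[0][0]

def grow_tree(rows, labels, attrs, n_try, rng):
    """Step 3: grow one tree on categorical attributes."""
    if len(set(labels)) == 1 or not attrs:
        return majority(labels)  # leaf node
    # Step 2: consider only a random subset of attributes at this node.
    candidates = rng.sample(attrs, min(n_try, len(attrs)))

    def split_impurity(attr):
        groups = {}
        for row, y in zip(rows, labels):
            groups.setdefault(row[attr], []).append(y)
        return sum(len(g) / len(labels) * gini(g) for g in groups.values())

    best = min(candidates, key=split_impurity)
    # Partition the data by the value of the chosen attribute.
    parts = {}
    for row, y in zip(rows, labels):
        sub_rows, sub_labels = parts.setdefault(row[best], ([], []))
        sub_rows.append(row)
        sub_labels.append(y)
    remaining = [a for a in attrs if a != best]
    children = {
        value: grow_tree(r, l, remaining, n_try, rng)
        for value, (r, l) in parts.items()
    }
    # "default" handles attribute values not seen in this subtree.
    return {"attr": best, "children": children, "default": majority(labels)}

def predict_tree(tree, row):
    """Walk from the root to a leaf and return its label."""
    while isinstance(tree, dict):
        tree = tree["children"].get(row[tree["attr"]], tree["default"])
    return tree

def fit_forest(rows, labels, n_trees=25, n_try=2, seed=0):
    """Build n_trees trees, each on its own bootstrap sample."""
    rng = random.Random(seed)
    attrs = list(rows[0].keys())
    forest = []
    for _ in range(n_trees):
        # Step 1: bootstrap sample (draw len(rows) indices with replacement).
        idx = [rng.randrange(len(rows)) for _ in rows]
        forest.append(grow_tree([rows[i] for i in idx],
                                [labels[i] for i in idx],
                                attrs, n_try, rng))
    return forest

def predict_forest(forest, row):
    """Step 4: aggregate the individual tree votes by majority."""
    return majority([predict_tree(tree, row) for tree in forest])

# Hypothetical toy rows, invented for illustration only.
toy_rows = [
    {"age": "youth", "income": "high", "student": "no", "credit": "fair"},
    {"age": "youth", "income": "low", "student": "yes", "credit": "fair"},
    {"age": "middle", "income": "high", "student": "no", "credit": "fair"},
    {"age": "senior", "income": "medium", "student": "no", "credit": "excellent"},
    {"age": "senior", "income": "low", "student": "yes", "credit": "fair"},
    {"age": "middle", "income": "medium", "student": "yes", "credit": "excellent"},
]
toy_labels = ["no", "yes", "yes", "no", "yes", "yes"]

forest = fit_forest(toy_rows, toy_labels)
query = {"age": "youth", "income": "medium", "student": "yes", "credit": "fair"}
print(predict_forest(forest, query))
```

Fixing `seed` makes the bootstrap samples and attribute subsets reproducible. The `n_try` parameter controls how many attributes each node may consider; smaller values make the trees less alike, which is the point of the random attribute selection in step 2.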