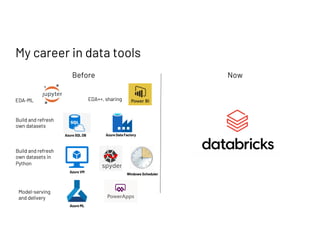

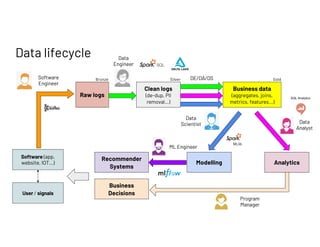

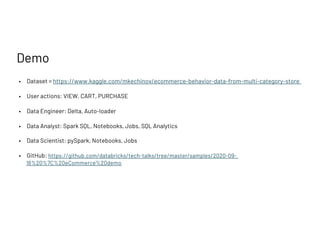

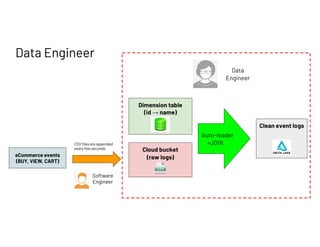

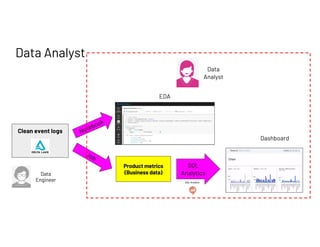

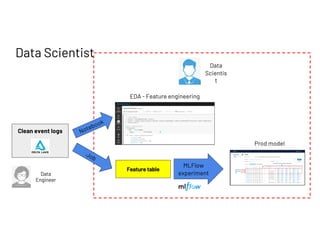

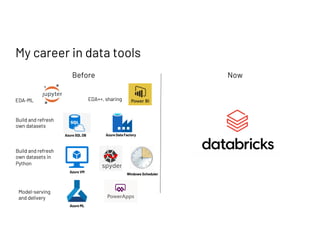

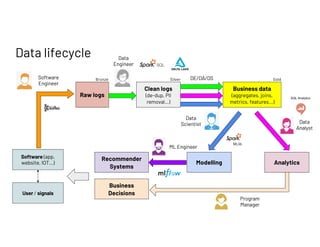

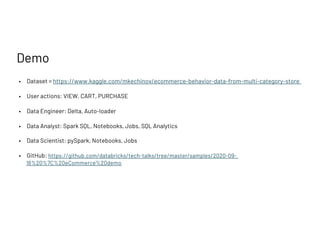

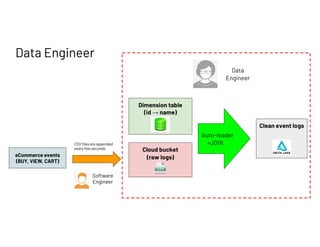

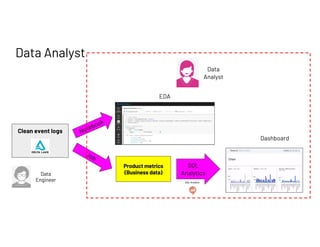

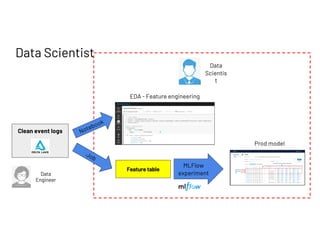

This presentation by Francois Callewaert, a senior data scientist at Databricks, covers the data lifecycle and the processes involved in data analytics and machine learning. It describes how data engineers, analysts, and scientists each work with datasets, and includes a practical demo that uses tools such as Azure and Spark for data management and analysis. The presentation emphasizes integrating these technologies to support data-driven business decisions.