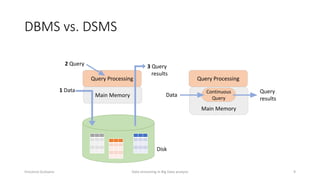

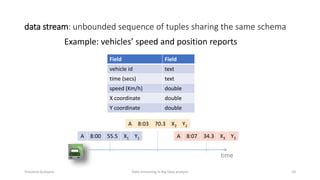

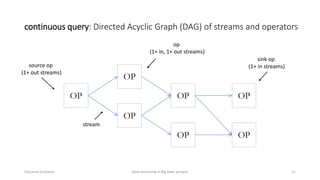

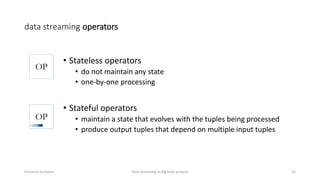

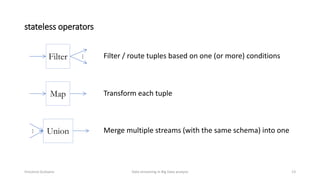

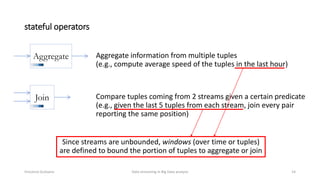

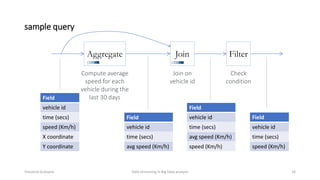

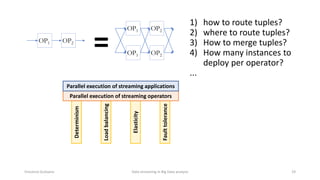

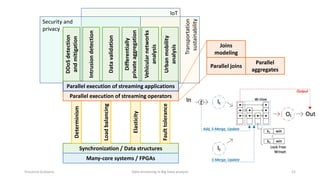

This presentation outlines key concepts and research on data streaming in big data analysis, emphasizing its importance for real-time data processing. It traces the evolution of data streaming technologies, highlights challenges such as latency, security, and privacy, and introduces data processing operators and techniques. It also covers future research directions and applications in fields such as vehicular networks and smart infrastructures.