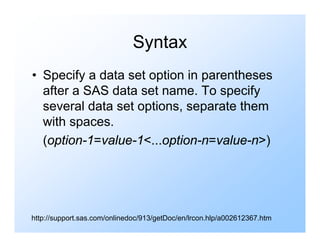

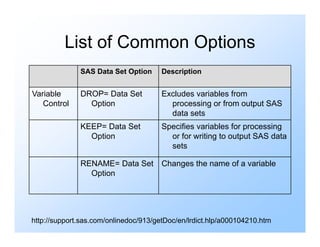

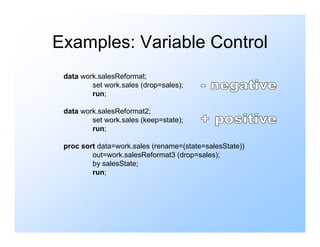

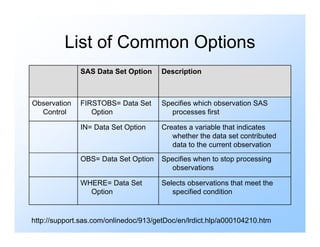

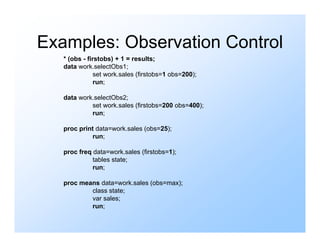

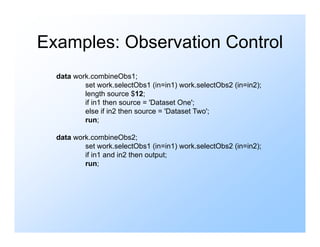

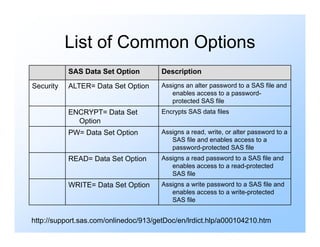

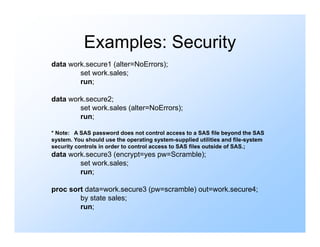

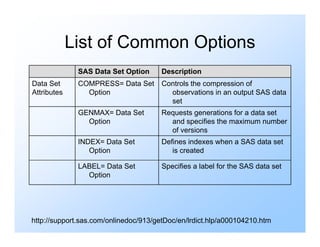

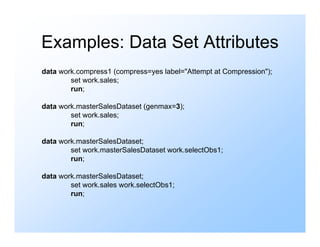

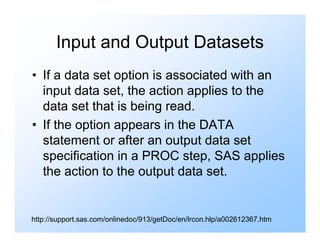

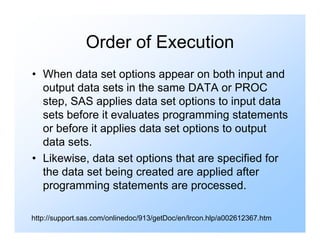

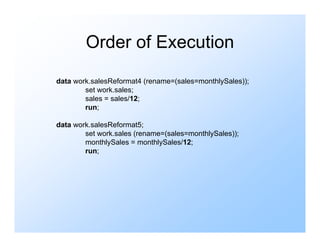

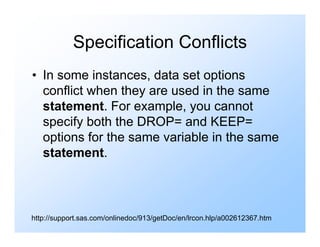

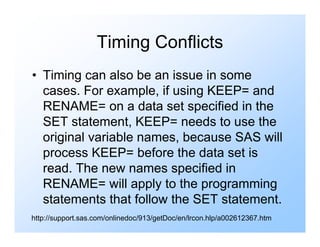

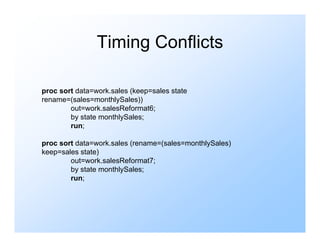

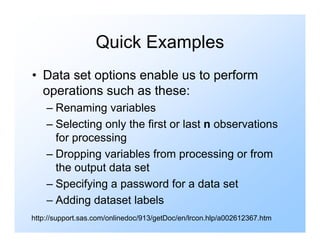

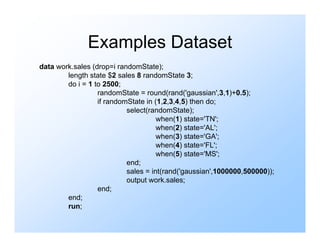

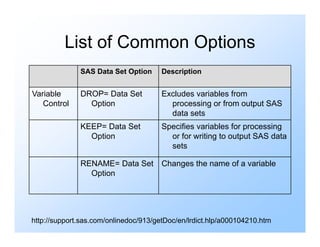

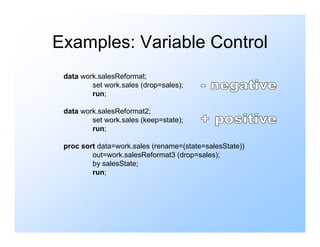

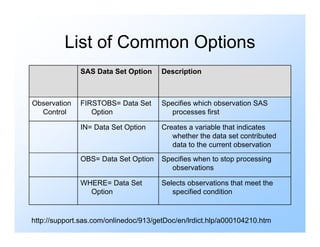

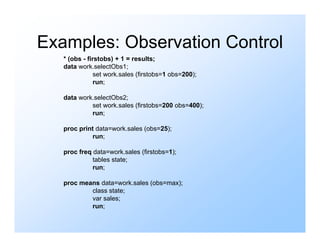

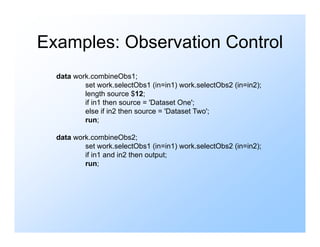

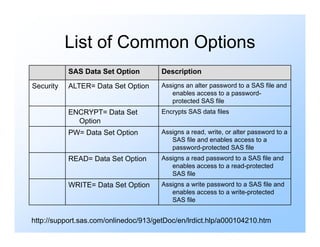

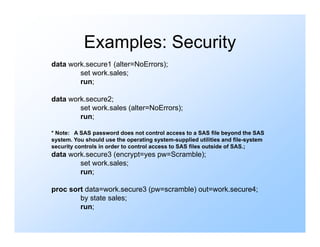

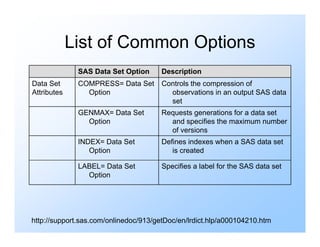

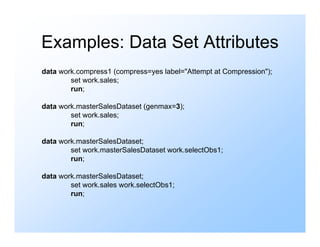

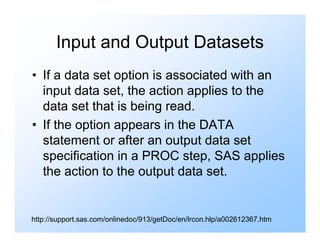

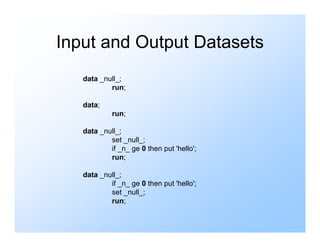

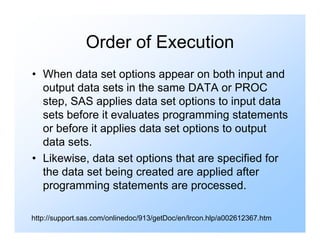

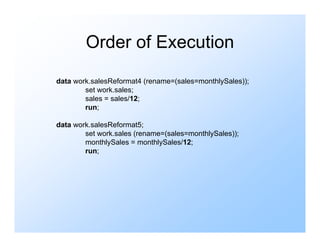

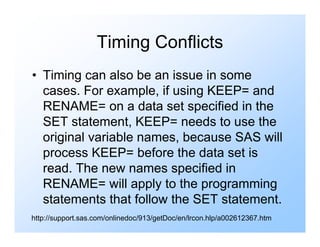

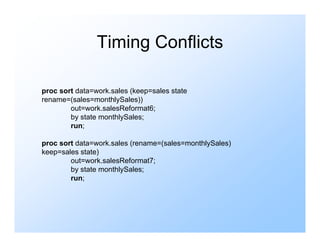

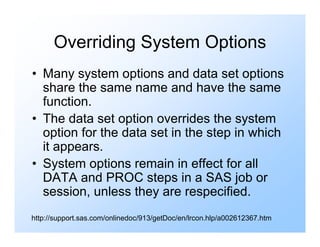

Data set options enable features during data set processing and control which variables and observations are processed, as well as data set security and attributes. They are specified in parentheses immediately after a SAS data set name; examples include DROP=, KEEP=, RENAME=, FIRSTOBS=, and LABEL=. Options on an input data set are applied before the programming statements run, while options on an output data set are applied after the statements have been processed.
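
The placement and timing rules described here can be illustrated with a minimal sketch. The data set names (`work.sales`, `work.summary`), the variables, and the values are hypothetical and exist only for this example.

```sas
/* Hypothetical input data, created here so the sketch is self-contained */
data work.sales;
   input region $ amount cost;
   datalines;
East 100 60
West 200 120
North 150 90
South 300 210
;
run;

/* Output options (DROP=, LABEL=) take effect after the statements run,
   so COST is still available when PROFIT is computed.                  */
data work.summary (drop=cost label='Profit by region');
   /* Input options (FIRSTOBS=, RENAME=) take effect before the
      statements run, so the step starts at observation 2 and
      refers to AMOUNT by its new name, REVENUE.                        */
   set work.sales (firstobs=2 rename=(amount=revenue));
   profit = revenue - cost;
run;
```

Under these assumptions, `work.summary` should hold the last three observations with the variables `region`, `revenue`, and `profit`. Moving `drop=cost` to the input data set instead would remove `cost` before the assignment statement could use it.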