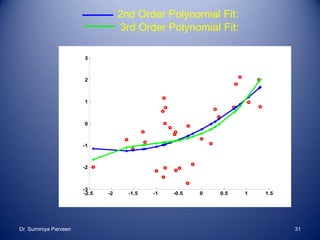

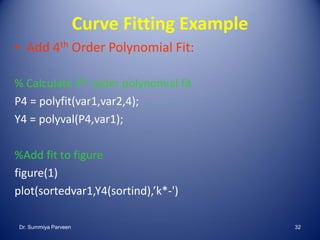

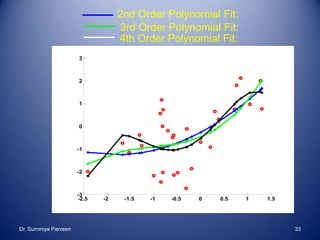

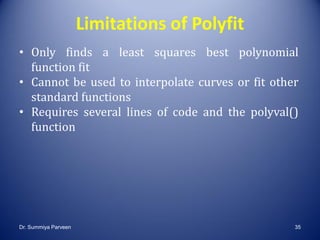

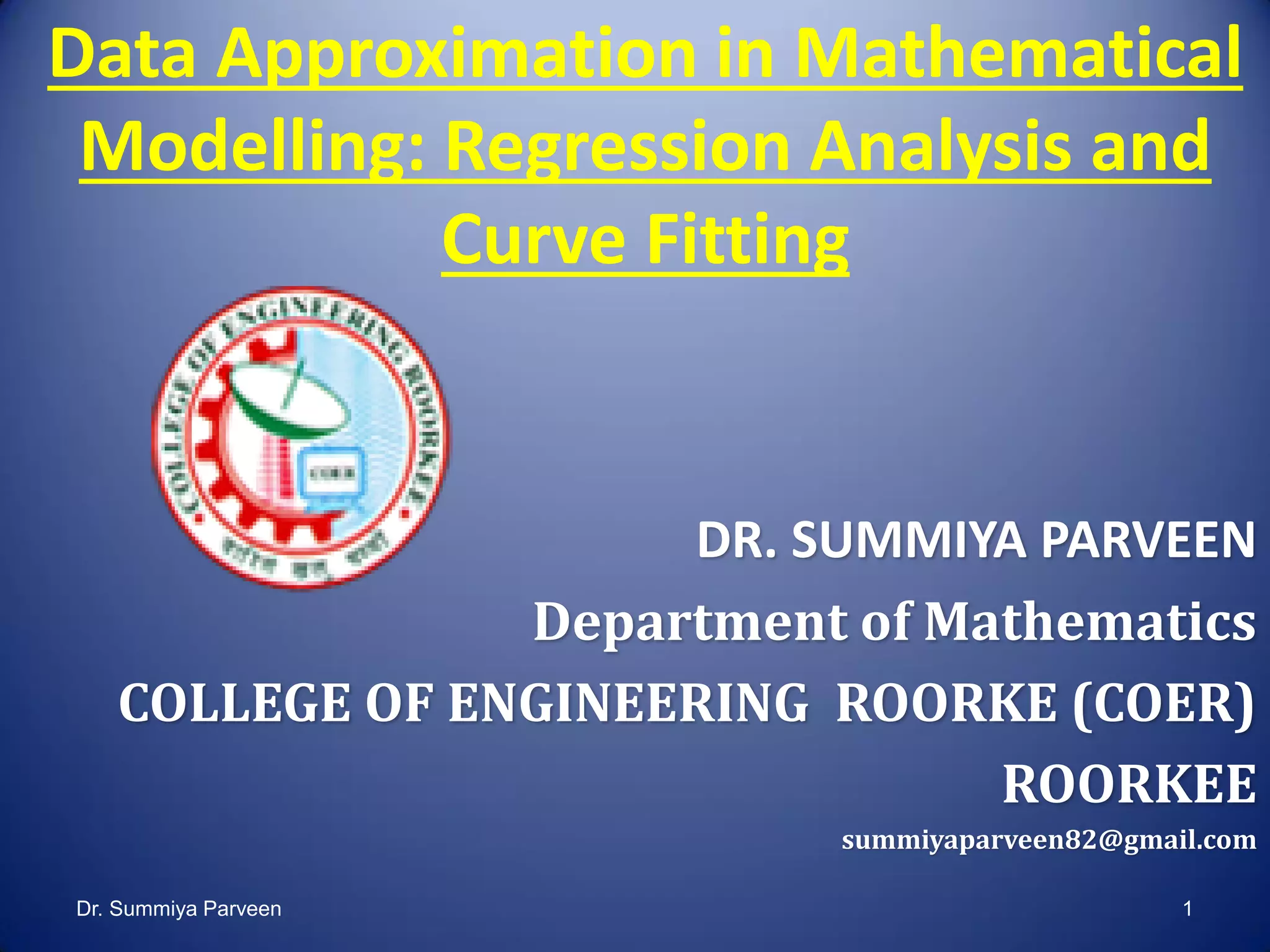

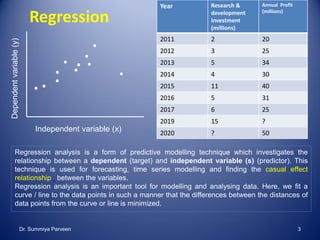

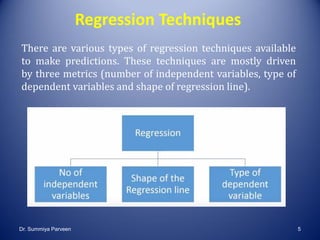

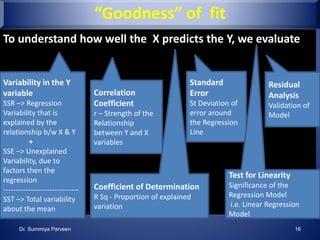

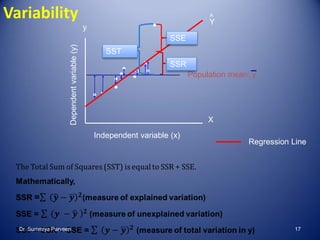

The document summarizes a lecture on regression analysis and curve fitting in mathematical modelling. It introduces regression analysis and its applications, then describes several regression techniques: linear, nonlinear, polynomial and multiple regression. It gives MATLAB examples of fitting linear and polynomial curves to data, and discusses how to assess the goodness of fit using metrics such as the residual norm and the coefficient of determination.
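For reference, the two goodness-of-fit measures named above have standard definitions. The notation here is an assumption and may differ from the one used on the slides: $y_i$ are the $m$ observed data values, $\hat{y}_i$ are the values predicted by the fitted curve, and $\bar{y}$ is the mean of the observed data.

$$
\|r\|_2 = \sqrt{\sum_{i=1}^{m} \left(y_i - \hat{y}_i\right)^2},
\qquad
R^2 = 1 - \frac{\sum_{i=1}^{m} \left(y_i - \hat{y}_i\right)^2}{\sum_{i=1}^{m} \left(y_i - \bar{y}\right)^2}
$$

A smaller residual norm means the curve passes closer to the data points, and an $R^2$ close to 1 means the fit accounts for most of the variation in the data. Least-squares fitting chooses the curve's coefficients so that the sum of squared residuals in both expressions is as small as possible.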

![Curve Fitting using inbuilt functions](https://image.slidesharecdn.com/dataapproximationinmathematicalmodellingregressionanalysisandcurvefitting-180801071911/85/Data-Approximation-in-Mathematical-Modelling-Regression-Analysis-and-curve-fitting-27-320.jpg)

Curve Fitting using inbuilt functions

polyfit(x,y,n) finds the coefficients (in descending powers of x) of a polynomial P(x) of degree n that fits the data. It uses least-squares minimization.

n = 1 (linear fit)

P = polyfit(X,Y,1) returns P, a row vector containing the slope and the y-intercept of the line of best fit.

Y = polyval(P,X) calculates the Y value for every X point on the line of best fit.

Dr. Summiya Parveen 27
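A minimal sketch of the linear case, using hypothetical data chosen to lie exactly on y = 2x + 1 so the expected slope and intercept are known in advance. It also illustrates the least-squares claim by solving the same overdetermined system with MATLAB's backslash operator, which returns the least-squares solution; the variable names A, c and Yfit are illustrative only.

```matlab
% Hypothetical data lying exactly on the line y = 2x + 1
X = [0 1 2 3 4];
Y = 2*X + 1;

% Degree-1 least-squares fit: P(1) is the slope, P(2) the y-intercept
P = polyfit(X, Y, 1);
slope = P(1);
intercept = P(2);

% Evaluate the fitted line at every X point
Yfit = polyval(P, X);

% Same least-squares problem solved directly: minimise ||A*c - Y'||
% Column 1 multiplies the slope, column 2 (all ones) the intercept
A = [X' ones(numel(X), 1)];
c = A \ Y';   % c(1) = slope, c(2) = intercept

fprintf('slope = %.4f, intercept = %.4f\n', slope, intercept);
```

With noisy real data the points would no longer sit on the line, but polyfit and the backslash solution would still agree, because both minimise the same sum of squared residuals.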

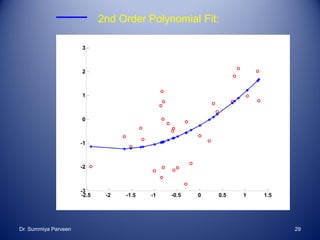

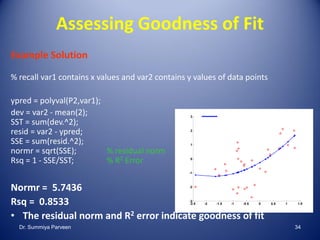

![Curve Fitting Example

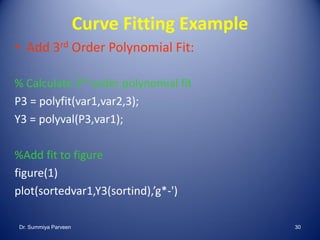

• 2nd Order Polynomial Fit:

%read data

[var1, var2] = textread(‘week8_testdata2.txt','%f%f','headerlines',1)

% Calculate 2nd order polynomial fit

P2 = polyfit(var1,var2,2);

Y2 = polyval(P2,var1);

%Plot fit

close all

figure(1)

hold on

plot(var1,var2,'ro')

[sortedvar1, sortind] = sort(var1)

plot(sortedvar1,Y2(sortind),'b*-')Dr. Summiya Parveen 28](https://image.slidesharecdn.com/dataapproximationinmathematicalmodellingregressionanalysisandcurvefitting-180801071911/85/Data-Approximation-in-Mathematical-Modelling-Regression-Analysis-and-curve-fitting-28-320.jpg)
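The example plots the 2nd order fit but does not measure how good it is. A minimal sketch of the two metrics from the summary, the residual norm and the coefficient of determination, is given below. Because week8_testdata2.txt is not available here, it assumes hypothetical data: a quadratic trend plus a small deterministic perturbation. The fitting calls are the same polyfit and polyval calls used on the slide.

```matlab
% Hypothetical data: quadratic trend plus a small fixed perturbation
x = (0:0.5:5)';
y = 0.5*x.^2 - 2*x + 3 + 0.1*sin(7*x);

% 2nd order least-squares fit and fitted values
P2 = polyfit(x, y, 2);
Y2 = polyval(P2, x);

% Residual norm: Euclidean length of the residual vector
resid = y - Y2;
resnorm = norm(resid);

% Coefficient of determination: R^2 = 1 - SSres/SStot
SSres = sum(resid.^2);
SStot = sum((y - mean(y)).^2);
R2 = 1 - SSres/SStot;

fprintf('residual norm = %.4f, R^2 = %.4f\n', resnorm, R2);
```

Comparing these numbers across polynomial degrees (for example n = 1 versus n = 2) is one simple way to judge whether the extra term is worth it; a higher degree can never increase the residual norm on the same data, so a large drop is more informative than a small one.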