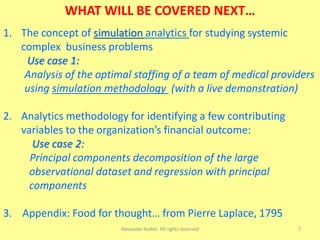

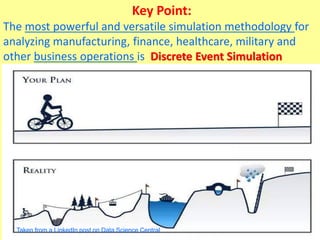

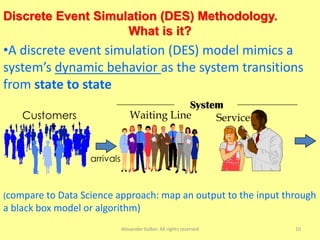

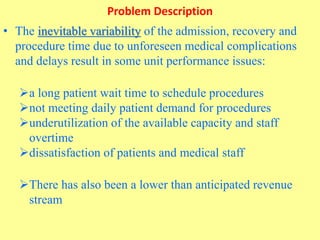

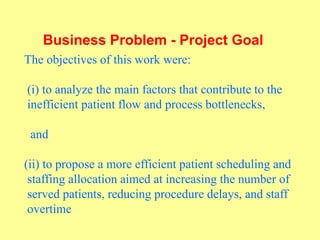

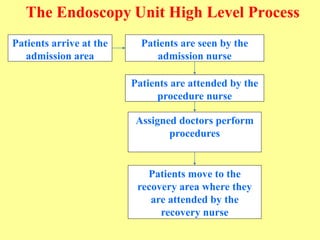

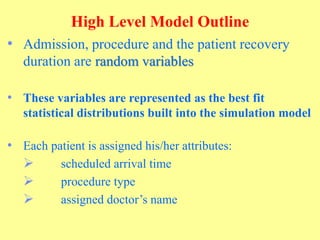

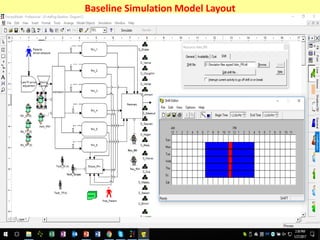

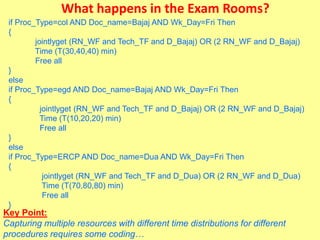

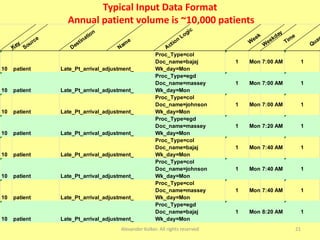

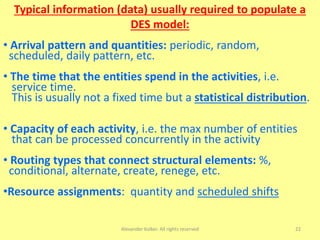

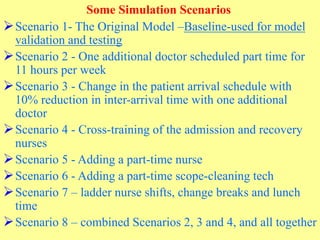

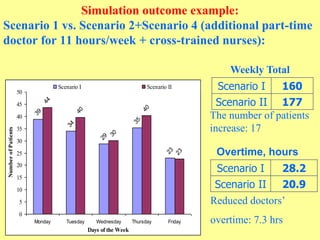

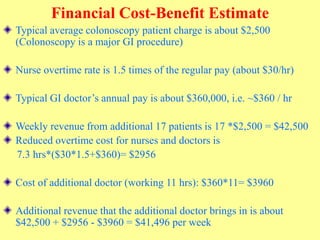

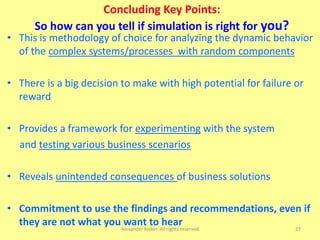

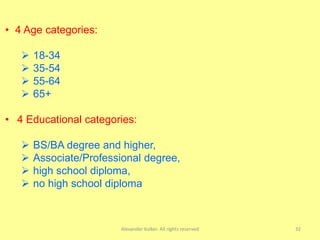

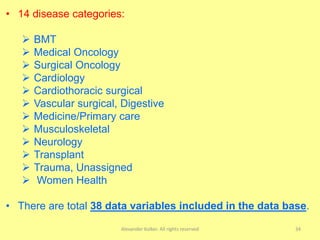

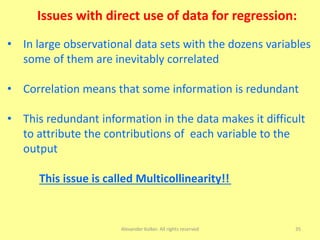

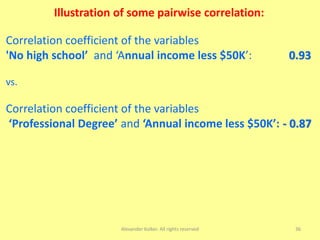

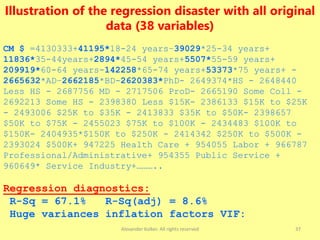

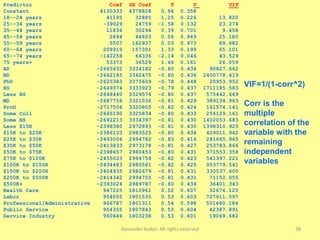

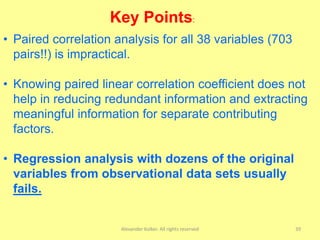

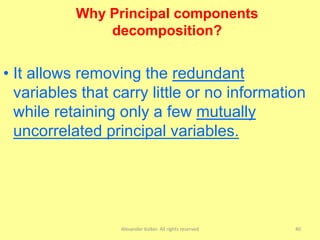

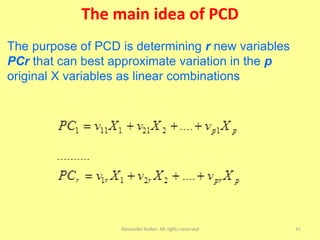

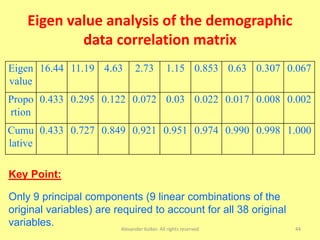

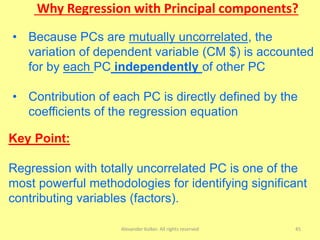

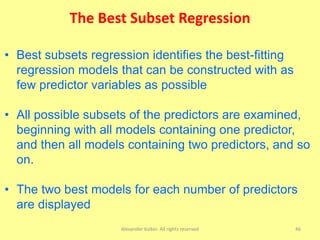

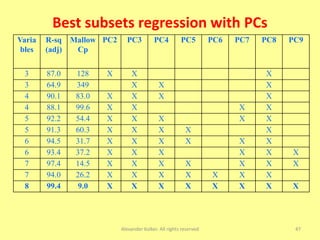

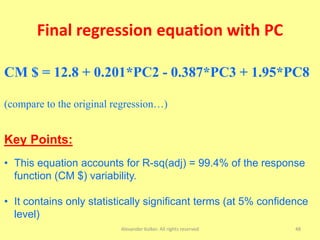

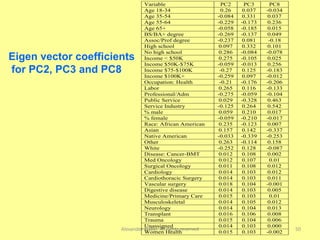

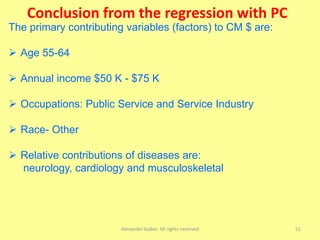

This document argues that data analytics in business settings should shift its focus from technology to methodology in order to support better decisions and outcomes. It presents two use cases: simulation analytics that uses discrete event simulation to optimize staffing in healthcare settings, and an analysis of the demographic factors that affect financial performance using principal component analysis. Both use cases illustrate how rigorous methodology helps address complex business challenges and inform decisions.
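
To make the staffing use case more concrete, the following is a minimal sketch of a queueing simulation in the spirit of discrete event simulation. It is not the model used in the document. The function name `simulate_mean_wait`, the exponential arrival and service times, the first-come, first-served rule, and all of the rates are illustrative assumptions.

```python
import heapq
import random


def simulate_mean_wait(num_staff, arrival_rate, service_rate, num_patients, seed=0):
    """Return the mean patient wait in a first-come, first-served queue.

    Hypothetical model: patients arrive with exponential inter-arrival
    times and are served by the staff member who becomes free earliest.
    """
    rng = random.Random(seed)
    # Min-heap of the times at which each staff member next becomes free.
    free_at = [0.0] * num_staff
    heapq.heapify(free_at)

    clock = 0.0
    total_wait = 0.0
    for _ in range(num_patients):
        # Advance the clock to the next arrival event.
        clock += rng.expovariate(arrival_rate)
        # The patient is seen by whoever frees up first.
        earliest_free = heapq.heappop(free_at)
        start = max(clock, earliest_free)
        total_wait += start - clock
        # Schedule the moment this staff member becomes free again.
        heapq.heappush(free_at, start + rng.expovariate(service_rate))
    return total_wait / num_patients


if __name__ == "__main__":
    # Assumed rates (per hour): 10 arrivals, 4 patients served per staff member.
    for staff in (2, 3, 4):
        wait = simulate_mean_wait(staff, arrival_rate=10.0, service_rate=4.0,
                                  num_patients=20000)
        print(f"{staff} staff: mean wait {wait:.2f} hours")
```

Running the loop over several staffing levels shows the kind of comparison such a model supports: the mean wait under each candidate staffing level can be weighed against the cost of additional staff. A production model would typically add shift schedules, patient priorities, and arrival patterns calibrated from data.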