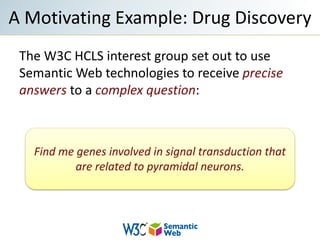

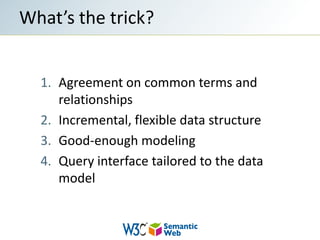

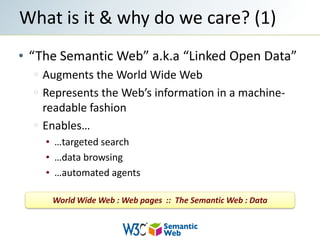

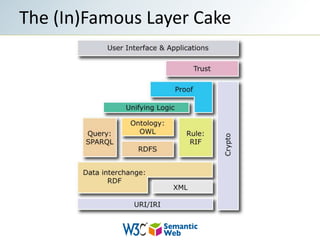

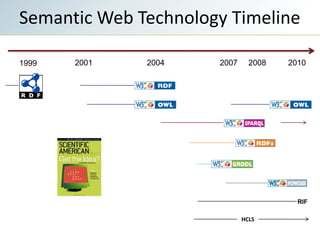

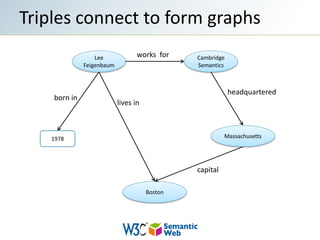

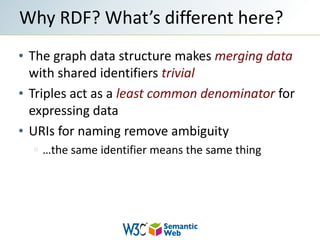

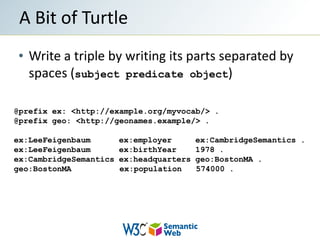

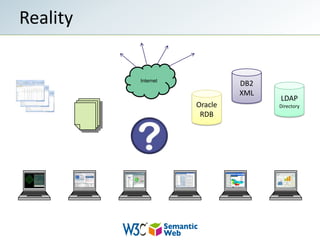

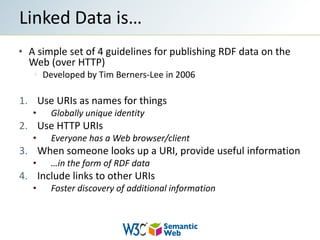

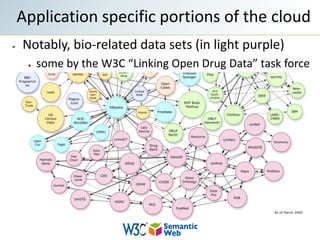

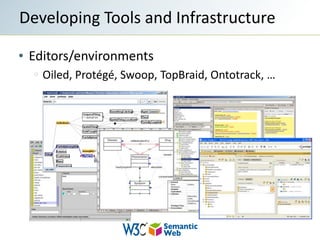

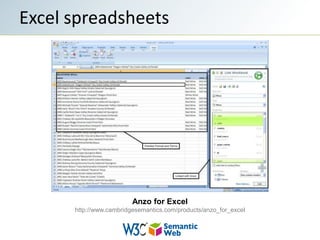

The document gives an overview of semantic web technologies and their application in drug discovery and the life sciences. It discusses how standards such as RDF and SPARQL integrate disparate data sources so that they can be queried together and interoperate. It also highlights challenges in adopting these technologies, including a shortage of skilled professionals and the need for clear messaging.
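As a rough illustration of the integration idea summarized above, the sketch below merges two small sets of subject-predicate-object triples and runs a join across them. It is not taken from the document and does not use a real RDF or SPARQL library: plain Python tuples stand in for RDF triples, the `match` and `query` helpers are hypothetical, and every identifier (`ex:compound1`, `ex:targetA`, and so on) is made up for the example.

```python
# Illustrative only: plain tuples stand in for RDF triples, and all
# identifiers below are invented for this sketch.

# Source A: a hypothetical chemistry dataset.
source_a = {
    ("ex:compound1", "ex:inhibits", "ex:targetA"),
    ("ex:compound2", "ex:inhibits", "ex:targetB"),
}

# Source B: a hypothetical biology dataset that reuses the same target identifiers.
source_b = {
    ("ex:targetA", "ex:associatedWith", "ex:diseaseX"),
    ("ex:targetB", "ex:associatedWith", "ex:diseaseY"),
}

# Because both sources share one data model, integration is a set union.
graph = source_a | source_b


def match(graph, pattern, binding):
    """Yield extensions of `binding` for each triple that `pattern` matches."""
    for triple in graph:
        new = dict(binding)
        ok = True
        for term, value in zip(pattern, triple):
            if term.startswith("?"):
                # Variable: bind it, or check it against an earlier binding.
                if new.setdefault(term, value) != value:
                    ok = False
                    break
            elif term != value:
                # Constant: must equal the triple's term exactly.
                ok = False
                break
        if ok:
            yield new


def query(graph, patterns):
    """Join triple patterns, loosely like a SPARQL basic graph pattern."""
    bindings = [{}]
    for pattern in patterns:
        bindings = [b for binding in bindings for b in match(graph, pattern, binding)]
    return bindings


# Loosely equivalent to:
#   SELECT ?compound ?disease WHERE {
#     ?compound ex:inhibits ?target .
#     ?target ex:associatedWith ?disease . }
results = query(graph, [
    ("?compound", "ex:inhibits", "?target"),
    ("?target", "ex:associatedWith", "?disease"),
])
pairs = sorted((r["?compound"], r["?disease"]) for r in results)
print(pairs)
```

The join only works because both sources use the same identifier for each target, which is the kind of shared vocabulary that RDF is meant to encourage. A real deployment would use an RDF store and a SPARQL engine rather than this in-memory stand-in.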