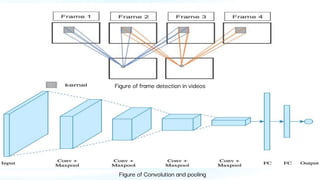

This document proposes using C3D, a pre-trained 3D convolutional neural network (CNN), to detect violence in video captured by cameras. Violence is hard to recognize because it appears as complex visual patterns that unfold over time. 3D convolutions learn spatial and temporal features jointly, so the network can capture these patterns. To improve efficiency, the approach first detects persons in the video stream and passes only the relevant frames, those in which persons appear, to the network. The goal is to improve safety by accurately detecting violent and abnormal activities and protecting individuals from threats such as harassment.
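The frame-filtering idea can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not the document's implementation. `has_person` is a hypothetical stand-in for any person detector, and `classify_clip` is a hypothetical stand-in for C3D followed by a violence classifier that returns a score in [0, 1]. Three further choices are assumptions made for the sketch. Clips are 16 frames long, matching the clip length C3D was originally trained on. Clips do not overlap. A frame without a person resets the buffer, so every clip consists of consecutive person-containing frames.

```python
from collections.abc import Callable, Iterable, Iterator
from typing import TypeVar

Frame = TypeVar("Frame")

CLIP_LEN = 16  # C3D was originally trained on 16-frame clips


def relevant_clips(
    frames: Iterable[Frame],
    has_person: Callable[[Frame], bool],  # hypothetical person-detector stand-in
    clip_len: int = CLIP_LEN,
) -> Iterator[list[Frame]]:
    """Yield non-overlapping clips of clip_len consecutive frames that contain a person."""
    buffer: list[Frame] = []
    for frame in frames:
        if not has_person(frame):
            # Assumption: drop frames without people and restart the clip,
            # so each clip stays temporally contiguous.
            buffer.clear()
            continue
        buffer.append(frame)
        if len(buffer) == clip_len:
            yield list(buffer)
            buffer.clear()


def detect_violence(
    frames: Iterable[Frame],
    has_person: Callable[[Frame], bool],
    classify_clip: Callable[[list[Frame]], float],  # hypothetical C3D + classifier stand-in
    threshold: float = 0.5,
    clip_len: int = CLIP_LEN,
) -> list[tuple[int, float]]:
    """Return (clip_index, score) for every clip whose violence score reaches threshold."""
    alerts: list[tuple[int, float]] = []
    for i, clip in enumerate(relevant_clips(frames, has_person, clip_len)):
        score = classify_clip(clip)  # only person-containing clips reach the network
        if score >= threshold:
            alerts.append((i, score))
    return alerts


if __name__ == "__main__":
    # Toy run: frames are integers, persons appear from frame 5 onward,
    # and the stub classifier flags clips starting at frame 21 or later.
    print(detect_violence(range(40), lambda f: f >= 5,
                          lambda clip: 0.9 if clip[0] >= 21 else 0.1))
    # → [(1, 0.9)]
```

In the toy run, frames 0 to 4 never reach the classifier. Frames 5 to 20 and 21 to 36 form two clips, and the three leftover frames are discarded. The efficiency gain comes from the network scoring only the clips that survive the person filter.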