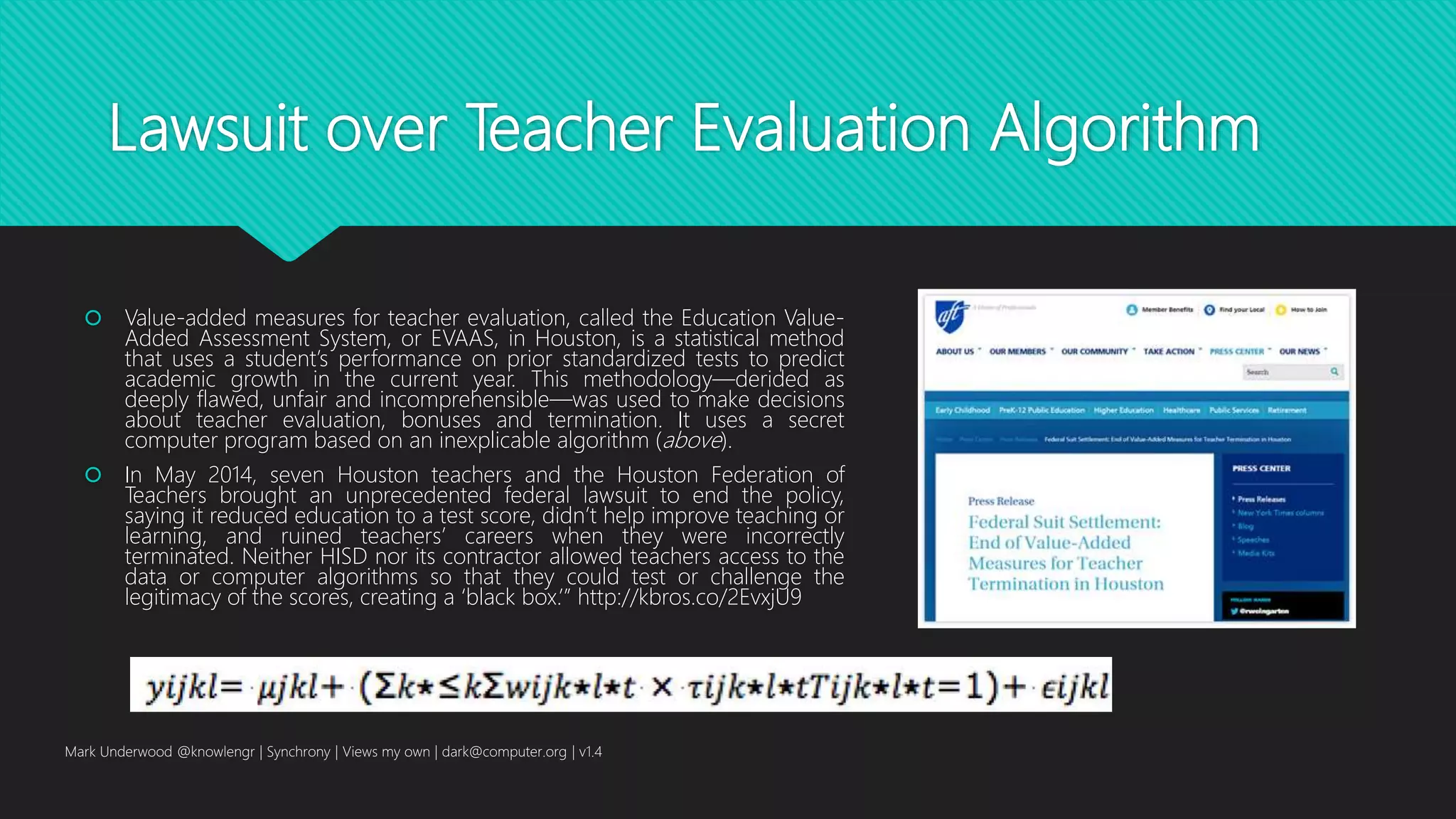

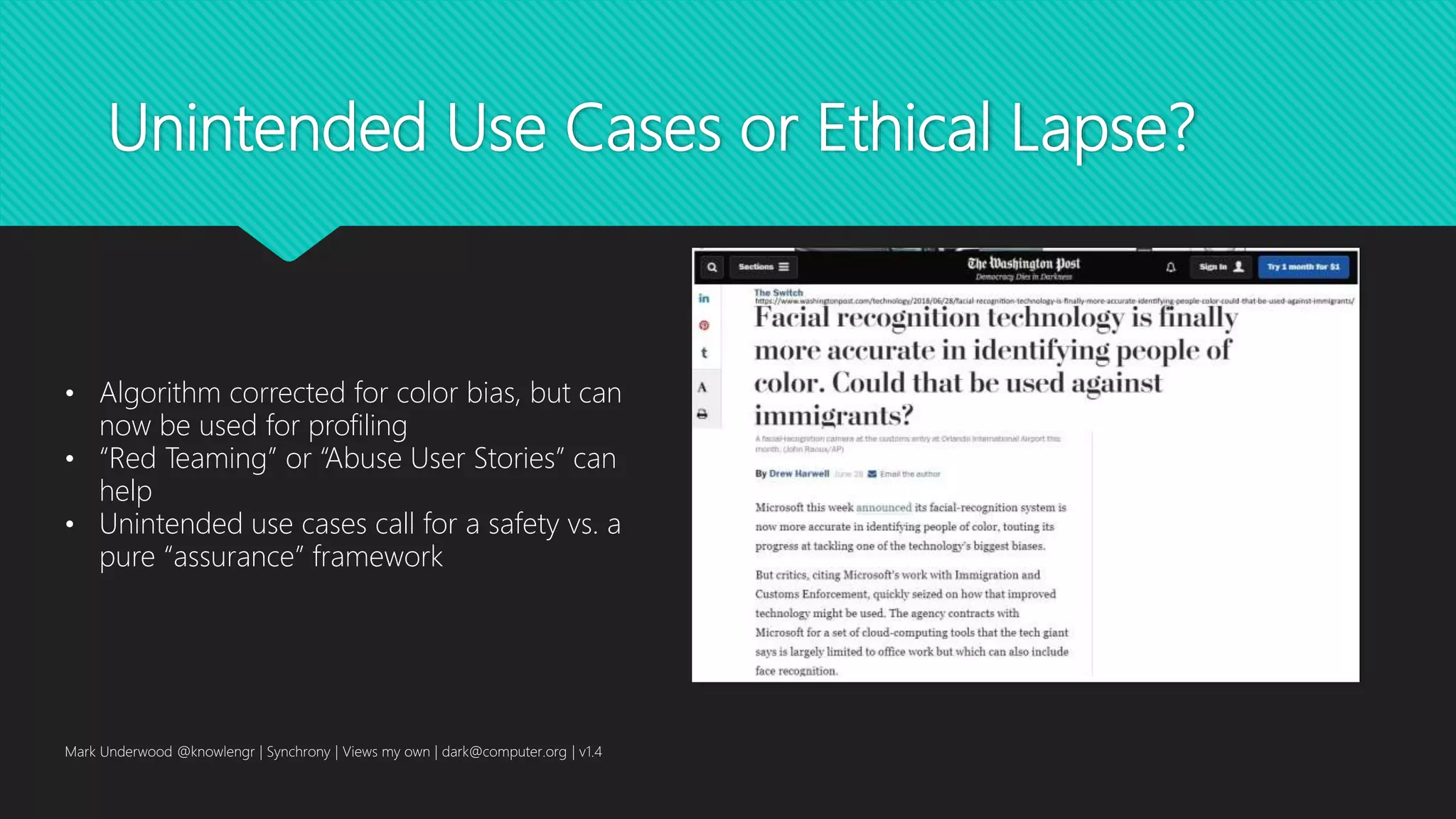

This presentation covers codes of ethics and the ethical concerns raised by artificial intelligence and autonomous systems. It gives an overview of the IEEE P7000 working groups, which are examining issues around big data, machine learning, and ethics, and cites case studies that raise questions of bias, privacy, and fairness. The goal is to help engineers address ethical considerations when designing and developing AI systems.

![Case Study 8
“The [Google DeepMind et al. team] research acknowledges that current "deep learning" approaches to AI have failed to achieve the ability to even approach human cognitive skills. Without dumping all that's been achieved with things such as "convolutional neural networks," or CNNs, the shining success of machine learning, they propose ways to impart broader reasoning skills.”
Mark Underwood @knowlengr | Synchrony | Views my own | dark@computer.org | v1.4](https://image.slidesharecdn.com/mark-underwood-code-of-ethics-ethics-of-code-181024142202/75/Codes-of-Ethics-and-the-Ethics-of-Code-17-2048.jpg)

![Explainability / Interpretability
“[We need to] find ways of making techniques like deep learning more understandable to their creators and accountable to their users. Otherwise it will be hard to predict when failures might occur—and it’s inevitable they will. That’s one reason Nvidia’s car is still experimental.”](https://image.slidesharecdn.com/mark-underwood-code-of-ethics-ethics-of-code-181024142202/75/Codes-of-Ethics-and-the-Ethics-of-Code-57-2048.jpg)

![Insights from More Mature Settings
AI Analytics for distributed military coalitions
“. . . Research has recently started to address such concerns and prominent directions include explainable AI [4], quantification of input influence in machine learning algorithms [5], ethics embedding in decision support systems [6], “interruptability” for machine learning systems [7], and data transparency [8].”
“. . . devices that manage themselves and generate their own management policies, discussing the similarities between such systems and Skynet.”
S. Calo, D. Verma, E. Bertino, J. Ingham, and G. Cirincione, "How to prevent skynet from forming (a perspective from Policy-Based autonomic device management)," in 2018 IEEE 38th International Conference on Distributed Computing Systems (ICDCS), Jul. 2018, pp. 1369-1376. [Online]. Available: http://dx.doi.org/10.1109/ICDCS.2018.00137](https://image.slidesharecdn.com/mark-underwood-code-of-ethics-ethics-of-code-181024142202/75/Codes-of-Ethics-and-the-Ethics-of-Code-60-2048.jpg)

![Agile development & quality engineering
“[Studies] indicate that there is a significant correlation between the inclusion of ethical tools in the process of planning in Agile methodologies and the achievement of improved performance in three quality parameters: schedule, product functionality and cost.”](https://image.slidesharecdn.com/mark-underwood-code-of-ethics-ethics-of-code-181024142202/75/Codes-of-Ethics-and-the-Ethics-of-Code-70-2048.jpg)

![Selected Quality References
H. Abdulhalim, Y. Lurie, and S. Mark, "Ethics as a quality driver in agile software projects," Journal of Service Science and Management, vol. 11, no. 1, pp. 13-25, 2018. [Online]. Available: http://dx.doi.org/10.4236/jssm.2018.111002](https://image.slidesharecdn.com/mark-underwood-code-of-ethics-ethics-of-code-181024142202/75/Codes-of-Ethics-and-the-Ethics-of-Code-71-2048.jpg)