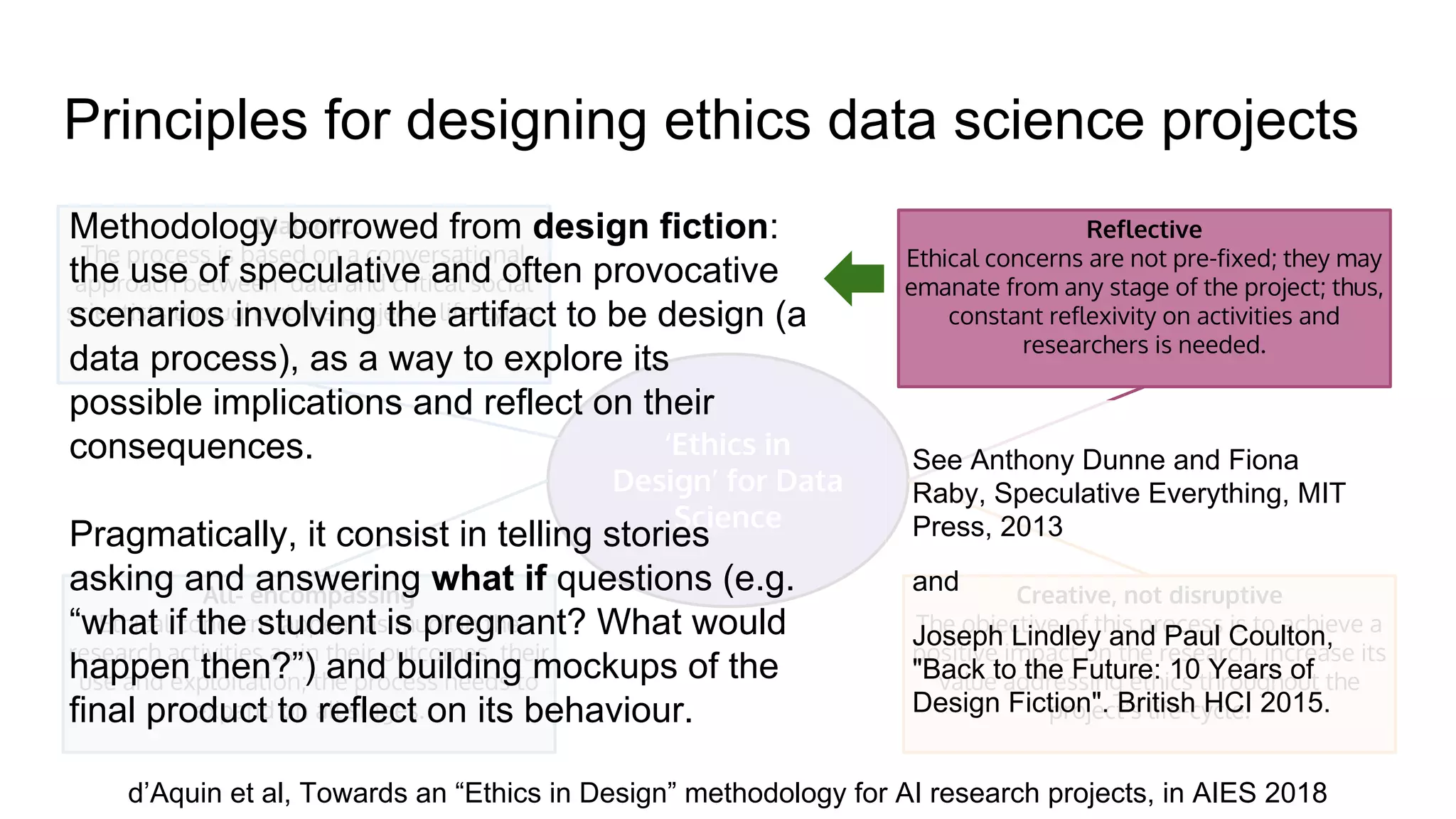

The document outlines the principles of data ethics, emphasizing why ethical practice matters when data about people is collected, processed, and applied in ways that affect their lives and society. It discusses case studies that illustrate common pitfalls, such as biased outcomes and privacy breaches, and advocates an 'ethics in design' methodology for AI research, in which ethical reflection continues throughout the project lifecycle rather than happening once at the start or end. The author argues that relying on regulation alone is insufficient to address these challenges, and that a collaborative approach involving both social scientists and technologists is needed.