Cochrane workshop2016

Slides to support a 90-minute workshop at the Cochrane Collaboration meetup in Birmingham.

Recommended

Cochrane workshop 2016

Published on Mar 16, 2016 by PMR

Slides to support a 90-minute workshop at the Cochrane Collaboration meetup in Birmingham.

Liberating facts from the scientific literature - Jisc Digifest 2016

Published on Mar 4, 2016 by PMR

Text and data mining (TDM) techniques can be applied to a wide range of materials, from published research papers, books and theses, to cultural heritage materials, digitised collections, administrative and management reports and documentation, etc. Use cases include academic research, resource discovery and business intelligence.

This workshop will show the value and benefits of TDM techniques, demonstrate how ContentMine aims to liberate 100,000,000 facts from the scientific literature, and include a hands-on ContentMine demo on a topical and accessible scientific/medical subject.

Automatic Extraction of Science and Medicine from the scholarly literature

Many scientists have to extract many facts out of the scholarly literature, either to evaluate other work or to build useful collections of facts. This talk shows the approach, especially for systematic reviews of animal studies or clinical trials.

The culture of researchData

Published on Jan 29, 2016 by PMR

Keynote talk to LEARN (a LERU/H2020 project) on research data management. Emphasizes that the problems are cultural, not technical. Promotes modern approaches such as Git and continuous integration, and announces DAT. Asserts that the Right to Read is the Right to Mine. Calls for widespread development of content mining (TDM).

Content Mining of Science and Medicine

Published on Feb 29, 2016 by PMR

An overview of Text and Data Mining (ContentMining), including live demonstrations. The fundamentals (discover, scrape, normalize, facet/index, analyze, publish) are illustrated using the recent Zika outbreak. Mining covers textual and non-textual content, and examples from chemistry and phylogenetic trees are given.
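The six stages named above can be sketched as a toy Python pipeline. This is a minimal illustration under stated assumptions, not ContentMine's own code: every function name is hypothetical, the corpus is a made-up in-memory dict standing in for papers, and "discover" is reduced to a keyword filter rather than a real literature search.

```python
import json
import re
from collections import Counter, defaultdict

# All function names below are hypothetical illustrations of the six stages;
# they are not part of any ContentMine tool.

def discover(corpus, keyword):
    """Find candidate documents: here, a case-insensitive keyword filter."""
    return [doc_id for doc_id, text in corpus.items() if keyword.lower() in text.lower()]

def scrape(corpus, doc_ids):
    """Retrieve the text of each discovered document (a dict lookup here)."""
    return {doc_id: corpus[doc_id] for doc_id in doc_ids}

def normalize(raw):
    """Lowercase and collapse whitespace so later matching is uniform."""
    return {doc_id: re.sub(r"\s+", " ", text).strip().lower() for doc_id, text in raw.items()}

def index(docs, dictionary):
    """Facet/index: map each dictionary term to the documents that mention it."""
    facets = defaultdict(set)
    for doc_id, text in docs.items():
        for term in dictionary:
            if re.search(r"\b" + re.escape(term.lower()) + r"\b", text):
                facets[term].add(doc_id)
    return facets

def analyze(facets):
    """Count how many documents mention each term."""
    return Counter({term: len(ids) for term, ids in facets.items()})

def publish(counts):
    """Serialise the counts as JSON so other tools can reuse them."""
    return json.dumps(dict(counts), sort_keys=True)

if __name__ == "__main__":
    # Made-up snippets, for illustration only.
    corpus = {
        "doc1": "Zika virus   and microcephaly in newborns",
        "doc2": "Aedes aegypti transmits Zika.",
        "doc3": "Dengue serotypes in South America.",
    }
    terms = ["Zika", "microcephaly", "Aedes aegypti"]
    ids = discover(corpus, "zika")
    counts = analyze(index(normalize(scrape(corpus, ids)), terms))
    print(publish(counts))
```

Run as a script, it prints a JSON object giving a document count of 2 for "Zika" and 1 each for "microcephaly" and "Aedes aegypti". Keeping each stage as a separate function means any one of them (for example, swapping the keyword filter for a real search service) can be replaced without touching the others.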

ContentMine (TDM) at JISC Digifest

The latest developments in mining scientific documents using TheContentMine open technology

ContentMine + EPMC: Finding Zika!

Use of ContentMine tools on the Open Access subset of EuropePubMedCentral to discover new knowledge about the Zika virus.

Three slides have embedded movies, which do not show on SlideShare; a first pass of this material can be seen as a single file at https://vimeo.com/154705161

Automatic Extraction of Knowledge from Biomedical literature

Published on Mar 16, 2016 by PMR

A plenary lecture to Cochrane Collaboration in Birmingham, on the value of automatically extracting knowledge. Covers the Why? How? What? Who? and problems and invites collaboration

Automatic Extraction of Knowledge from the Literature

Published on May 11, 2016 by PMR

ContentMine tools (and the Harvest alliance) can be used to search the literature for knowledge, especially in biomedicine. All tools are Open and shortly we shall be indexing the complete daily scholarly literature

Text and Data Mining explained at FTDM

An overview of Text and Data Mining (ContentMining), including live demonstrations. The fundamentals (discover, scrape, normalize, facet/index, analyze, publish) are illustrated using the recent Zika outbreak. Mining covers textual and non-textual content, and examples from chemistry and phylogenetic trees are given.

Content Mining of Science in Europe

Talk to OpenForum Academy (Open Forum Europe) about Text and Data Mining. Four use cases were selected for non-scientists. Also discusses the latest on European copyright reform and TDM exceptions.

ContentMine + EPMC: Finding Zika!

Published on Feb 07, 2016 by PMR

Use of ContentMine tools on the Open Access subset of EuropePubMedCentral to discover new knowledge about the Zika virus. Includes clips of the software in action

Digital Scholarship: Enlightenment or Devastated Landscape?

Published on Dec 17, 2015 by PMR

Every year USD 500 billion of public funding is spent on research, but much of it lies hidden in papers that are never read. I describe how machines can help us to read the literature. However, there is massive opposition from publishers who are trying to prevent open scholarship and who build walled gardens that they control.

Content Mining at Wellcome Trust

Presentation for researchers and policy makers at a 2-day meeting sponsored by and held at the Wellcome Trust, UK.

High throughput mining of the scholarly literature

Published on Jun 7, 2016 by PMR

Talk given to statisticians in Tilburg, with emphasis on scholarly comms for detecting unusual features. Includes demo of Amanuens.is and image mining

Open software and knowledge for MIOSS

Published on May 18, 2016 by PMR

Talk to the EBI Industry group on Open Software for the chemical and pharmaceutical sciences. Covers examples from chemistry, with demos, and argues that all public knowledge should be Openly accessible.

Content Mining of Science in Cambridge

Published on Jan 08, 2016 by PMR

Invited talk to Cambridge librarians on ContentMining and how iCambridge is leading in several ways

Can Computers understand the scientific literature (includes compscie material)

Published on Jan 24, 2014 by PMR

With the semantic web, machines can autonomously carry out many knowledge-based tasks as well as humans can. The main problems are not technical but the prevention of access to information. I advocate automatic downloading and indexing of all scientific information.

Amanuens.is Humans and machines annotating scholarly literature

Published on May 19, 2016 by PMR

About 10,000 scholarly articles ("papers") are published each day. Amanuens.is is a symbiont of ContentMine and Hypothes.is (both Shuttleworth projects/Fellows) which annotates these using an array of controlled vocabularies ("dictionaries"). The results, in semantic form, are used to annotate the original material. The talk had live demos and used plant chemistry as the example.
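Dictionary-based annotation of the kind described above can be sketched in a few lines of Python. This is a hedged illustration, not Amanuens.is or Hypothes.is code: the `annotate` function, the `Annotation` record and the placeholder identifiers (`dict:...`) are hypothetical, and the matching rule (case-insensitive whole-word matches, longest term first, no overlaps) is just one simple choice among many.

```python
import re
from typing import Dict, List, NamedTuple

class Annotation(NamedTuple):
    start: int    # character offset where the match begins
    end: int      # character offset just past the match
    exact: str    # the matched text as it appears in the document
    term_id: str  # identifier of the dictionary entry that matched

def annotate(text: str, dictionary: Dict[str, str]) -> List[Annotation]:
    """Return non-overlapping, case-insensitive whole-word dictionary matches."""
    taken = [False] * len(text)
    found = []
    # Longer terms first, so "alpha-pinene" is preferred over the "pinene" inside it.
    for term in sorted(dictionary, key=len, reverse=True):
        pattern = re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        for m in pattern.finditer(text):
            if any(taken[m.start():m.end()]):
                continue  # overlaps a longer match already recorded
            for i in range(m.start(), m.end()):
                taken[i] = True
            found.append(Annotation(m.start(), m.end(), m.group(0), dictionary[term]))
    return sorted(found)  # document order (by start offset)

if __name__ == "__main__":
    # Hypothetical plant-chemistry dictionary with placeholder identifiers.
    plant_chem = {
        "alpha-pinene": "dict:alpha-pinene",
        "pinene": "dict:pinene",
        "limonene": "dict:limonene",
    }
    sentence = "Essential oils rich in alpha-pinene and limonene; pinene is also common."
    for ann in annotate(sentence, plant_chem):
        print(ann)
```

Each `Annotation` carries character offsets plus the exact matched text, roughly the information needed to anchor a note back onto the original passage. In the example, the standalone "pinene" is tagged, while the "pinene" inside "alpha-pinene" is not counted a second time.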

High throughput mining of the scholarly literature; talk at NIH

The scientific and medical literature contains huge amounts of valuable unused information. This talk shows how to discover it, extract, re-use and interpret it. Wikidata is presented as a key new tool and infrastructure. Everyone can become involved. However some of the barriers to use are sociopolitical and these are identified and discussed.

Museum impact: linking-up specimens with research published on them

An informal talk for the monthly #SciFri series of talks at the Natural History Museum, London. 2015-09-18

Mining the scientific literature for plants and chemistry

ContentMine can read the daily scientific literature and extract facts. This talk was given to the OpenPlant project, with whom ContentMine collaborates, at a meeting in Norwich on 2016-07-25/27. Examples of extracted facts are given.

Towards Responsible Content Mining: A Cambridge perspective

ContentMining (Text and Data Mining) is now legal in the UK for non-commercial research. Cambridge UK is a natural centre, with several components:

* a world-class University and Library

* many publishers, both Open Access and conventional

* a digital culture

* ContentMine - a leading proponent and practitioner of mining

Cambridge University Press welcomes content mining and invited PMR to give a talk there. He showed the technology and protocols and proposed a practical way forward in 2017

ContentMining and Clinical Trials

A workshop run at the UK Cochrane Centre to explore contentmining for clinical trials.

Viewers also liked

Clinical Practice Guidelines 2016 (Part 1): Introduction, scope, object...

Introductory course on clinical practice guidelines

ContentMining for France and Europe; Lessons from 2 years in UK

I have spent 2 years carrying out Content Mining (aka Text and Data Mining) in the UK under the 2014 "Hargreaves" exception. This talk was given in Paris, to ADBU, after France had passed the law for a Digital Republic (Loi pour une République numérique). I illustrate what worked and what did not, and why, and offer ideas to France and Europe.

The Randomized Clinical Trial: An Introduction

For anyone who wants to understand the reasons for random allocation and the use of RCTs in systematic reviews and evidence synthesis

Clinical Practice Guidelines 2016 (Part 3)

From evidence to recommendation using the GRADE system, as part of the seminar for the OPS (Pan American Health Organization)

Clinical session: "Meta-analysis and systematic reviews"

Dr. Eloisa Delsors, family physician and residents' tutor at the Centro de Salud Jesús Marín, talks about meta-analyses and systematic reviews in a clinical session.

Similar to Cochrane workshop2016

OSFair2017 Workshop | Bioschemas

Carole Goble presents the Bioschemas | OSFair2017 Workshop

Workshop title: How FAIR friendly is your data catalogue?

Workshop overview:

This workshop will build upon the work planned by the EOSCpilot data interoperability task and the BlueBridge workshop held on April 3 at the RDA meeting. We will investigate common mechanisms for interoperation of data catalogues that preserve established community standards, norms and resources, while simplifying the process of being/becoming FAIR. Can we have a simple interoperability architecture based on a common set of metadata types? What are the minimum metadata requirements to expose FAIR data to EOSC services and EOSC users?

DAY 3 - PARALLEL SESSION 6 & 7

Systematic reviews searching part 2 2019

Construct an EMBASE search that complements your MEDLINE search

Discuss other databases to consider for searching

Understand the role of GreyLit in systematic reviews

Searching for clinical trials

Download and manage results

ChemSpider – The Vision and Challenges Associated with Building a Free Online...

US Environmental Protection Agency (EPA), Center for Computational Toxicology and Exposure

RSC ChemSpider -- Managing and Integrating Chemistry on the Internet to Build...

US Environmental Protection Agency (EPA), Center for Computational Toxicology and Exposure

The increasing availability of free and open access resources for scientists on the internet presents us with a revolution in data availability. The Royal Society of Chemistry hosts ChemSpider, a free-access website built with the intention of building a community for chemists (http://www.chemspider.com/).

ChemSpider is an aggregator of chemistry-related information: at present it holds over 20 million unique chemical entities linked out to over 300 separate data sources. ChemSpider has taken on the task of both robotically and manually curating publicly available data sources. It is also a public deposition platform where chemists can deposit their own data, including novel structures, analytical data and synthesis procedures, and host data associated with the growing activities around Open Notebook Science.

This presentation will examine chemistry on the internet, the dubious quality of what is available and how the ChemSpider crowdsourced curation platform is fast becoming one of the centralized hubs for resourcing information about chemical entities.

We will also review our efforts to provide free resources for synthesis procedures, spectral data and structure-based searching of the chemistry literature and how chemists can contribute directly to each of these projects.

Systematic Review

A systematic review uses systematic and explicit methods to identify, select, critically appraise, and extract and analyze data from relevant research [Higgins & Green 2011].

Literature search

Presented at the 14th BISOP – Belgrade Symposium on Pain, on 17 May 2019, as part of the WORKSHOP 1: How to Design and Publish Your Research Idea

Whitney Symposium Lecture June 2008

US Environmental Protection Agency (EPA), Center for Computational Toxicology and Exposure

A presentation given at the General Electric Whitney Symposium on Networks.

EBI public meeting on internet chemistry databases, November 2010

US Environmental Protection Agency (EPA), Center for Computational Toxicology and Exposure

Exhaustive Literature Searching (Systematic Reviews)

Details the search component of systematic review projects.

A Global Commons for Scientific Data: Molecules and Wikidata

Methods for extracting facts from the scientific literature, and linking them to Wikidata IDs. Wikidata is introduced by an architectural example and bioscience. Then we explore how data can be extracted from text and from images

ChemSpider as a Foundation for Crowdsourcing and Collaborations in Open Chemi...

US Environmental Protection Agency (EPA), Center for Computational Toxicology and Exposure

This was a presentation I gave to an audience at Nature Publishing Group in New York on May 7th 2009. It's a long presentation, over an hour in length. Not much new here relative to other presentations... just a knitting together of many of the others on here.

There is an increasing availability of free and open access resources for scientists to use on the internet. Coupled with an increasing number of Open Source software programs, we are in the middle of a revolution in data availability and in tools to manipulate these data. ChemSpider is a free-access website built with the intention of providing a structure-centric community for chemists. As an aggregator of chemistry-related information from many sources (at present over 21.5 million unique chemical entities from over 190 separate data sources), ChemSpider has taken on the task of both robotically and manually integrating and curating publicly available data sources. ChemSpider has also provided an environment for users to deposit, curate and annotate chemistry-related information. This has allowed the community to enhance ChemSpider by adding analytical data, associating synthetic pathways and publications, and connecting to social networking resources. I will discuss how ChemSpider is fast becoming the premier curated platform and centralized hub for resourcing information about chemical entities, and how the platform provides the foundation data for services allowing the analysis of analytical data and collaborative science.

How the web has weaved a web of interlinked chemistry data final

US Environmental Protection Agency (EPA), Center for Computational Toxicology and Exposure

The internet has provided access to unprecedented quantities of data. In the domain of chemistry specifically, over the past decade the web has become populated with tens of millions of chemical structures and related properties of assays, together with tens of thousands of spectra and syntheses. The data have, to a large extent, remained disparate and disconnected. In recent years, with the wave of Web 2.0 participation, any chemist can contribute to both the sharing and validation of chemistry-related data, whether via Wikipedia, the online encyclopedia, or one of the multiple public compound databases. The presentation will offer a perspective on what is available today, our experiences of building a public compound database to link together the internet, and a suggested path forward for enabling even greater integration and connectivity of chemistry data for the masses to both use and participate in developing.

PubChem: a public chemical information resource for big data chemistry

Presented at the Joint Statistical Meetings (JSM) 2020 (virtual) on August 3, 2020.

==== Abstract ====

The idea of “big data” has recently been drawing much attention from the scientific community as well as the general public. An example of big data in Chemistry is the data contained in PubChem, which is a public database of chemical substance descriptions and their biological activities at the National Institutes of Health. PubChem is a sizeable system with 235 million depositor-provided substance descriptions, 96 million unique chemical structures, 1.1 million biological assays, and 268 million biological activity result outcomes. It also contains significant amounts of scientific research data and the inter-relationships between chemicals, proteins, genes, scientific literature, patents and more. PubChem resources have been used in many studies for developing bioactivity and toxicity prediction models, discovering multi-target ligands, and identifying new macromolecule targets of compounds (for drug-repurposing or off-target side effect prediction). This presentation provides an overview of how PubChem’s data, tools, and services can be used for bioassay data analysis and virtual screening (VS) and discusses important aspects of exploiting PubChem for drug discovery.

Serving the medicinal chemistry community with Royal Society of Chemistry che...

US Environmental Protection Agency (EPA), Center for Computational Toxicology and Exposure

The Royal Society of Chemistry (RSC) is a major participant in providing access to chemistry related data via the web. As an internationally renowned society for the chemical sciences, a scientific publisher and the host of the ChemSpider database for the community, RSC continues to make dramatic strides in providing online access to data. ChemSpider provides access to over 30 million chemicals sourced from over 500 data suppliers and linked out to related information on the web. The platform is a crowdsourcing environment whereby members of the community can participate in validating and expanding the content of the database. With a set of application programming interfaces ChemSpider is used by various organizations and projects to serve up data for various purposes. These include structure identification for mass spectrometry instrument vendors, RSC databases such as the Marinlit natural products database and a European grant-based project from the Innovative Medicines Initiative fund. This presentation will provide an overview of various cheminformatics activities and projects that RSC is involved with to serve the medicinal chemistry community. This will include the Open PHACTS semantic web project, the PharmaSea project to identify new pharmaceutical leads from the ocean and the UK National Compound Collection to identify new lead compounds contained within PhD theses.

Clinical Anatomy 9566

Workshop presented to graduate students in Clinical Anatomy 9566 at the University of Western Ontario on November 26, 2010.

Web services and the Development of Semantic Applications

More from petermurrayrust

Omdi2021 Ontologies for (Materials) Science in the Digital Age

A review of computable ontologies relevant to science and how they can help Materials Science in particular

Open Science Principles and Practice

Talk to Indian National Young Academy of Scientists on Open Sciuence. Emphasis on Open Notebook and Data Science, mining data from journals

Can machines understand the scientific literature?

A presentation to Cambridge MPhil Computational Biology. 2020-11-11 . Presenters Peter Murray-Rust, Shweata Hegde and Ambreen Hamadani from https://github.com/petermr/openvirus .

This chunk is PMR with a large break in the middle for SH and AH talks.

I cover Global Challenges, knowledge equity, semantics of scientific articles, Wikidata, Data Extraction from images, and ethics/politics.

Answer: Yes, technically. No, politically as the Publisher-Academic Complex will block it.

OpenVirus at OpenPublishingFest

Semantic content created from Open Access papers to help in the fight against viral epidemics. Includes contributions from NIPGR interns, 5 supported by Indian National Young Academy of Scientists.

Open Virus Indian Presentation

Overview of openVirus project. Interns in India have worked for 2 months to extract scientific knowledge from the literature about viral epidemics. Covers data science, machine learning and virtual collaboration

Automatic mining of data from materials science literature

The literature on materials science (batteries, etc.) contains huge amounts of scientific facts, but not in easily accessible form. our AMI program has been developed to automatically:

scrape , clean, annotate and display/publish

data for re-use in science.

Examples will be given from electrochemistry, magnetism and other fields . The general principles and (open) tech are applicable to many other disciplines.

Climate Change and Human Migration

A presentation by Open Climate Knowledge for European Forum for Advanced Practices. Showing how the scientific literature can be searched for knowledge on this multidisciplinary topic.

openVirus - tools for discovering literature on viruses

Open tools for scraping scientific articles from the scientific literature on a high-throughput

XML for science; its huge potential; but are pubiishers preventing it?

XML can represent almost all well derfined scientific objects. chemistry, plants medcine. But it's not yet widely used. Is this because publishers oppose thr re-use of science?

Early Career Reseachers in Science. Start Early, Be Open , Be Brave

Highlights the importance of supporting Early Career Researchers to pursue their own ideas, possibly alongside their main research. Illustrated with biology but applies to all fields of science. This was a 14 min presentation and shows narratives of how ECRs develop and reinforce each other.

Early Career Reseachers and Open Healthcare

Presentation given at NUI, Galway 2019-04-11 for Open Science Week.

An overview of Early Career Researchers, their innovation and contribution towards Open Infrastructure

Rapid biomedical search

The ContentMine system (Open Source) can search EuropePMC and download hundreds of articles in seconds. These can be indexed by AMI dictionaries allowing a rapid evaluations and refinement of the search

Scientific search for everyone

The scientific and medical literature is a vast resource of knowledge, but it needs turning into semantic FAIR form. The ContentMine can do this and we presented a rapid overview of the potential

Openplant2018 Poster; Semantic searching

A poster presented at OpenPLant 2018 showing how ContentMine dictionaries can enhance the precision and power of search (in this case plants)

Extracting science from the archive

A 10-minute talk to lovers of early science (e.g. 1600-1900) at the Royal Society. Archivists , computer vision, scientific historical metadata all relevant.

I chose 4 examples of monochrome diagrams that I can extract something from automatically. Some of the methids would scale to larger volumes , e.g. tables for figures, or maps with points

WikiFactMine: Ontology for Everybody and Everything

WikiFactMine https://www.wikidata.org/wiki/Wikidata:WikiFactMine consists of several hundreds dictionaries created from Wikidata. They cover everything from science to medicine to geo to arts. Every item has a unique identifier (Q) and normally has several properties (P) creating a series of triples. Using SPARQL it's possible to create sophiticated queries and run them in seconds

Disrupting the Publisher-Academic Complex

The Publisher -Academic complex is a dystopian cycle where academia gives (mega)publishers manuscripts, reviews and money and the publishers give personal and institutional glory(vanity). This is analysed in its origins, impact and harm. The disruption can come from Advocacy/Activism, Community and Tools. Disruption comes from doing things Better or Novel, not Prices

AUDIO : https://soundcloud.com/damahub/peter-murray-rust-disturbing-the-publisher-academic-complex-210418-british-library

Thanks to DaMaHub

This has now been edited by Ewan McAndrew (Edinburgh Wikimedian in Residence) many thanks - to synchronize the slides with the soundtrack. https://media.ed.ac.uk/media/1_46h85ltt Brilliant

Paradise Lost and The Right to Read is the Right to Mine

Presented to UIUC CIRSS seminars to a mixed group of Library, CS, domain scientists with a great contingent of Early Career Researchers. Starts by honouring the creation of the wonderful NCSA Mosaic at UIUC in 1993 and the paradise of knowledge and community it opened. Then shows the gradual and tragic decline of the web into a megacorporate neocolonialist empire, where knowledge is sacrificed for money and power.

You have seen many of the slides before but the words are different and have been recorded.

Young people in an Age of Knowledge Neocolonialism

Knowledge is being ruthlessly enclosed by megacorporations.

More from petermurrayrust (20)

Omdi2021 Ontologies for (Materials) Science in the Digital Age

Omdi2021 Ontologies for (Materials) Science in the Digital Age

Can machines understand the scientific literature?

Can machines understand the scientific literature?

Automatic mining of data from materials science literature

Automatic mining of data from materials science literature

openVirus - tools for discovering literature on viruses

openVirus - tools for discovering literature on viruses

XML for science; its huge potential; but are pubiishers preventing it?

XML for science; its huge potential; but are pubiishers preventing it?

Early Career Reseachers in Science. Start Early, Be Open , Be Brave

Early Career Reseachers in Science. Start Early, Be Open , Be Brave

WikiFactMine: Ontology for Everybody and Everything

WikiFactMine: Ontology for Everybody and Everything

Paradise Lost and The Right to Read is the Right to Mine

Paradise Lost and The Right to Read is the Right to Mine

Young people in an Age of Knowledge Neocolonialism

Young people in an Age of Knowledge Neocolonialism

Recently uploaded

The Normal Electrocardiogram - Part I of II

These lecture slides, by Dr Sidra Arshad, offer a quick overview of physiological basis of a normal electrocardiogram.

Learning objectives:

1. Define an electrocardiogram (ECG) and electrocardiography

2. Describe how dipoles generated by the heart produce the waveforms of the ECG

3. Describe the components of a normal electrocardiogram of a typical bipolar leads (limb II)

4. Differentiate between intervals and segments

5. Enlist some common indications for obtaining an ECG

Study Resources:

1. Chapter 11, Guyton and Hall Textbook of Medical Physiology, 14th edition

2. Chapter 9, Human Physiology - From Cells to Systems, Lauralee Sherwood, 9th edition

3. Chapter 29, Ganong’s Review of Medical Physiology, 26th edition

4. Electrocardiogram, StatPearls - https://www.ncbi.nlm.nih.gov/books/NBK549803/

5. ECG in Medical Practice by ABM Abdullah, 4th edition

6. ECG Basics, http://www.nataliescasebook.com/tag/e-c-g-basics

New Directions in Targeted Therapeutic Approaches for Older Adults With Mantl...

i3 Health is pleased to make the speaker slides from this activity available for use as a non-accredited self-study or teaching resource.

This slide deck presented by Dr. Kami Maddocks, Professor-Clinical in the Division of Hematology and

Associate Division Director for Ambulatory Operations

The Ohio State University Comprehensive Cancer Center, will provide insight into new directions in targeted therapeutic approaches for older adults with mantle cell lymphoma.

STATEMENT OF NEED

Mantle cell lymphoma (MCL) is a rare, aggressive B-cell non-Hodgkin lymphoma (NHL) accounting for 5% to 7% of all lymphomas. Its prognosis ranges from indolent disease that does not require treatment for years to very aggressive disease, which is associated with poor survival (Silkenstedt et al, 2021). Typically, MCL is diagnosed at advanced stage and in older patients who cannot tolerate intensive therapy (NCCN, 2022). Although recent advances have slightly increased remission rates, recurrence and relapse remain very common, leading to a median overall survival between 3 and 6 years (LLS, 2021). Though there are several effective options, progress is still needed towards establishing an accepted frontline approach for MCL (Castellino et al, 2022). Treatment selection and management of MCL are complicated by the heterogeneity of prognosis, advanced age and comorbidities of patients, and lack of an established standard approach for treatment, making it vital that clinicians be familiar with the latest research and advances in this area. In this activity chaired by Michael Wang, MD, Professor in the Department of Lymphoma & Myeloma at MD Anderson Cancer Center, expert faculty will discuss prognostic factors informing treatment, the promising results of recent trials in new therapeutic approaches, and the implications of treatment resistance in therapeutic selection for MCL.

Target Audience

Hematology/oncology fellows, attending faculty, and other health care professionals involved in the treatment of patients with mantle cell lymphoma (MCL).

Learning Objectives

1.) Identify clinical and biological prognostic factors that can guide treatment decision making for older adults with MCL

2.) Evaluate emerging data on targeted therapeutic approaches for treatment-naive and relapsed/refractory MCL and their applicability to older adults

3.) Assess mechanisms of resistance to targeted therapies for MCL and their implications for treatment selection

ACUTE SCROTUM.....pdf. ACUTE SCROTAL CONDITIOND

Acute scrotum is a general term referring to an emergency condition affecting the contents or the wall of the scrotum.

There are a number of conditions that present acutely, predominantly with pain and/or swelling

A careful and detailed history and examination, and in some cases, investigations allow differentiation between these diagnoses. A prompt diagnosis is essential as the patient may require urgent surgical intervention

Testicular torsion refers to twisting of the spermatic cord, causing ischaemia of the testicle.

Testicular torsion results from inadequate fixation of the testis to the tunica vaginalis producing ischemia from reduced arterial inflow and venous outflow obstruction.

The prevalence of testicular torsion in adult patients hospitalized with acute scrotal pain is approximately 25 to 50 percent

Phone Us ❤85270-49040❤ #ℂall #gIRLS In Surat By Surat @ℂall @Girls Hotel With...

Phone Us ❤85270-49040❤ #ℂall #gIRLS In Surat By Surat @ℂall @Girls Hotel With 100% Satisfaction

Alcohol_Dr. Jeenal Mistry MD Pharmacology.pdf

Ethanol (CH3CH2OH), or beverage alcohol, is a two-carbon alcohol

that is rapidly distributed in the body and brain. Ethanol alters many

neurochemical systems and has rewarding and addictive properties. It

is the oldest recreational drug and likely contributes to more morbidity,

mortality, and public health costs than all illicit drugs combined. The

5th edition of the Diagnostic and Statistical Manual of Mental Disorders

(DSM-5) integrates alcohol abuse and alcohol dependence into a single

disorder called alcohol use disorder (AUD), with mild, moderate,

and severe subclassifications (American Psychiatric Association, 2013).

In the DSM-5, all types of substance abuse and dependence have been

combined into a single substance use disorder (SUD) on a continuum

from mild to severe. A diagnosis of AUD requires that at least two of

the 11 DSM-5 behaviors be present within a 12-month period (mild

AUD: 2–3 criteria; moderate AUD: 4–5 criteria; severe AUD: 6–11 criteria).

The four main behavioral effects of AUD are impaired control over

drinking, negative social consequences, risky use, and altered physiological

effects (tolerance, withdrawal). This chapter presents an overview

of the prevalence and harmful consequences of AUD in the U.S.,

the systemic nature of the disease, neurocircuitry and stages of AUD,

comorbidities, fetal alcohol spectrum disorders, genetic risk factors, and

pharmacotherapies for AUD.

KDIGO 2024 guidelines for diabetologists

KDIGO guidelines 2024 for evaluation and management of CKD, related to diabetes and management of diabetic kidney disease

ANATOMY AND PHYSIOLOGY OF URINARY SYSTEM.pptx

Valuable Content of Human Anatomy and Physiology of Urinary system as per PCI Syllabus for Pharmacy and PharmD Students.

Lung Cancer: Artificial Intelligence, Synergetics, Complex System Analysis, S...

RESULTS: Overall life span (LS) was 2252.1±1742.5 days and cumulative 5-year survival (5YS) reached 73.2%, 10 years – 64.8%, 20 years – 42.5%. 513 LCP lived more than 5 years (LS=3124.6±1525.6 days), 148 LCP – more than 10 years (LS=5054.4±1504.1 days).199 LCP died because of LC (LS=562.7±374.5 days). 5YS of LCP after bi/lobectomies was significantly superior in comparison with LCP after pneumonectomies (78.1% vs.63.7%, P=0.00001 by log-rank test). AT significantly improved 5YS (66.3% vs. 34.8%) (P=0.00000 by log-rank test) only for LCP with N1-2. Cox modeling displayed that 5YS of LCP significantly depended on: phase transition (PT) early-invasive LC in terms of synergetics, PT N0—N12, cell ratio factors (ratio between cancer cells- CC and blood cells subpopulations), G1-3, histology, glucose, AT, blood cell circuit, prothrombin index, heparin tolerance, recalcification time (P=0.000-0.038). Neural networks, genetic algorithm selection and bootstrap simulation revealed relationships between 5YS and PT early-invasive LC (rank=1), PT N0—N12 (rank=2), thrombocytes/CC (3), erythrocytes/CC (4), eosinophils/CC (5), healthy cells/CC (6), lymphocytes/CC (7), segmented neutrophils/CC (8), stick neutrophils/CC (9), monocytes/CC (10); leucocytes/CC (11). Correct prediction of 5YS was 100% by neural networks computing (area under ROC curve=1.0; error=0.0).

CONCLUSIONS: 5YS of LCP after radical procedures significantly depended on: 1) PT early-invasive cancer; 2) PT N0--N12; 3) cell ratio factors; 4) blood cell circuit; 5) biochemical factors; 6) hemostasis system; 7) AT; 8) LC characteristics; 9) LC cell dynamics; 10) surgery type: lobectomy/pneumonectomy; 11) anthropometric data. Optimal diagnosis and treatment strategies for LC are: 1) screening and early detection of LC; 2) availability of experienced thoracic surgeons because of complexity of radical procedures; 3) aggressive en block surgery and adequate lymph node dissection for completeness; 4) precise prediction; 5) adjuvant chemoimmunoradiotherapy for LCP with unfavorable prognosis.

micro teaching on communication m.sc nursing.pdf

Microteaching is a unique model of practice teaching. It is a viable instrument for the. desired change in the teaching behavior or the behavior potential which, in specified types of real. classroom situations, tends to facilitate the achievement of specified types of objectives.

How STIs Influence the Development of Pelvic Inflammatory Disease.pptx

STIs may cause PID. For the both disease, herbal medicine Fuyan Pill can be a solution.

HOT NEW PRODUCT! BIG SALES FAST SHIPPING NOW FROM CHINA!! EU KU DB BK substit...

Contact us if you are interested:

Email / Skype : kefaya1771@gmail.com

Threema: PXHY5PDH

New BATCH Ku !!! MUCH IN DEMAND FAST SALE EVERY BATCH HAPPY GOOD EFFECT BIG BATCH !

Contact me on Threema or skype to start big business!!

Hot-sale products:

NEW HOT EUTYLONE WHITE CRYSTAL!!

5cl-adba precursor (semi finished )

5cl-adba raw materials

ADBB precursor (semi finished )

ADBB raw materials

APVP powder

5fadb/4f-adb

Jwh018 / Jwh210

Eutylone crystal

Protonitazene (hydrochloride) CAS: 119276-01-6

Flubrotizolam CAS: 57801-95-3

Metonitazene CAS: 14680-51-4

Payment terms: Western Union,MoneyGram,Bitcoin or USDT.

Deliver Time: Usually 7-15days

Shipping method: FedEx, TNT, DHL,UPS etc.Our deliveries are 100% safe, fast, reliable and discreet.

Samples will be sent for your evaluation!If you are interested in, please contact me, let's talk details.

We specializes in exporting high quality Research chemical, medical intermediate, Pharmaceutical chemicals and so on. Products are exported to USA, Canada, France, Korea, Japan,Russia, Southeast Asia and other countries.

Evaluation of antidepressant activity of clitoris ternatea in animals

Evaluation of antidepressant activity of clitoris ternatea in animals

ARTIFICIAL INTELLIGENCE IN HEALTHCARE.pdf

Artificial intelligence (AI) refers to the simulation of human intelligence processes by machines, especially computer systems. It encompasses tasks such as learning, reasoning, problem-solving, perception, and language understanding. AI technologies are revolutionizing various fields, from healthcare to finance, by enabling machines to perform tasks that typically require human intelligence.

ARTHROLOGY PPT NCISM SYLLABUS AYURVEDA STUDENTS

PPT RELATED TO ARTHROLOGY ACCORDING TO NCISM AYURVEDA

Knee anatomy and clinical tests 2024.pdf

This includes all relevant anatomy and clinical tests compiled from standard textbooks, Campbell,netter etc..It is comprehensive and best suited for orthopaedicians and orthopaedic residents.

Pulmonary Thromboembolism - etilogy, types, medical- Surgical and nursing man...

Disruption of blood supply to lung alveoli due to blockage of one or more pulmonary blood vessels is called as Pulmonary thromboembolism. In this presentation we will discuss its causes, types and its management in depth.

Recently uploaded (20)

New Directions in Targeted Therapeutic Approaches for Older Adults With Mantl...

New Directions in Targeted Therapeutic Approaches for Older Adults With Mantl...

Phone Us ❤85270-49040❤ #ℂall #gIRLS In Surat By Surat @ℂall @Girls Hotel With...

Phone Us ❤85270-49040❤ #ℂall #gIRLS In Surat By Surat @ℂall @Girls Hotel With...

Lung Cancer: Artificial Intelligence, Synergetics, Complex System Analysis, S...

Lung Cancer: Artificial Intelligence, Synergetics, Complex System Analysis, S...

How STIs Influence the Development of Pelvic Inflammatory Disease.pptx

How STIs Influence the Development of Pelvic Inflammatory Disease.pptx

HOT NEW PRODUCT! BIG SALES FAST SHIPPING NOW FROM CHINA!! EU KU DB BK substit...

HOT NEW PRODUCT! BIG SALES FAST SHIPPING NOW FROM CHINA!! EU KU DB BK substit...

Evaluation of antidepressant activity of clitoris ternatea in animals

Evaluation of antidepressant activity of clitoris ternatea in animals

Maxilla, Mandible & Hyoid Bone & Clinical Correlations by Dr. RIG.pptx

Maxilla, Mandible & Hyoid Bone & Clinical Correlations by Dr. RIG.pptx

Pulmonary Thromboembolism - etilogy, types, medical- Surgical and nursing man...

Pulmonary Thromboembolism - etilogy, types, medical- Surgical and nursing man...

Cochrane workshop 2016

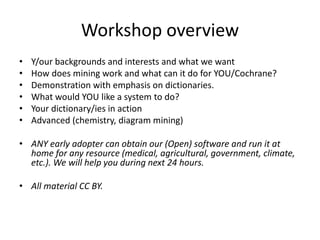

- 1. Workshop overview
  - Your and our backgrounds and interests, and what we want
  - How does mining work, and what can it do for YOU/Cochrane?
  - Demonstration, with emphasis on dictionaries
  - What would YOU like a system to do?
  - Your dictionary/ies in action
  - Advanced topics (chemistry, diagram mining)
  - ANY early adopter can obtain our (Open) software and run it at home for any resource (medical, agricultural, government, climate, etc.). We will help you during the next 24 hours.
  - All material is CC BY.

- 2. Let the Machine Help with your Systematic Reviews. Cochrane UK & Ireland Symposium 2016, Birmingham, UK, 2016-03-15. Peter Murray-Rust (1, 2) and Christopher Kittel (2); (1) University of Cambridge, (2) The ContentMine. Simple, Universal, Knowledge creation and re-use.

- 3. “The Right to Read is the Right to Mine” (Peter Murray-Rust, 2011). http://contentmine.org

- 4. Resources
  - Europe PubMed Central: http://europepmc.org/
  - ContentMine toolkit: https://github.com/ContentMine/
  - Wikidata: https://www.wikidata.org/wiki/Wikidata:Main_Page
  - Hypothes.is: https://hypothes.is/ (not used in the Birmingham Cochrane workshop)
  - Etherpad: http://pads.cottagelabs.com/p/cochrane2016
  - Note: early adopters can obtain our (Open) software and run it at home…

- 7. [Diagram] CONTENTMINE: Complete OPEN Platform for Mining Scientific Literature. Sources are a daily crawl of Europe PMC, arXiv, CORE, HAL and university repositories, ToC services, and publisher sites (PLOS ONE, BMC, PeerJ, …; Nature, IEEE, Elsevier, …). getpapers runs queries and quickscrape runs scrapers against URLs and DOIs to build a catalogue; the daily crawl covers about 30,000 pages/day. Input formats are PDF, HTML, DOC, ePUB, TeX, XML, PNG, EPS, CSV and XLS. norma (normalizer, structurer) converts them into Semantic ScholarlyHTML with sections (abstract, methods, references, captioned figures, HTML tables). ami (semantic tagger with dictionaries and community plugins: Chem, Phylo, Trials, Crystal, Plants) extracts facts from text, data and figures for search, lookup, visualization and analysis.

- 9. [Diagram] Mining with sections and dictionaries: each document is split into sections (abstract, methods, references, captioned figures, HTML tables). Dictionaries (Dict A, Dict B) are applied to the sections, including image captions and table captions. Results can be published as annotations [W3C Annotation / https://hypothes.is/].
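Applying dictionaries to sections, as in slide 9, can be sketched in a few lines. This is a minimal illustration, not ContentMine's ami code. It assumes each section is already available as plain text. The `tag_sections` helper, the section names and the input shapes are all hypothetical.

```python
import re
from collections import defaultdict


def tag_sections(sections, dictionaries):
    """Return {section: {dictionary name: sorted matched terms}}.

    sections: hypothetical mapping of section name -> plain text
    dictionaries: mapping of dictionary name -> iterable of terms
    """
    results = {}
    for section, text in sections.items():
        hits = defaultdict(set)
        for dict_name, terms in dictionaries.items():
            for term in terms:
                # Case-insensitive whole-word match; re.escape protects terms like "1q21.1"
                pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
                if re.search(pattern, text, flags=re.IGNORECASE):
                    hits[dict_name].add(term)
        results[section] = {name: sorted(found) for name, found in hits.items()}
    return results
```

Keeping results per section lets a reviewer weigh a hit in `methods` differently from the same term in `references`. The whole-word guard stops short terms from matching inside longer words.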

- 10. Disease Dictionary (ICD-10), from the Wikidata ontology for disease
  - Excerpt: `<dictionary title="disease"> <entry term="1p36 deletion syndrome"/> <entry term="1q21.1 deletion syndrome"/> <entry term="1q21.1 duplication syndrome"/> <entry term="3-methylglutaconic aciduria"/> <entry term="3mc syndrome"/> <entry term="corpus luteum cyst"/> <entry term="cortical blindness"/>`
  - SPARQL query: `SELECT DISTINCT ?thingLabel WHERE { ?thing wdt:P494 ?wd . ?thing wdt:P279 wd:Q12136 . SERVICE wikibase:label { bd:serviceParam wikibase:language "en" } }`
  - wdt:P494 = ICD-10 identifier (P494); wdt:P279 = subclass of; wd:Q12136 = disease (Q12136), an abnormal condition that affects the body of an organism
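Below is a sketch of turning the slide 10 query results into a dictionary file. It assumes the results arrive in the standard SPARQL 1.1 JSON results format, for example from the Wikidata Query Service endpoint https://query.wikidata.org/sparql. The `bindings_to_dictionary` helper is hypothetical, and lower-casing the labels is an assumption based on the lower-case entries in the excerpt. This is not necessarily how the ContentMine dictionaries were generated.

```python
import xml.etree.ElementTree as ET

# The query from slide 10, to be sent to the Wikidata Query Service
DISEASE_QUERY = """
SELECT DISTINCT ?thingLabel WHERE {
  ?thing wdt:P494 ?wd .
  ?thing wdt:P279 wd:Q12136 .
  SERVICE wikibase:label { bd:serviceParam wikibase:language "en" }
}
"""


def bindings_to_dictionary(sparql_json, title, var="thingLabel"):
    """Build a <dictionary> of <entry term=.../> elements from SPARQL JSON results."""
    terms = set()
    for row in sparql_json["results"]["bindings"]:
        if var in row:
            # Lower-casing (and thereby de-duplicating) labels is an assumption
            terms.add(row[var]["value"].lower())
    root = ET.Element("dictionary", title=title)
    for term in sorted(terms):
        ET.SubElement(root, "entry", term=term)
    return ET.tostring(root, encoding="unicode")
```

Using a set merges labels that differ only in case, which `SELECT DISTINCT` alone does not do.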

- 11. DRUGS dictionary (inn.xml)
  - Sources: ChEBI (chemicals at EBI), ftp://ftp.ebi.ac.uk/pub/databases/chebi/Flat_file_tab_delimited/names_3star.tsv.gz, combined with Wikidata: World Health Organisation International Nonproprietary Name (P2275)
  - Result: 4947 items in the dictionary
  - Excerpt: `<dictionary title="inn"> <entry term="(r)-fenfluramine"/> <entry term="abacavir"/> <entry term="abafungin"/> <entry term="abafungina"/> <entry term="abafungine"/> <entry term="abafunginum"/> <entry term="abamectin"/> <entry term="abarelix"/> <entry term="abatacept"/>`

- 12. Funders Dictionary (11566 entries)
  - Source: Crossref Funder Registry, http://help.crossref.org/funder-registry
  - Excerpt: `<dictionary title="funders"> <!-- from http://help.crossref.org/funder-registry with thanks --> <entry id="http://dx.doi.org/10.13039/100001436" term="1675 Foundation"/> <entry id="http://dx.doi.org/10.13039/100004343" term="3M"/> <entry id="http://dx.doi.org/10.13039/501100005957" term="8020 Promotion Foundation"/> <entry id="http://dx.doi.org/10.13039/501100007139" term="A Richer Life Foundation"/> <entry id="http://dx.doi.org/10.13039/100006543" term="A World Celiac Community Foundation"/> <entry id="http://dx.doi.org/10.13039/100001962" term="A-T Children's Project"/> <entry id="http://dx.doi.org/10.13039/100008456" term="A. Alfred Taubman Medical Research Institute"/>`
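Because the funder entries carry an `id` (a Funder Registry DOI), a match can be linked straight to an identifier. The sketch below is a hypothetical loader, not ContentMine's. It uses straight quotes and a well-formed `<!-- -->` comment, because an XML parser rejects typographic quotes in attributes and a comment opened with `<!—`. Matching is a plain substring test for brevity.

```python
import xml.etree.ElementTree as ET

FUNDERS_XML = """<dictionary title="funders">
  <!-- from http://help.crossref.org/funder-registry with thanks -->
  <entry id="http://dx.doi.org/10.13039/100004343" term="3M"/>
  <entry id="http://dx.doi.org/10.13039/100001962" term="A-T Children's Project"/>
</dictionary>"""


def load_dictionary(xml_text):
    """Map each entry's term to its id (None when an entry has no id)."""
    root = ET.fromstring(xml_text)  # the default parser drops XML comments
    return {e.get("term"): e.get("id") for e in root.iter("entry")}


def link_mentions(text, term_to_id):
    """Return sorted (term, id) pairs for terms found verbatim in text.

    Plain substring test: "3M" would also match inside "3MB"; a real tagger
    would respect word boundaries.
    """
    return sorted((t, i) for t, i in term_to_id.items() if t in text)
```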

- 13. Dengue Mosquito

- 14. Genera from NCBI TaxDump: `<dictionary name="genus"> <entry term="Aa"/> <entry term="Aaaba"/> <entry term="Aacanthocnema"/> <entry term="Aaosphaeria"/> <entry term="Aaptos"/> <entry term="Aaptosyax"/> <entry term="Aaroniella"/> <entry term="Aaronsohnia"/> <entry term="Abablemma"/>`

- 15. Human Genes (HGNC): `<dictionary title="hgnc"> <entry term="A1BG" name="alpha-1-B glycoprotein"/> <entry term="A1BG-AS1" name="A1BG antisense RNA 1"/> <entry term="A1CF" name="APOBEC1 complementation factor"/> <entry term="A2M" name="alpha-2-macroglobulin"/> <entry term="A2M-AS1" name="A2M antisense RNA 1 (head to head)"/> <entry term="A2ML1" name="alpha-2-macroglobulin-like 1"/> <entry term="A2ML1-AS1" name="A2ML1 antisense RNA 1"/>`

- 16. Mouse genes (JAXson): `<entry term="Aaas" name="achalasia, adrenocortical insufficiency, alacrimia"/> <entry term="Aacs" name="acetoacetyl-CoA synthetase"/> <entry term="Aadac" name="arylacetamide deacetylase (esterase)"/> <entry term="Aadacl2" name="arylacetamide deacetylase-like 2"/> <entry term="Aadacl3" name="arylacetamide deacetylase-like 3"/> <entry term="Aadat" name="aminoadipate aminotransferase"/> <entry term="Aaed1" name="AhpC/TSA antioxidant enzyme domain containing 1"/> <entry term="Aagab" name="alpha- and gamma-adaptin binding protein"/> <entry term="Aak1" name="AP2 associated kinase 1"/> <entry term="Aamdc" name="adipogenesis associated Mth938 domain containing"/> <entry term="Aamp" name="angio-associated migratory protein"/>`
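The genus and gene dictionaries on slides 14 to 16 show why letter case matters. Human gene symbols (HGNC) are upper case, as in `A2M`. Mouse symbols start with a capital followed by lower case, as in `Aak1`. Short genus names such as `Aa` collide with ordinary abbreviations once case is ignored. The helper and example sentence below are hypothetical. The term sets are excerpts from the slides.

```python
import re

HUMAN = {"A1BG", "A2M", "A2ML1"}  # excerpt of the HGNC dictionary (slide 15)
MOUSE = {"Aaas", "Aacs", "Aak1"}  # excerpt of the mouse gene dictionary (slide 16)
GENUS = {"Aa", "Aaptos"}          # excerpt of the NCBI genus dictionary (slide 14)


def find_terms(text, terms, case_sensitive=True):
    """Return the set of terms that occur in text as whole words."""
    flags = 0 if case_sensitive else re.IGNORECASE
    found = set()
    for term in terms:
        if re.search(r"(?<!\w)" + re.escape(term) + r"(?!\w)", text, flags):
            found.add(term)
    return found


text = "Plasma A2M and AA levels were compared with Aak1 in mice."
# Case-sensitive matching separates human and mouse symbols and ignores "AA";
# case-insensitive matching wrongly reports the genus "Aa" for the abbreviation "AA".
```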

- 17. Ebola!

- 18. Terms co-occurring with “Zika”: `<dictionary title="tropicalVirus"> <entry term="ZIKV" name="Zika virus"/> <entry term="Zika" name="Zika virus"/> <entry term="DENV" name="Dengue virus"/> <entry term="Dengue" name="Dengue virus"/> <entry term="CHIKV" name="Chikungunya virus"/> <entry term="Chikungunya" name="Chikungunya virus"/> <entry term="WNV" name="West Nile virus"/> <entry term="West Nile" name="West Nile virus"/> <entry term="YFV" name="Yellow fever virus"/> <entry term="Yellow fever" name="Yellow fever virus"/> <entry term="HPV" name="Human papilloma virus"/> <entry term="Human papilloma virus" name="Human papilloma virus"/> </dictionary>`
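In the tropicalVirus dictionary, several `term`s (for example `ZIKV` and `Zika`) share one `name`. Mentions can therefore be counted per virus rather than per spelling. The sketch below is a hedged illustration: `synonym_map` and `count_canonical` are hypothetical helpers, and the input is a subset of the slide's entries.

```python
import re
import xml.etree.ElementTree as ET
from collections import Counter

VIRUS_XML = """<dictionary title="tropicalVirus">
  <entry term="ZIKV" name="Zika virus"/>
  <entry term="Zika" name="Zika virus"/>
  <entry term="DENV" name="Dengue virus"/>
  <entry term="Dengue" name="Dengue virus"/>
  <entry term="CHIKV" name="Chikungunya virus"/>
  <entry term="Chikungunya" name="Chikungunya virus"/>
</dictionary>"""


def synonym_map(xml_text):
    """Map each surface term to its canonical name."""
    root = ET.fromstring(xml_text)
    return {e.get("term"): e.get("name") for e in root.iter("entry")}


def count_canonical(text, synonyms):
    """Count whole-word, case-sensitive mentions, grouped by canonical name."""
    counts = Counter()
    for term, name in synonyms.items():
        counts[name] += len(re.findall(r"(?<!\w)" + re.escape(term) + r"(?!\w)", text))
    return +counts  # unary plus drops names with zero mentions
```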

- 19. Terms lexically related to “meta-analysis”: `<dictionary title="cochrane"> <entry term="Cochrane Library"/> <entry term="Cochrane Reviews"/> <entry term="Cochrane Central Register of Controlled Trials"/> <entry term="Cochrane"/> <entry term="randomize"/> <entry term="meta-analysis"/> <entry term="Embase"/> <entry term="MEDLINE"/> <entry term="eligibility"/> <entry term="exclusion"/> <entry term="outcome"/> <entry term="Review Manager"/> <entry term="STATA"/> <entry term="RCT"/> </dictionary>`

- 20. Mining strategy
  - Discover and negotiate permissions => bibliography
  - Crawl / scrape (download) documents AND supplemental material
  - Normalize: PDF => XML
  - Index: facets => facts and snippets (“entities”)
  - Interpret/analyze entities => relationships, aggregations (“transformative”)
  - Publish
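The first step, "Discover => bibliography", can be sketched against the public Europe PMC REST search service. This is not how getpapers is implemented. The parameter names (`query`, `format`, `pageSize`, `cursorMark`) and response fields (`resultList.result`, `pmcid`, `id`, `title`) reflect my reading of that service and should be checked against its documentation. The helper names are hypothetical.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

SEARCH_URL = "https://www.ebi.ac.uk/europepmc/webservices/rest/search"


def search_url(query, page_size=25, cursor="*"):
    """Build a search URL; "*" requests the first page of a cursor-paged search."""
    params = {"query": query, "format": "json", "pageSize": page_size, "cursorMark": cursor}
    return SEARCH_URL + "?" + urlencode(params)


def bibliography(response):
    """Reduce a parsed JSON response to (identifier, title) records."""
    records = []
    for hit in response.get("resultList", {}).get("result", []):
        # Prefer the PMC identifier (full text may be available), else the record id
        records.append((hit.get("pmcid") or hit.get("id"), hit.get("title")))
    return records


def fetch(query, page_size=25):
    """Run one search over the network and return its bibliography."""
    with urlopen(search_url(query, page_size)) as resp:
        return bibliography(json.load(resp))
```

Keeping URL building and response parsing separate from the network call means both can be tested offline.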

- 21. [Diagram] The ContentMine platform diagram from slide 7, repeated.

- 22. Demo: PMR runs getpapers and ami; Chris runs a Python visualization of drug co-occurrence.
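The demo visualization itself is not reproduced here. As a hedged sketch of the data such a plot needs, the code below counts how many documents mention each pair of drugs. It assumes the dictionary hits per document are already known. The document ids are hypothetical, and the drug names come from the INN dictionary excerpt on slide 11. The resulting counts could feed a heatmap or network plot.

```python
from collections import Counter
from itertools import combinations


def cooccurrence(doc_terms):
    """Count the documents that mention each unordered pair of terms.

    doc_terms: mapping of document id -> iterable of dictionary terms found in it
    """
    pairs = Counter()
    for terms in doc_terms.values():
        # set() counts each document at most once per pair; sorted() fixes pair order
        for a, b in combinations(sorted(set(terms)), 2):
            pairs[(a, b)] += 1
    return pairs


docs = {
    "PMC1": ["abacavir", "abatacept"],
    "PMC2": ["abacavir", "abatacept", "abarelix"],
    "PMC3": ["abarelix"],
}
```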

- 23. Systematic Reviews. Can we:
  - eliminate true negatives automatically?
  - extract data from formulaic language?
  - mine diagrams?
  - annotate existing sources?
  - forward-reference clinical trials?
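"Formulaic language" in trial reports often takes predictable shapes, such as sample sizes written as "n = 602" or "1,204 participants were randomised". The two regular expressions below are hypothetical patterns for just these phrasings, offered as a sketch. Real reports vary far more, and any extraction like this needs human checking.

```python
import re

# Hypothetical patterns for two common phrasings of sample size
N_EQUALS = re.compile(r"\b[nN]\s*=\s*(\d[\d,]*)")
RANDOMIZED = re.compile(
    r"\b(\d[\d,]*)\s+(?:patients|participants|subjects|women|men|children)"
    r"\s+were\s+randomi[sz]ed",
    re.IGNORECASE,
)


def sample_sizes(text):
    """Return integers from 'n = 123' phrases, then from '123 participants were randomized'."""
    values = [m.group(1) for m in N_EQUALS.finditer(text)]
    values += [m.group(1) for m in RANDOMIZED.finditer(text)]
    # Strip thousands separators (and any trailing comma) before converting
    return [int(v.replace(",", "")) for v in values]
```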

- 24. Polly has 20 seconds to read this paper… …and 10,000 more

- 25. ContentMine software can do this in a few minutes. Polly: “there were 10,000 abstracts and due to time pressures, we split this between 6 researchers. It took about 2-3 days of work (working only on this) to get through ~1,600 papers each. So, at a minimum this equates to 12 days of full-time work (and would normally be done over several weeks under normal time pressures).”
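The figures in Polly's account can be checked directly. The per-person share and the minimum effort both follow from her numbers, using the lower bound of 2 days per researcher:

$$
\frac{10\,000 \text{ abstracts}}{6 \text{ researchers}} \approx 1\,667 \text{ abstracts each}, \qquad 6 \times 2 \text{ days} = 12 \text{ person-days}, \qquad \frac{10\,000}{12} \approx 833 \text{ abstracts per person-day}.
$$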

- 26. 400,000 clinical trials in 10 government registries. Mapping trials => papers: http://www.trialsjournal.com/content/16/1/80 (2009 => 2015). What has happened in the last 6 years? Search the whole scientific literature for “2009-0100068-41”.
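Searching full text for trial identifiers is mostly pattern matching. The sketch below assumes two registry formats: ClinicalTrials.gov (`NCT` plus 8 digits) and EudraCT (`YYYY-NNNNNN-NN`). The identifier on the slide has seven digits in its middle block and does not fit the EudraCT shape, so the sketch also searches for caller-supplied identifiers literally. The helper name and the example identifiers other than the slide's are hypothetical.

```python
import re

PATTERNS = {
    "NCT": re.compile(r"\bNCT\d{8}\b"),                 # ClinicalTrials.gov
    "EudraCT": re.compile(r"\b\d{4}-\d{6}-\d{2}\b"),    # year-sequence-check digits
}


def find_trial_ids(text, extra_ids=()):
    """Return {label: sorted unique matches}, omitting labels with no match.

    extra_ids are searched as literal strings, for identifiers that do not
    fit the registry patterns above.
    """
    found = {label: sorted(set(p.findall(text))) for label, p in PATTERNS.items()}
    found["literal"] = sorted(i for i in set(extra_ids) if i in text)
    return {k: v for k, v in found.items() if v}
```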

- 28. Diagram Mining

- 29. Bitmap Image and Tesseract OCR: example plot of ln bacterial load per fly (y axis, 6.0 to 11.5) against days post-infection (x axis, 0 to 5).
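After OCR has read the tick labels of a bitmap plot, one further step recovers data values: fit a linear map from pixel position to axis value, then apply it to the pixel positions of data points. Below is a least-squares sketch of that step, not ContentMine's diagram-mining code. The (pixel, value) tick pairs are hypothetical and are not measured from the slide image. Since the y axis is ln(bacterial load), `math.exp` of a calibrated y value would give the load itself.

```python
def fit_axis(ticks):
    """Fit value = a * pixel + b by least squares over (pixel, value) tick pairs.

    Returns a function that converts a pixel coordinate into an axis value.
    """
    n = len(ticks)
    if n < 2:
        raise ValueError("need at least two ticks")
    mean_p = sum(p for p, _ in ticks) / n
    mean_v = sum(v for _, v in ticks) / n
    sxx = sum((p - mean_p) ** 2 for p, _ in ticks)
    if sxx == 0:
        raise ValueError("ticks must be at different pixel positions")
    sxy = sum((p - mean_p) * (v - mean_v) for p, v in ticks)
    a = sxy / sxx
    b = mean_v - a * mean_p
    return lambda pixel: a * pixel + b


# Hypothetical OCR'd ticks: image rows grow downwards, so values fall as rows rise
to_value = fit_axis([(400, 6.0), (250, 9.0), (200, 10.0), (125, 11.5)])
to_day = fit_axis([(100, 0.0), (600, 5.0)])
```

Least squares, rather than two ticks alone, tolerates a slightly misplaced tick or a single OCR misread among several ticks.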