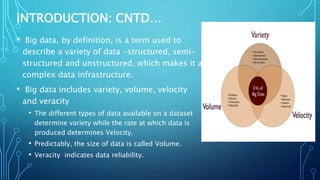

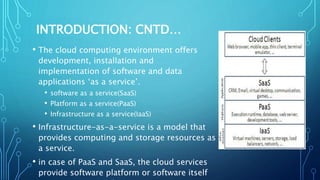

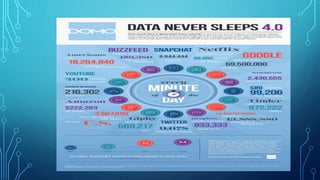

The document discusses the rise of cloud-based big data analytics in response to the growing volume and complexity of data generated in the digital age. It highlights the challenges of analyzing big data with traditional methods and presents example applications in telecom and finance, such as call detail record processing and credit card fraud detection. The conclusion emphasizes the importance of cloud infrastructure for data analytics while acknowledging open issues such as security and privacy.