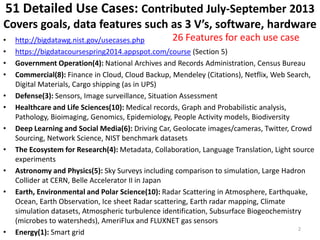

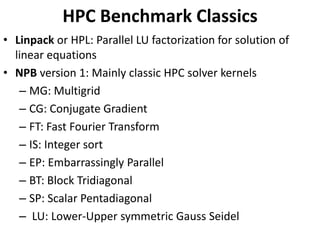

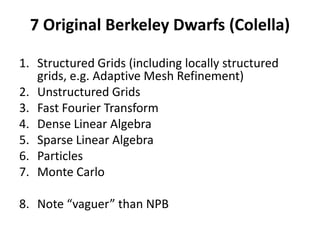

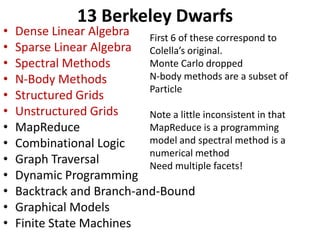

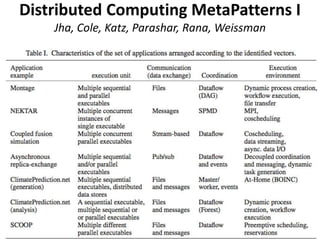

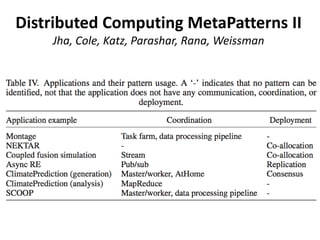

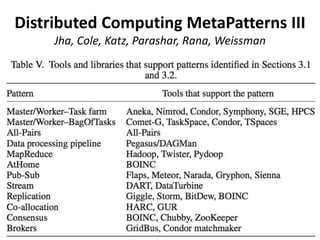

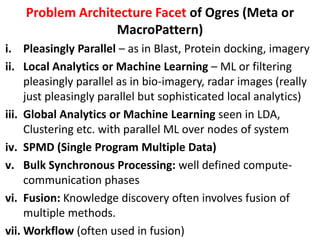

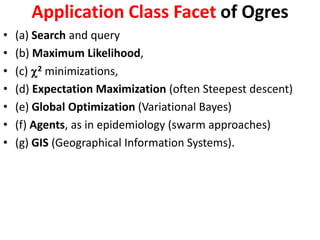

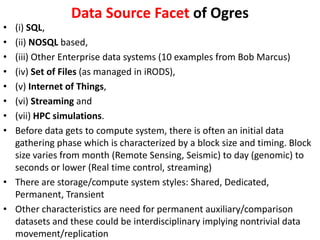

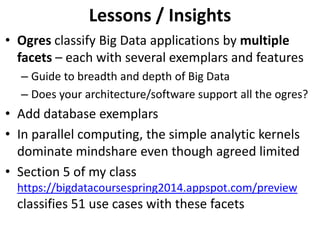

The document discusses big data applications and architectures, highlighting various use cases from sectors like government, healthcare, finance, and energy. It emphasizes the importance of analytics patterns and benchmarks for evaluating performance in parallel computing, while introducing concepts like 'Ogres' to classify applications by multiple facets. Additionally, it captures detailed insights into data features, processing styles, and collaborative research efforts across diverse fields.