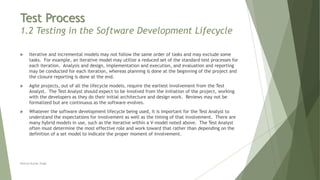

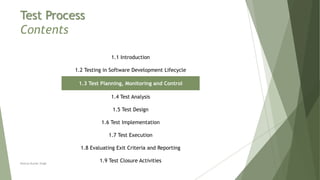

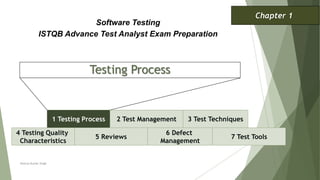

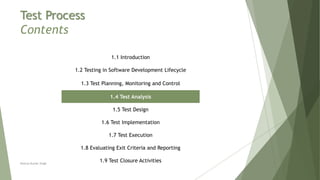

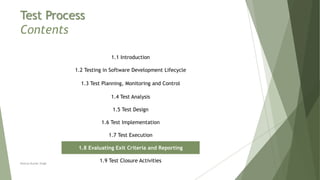

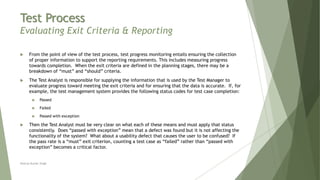

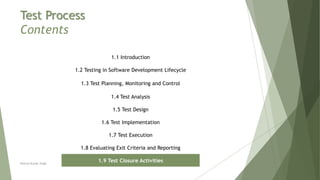

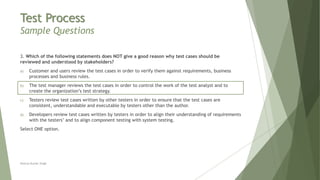

The document outlines the software test process, covering test planning, analysis, design, implementation, execution, and closure, and is tailored to test analysts at the ISTQB Advanced Level. It emphasizes aligning testing with the software development lifecycle model in use, understanding entry and exit criteria, and communicating effectively with stakeholders. It also provides guidelines for organizing and executing tests, using metrics to monitor progress, and adapting the test strategy to different project requirements.