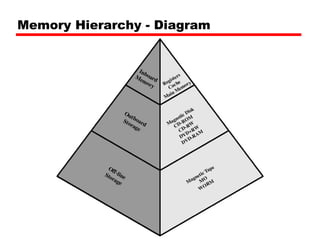

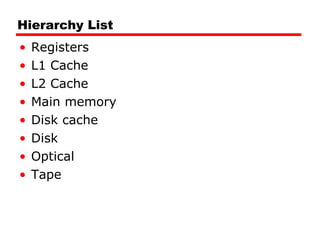

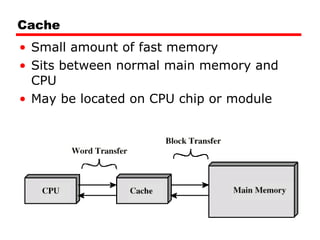

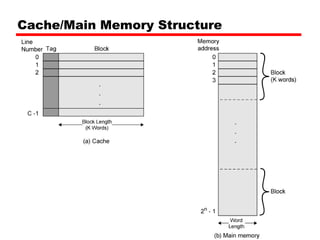

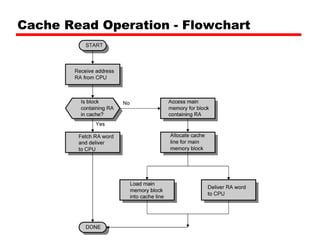

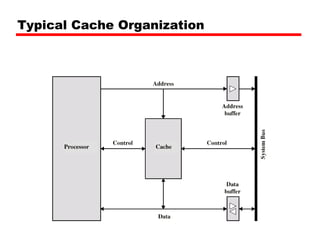

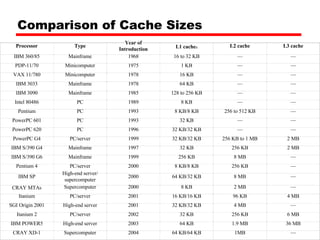

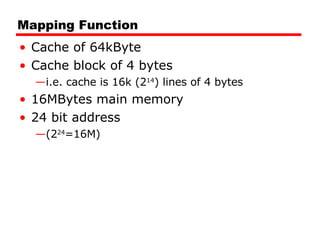

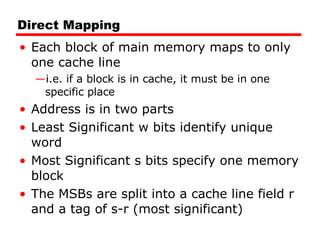

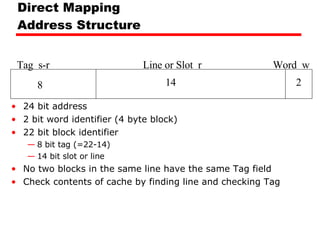

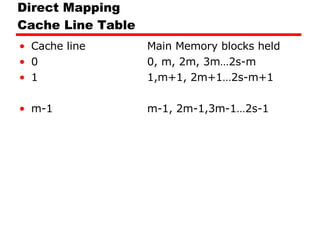

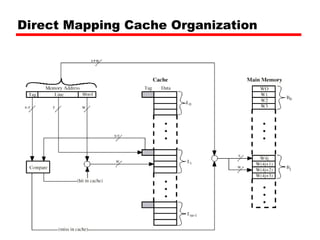

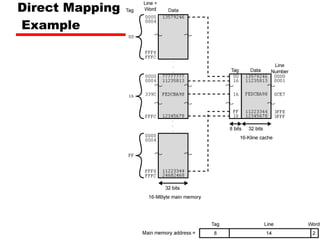

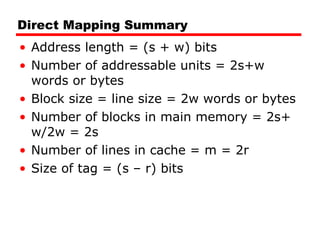

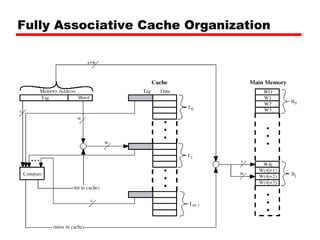

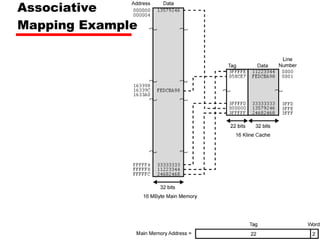

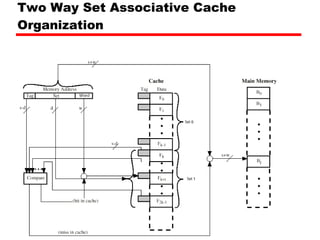

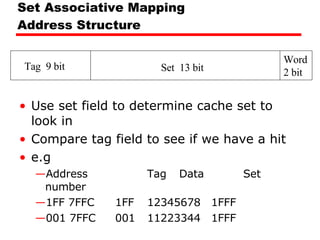

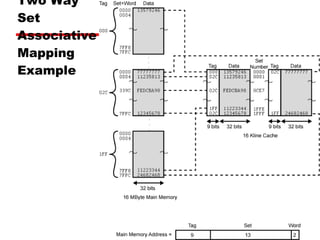

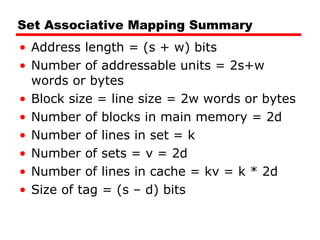

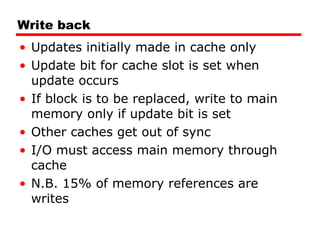

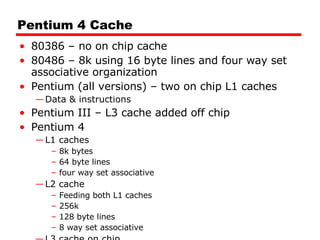

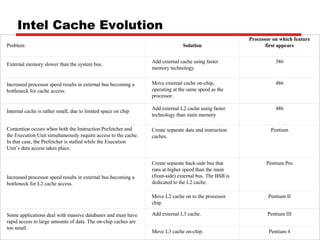

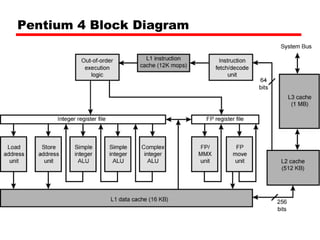

The document summarizes the key characteristics of cache memory: location, capacity, unit of transfer, access method, performance, physical type, organization, and hierarchy. It covers where the memory is located (internal or external to the CPU), how capacity and the unit of transfer are expressed (words, blocks), access methods (sequential, random, associative), performance metrics (access time, transfer rate), physical implementations (for example SRAM and disk), and organizational aspects such as mapping functions, replacement algorithms, and write policies. A cache sits between the CPU and main memory: it is a small, fast memory that holds copies of frequently used data, so that most accesses avoid the larger but slower main memory.
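To make the idea of a mapping function concrete, here is a minimal sketch of a direct-mapped cache in Python. It is an illustration under stated assumptions, not something taken from the slides: the class name `DirectMappedCache`, the sizes (8 lines, 4 addressable units per block), and the address trace are all hypothetical, and the model tracks only tags, not data or write policy.

```python
class DirectMappedCache:
    """Toy direct-mapped cache that stores tags only (no data, no writes)."""

    def __init__(self, num_lines=8, block_size=4):
        self.num_lines = num_lines      # number of cache lines (assumed value)
        self.block_size = block_size    # addresses per block (assumed value)
        self.tags = [None] * num_lines  # tag held by each line, None if empty
        self.hits = 0
        self.misses = 0

    def access(self, address):
        """Return True on a hit, False on a miss (the block is then loaded)."""
        block = address // self.block_size  # main-memory block number
        index = block % self.num_lines      # the single line this block maps to
        tag = block // self.num_lines       # distinguishes blocks sharing a line
        if self.tags[index] == tag:
            self.hits += 1
            return True
        # Miss: with direct mapping there is no replacement choice to make,
        # because the incoming block can only go into this one line.
        self.tags[index] = tag
        self.misses += 1
        return False


cache = DirectMappedCache(num_lines=8, block_size=4)
trace = [0, 1, 2, 3, 4, 0, 32, 0]  # hypothetical address trace
results = [cache.access(a) for a in trace]
print(results)                     # hit/miss outcome per access
print(cache.hits, cache.misses)
```

In this trace, addresses 0 and 32 map to the same line, so they evict each other even though the other lines are empty. Associative and set-associative mappings relax that constraint, which is where a replacement algorithm such as LRU becomes necessary.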