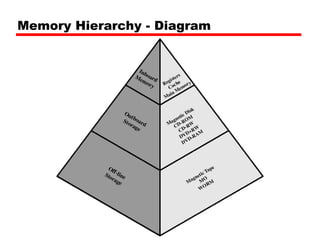

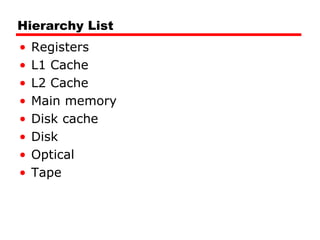

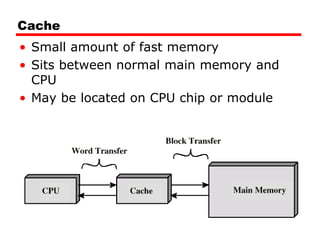

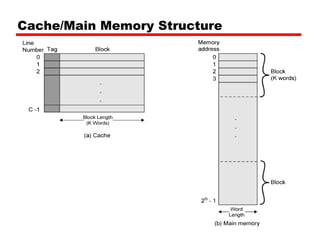

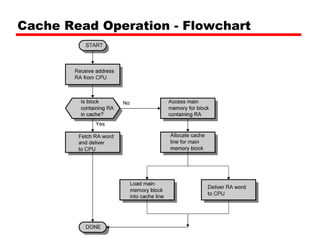

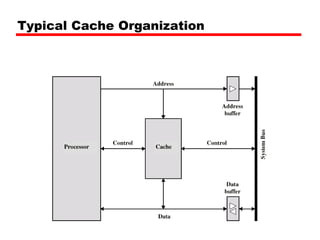

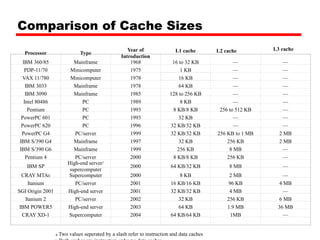

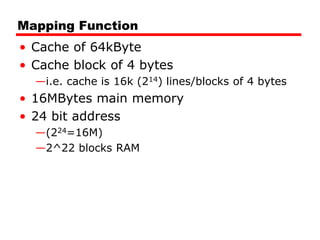

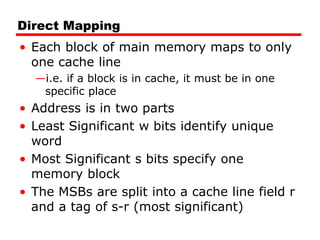

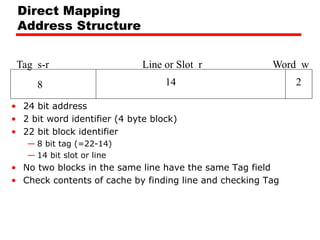

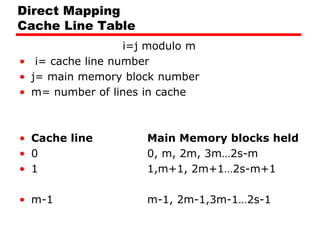

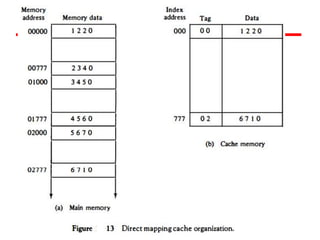

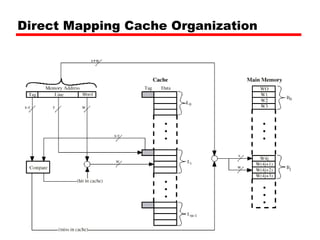

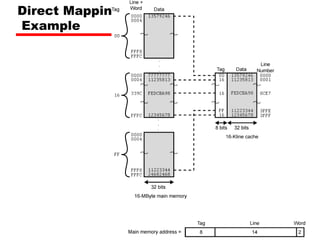

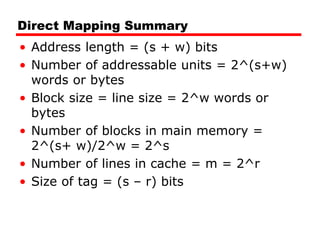

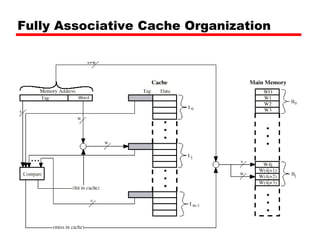

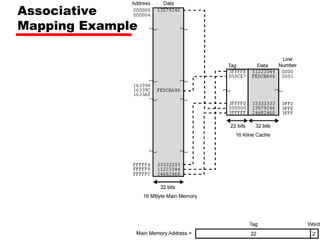

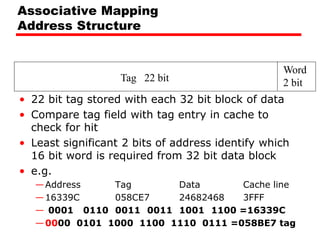

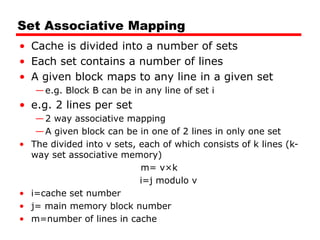

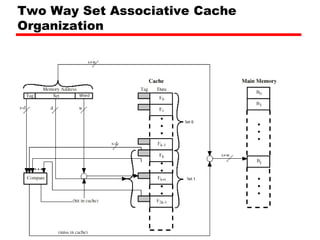

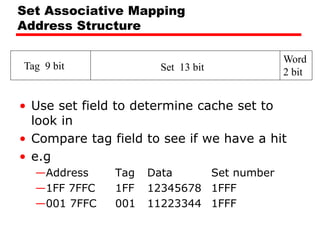

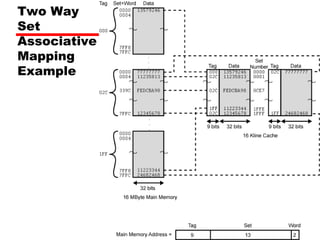

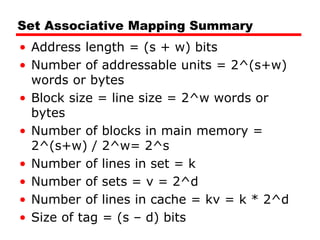

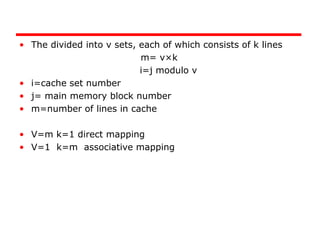

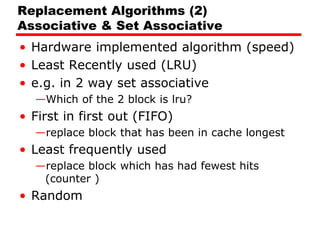

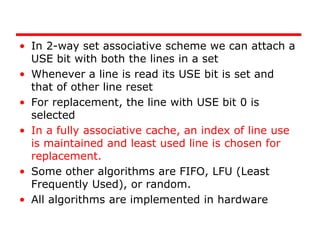

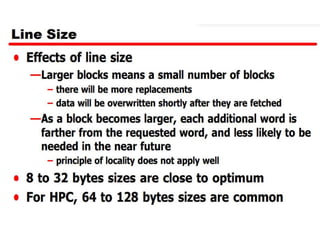

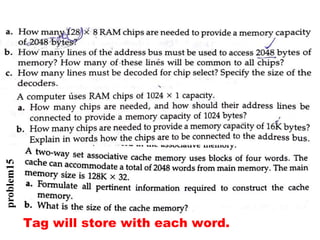

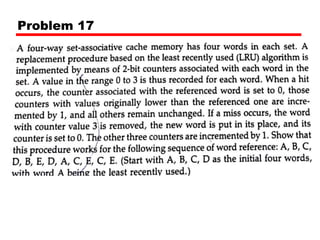

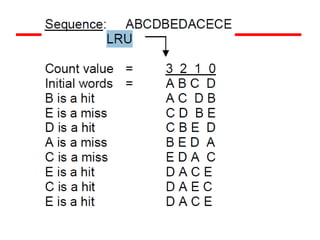

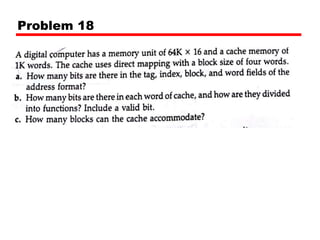

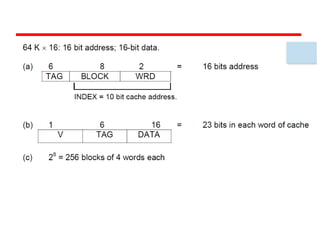

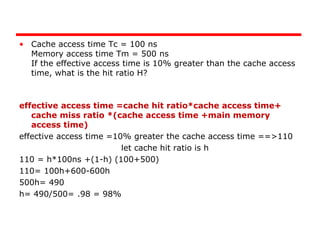

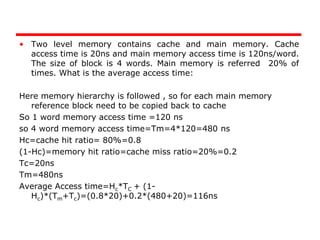

The document covers cache memory characteristics: location, capacity, unit of transfer, access method, performance, physical type, organization, and mapping function. It details direct, associative, and set-associative mapping, along with replacement algorithms and write policies. It also covers the cache hierarchy, cache operation, typical cache organization, a comparison of cache sizes over time, and how the mapping function, block size, and number of sets and ways affect cache design.
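As a rough illustration of how the mapping function, block size, and number of sets and ways interact, the sketch below splits a memory address into tag, set index, and block offset. It is a minimal example, not taken from the slides: the function name `split_address` and its parameters are hypothetical, and it assumes byte addressing with power-of-two sizes.

```python
def split_address(address, block_size, num_lines, ways=1):
    """Split an address into (tag, set_index, offset).

    Hypothetical helper for illustration only. Assumes byte addressing
    and that block_size, num_lines, and ways are powers of two.
      ways == 1          -> direct mapping (one line per set)
      ways == num_lines  -> fully associative mapping (a single set)
      otherwise          -> set-associative mapping
    """
    for name, value in (("block_size", block_size),
                        ("num_lines", num_lines),
                        ("ways", ways)):
        if value <= 0 or value & (value - 1):
            raise ValueError(f"{name} must be a positive power of two")
    if ways > num_lines:
        raise ValueError("ways cannot exceed num_lines")

    num_sets = num_lines // ways
    offset_bits = block_size.bit_length() - 1  # log2(block_size)
    index_bits = num_sets.bit_length() - 1     # log2(num_sets)

    offset = address & (block_size - 1)
    set_index = (address >> offset_bits) & (num_sets - 1)
    tag = address >> (offset_bits + index_bits)
    return tag, set_index, offset


if __name__ == "__main__":
    addr = 0x12345
    # Same cache capacity (256 lines of 16 bytes), three organizations.
    for ways in (1, 4, 256):
        tag, index, offset = split_address(addr, 16, 256, ways)
        print(f"ways={ways:3d} tag={tag:#x} set={index:#x} offset={offset:#x}")
```

With capacity and block size held fixed, raising the number of ways shrinks the set index and widens the tag: more lines must be compared on each lookup, but a given block has more places it can reside.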