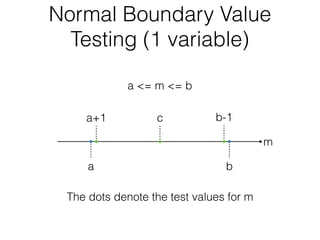

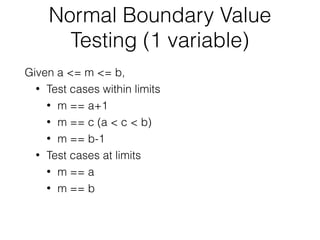

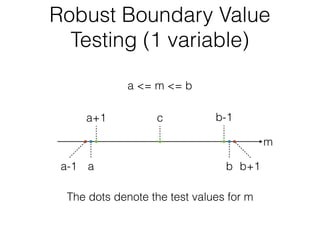

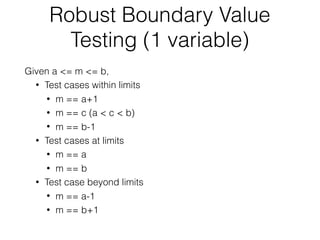

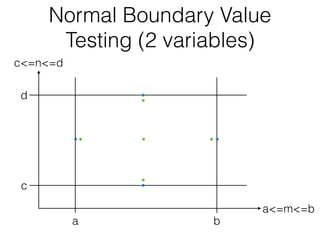

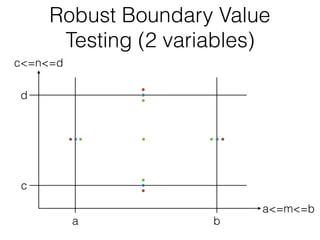

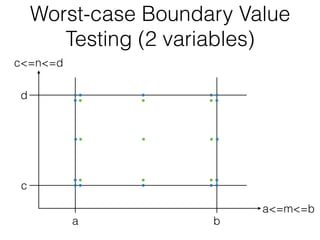

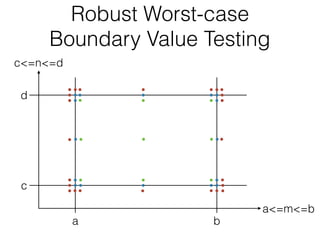

The document discusses boundary value testing in its normal and robust variants for one and two variables. Normal testing places test cases at and just inside each variable's limits; robust testing adds cases just beyond them. It contrasts normal boundary value testing, which relies on the single-fault assumption (only one variable takes an extreme value in any test case), with worst-case boundary value testing, which drops that assumption and combines the extreme values of all variables. It also notes two limitations: boundary value testing does not account for interactions between variables, and worst-case testing can produce an excessive number of test cases.
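The variants summarized above can be sketched in a few lines of Python. This is an illustrative sketch rather than anything taken from the slides: the function names (`boundary_values`, `single_fault_cases`, `worst_case_cases`) are hypothetical, variables are assumed to be integers with a step of 1, the nominal value is assumed to be the midpoint, and each range is assumed to span at least four values so the five boundary values are distinct.

```python
from itertools import product


def boundary_values(lo, hi, robust=False):
    """Return min, min+, nominal, max-, max for one integer variable.

    With robust=True, also include min- and max+ (just beyond the limits).
    Assumes hi - lo >= 4 so that the five in-range values are distinct.
    """
    nominal = (lo + hi) // 2
    values = [lo, lo + 1, nominal, hi - 1, hi]
    if robust:
        values = [lo - 1] + values + [hi + 1]
    return values


def single_fault_cases(ranges, robust=False):
    """Normal (or robust) testing: vary one variable, hold the rest at nominal."""
    nominals = [(lo + hi) // 2 for lo, hi in ranges]
    cases = [tuple(nominals)]  # the all-nominal case appears exactly once
    for i, (lo, hi) in enumerate(ranges):
        for value in boundary_values(lo, hi, robust):
            if value == nominals[i]:
                continue  # already covered by the all-nominal case
            case = list(nominals)
            case[i] = value
            cases.append(tuple(case))
    return cases


def worst_case_cases(ranges, robust=False):
    """Worst-case testing: Cartesian product of every variable's boundary values."""
    return list(product(*(boundary_values(lo, hi, robust) for lo, hi in ranges)))


if __name__ == "__main__":
    two_vars = [(1, 100), (1, 100)]  # hypothetical ranges for two variables
    print(len(single_fault_cases(two_vars)))               # normal
    print(len(single_fault_cases(two_vars, robust=True)))  # robust
    print(len(worst_case_cases(two_vars)))                 # worst-case
    print(len(worst_case_cases(two_vars, robust=True)))    # robust worst-case
```

For n variables, the single-fault generators yield 4n + 1 (normal) and 6n + 1 (robust) cases, while the worst-case generators yield 5^n and 7^n. That exponential growth is the source of the excess test cases noted above.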