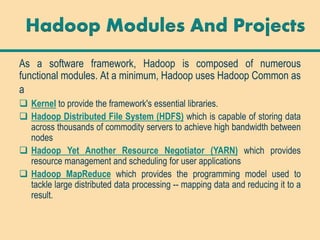

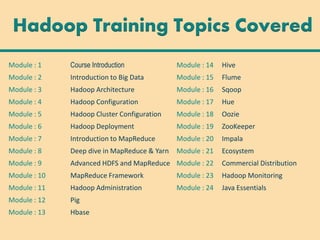

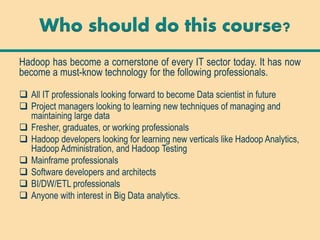

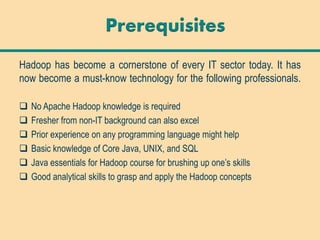

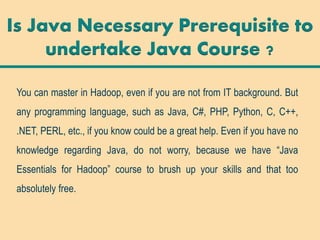

The document outlines the importance of Hadoop, an open-source Java-based framework for processing large datasets in distributed computing environments, emphasizing its relevance in today's data-centric industries. It details the training program which covers various modules related to Hadoop and its ecosystem, targeting IT professionals, project managers, and anyone interested in big data analytics. Additionally, it provides information about prerequisites for the course, training methods, and potential career opportunities for Hadoop professionals.