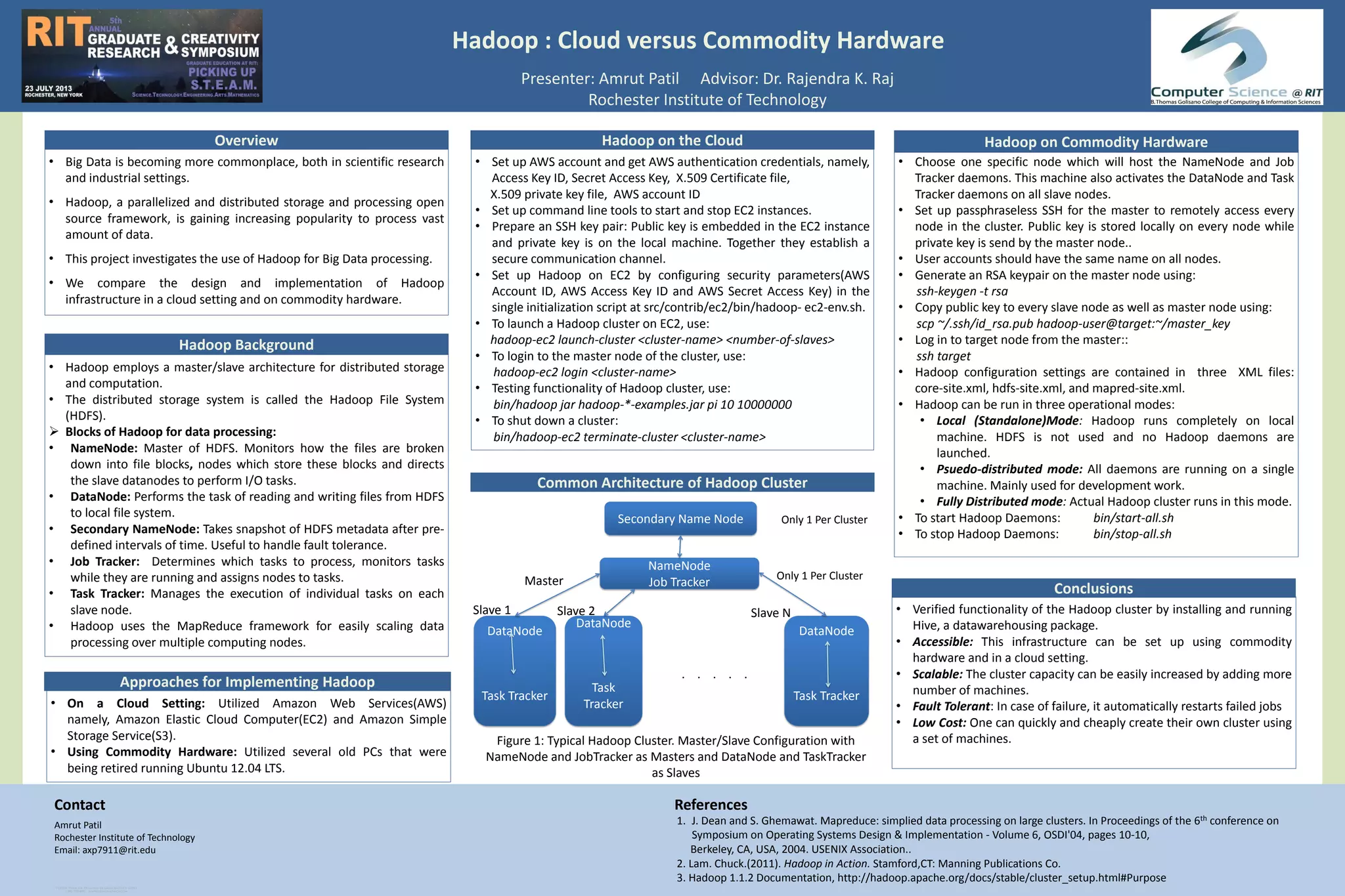

This document compares implementing Hadoop infrastructure on Amazon Web Services (AWS) versus commodity hardware. It discusses setting up Hadoop clusters on both AWS Elastic Compute Cloud (EC2) instances and several retired PCs running Ubuntu. The document also provides an overview of the Hadoop architecture, including the roles of the NameNode, DataNode, JobTracker, and TaskTracker in distributed storage and processing within Hadoop.