This document gives an overview of Hadoop and covers three points:

1. Hadoop is an open-source software framework for distributed storage and processing of large datasets across clusters of commodity hardware.

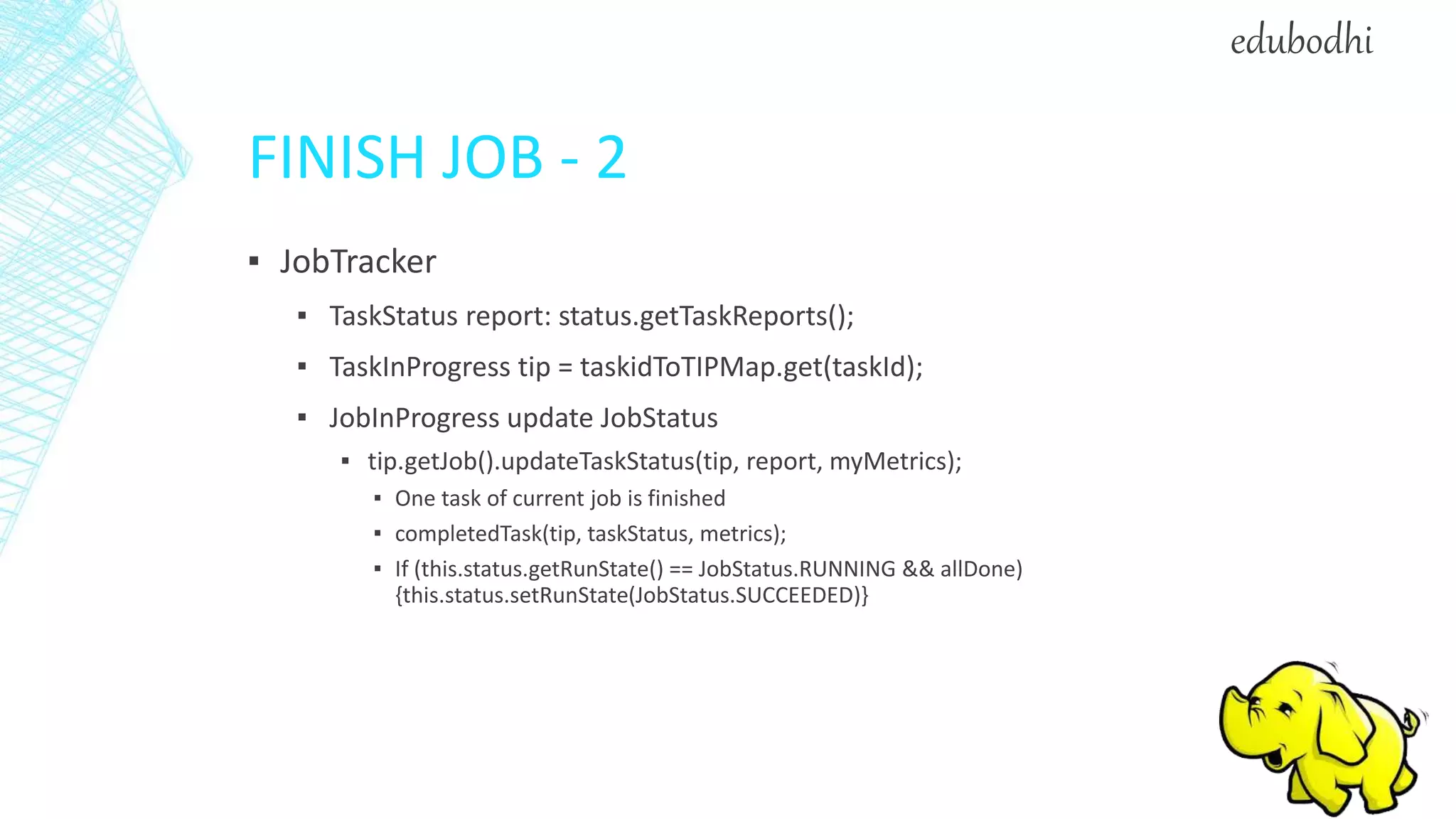

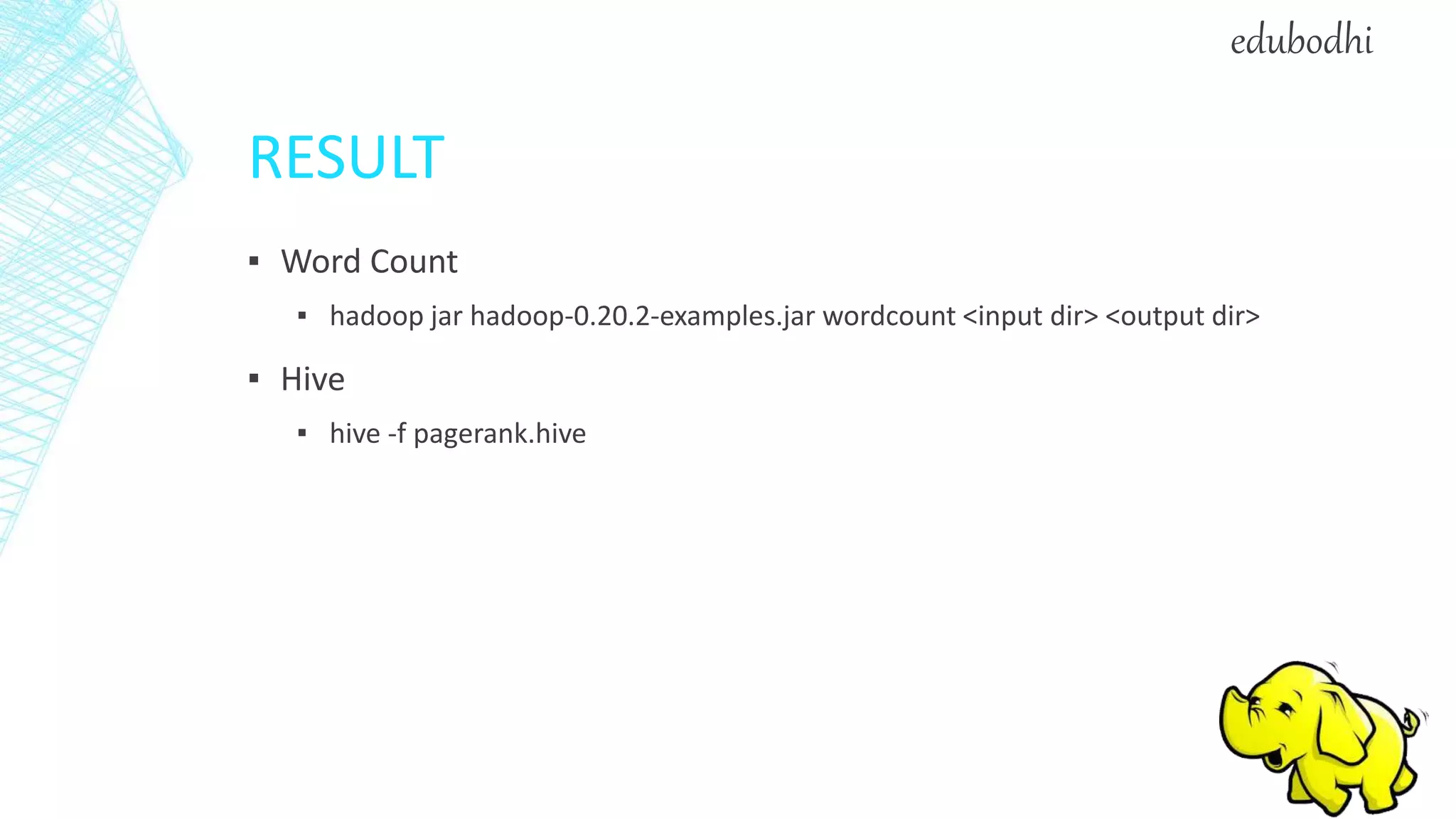

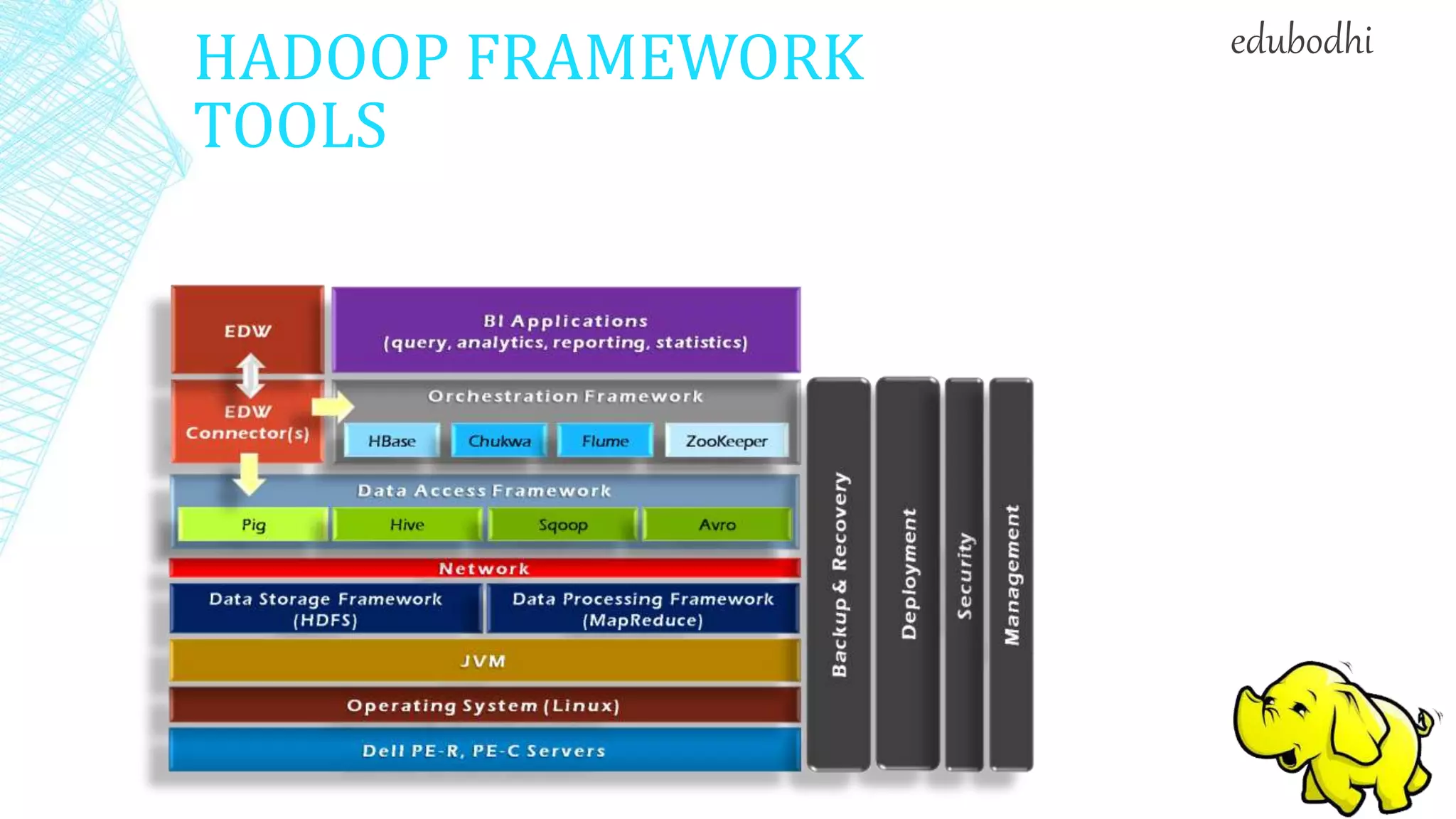

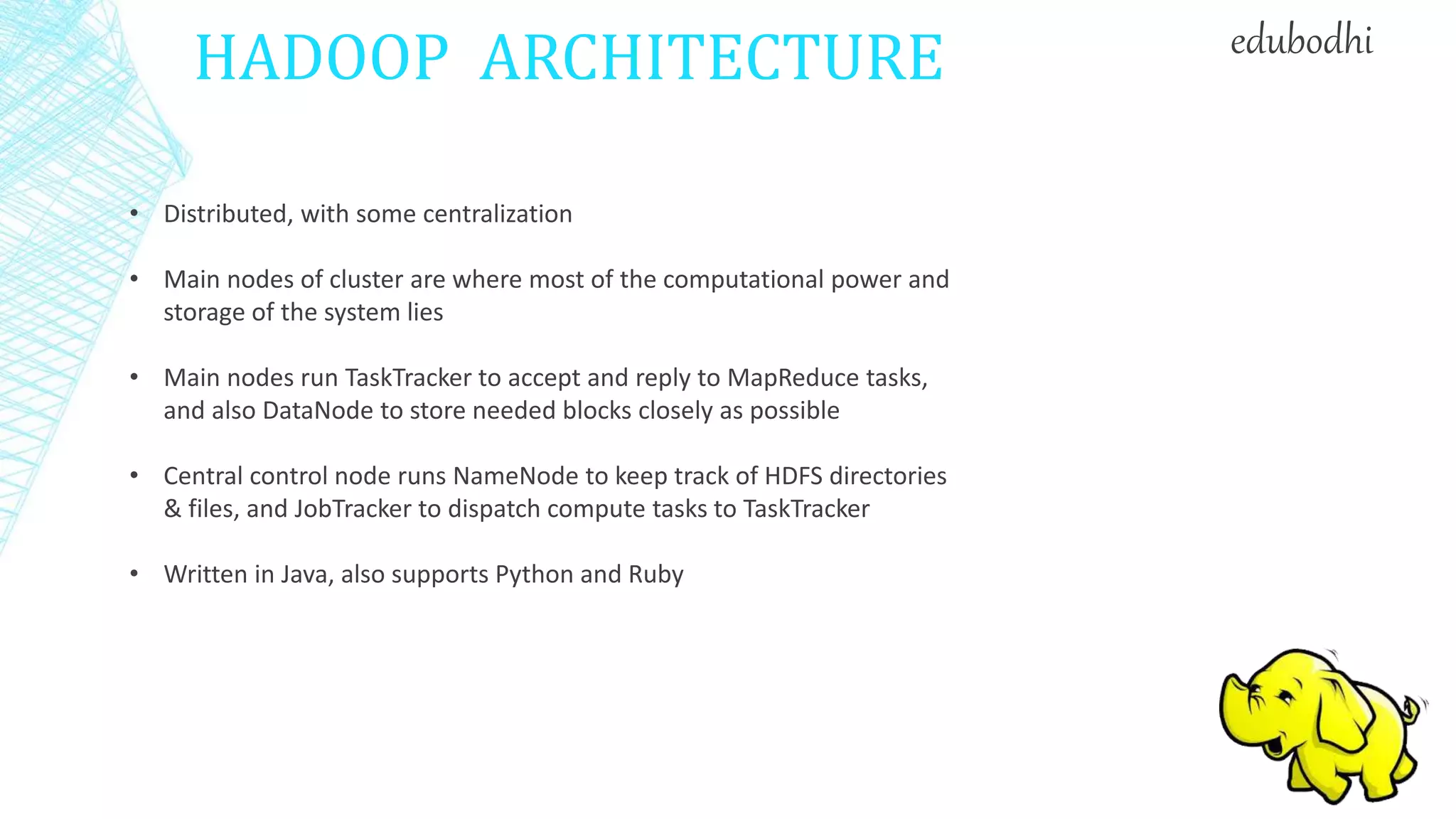

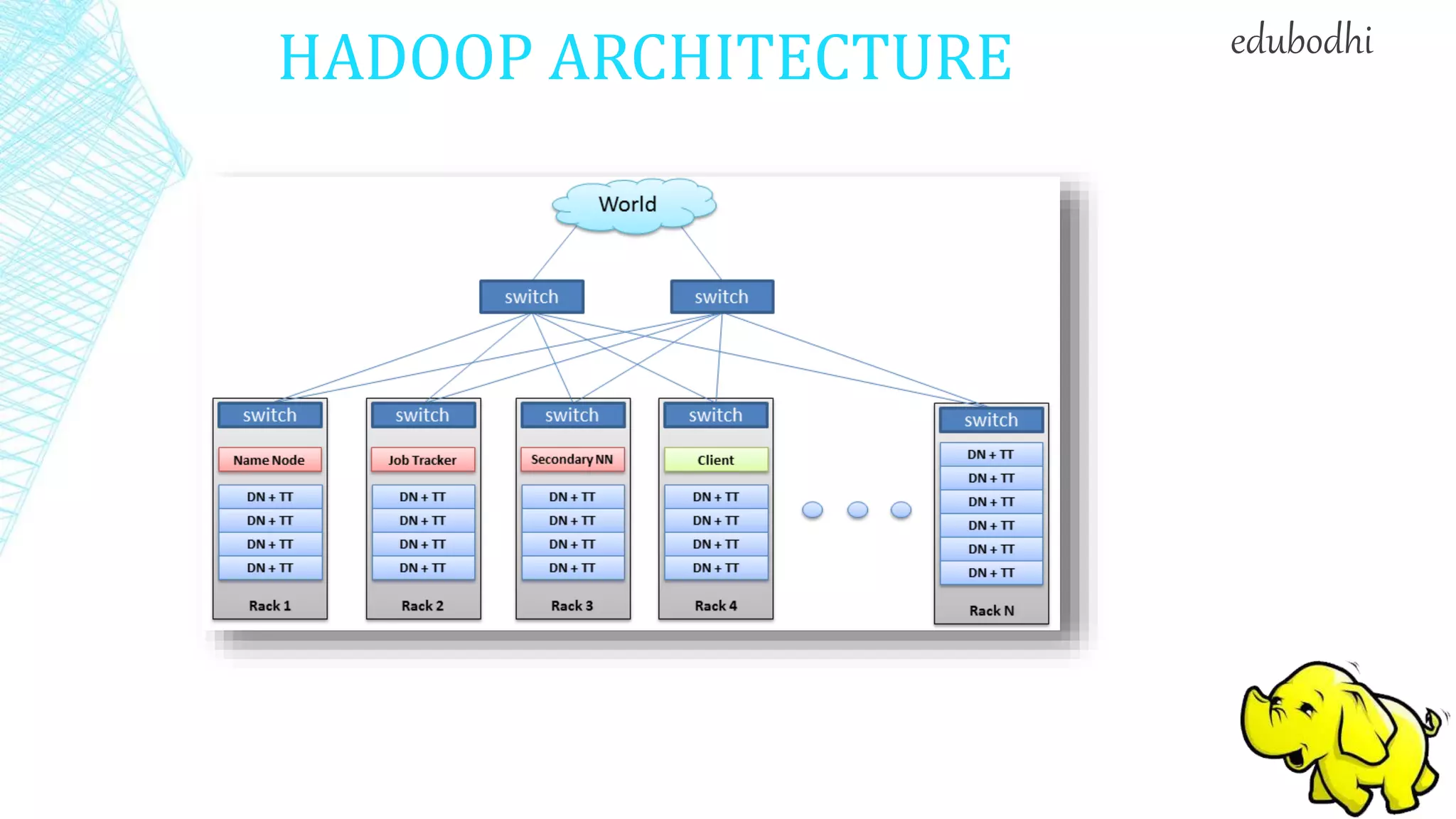

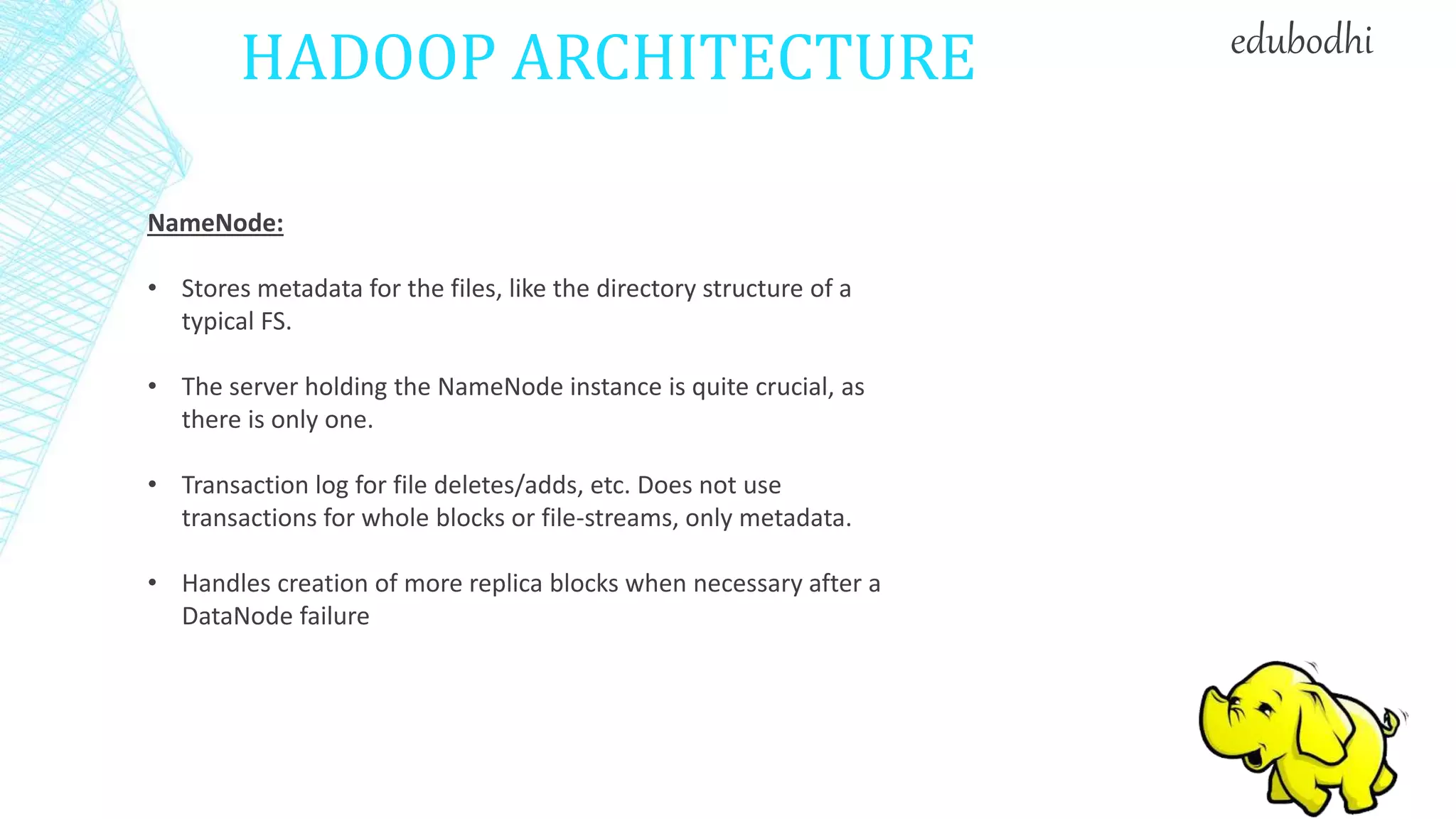

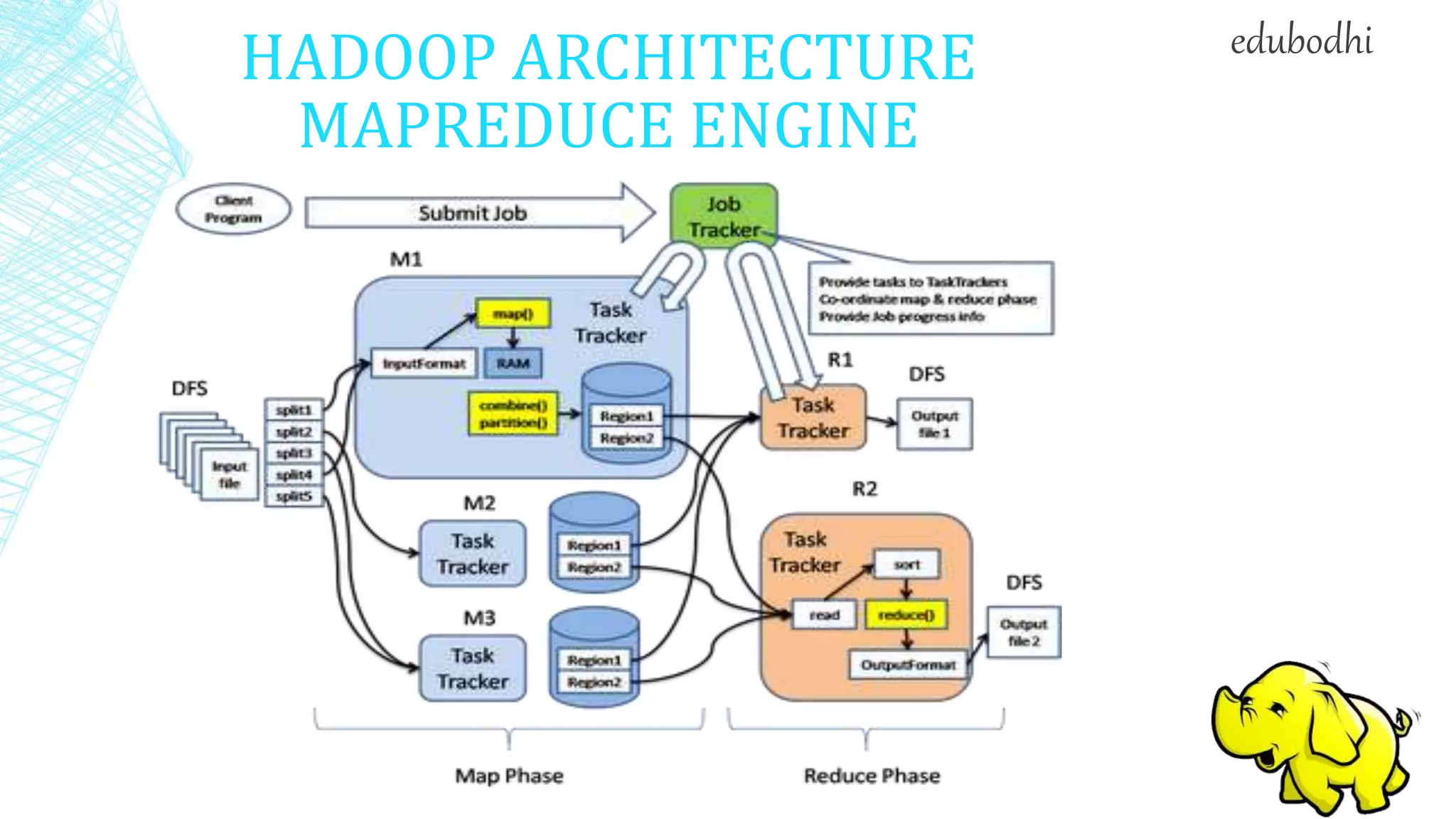

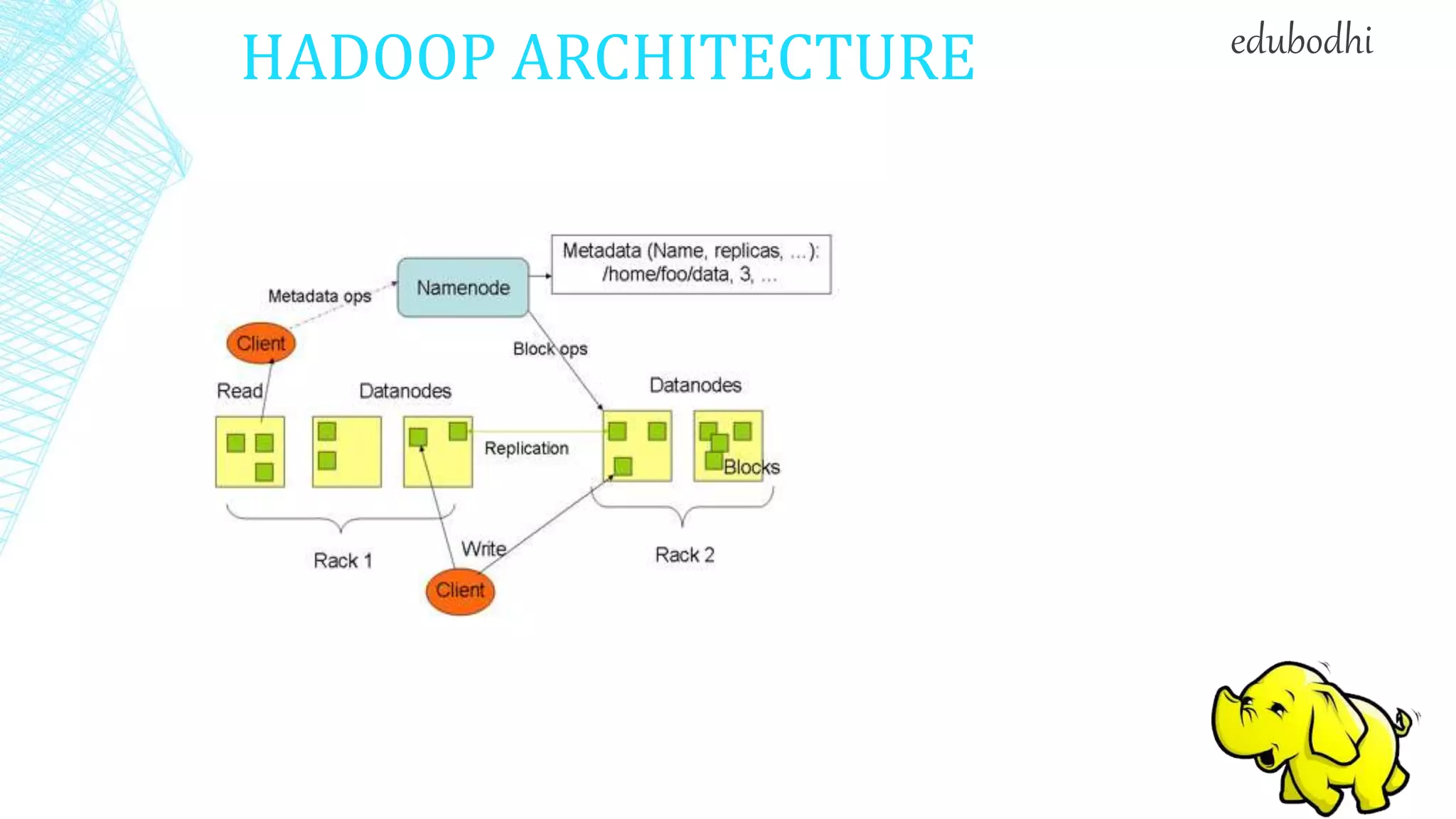

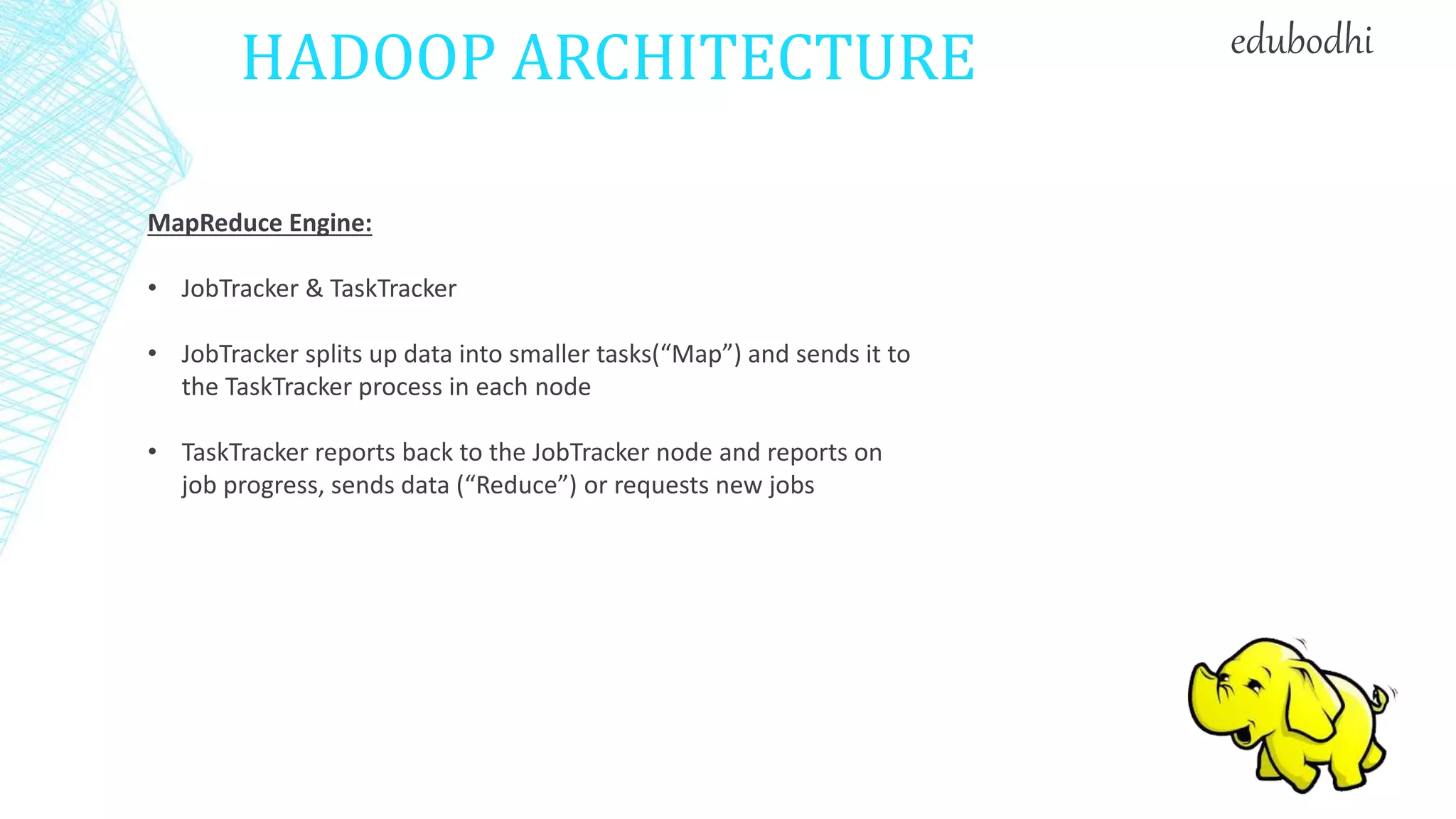

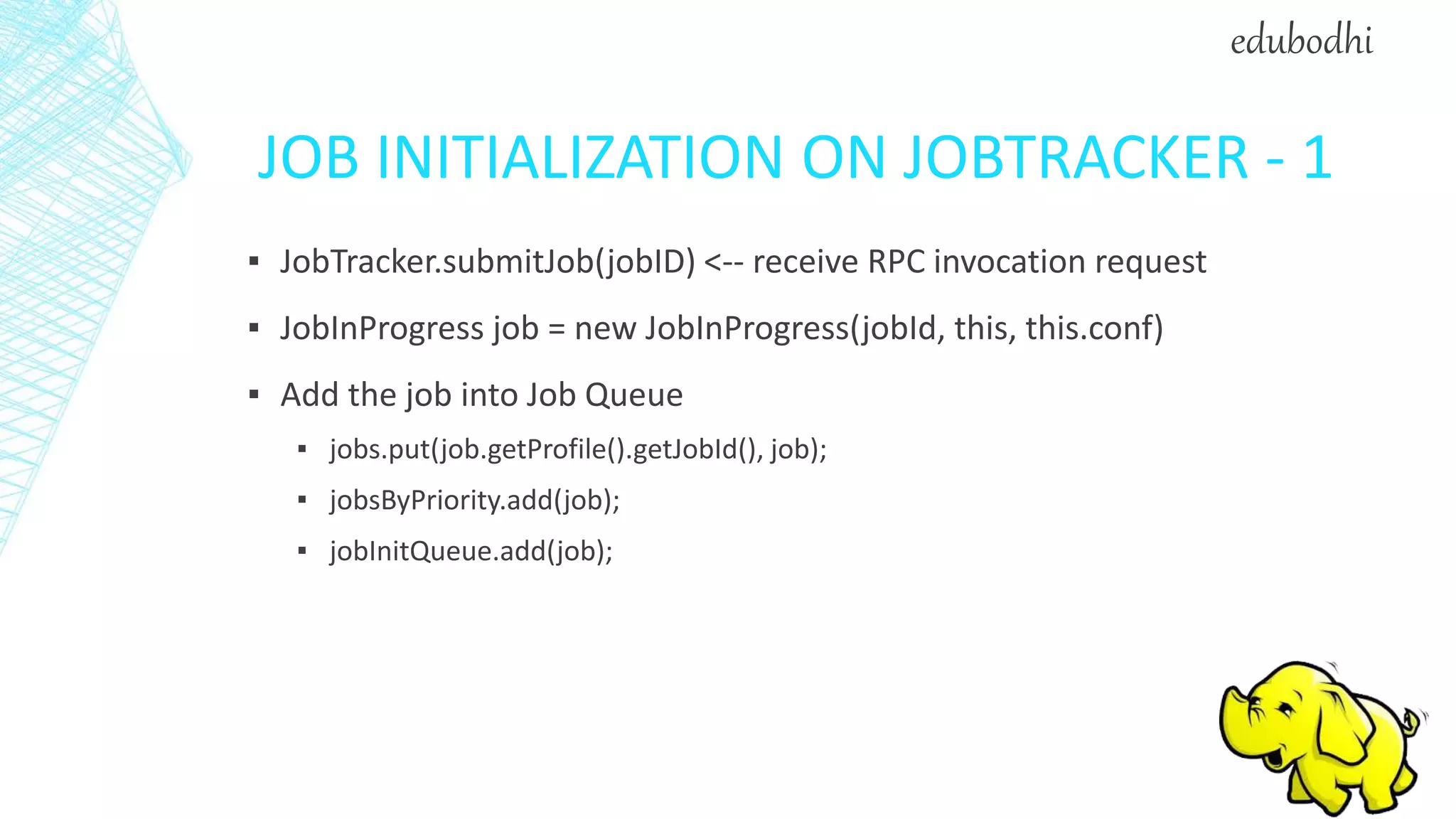

2. It describes the architecture of Hadoop, including the Hadoop Distributed File System (HDFS) and the MapReduce engine. HDFS uses a master/slave architecture with a NameNode (master) and DataNodes (slaves), while the MapReduce engine uses a JobTracker (master) and TaskTrackers (slaves).

3. It discusses common industry uses of Hadoop, such as log processing, web search indexing, and ad-hoc queries at large companies like Yahoo, Facebook, and Amazon.
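The master/slave split in HDFS mentioned in item 2 can be illustrated with a minimal sketch. It assumes the master holds only metadata (which workers store each block) and that clients ask the master for locations before reading from a worker. The class `NameNodeSketch` and its methods `reportBlock` and `locate` are hypothetical names for illustration, not part of the Hadoop API.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical master: keeps metadata only, never the block contents.
final class NameNodeSketch {
    // blockId -> ids of the DataNodes that hold a replica of that block
    private final Map<String, List<String>> blockLocations = new HashMap<>();

    // Called when a DataNode reports that it stores a given block.
    void reportBlock(String blockId, String dataNodeId) {
        blockLocations.computeIfAbsent(blockId, k -> new ArrayList<>()).add(dataNodeId);
    }

    // Called by a client to find out which DataNodes to read the block from.
    List<String> locate(String blockId) {
        return blockLocations.getOrDefault(blockId, Collections.emptyList());
    }
}
```

In this sketch the workers would store the actual bytes. The master answers lookups only, which is why it can coordinate many DataNodes.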

![JobClient.submitJob - 1](https://image.slidesharecdn.com/introductiontohadoopadministration-160125234413/75/Introduction-to-Hadoop-Administration-31-2048.jpg)

- Check the input and output specifications, e.g. check whether the output directory already exists:
  - `job.getInputFormat().validateInput(job);`
  - `job.getOutputFormat().checkOutputSpecs(fs, job);`
- Get the InputSplits, sort them, and write them to HDFS:
  - `InputSplit[] splits = job.getInputFormat().getSplits(job, job.getNumMapTasks());`
  - `writeSplitsFile(splits, out); // out is $SYSTEMDIR/$JOBID/job.split`
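The submission steps on this slide can be sketched without any Hadoop dependency. The sketch below is a plain-Java simulation, not the Hadoop implementation: `SplitSketch`, `SubmitJobSketch`, and all of its methods are hypothetical names, the set of existing directories stands in for a file system check, and the largest-first sort order is an assumption because the slide only says "sort".

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.Set;

// Hypothetical stand-in for an InputSplit: one byte range of one input file.
final class SplitSketch {
    final String path;
    final long start;
    final long length;

    SplitSketch(String path, long start, long length) {
        this.path = path;
        this.start = start;
        this.length = length;
    }
}

final class SubmitJobSketch {
    // Step 1: fail early if the output directory already exists.
    static void checkOutputSpecs(Set<String> existingDirs, String outputDir) {
        if (existingDirs.contains(outputDir)) {
            throw new IllegalStateException("Output directory already exists: " + outputDir);
        }
    }

    // Step 2a: cut a file into byte ranges of at most splitSize bytes.
    static List<SplitSketch> getSplits(String path, long fileLength, long splitSize) {
        if (splitSize <= 0) {
            throw new IllegalArgumentException("splitSize must be positive");
        }
        List<SplitSketch> splits = new ArrayList<>();
        for (long offset = 0; offset < fileLength; offset += splitSize) {
            splits.add(new SplitSketch(path, offset, Math.min(splitSize, fileLength - offset)));
        }
        return splits;
    }

    // Step 2b: sort the splits; largest-first is an assumption of this sketch.
    static void sortLargestFirst(List<SplitSketch> splits) {
        splits.sort(Comparator.comparingLong((SplitSketch s) -> s.length).reversed());
    }

    // Step 2c: render one line per split, standing in for the job.split file.
    static String renderSplitsFile(List<SplitSketch> splits) {
        StringBuilder out = new StringBuilder();
        for (SplitSketch s : splits) {
            out.append(s.path).append('\t').append(s.start).append('\t').append(s.length).append('\n');
        }
        return out.toString();
    }
}
```

Checking the output directory before computing splits means a misconfigured job is rejected before any work is scheduled.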

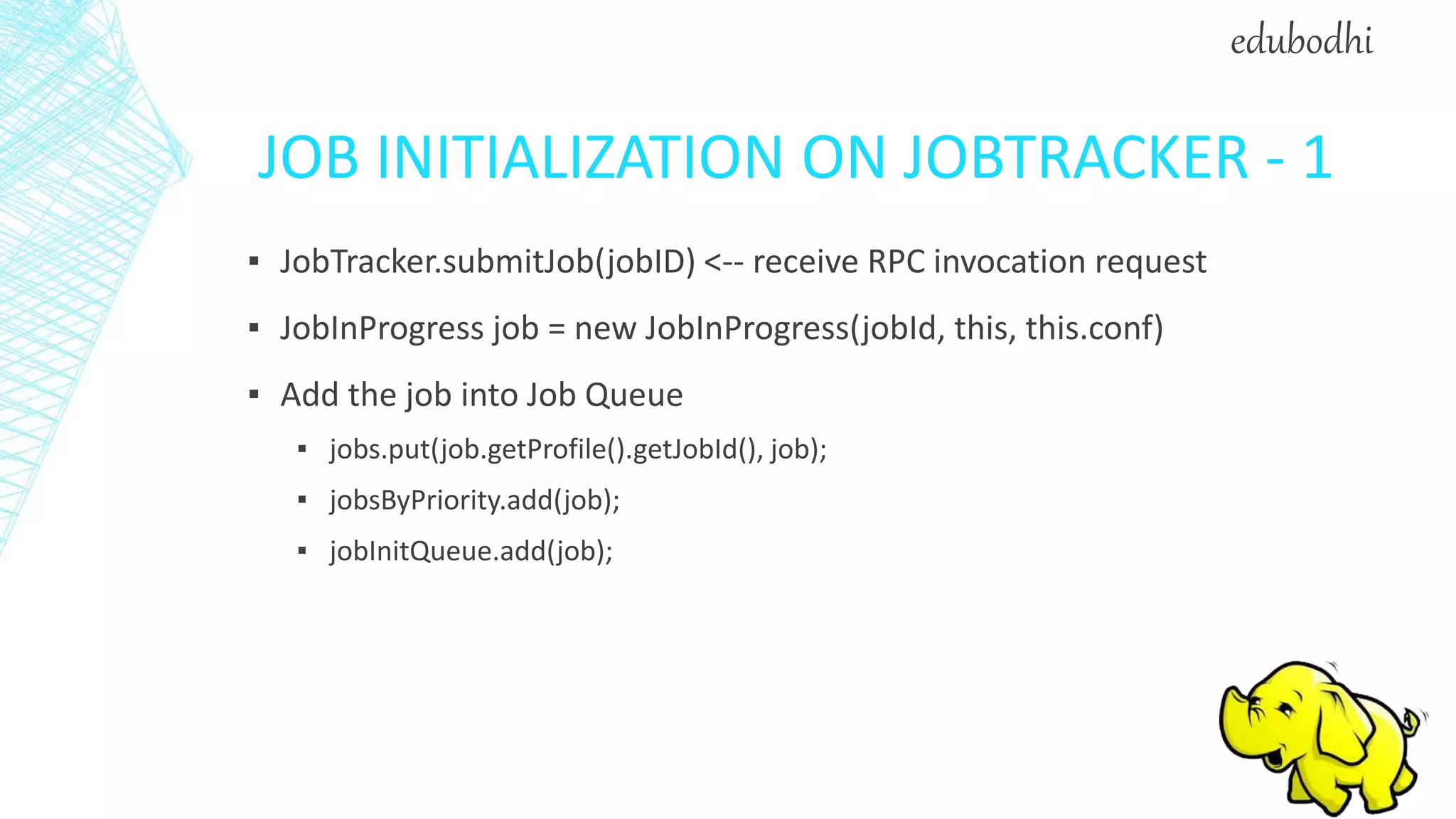

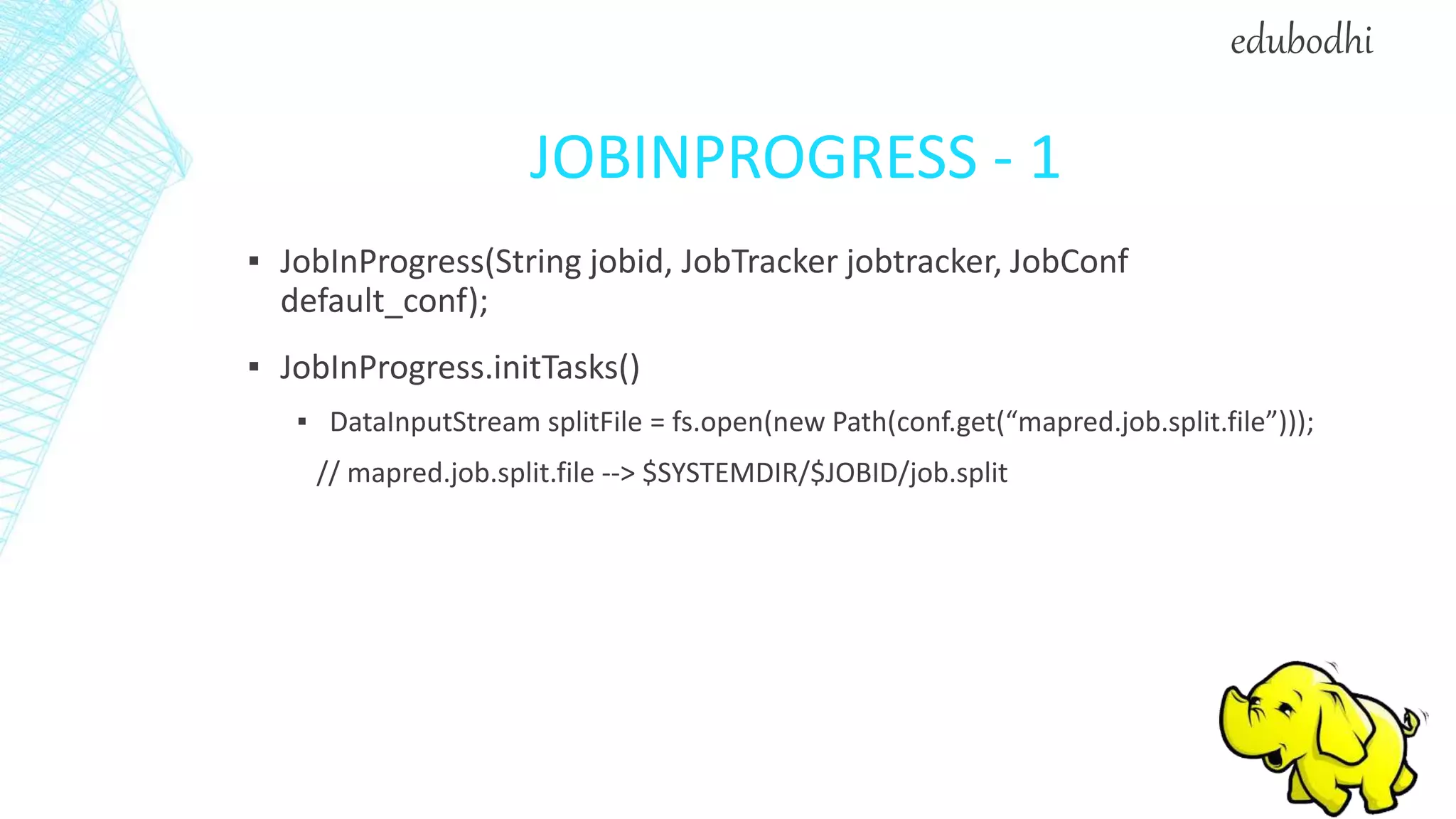

![JobInProgress - 2](https://image.slidesharecdn.com/introductiontohadoopadministration-160125234413/75/Introduction-to-Hadoop-Administration-41-2048.jpg)

- `splits = JobClient.readSplitFile(splitFile);`
- `numMapTasks = splits.length;`
- `maps[i] = new TaskInProgress(jobId, jobFile, splits[i], jobtracker, conf, this, i);`
- `reduces[i] = new TaskInProgress(jobId, jobFile, numMapTasks, i, jobtracker, conf, this);`
- `JobStatus` changes to `JobStatus.RUNNING`
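The initialization sequence on this slide can be sketched in plain Java. This is a simplified model, not the Hadoop implementation: `TaskSketch` and `JobInitSketch` are hypothetical names, splits are represented as strings, and the sketch assumes that one map task is created per split while the number of reduce tasks comes from a configured count rather than from the splits.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for TaskInProgress: records the task kind and its index.
final class TaskSketch {
    final boolean isMap;
    final int index;
    final String split; // null for reduce tasks, which consume map output instead of a split

    TaskSketch(boolean isMap, int index, String split) {
        this.isMap = isMap;
        this.index = index;
        this.split = split;
    }
}

final class JobInitSketch {
    enum Status { PREP, RUNNING }

    final List<TaskSketch> maps = new ArrayList<>();
    final List<TaskSketch> reduces = new ArrayList<>();
    Status status = Status.PREP; // assumed initial state before tasks are created

    void initTasks(List<String> splits, int numReduceTasks) {
        // One map task per split that was read back from the splits file.
        int numMapTasks = splits.size();
        for (int i = 0; i < numMapTasks; i++) {
            maps.add(new TaskSketch(true, i, splits.get(i)));
        }
        // Reduce tasks are sized by configuration, independent of the split count.
        for (int i = 0; i < numReduceTasks; i++) {
            reduces.add(new TaskSketch(false, i, null));
        }
        // Once every task object exists, the job is marked as running.
        status = Status.RUNNING;
    }
}
```

For example, three splits with two configured reducers would yield three map tasks and two reduce tasks before the status flips to `RUNNING`.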
