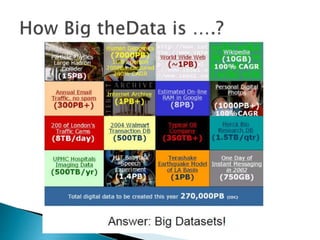

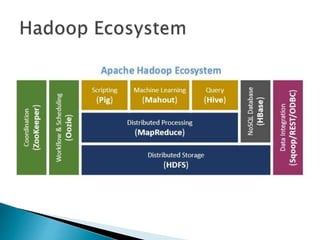

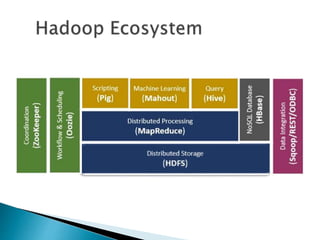

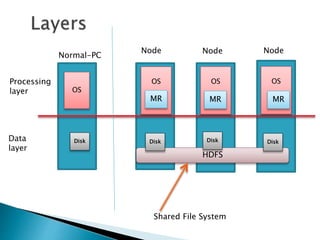

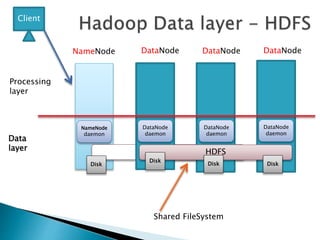

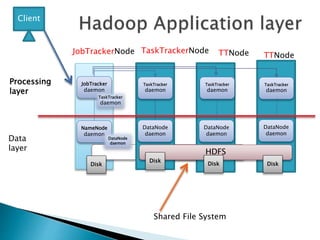

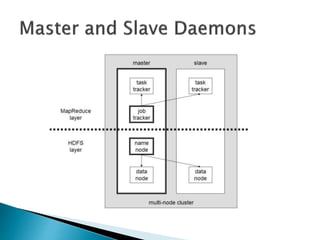

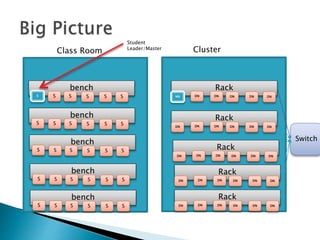

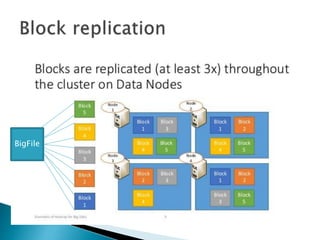

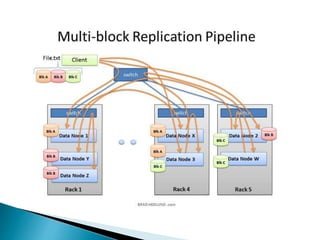

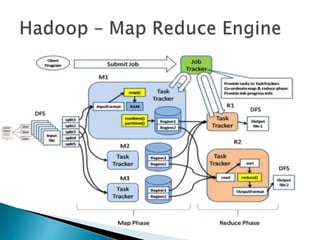

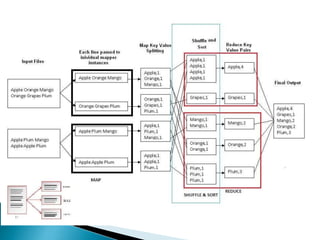

This document discusses big data and Hadoop. It defines big data as datasets too large and complex to process with traditional methods, and lists the main challenges they pose: storage, processing speed, and cost. It introduces Hadoop as an open-source framework that addresses these challenges by distributing the storage and processing of large datasets across clusters of commodity hardware. It then gives a high-level overview of how Hadoop works, covering its two core components: HDFS for storage and MapReduce for distributed processing.
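To make the MapReduce idea concrete, the following is a minimal in-memory sketch of the map, shuffle, and reduce phases using the classic word-count example. It is not Hadoop's API and runs on a single machine. The function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the sample input are assumptions chosen for illustration. In a real cluster, the map and reduce steps would run in parallel on different nodes, reading from and writing to HDFS.

```python
from collections import defaultdict


def map_phase(line):
    """Map step (hypothetical helper): emit a (word, 1) pair for every word in a line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle step: group all emitted values by key, as the framework would between map and reduce."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce step: combine all values for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    """Run the three phases sequentially; a cluster would distribute them across nodes."""
    mapped = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


# Illustrative input only; real jobs would read file blocks from distributed storage.
counts = word_count(["big data needs big storage", "Hadoop stores big data"])
print(counts)
```

Because each line is mapped independently and each key is reduced independently, both steps can in principle be split across many machines, which is the property that lets this model scale on commodity hardware.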