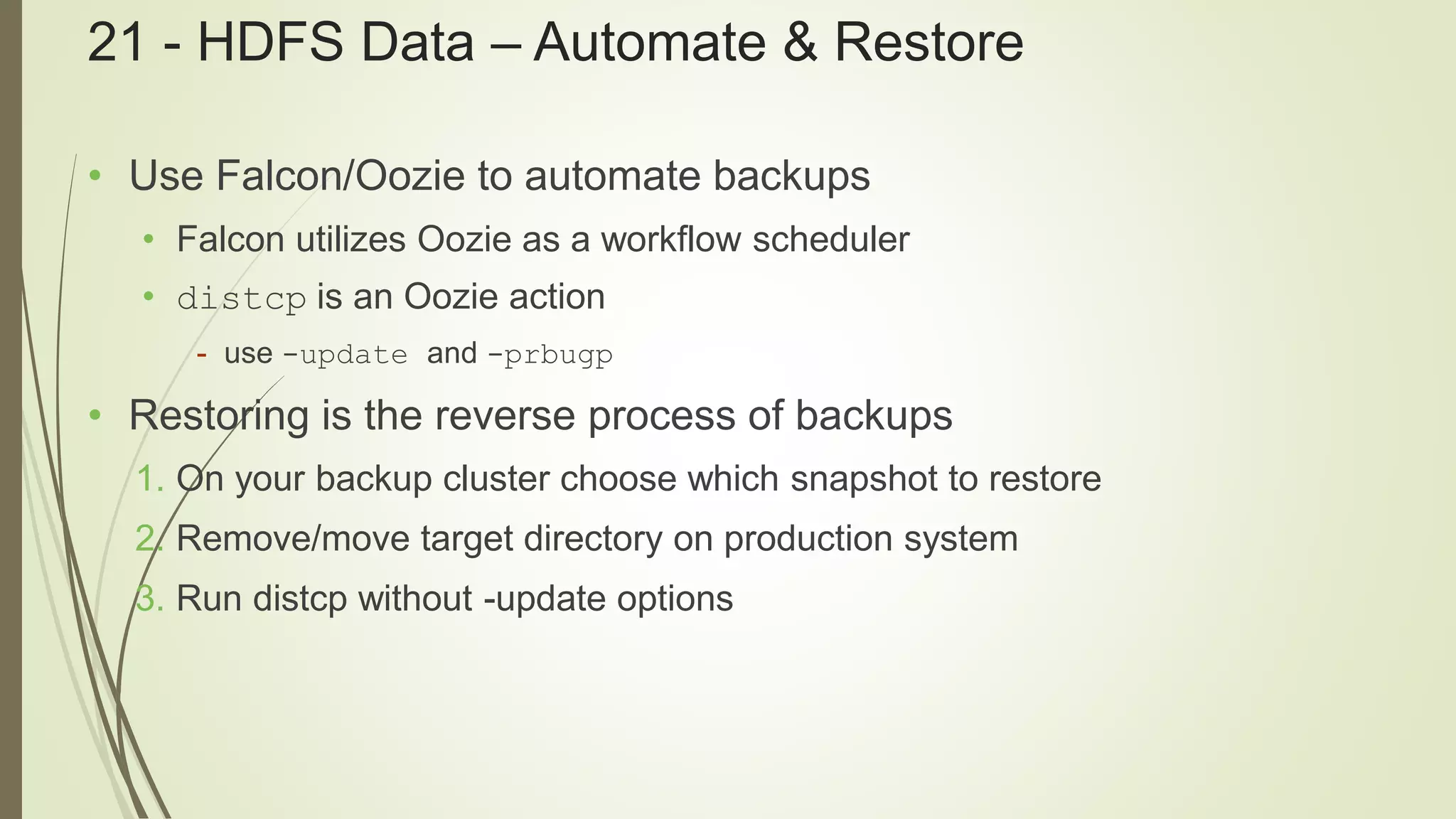

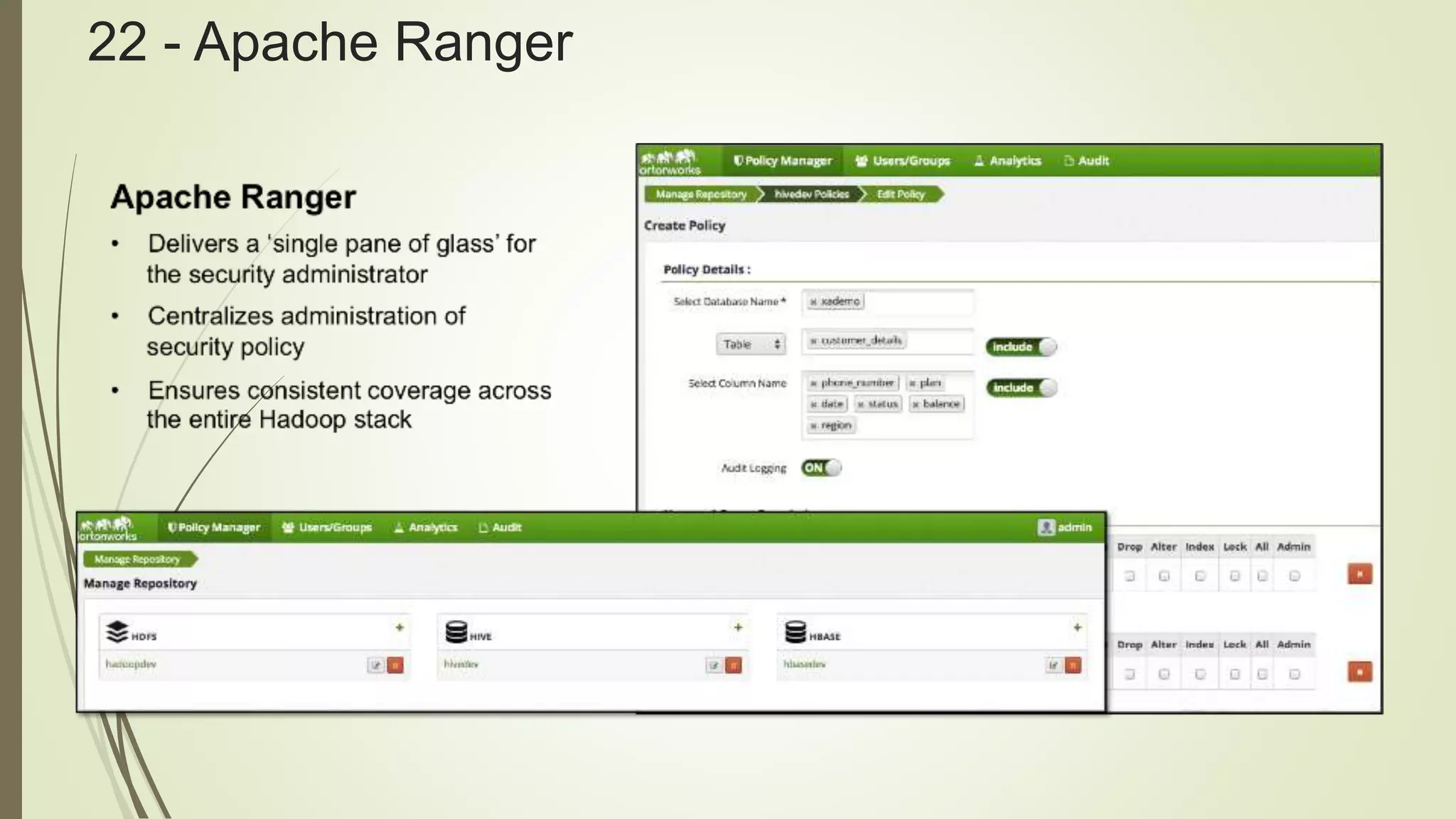

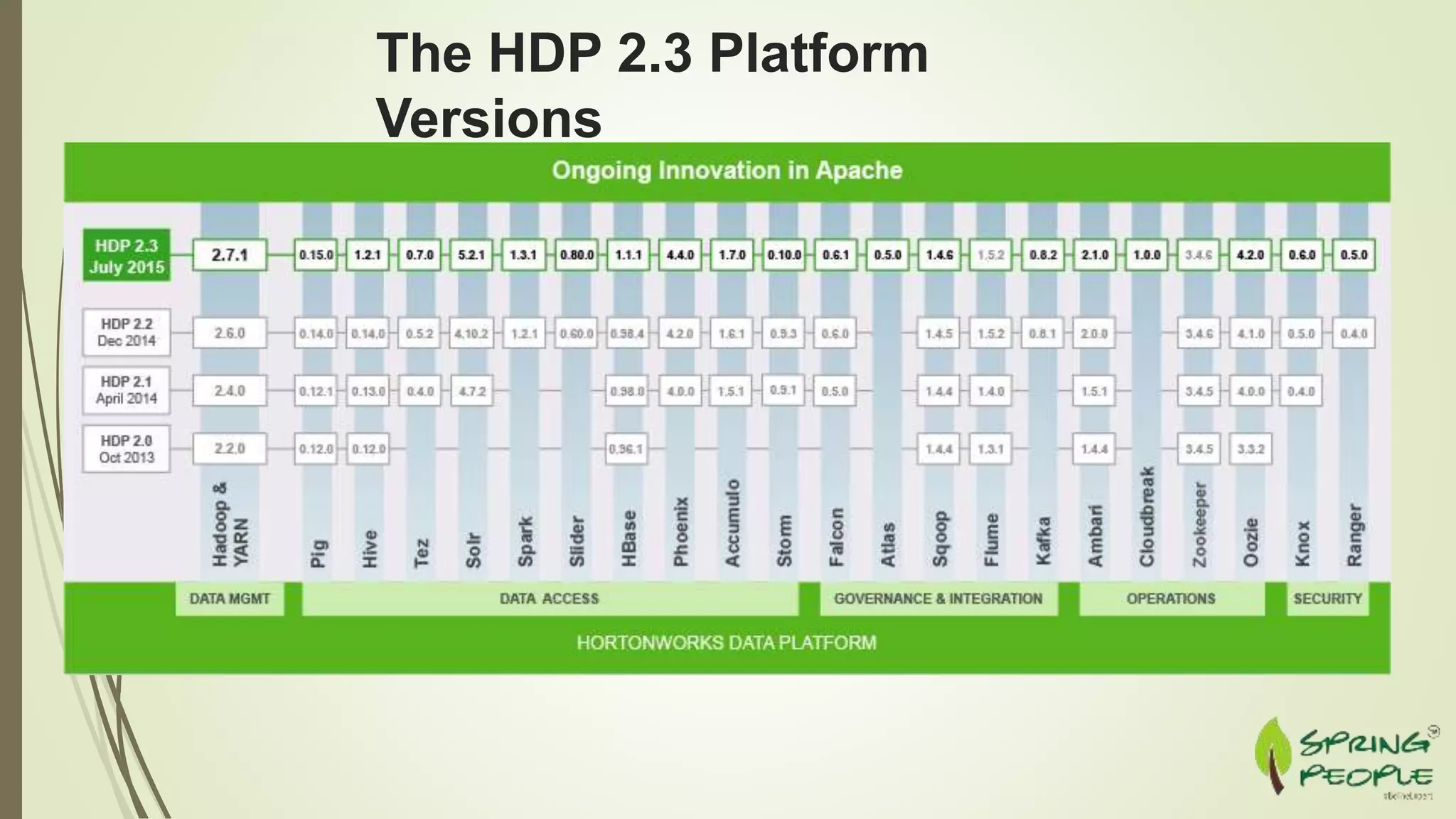

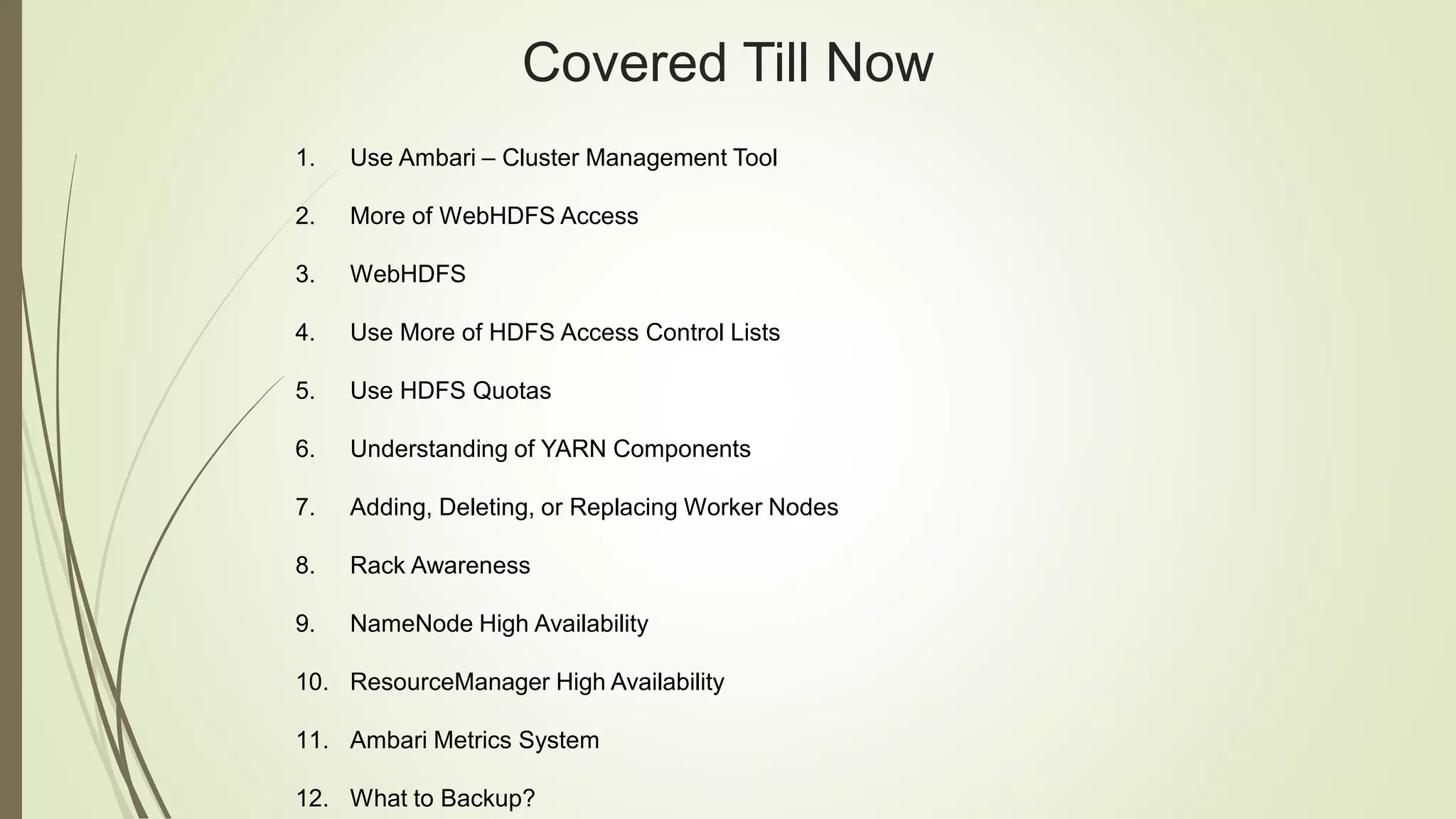

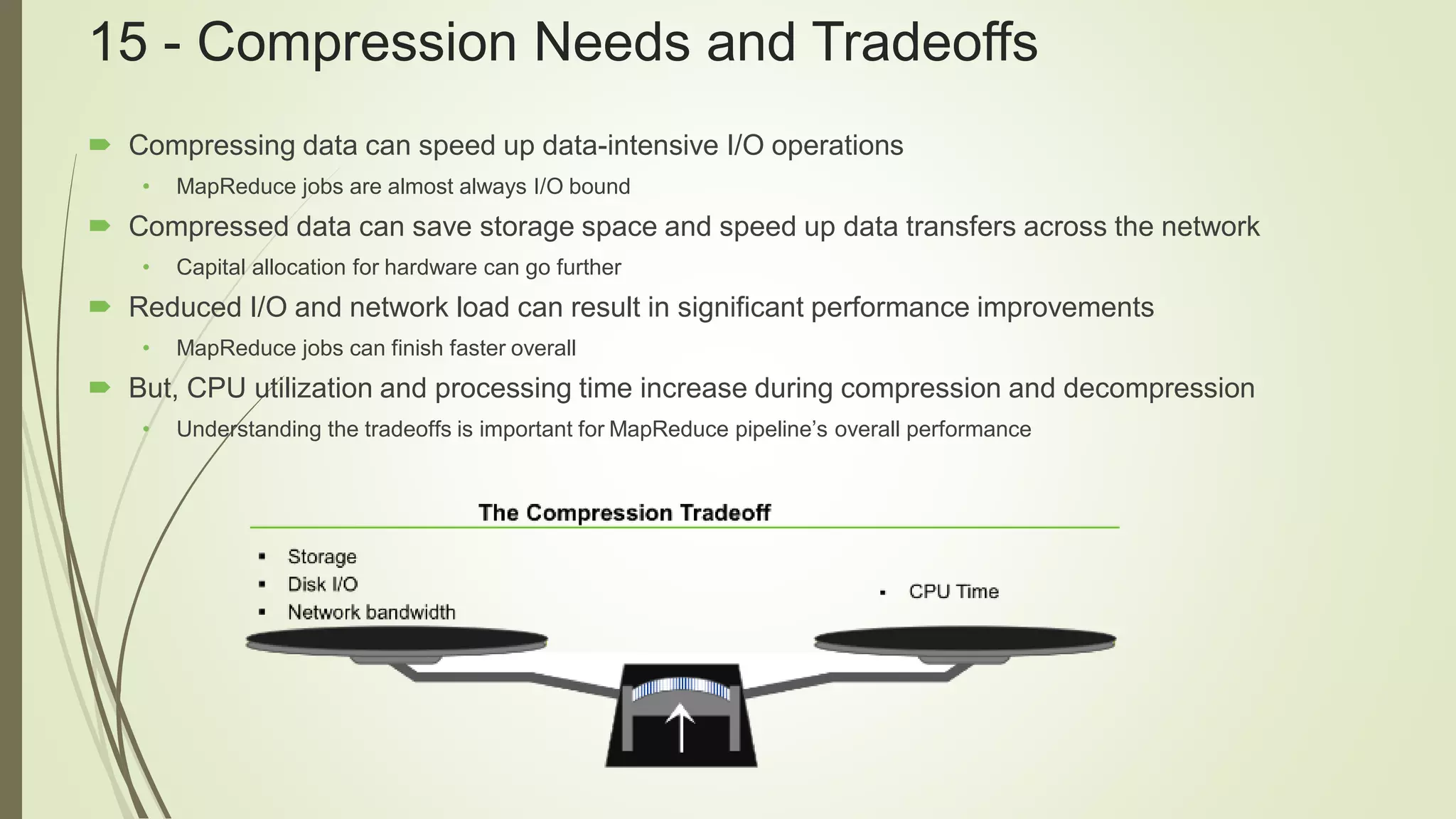

The document outlines best practices for administering Hadoop with Hortonworks Data Platform (HDP) 2.3. It covers features of the Hadoop ecosystem, such as Ambari for cluster management and the YARN components, along with HDFS configuration, data compression trade-offs, Sqoop security measures, and automating backups with Falcon and Oozie. It also promotes upcoming SpringPeople training sessions on these technologies.

![Slide 19: Running the Balancer](https://image.slidesharecdn.com/hadoopadminbestpracticespart2-160812144002/75/Best-Practices-for-Administering-Hadoop-with-Hortonworks-Data-Platform-HDP-2-3-_Part-2-13-2048.jpg)

19 - Running the Balancer

• Can be run periodically as a batch job, for example every 24 hours or weekly

• Run it after new nodes have been added to the cluster

• To run the balancer: `hdfs balancer [-threshold <threshold>] [-policy <policy>]`

• Runs until there are no blocks left to move, or until it loses contact with the NameNode

• Can be stopped with Ctrl+C
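As a rough illustration of running the balancer as a periodic batch job, the Python sketch below wraps the `hdfs balancer` command from the slide. It assumes the `hdfs` CLI is on `PATH` and that `-policy` accepts `datanode` or `blockpool` and `-threshold` a percentage between 1 and 100, as in Hadoop 2.x. The function names (`build_balancer_cmd`, `run_balancer`, `unbalanced_nodes`) are hypothetical, not part of HDP. `unbalanced_nodes` mirrors the HDFS definition of "balanced": each DataNode's utilization (used/capacity) is within `threshold` percentage points of the overall cluster utilization.

```python
from __future__ import annotations

import shutil
import subprocess
from typing import Optional

# Policy names accepted by `hdfs balancer -policy` in Hadoop 2.x.
VALID_POLICIES = ("datanode", "blockpool")


def build_balancer_cmd(threshold: Optional[float] = None,
                       policy: Optional[str] = None) -> list[str]:
    """Return argv for: hdfs balancer [-threshold <threshold>] [-policy <policy>]."""
    cmd = ["hdfs", "balancer"]
    if threshold is not None:
        # Threshold is a percentage of disk capacity; Hadoop 2.x rejects
        # values outside [1, 100].
        if not 1 <= threshold <= 100:
            raise ValueError("threshold must be between 1 and 100")
        cmd += ["-threshold", format(threshold, "g")]
    if policy is not None:
        if policy not in VALID_POLICIES:
            raise ValueError(f"policy must be one of {VALID_POLICIES}")
        cmd += ["-policy", policy]
    return cmd


def run_balancer(threshold: Optional[float] = None,
                 policy: Optional[str] = None,
                 timeout: Optional[float] = None) -> int:
    """Run the balancer once (e.g. from a nightly batch job); return its exit code."""
    if shutil.which("hdfs") is None:
        raise FileNotFoundError("hdfs CLI not found on PATH")
    # Blocks until the balancer exits on its own; if `timeout` elapses first,
    # subprocess.run kills it and raises subprocess.TimeoutExpired.
    return subprocess.run(build_balancer_cmd(threshold, policy),
                          timeout=timeout).returncode


def unbalanced_nodes(used: dict[str, float], capacity: dict[str, float],
                     threshold: float = 10.0) -> list[str]:
    """Hypothetical helper: DataNodes whose utilization (used/capacity, in %)
    differs from overall cluster utilization by more than `threshold` points."""
    cluster_util = 100.0 * sum(used.values()) / sum(capacity.values())
    return sorted(node for node in capacity
                  if abs(100.0 * used[node] / capacity[node] - cluster_util) > threshold)
```

For example, with three DataNodes of equal capacity at 80%, 20%, and 50% used, cluster utilization is 50%, so `unbalanced_nodes` reports the first two nodes at the default threshold of 10 (the balancer's documented default). A scheduler such as cron or an Oozie coordinator could call `run_balancer(threshold=5)` on the chosen interval and treat a nonzero exit code as "needs attention".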