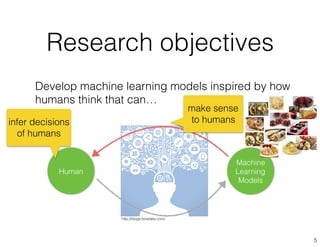

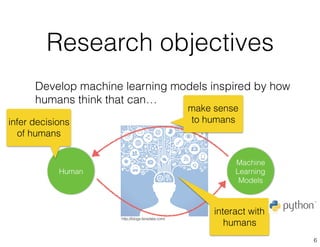

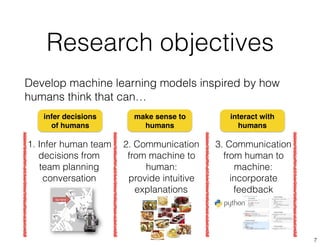

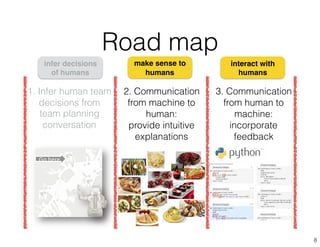

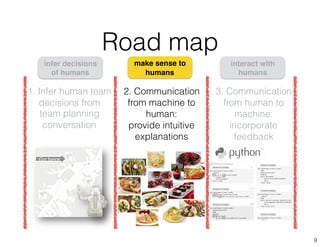

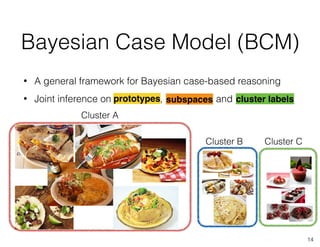

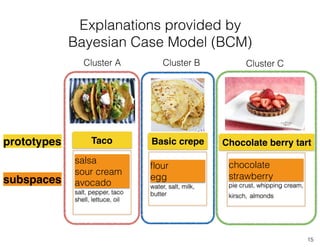

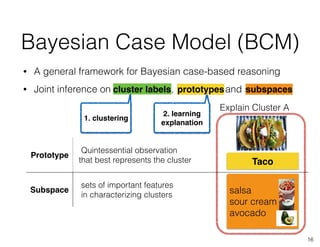

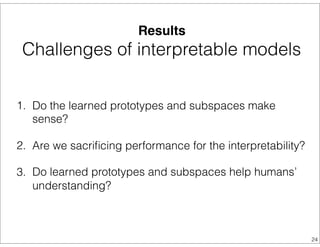

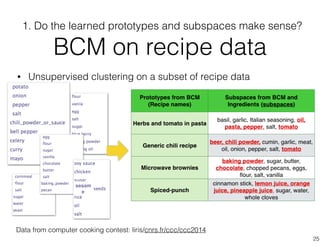

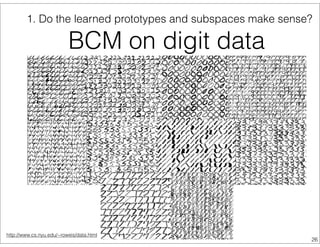

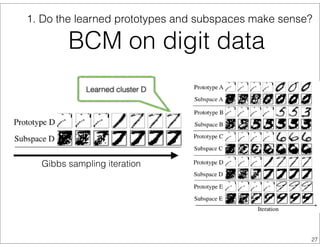

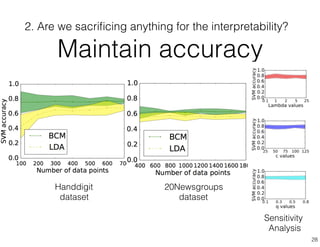

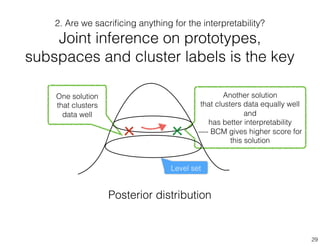

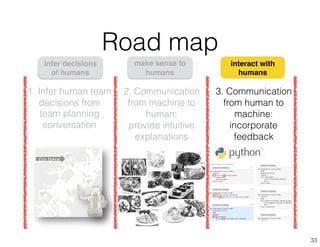

The document discusses the development of interactive and interpretable machine learning models designed to enhance human-machine collaboration by mirroring how humans make decisions. It describes the Bayesian Case Model (BCM), which explains clusters through prototypes and subspaces, and its interactive extension (iBCM), with applications to recipe data and introductory programming education. It emphasizes explaining machine learning results intuitively to users while letting user feedback refine the model. The research aims to bridge the gap between machine learning and human reasoning, enabling effective communication in both directions between machines and humans.

![Mirror the way humans think
• Humans' tactical decisions are based on exemplar-based reasoning (matching and prototyping) [Cohen 96, Newell 72]
• Skilled firefighters use recognition-primed decision making: a situation is matched to typical cases [Klein 89]
• Machines can better support people's decision-making by representing data in the same way](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-10-320.jpg)

![Case-based reasoning and interpretable models
Case-based reasoning
• Applied in many domains thanks to its intuitive power [Aamodt 94, Slade 91, Bekkerman 06]
Limitations:
• Always requires labels (supervised)
• Does not scale to complex problems
• Does not leverage global patterns in the data
Interpretable models
• Decision trees [De'ath 00]
• Sparse linear classifiers [Tibshirani 96, Ustun 14]
• Prototype-based models [Graf 09]
Limitations:
• Sparsity is not enough [Freitas 14]
• Restricted to linear models or supervised settings](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-11-320.jpg)

![Our approach: Bayesian Case Model (BCM)
Bayesian generative models combined with case-based reasoning give the Bayesian Case Model (BCM)
• Leverages the power of examples (prototypes) and subspaces (hot features) to explain machine learning results
• Explains complicated concepts using examples
[Kim, Rudin, Shah NIPS 2014]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-12-320.jpg)
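To make the idea of explaining a cluster with a prototype and a subspace concrete, here is a minimal Python sketch. It assumes each data point is a set of binary features (for recipes, the ingredients present), that a cluster is summarized by one prototype data point plus a subspace given as the set of its "hot" features, and it produces a sentence in the style of the crepe example in the talk. `Cluster`, `explain`, and the toy prototype contents are hypothetical illustrations, not part of any BCM release.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cluster:
    """Hypothetical summary of one cluster: a name, a prototype, and a subspace."""
    name: str
    prototype: frozenset  # features present in the prototype data point
    subspace: frozenset   # "hot" features that matter most for this cluster


def explain(cluster: Cluster) -> str:
    """Explain a cluster by the prototype's features that fall inside the subspace."""
    # Only features that are both in the prototype and marked important are shown.
    hot = sorted(cluster.prototype & cluster.subspace)
    if not hot:
        return f"It is a {cluster.name}."
    if len(hot) == 1:
        reasons = hot[0]
    else:
        reasons = ", ".join(hot[:-1]) + " and " + hot[-1]
    return f"It is a {cluster.name}, since it has {reasons}."


# Illustrative toy prototype: the extra ingredients are made up for the example.
crepe = Cluster(
    name="crepe",
    prototype=frozenset({"flour", "egg", "milk", "butter", "salt"}),
    subspace=frozenset({"flour", "egg"}),
)
print(explain(crepe))  # It is a crepe, since it has egg and flour.
```

The subspace does the filtering here. The prototype may contain many features, but only the ones flagged as important reach the explanation, which keeps it short.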

![Bayesian Case Model (BCM): 1. Clustering part
• Admixture model for modeling the underlying distributions
Example (clusters A, B and C): "It is a crepe, since it has flour and egg. It is inspired by Mexican food, because it has avocado, salsa and sour cream."
Cluster labels: mexican_crepe = [A, B, A]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-17-320.jpg)
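In the admixture view, each feature of a data point gets its own cluster label, so `mexican_crepe = [A, B, A]` means two of its features were attributed to cluster A and one to cluster B. The minimal sketch below turns such a label vector into the data point's empirical cluster proportions. The function name `cluster_proportions` is hypothetical.

```python
from collections import Counter


def cluster_proportions(labels, clusters):
    """Fraction of a data point's features assigned to each cluster."""
    counts = Counter(labels)
    total = len(labels)
    return {c: counts[c] / total for c in clusters}


clusters = ["A", "B", "C"]
mexican_crepe = ["A", "B", "A"]
print(cluster_proportions(mexican_crepe, clusters))
# {'A': 0.6666666666666666, 'B': 0.3333333333333333, 'C': 0.0}
```

In the model itself these labels are latent and inferred. The proportions here only summarize one particular assignment.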

![Bayesian Case Model (BCM): 1. Clustering part
• Admixture model for modeling the underlying distributions
Example (clusters A, B and C): "It is a crepe, since it has flour and egg. It is a sweet crepe, like a chocolate and berry dessert."
Cluster labels: chocolate_crepe = [B, C, C]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-18-320.jpg)

![Bayesian Case Model (BCM): 1. Clustering part
• The cluster distribution of each data point, combined with supervised classification methods, can be used to evaluate clustering performance [1]
• A hyperparameter, the concentration parameter of the cluster distribution, controls how many different cluster labels appear within one data point
[1] D. Blei, A. Ng, M. Jordan 2003](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-19-320.jpg)
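The effect of the concentration parameter can be illustrated by sampling. The sketch below assumes, as in LDA-style admixture models, that a data point's cluster distribution is drawn from a symmetric Dirichlet with concentration `alpha`, and that each feature's label is then drawn from that distribution. It uses only the standard library, drawing the Dirichlet sample as a normalized vector of Gamma variates. The function names and default sizes are hypothetical choices for illustration.

```python
import random


def sample_dirichlet(alpha, k, rng):
    """Symmetric Dirichlet(alpha) draw via normalized Gamma(alpha, 1) variates."""
    draws = [rng.gammavariate(alpha, 1.0) for _ in range(k)]
    total = sum(draws)
    return [d / total for d in draws]


def sample_labels(alpha, n_clusters, n_features, rng):
    """Draw a cluster distribution, then one cluster label per feature."""
    pi = sample_dirichlet(alpha, n_clusters, rng)
    return rng.choices(range(n_clusters), weights=pi, k=n_features)


def mean_distinct_labels(alpha, n_clusters=10, n_features=20, trials=2000, seed=0):
    """Average number of distinct cluster labels per simulated data point."""
    rng = random.Random(seed)
    total = 0
    for _ in range(trials):
        total += len(set(sample_labels(alpha, n_clusters, n_features, rng)))
    return total / trials


for alpha in (0.05, 1.0, 20.0):
    print(alpha, round(mean_distinct_labels(alpha), 2))
```

With a small `alpha`, most of the probability mass tends to fall on a few clusters, so each data point mixes only a handful of labels. A large `alpha` spreads labels across many clusters.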

![Collapsed Gibbs sampling for inference
• Observed to converge quickly in admixture models
• Integrating out … and … for efficient inference
[Kim, Rudin, Shah NIPS 2014]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-30-320.jpg)
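The Python sketch below shows collapsed Gibbs sampling for a simplified admixture model. It is not full BCM. Each feature of each data point has a latent cluster label. The per-data-point cluster distributions (symmetric Dirichlet, `alpha`) and the per-cluster, per-feature value distributions (symmetric Dirichlet, `lam`) are integrated out, so only the labels are resampled, using count statistics. BCM's prototype and subspace variables, which shape the per-cluster distributions, are deliberately left out. All function and argument names are hypothetical.

```python
import random


def collapsed_gibbs(X, n_values, n_clusters, alpha=0.1, lam=0.1, iters=50, seed=0):
    """Collapsed Gibbs sampling of per-feature cluster labels z[i][j].

    X[i][j] is the value (0..n_values[j]-1) of feature j in data point i.
    Cluster proportions and per-cluster feature distributions are integrated out.
    """
    rng = random.Random(seed)
    n_points, n_feats = len(X), len(n_values)
    n_is = [[0] * n_clusters for _ in range(n_points)]   # labels of point i in cluster s
    m_sjv = [[[0] * n_values[j] for j in range(n_feats)] for _ in range(n_clusters)]
    m_sj = [[0] * n_feats for _ in range(n_clusters)]    # points whose feature j is in s

    def update(i, j, s, delta):
        v = X[i][j]
        n_is[i][s] += delta
        m_sjv[s][j][v] += delta
        m_sj[s][j] += delta

    # Random initialisation of the labels, then fill the count tables.
    z = [[rng.randrange(n_clusters) for _ in range(n_feats)] for _ in range(n_points)]
    for i in range(n_points):
        for j in range(n_feats):
            update(i, j, z[i][j], +1)

    for _ in range(iters):
        for i in range(n_points):
            for j in range(n_feats):
                update(i, j, z[i][j], -1)  # remove the current label from the counts
                v = X[i][j]
                # p(z_ij = s | rest) ∝ (n_is + alpha) * (m_sjv + lam) / (m_sj + V_j * lam)
                weights = [
                    (n_is[i][s] + alpha)
                    * (m_sjv[s][j][v] + lam) / (m_sj[s][j] + n_values[j] * lam)
                    for s in range(n_clusters)
                ]
                z[i][j] = rng.choices(range(n_clusters), weights=weights, k=1)[0]
                update(i, j, z[i][j], +1)  # add the new label back
    return z, n_is, m_sjv, m_sj


# Toy binary data (illustrative only): 6 points, 4 features, 2 clusters.
X = [[1, 1, 0, 0], [1, 1, 0, 0], [1, 1, 0, 1],
     [0, 0, 1, 1], [0, 0, 1, 1], [0, 1, 1, 1]]
z, *_ = collapsed_gibbs(X, n_values=[2, 2, 2, 2], n_clusters=2)
print(z)
```

Integrating out the continuous parameters means each step touches only integer counts. This matches the slide's point that collapsing makes inference efficient.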

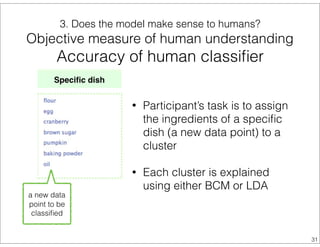

![3. Does the model make sense to humans?
Objective measure of human understanding: accuracy of the human classifier
• 384 classification questions asked to 24 people
• Each question shows a new data point to be classified, together with explanations of the clusters, given either as:
1. BCM: the ingredients of the prototype recipe
2. LDA: representative ingredients of each cluster
• Statistically significantly better performance with BCM (85.9% vs. 71.3%)
[Kim, Rudin, Shah NIPS 2014]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-32-320.jpg)

![Related work on interactive machine learning
• Interact via multiple model parameter settings [Patel 10, Amershi 15]
• Design smart interfaces [Amershi 11] and visualization [Chaney 12, Gou 03]
• Interact via a simplified medium of interaction [Kapoor 10, Ware 01]
Prototypes and subspaces!](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-37-320.jpg)
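One generic way to let a user's edits to prototypes or subspaces steer a sampler is to clamp the variables the user has set and resample only the rest. The sketch below shows this for binary subspace indicators, where 1 means a feature is important for a cluster. It illustrates a standard clamping technique under stated assumptions and is not iBCM's actual update. `resample_subspace` is hypothetical, and the caller-supplied `prob_on` stands in for whatever conditional probability the model would compute.

```python
import random
from typing import Callable, Dict, List


def resample_subspace(
    n_features: int,
    clamped: Dict[int, int],
    prob_on: Callable[[int], float],
    rng: random.Random,
) -> List[int]:
    """Resample each binary subspace indicator unless the user has fixed it.

    clamped: feature index -> value chosen by the user (kept as-is)
    prob_on: model's conditional probability that feature j is "on"
    """
    new = []
    for j in range(n_features):
        if j in clamped:
            new.append(clamped[j])  # user feedback overrides the sampler
        else:
            new.append(1 if rng.random() < prob_on(j) else 0)
    return new


rng = random.Random(0)
# The user marks feature 0 as important and feature 3 as unimportant.
feedback = {0: 1, 3: 0}
print(resample_subspace(4, feedback, lambda j: 0.5, rng))
```

Features 0 and 3 always come out as 1 and 0. Every other indicator keeps being resampled, so the model can still adapt around the user's choices.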

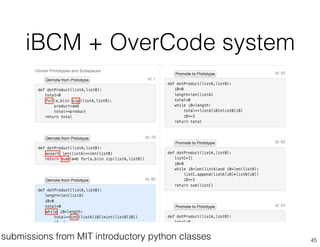

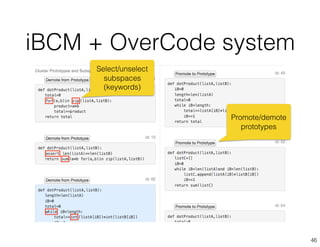

![iBCM for introductory programming education
• Why education?
• Current teachers' workflow for creating a grading rubric: randomly pick 4-5 assignments, then Hodgepodge Grading [Cross 99]
• Understanding the variation in student submissions is important for providing appropriate, tailored feedback to students [Basu 13, Huang 13]
• What are the challenges?
• Extracting the right features: OverCode [Glassman 15]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-44-320.jpg)

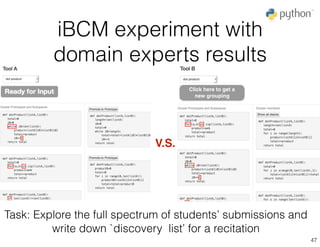

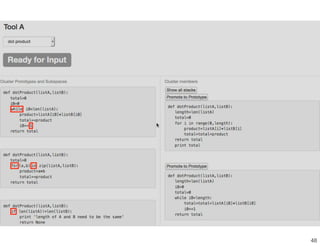

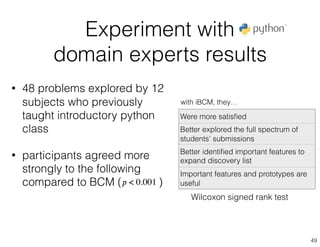

![Experiment with domain experts: results
• 48 problems explored by 12 subjects who had previously taught an introductory Python class
• Participants agreed more strongly that, with iBCM compared to BCM, they:
• were more satisfied
• better explored the full spectrum of students' submissions
• better identified important features to expand the discovery list
• found the important features and prototypes useful
Wilcoxon signed-rank test, p < 0.001
"[iBCM enabled me to] go in depth as to how students could do"
"[iBCM] is useful with large datasets where brute-force would not be practical."](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-50-320.jpg)
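The slide reports a Wilcoxon signed-rank test, which suits paired ordinal responses such as the same participants rating two conditions. The standard-library sketch below implements the test with the normal approximation, including a tie correction but no continuity correction. The function name is hypothetical and the ratings in the example are made up. For small samples, an exact test such as the one offered by `scipy.stats.wilcoxon` is preferable.

```python
import math


def wilcoxon_signed_rank(x, y):
    """Two-sided Wilcoxon signed-rank test for paired samples (normal approximation).

    Returns (w_plus, w_minus, z, p_value). Zero differences are dropped.
    """
    diffs = [b - a for a, b in zip(x, y) if b != a]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    # Rank absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda k: abs(diffs[k]))
    ranks = [0.0] * n
    tie_term = 0.0
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        t = j - i + 1
        tie_term += t ** 3 - t
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    mean = n * (n + 1) / 4
    var = n * (n + 1) * (2 * n + 1) / 24 - tie_term / 48
    z = (w_plus - mean) / math.sqrt(var)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value
    return w_plus, w_minus, z, p


# Made-up 5-point Likert ratings from 12 hypothetical participants.
baseline = [3, 2, 4, 3, 2, 3, 4, 2, 3, 3, 2, 4]
treatment = [5, 4, 5, 4, 4, 5, 5, 3, 4, 5, 4, 5]
print(wilcoxon_signed_rank(baseline, treatment))
```

The test is rank-based, so it only assumes that rating differences can be ordered. This fits Likert-style agreement scales better than a paired t-test does.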

![Summary
Communication from machine to human: provide intuitive explanations that make sense to humans
• Inspiration: how humans make decisions
• Approach: case-based Bayesian model
• Results: provided intuitive explanations while maintaining performance
Communication from human to machine: incorporate feedback and interact with humans
• Approach: enable interaction by decomposing sampling inference steps
• Results: implemented and validated the approach in the education domain
[Kim, Chacha, Shah AAAI13] [Kim, Chacha, Shah JAIR15] [Kim, Rudin, Shah NIPS 2014] [Kim, Patel, Rostamizadeh, Shah AAAI 2015] [Kim, Glassman, Johnson, Shah submitted*]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-51-320.jpg)

![Next steps
• Interpretability for data exploration: visualization
• Domain-specific interpretability: learning features that distinguish clusters
• Interactive machine learning for debugging models or exploring hyperparameters
Example of misclassified data: Doc id #24, predicted: politics, true label: medicine
[Kim, Patel, Rostamizadeh, Shah AAAI 2015] [Kim, Doshi-Velez, Shah NIPS 2015]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-52-320.jpg)

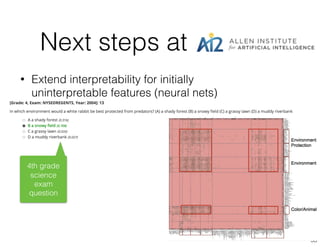

![Q&A
AI2 is hiring research interns any time of the year. Shoot me an email if interested! beenk@allenai.org
[Kim, Doshi-Velez, Shah NIPS 2015]](https://image.slidesharecdn.com/beenresearchtalks2015-160114232047/85/Been-Kim-Interpretable-machine-learning-Nov-2015-54-320.jpg)