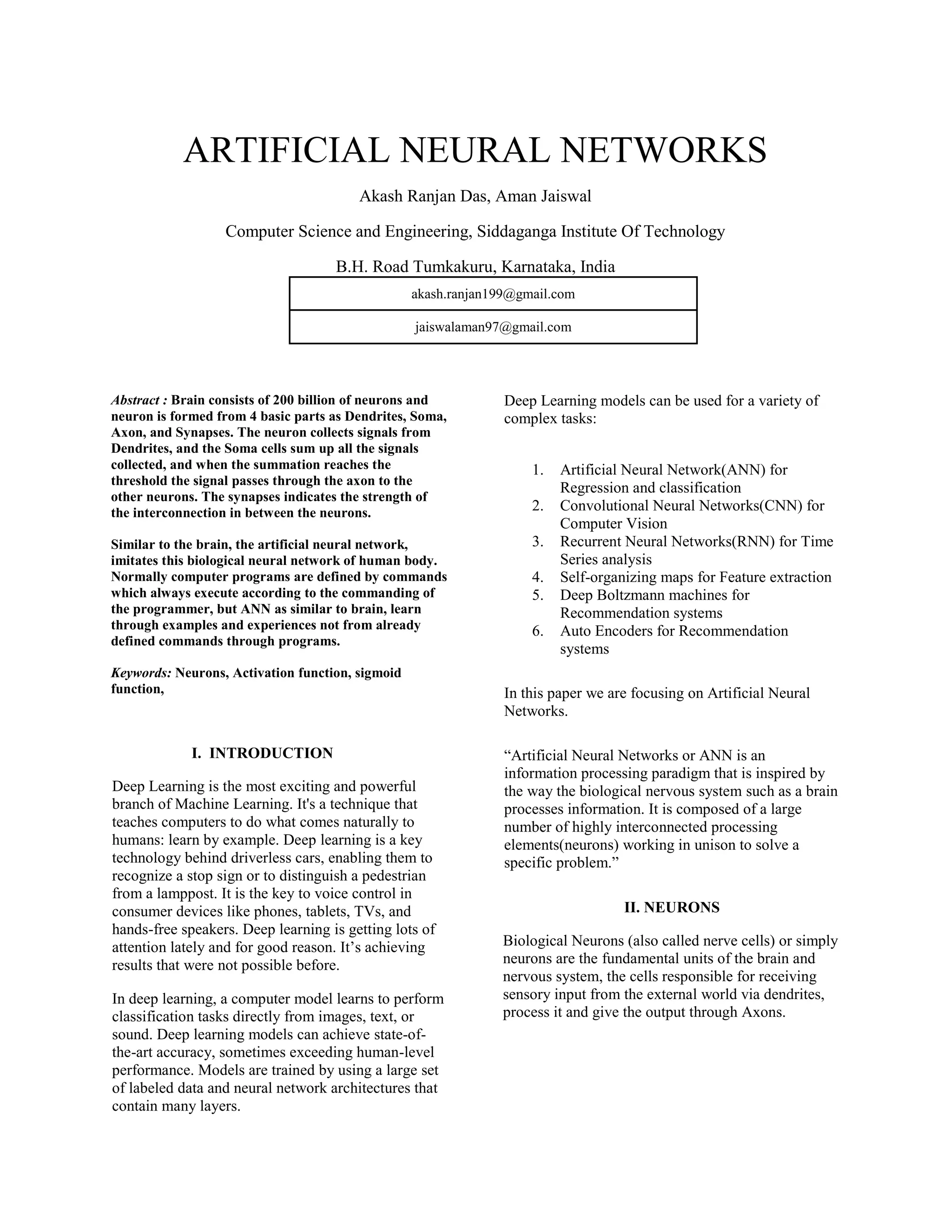

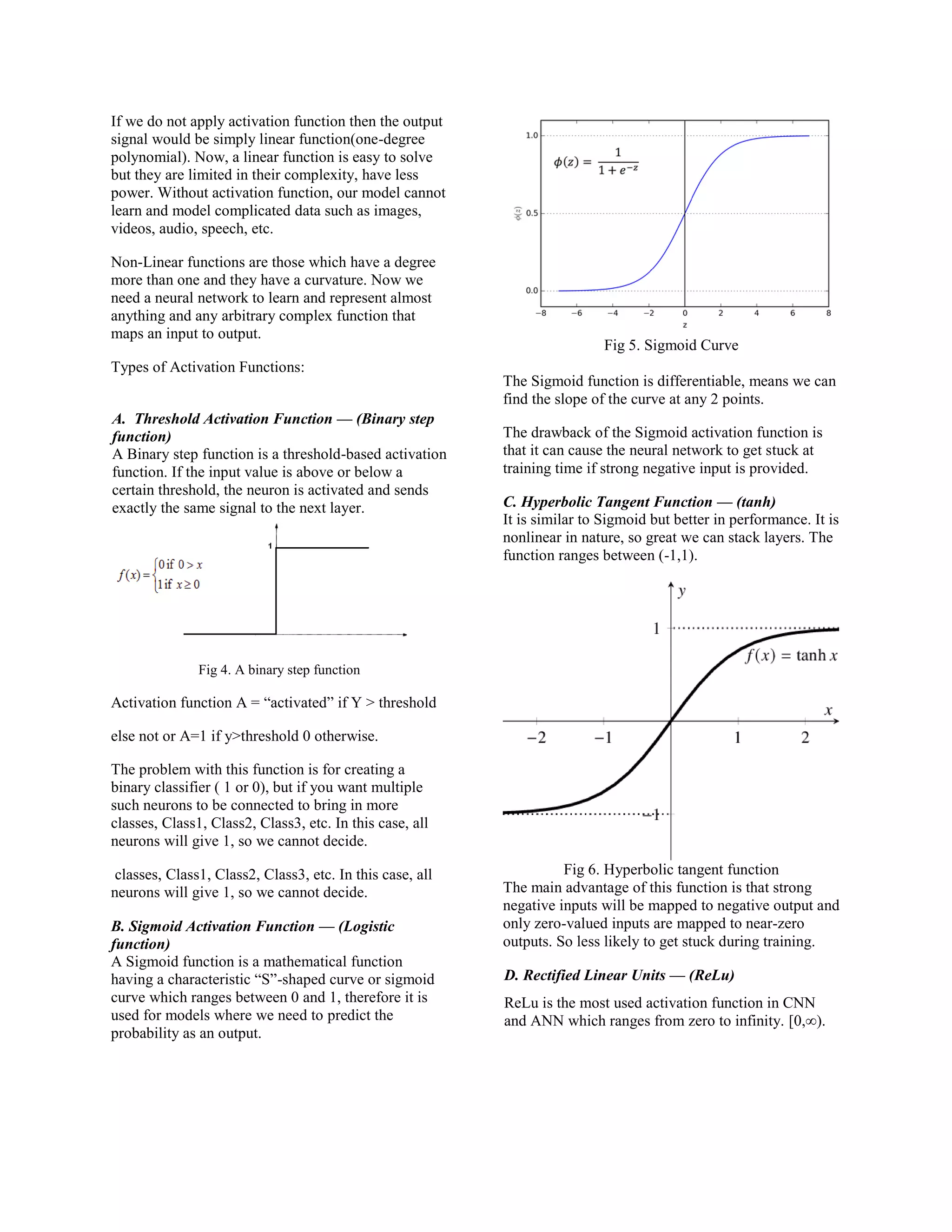

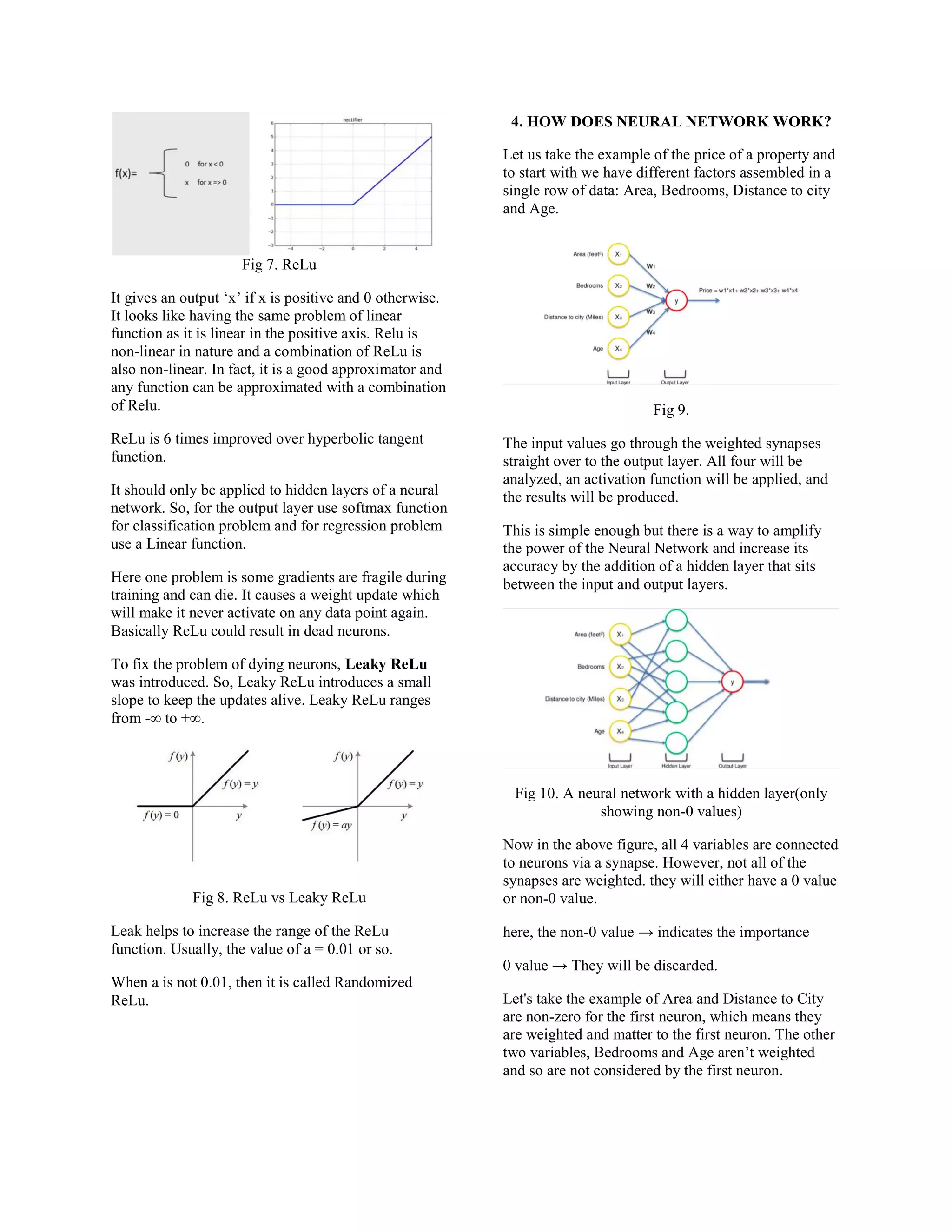

This document discusses artificial neural networks (ANNs) and how they are inspired by the biological neural networks of the human brain. It describes the basic components of a biological neuron (dendrites, soma, axon, and synapses) and how ANNs attempt to mimic this structure. It then covers key aspects of ANNs, including activation functions such as sigmoid, tanh, and ReLU, and explains how a neural network takes input values, applies weights and an activation function, and produces an output. The focus is on ANNs for problems such as regression and classification.
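
To make the input, weights, activation, output flow concrete, here is a minimal sketch of a single artificial neuron in plain Python. The function names (`neuron_output`, `sigmoid`, `relu`), the bias term, and the sample numbers are illustrative assumptions and are not taken from the document itself. The three activation functions follow their standard textbook definitions.

```python
import math


def sigmoid(z: float) -> float:
    """Squash z into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z: float) -> float:
    """Squash z into the range (-1, 1)."""
    return math.tanh(z)


def relu(z: float) -> float:
    """Pass positive values through unchanged; clamp negatives to 0."""
    return max(0.0, z)


def neuron_output(inputs, weights, bias, activation):
    """Compute activation(sum(x_i * w_i) + bias) for one neuron.

    The bias term is an assumption of this sketch; the summary above
    only mentions inputs, weights, and an activation function.
    """
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)


# Hypothetical sample values, chosen only for illustration.
inputs = [0.5, -1.0, 2.0]
weights = [0.4, 0.3, -0.2]
bias = 0.1

for name, fn in [("sigmoid", sigmoid), ("tanh", tanh), ("relu", relu)]:
    print(name, neuron_output(inputs, weights, bias, fn))
```

The same weighted sum (about -0.4 for these values) yields a different output under each activation function. This difference is one reason the choice of activation matters: a sigmoid output can be read as a probability in binary classification, while regression outputs are often left unbounded.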