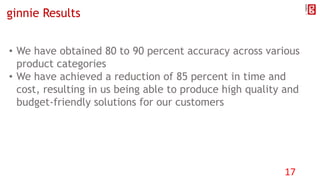

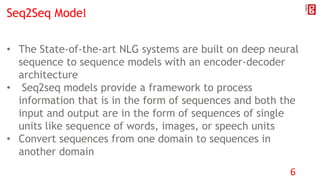

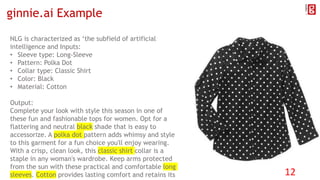

The presentation discusses the application of Natural Language Generation (NLG) in eCommerce and focuses on its two main types: template-based and advanced NLG systems. It highlights the advantages of advanced NLG tools. These tools can generate unique product descriptions quickly, which, the presentation argues, improves product visibility and the likelihood of purchase. It also covers the sequence-to-sequence (Seq2Seq) models and encoder-decoder architecture that underpin modern NLG systems. Finally, it presents the platform ginnie.ai as an example of these concepts in practice.
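A template-based system can be as simple as fixed sentence patterns with a slot for each attribute value. The sketch below makes that contrast concrete. It assumes a product is a plain dictionary mapping attribute names to values, using the shirt attributes from the Seq2Seq slide. The `TEMPLATES` wording and the `describe` helper are hypothetical and are not taken from the presentation or from ginnie.ai.

```python
from string import Template

# Hypothetical templates: one fixed sentence pattern per attribute, with a $value slot.
TEMPLATES = {
    "Color": Template("Available in $value."),
    "Material": Template("Made from $value."),
    "Collar": Template("Features a $value collar."),
    "Sleeve": Template("Cut in a $value style."),
}


def describe(product: dict[str, str]) -> str:
    """Fill the template for every attribute that has one; other keys are ignored."""
    sentences = [
        TEMPLATES[attr].substitute(value=value.lower())
        for attr, value in product.items()
        if attr in TEMPLATES
    ]
    return " ".join(sentences)


shirt = {
    "Category": "Apparel Women Shirts",  # no template for this key, so it is skipped
    "Color": "Black",
    "Material": "Cotton",
    "Collar": "Classic",
    "Sleeve": "Long-Sleeve",
}
print(describe(shirt))
# Available in black. Made from cotton. Features a classic collar. Cut in a long-sleeve style.
```

Every shirt with the same attribute values gets identical wording. That kind of repetition is what the advanced, model-based systems described here aim to avoid.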

![Using Seq2Seq Model in ginnie: an LSTM encoder reads product attributes and passes its internal LSTM states (h, c) to an LSTM decoder, which generates a description sentence between start and stop tokens (slide 16)](https://image.slidesharecdn.com/applicationofnlginecommerce-190331021536/85/Application-of-NLG-in-e-commerce-16-320.jpg)

| Encoder input (product attributes) | Decoder input | Decoder target |
|---|---|---|
| Apparel Women Shirts Color Black | [START] Available in a dark, cool black for lots of attitude | Available in a dark, cool black for lots of attitude[STOP] |
| Apparel Women Shirts Material Cotton | [START] Breathable and durable cotton materials allow for lightweight all-day comfort | Breathable and durable cotton materials allow for lightweight all-day comfort[STOP] |
| Apparel Women Shirts Collar Classic | [START] Designed with a classic shirt collar for a timeless look that is always in style | Designed with a classic shirt collar for a timeless look that is always in style[STOP] |
| Apparel Women Shirts Sleeve Long-Sleeve | [START] Get ready for the office in the fashionable long sleeves of this design | Get ready for the office in the fashionable long sleeves of this design[STOP] |
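The slide's pipeline can be illustrated with a minimal, untrained sketch in pure Python, so it runs without a deep-learning framework. An encoder LSTM reads the attribute tokens, and its final internal states (h, c) initialise a decoder LSTM. The decoder starts from `[START]` and emits words until `[STOP]`. The slide pairs each `[START] ...` sentence with a matching `...[STOP]` sentence. This appears to follow the usual teacher-forcing setup, where the decoder input is the target shifted right by `[START]`; `teacher_forcing_pair` shows that split. Several choices are assumptions for illustration and do not describe ginnie.ai's actual model: one-hot inputs, hidden size 8, random weights, greedy decoding, and the names `LSTMCell`, `Seq2Seq` and `teacher_forcing_pair`.

```python
import math
import random

# Illustrative sketch only, not ginnie.ai's implementation:
# untrained random weights, one-hot token inputs, greedy decoding.


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def one_hot(index: int, size: int) -> list[float]:
    vec = [0.0] * size
    vec[index] = 1.0
    return vec


class LSTMCell:
    """A single LSTM cell with random (untrained) weights."""

    def __init__(self, input_size: int, hidden_size: int, rng: random.Random):
        self.hidden_size = hidden_size
        fan_in = input_size + hidden_size
        # 4 * hidden_size rows: input gate, forget gate, candidate, output gate.
        self.W = [[rng.uniform(-0.5, 0.5) for _ in range(fan_in)] for _ in range(4 * hidden_size)]
        self.b = [0.0] * (4 * hidden_size)

    def step(self, x, h, c):
        z = x + h  # concatenate the input vector and the previous hidden state
        pre = [sum(w * v for w, v in zip(row, z)) + b for row, b in zip(self.W, self.b)]
        n = self.hidden_size
        i = [sigmoid(p) for p in pre[0:n]]
        f = [sigmoid(p) for p in pre[n:2 * n]]
        g = [math.tanh(p) for p in pre[2 * n:3 * n]]
        o = [sigmoid(p) for p in pre[3 * n:4 * n]]
        c_new = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
        h_new = [ok * math.tanh(ck) for ok, ck in zip(o, c_new)]
        return h_new, c_new


class Seq2Seq:
    def __init__(self, src_vocab, tgt_vocab, hidden_size=8, seed=0):
        rng = random.Random(seed)
        self.src_index = {tok: k for k, tok in enumerate(dict.fromkeys(src_vocab))}
        # Ensure the special tokens exist; dict.fromkeys drops duplicates, keeping order.
        self.tgt_vocab = list(dict.fromkeys(["[START]", "[STOP]", *tgt_vocab]))
        self.tgt_index = {tok: k for k, tok in enumerate(self.tgt_vocab)}
        self.encoder = LSTMCell(len(self.src_index), hidden_size, rng)
        self.decoder = LSTMCell(len(self.tgt_vocab), hidden_size, rng)
        # Output projection: one score per target-vocabulary token.
        self.W_out = [[rng.uniform(-0.5, 0.5) for _ in range(hidden_size)] for _ in self.tgt_vocab]

    def encode(self, tokens):
        n = self.encoder.hidden_size
        h, c = [0.0] * n, [0.0] * n
        for tok in tokens:
            h, c = self.encoder.step(one_hot(self.src_index[tok], len(self.src_index)), h, c)
        return h, c  # the internal LSTM states (h, c) handed to the decoder

    def greedy_decode(self, tokens, max_len=12):
        h, c = self.encode(tokens)  # the decoder starts from the encoder's final state
        prev, out = "[START]", []
        for _ in range(max_len):
            h, c = self.decoder.step(one_hot(self.tgt_index[prev], len(self.tgt_vocab)), h, c)
            scores = [sum(w * hk for w, hk in zip(row, h)) for row in self.W_out]
            prev = self.tgt_vocab[max(range(len(scores)), key=scores.__getitem__)]
            if prev == "[STOP]":
                break
            out.append(prev)
        return out


def teacher_forcing_pair(sentence):
    """Decoder training pair: input is prefixed with [START], target ends with [STOP]."""
    words = sentence.split()
    return ["[START]"] + words, words + ["[STOP]"]


src = "Apparel Women Shirts Color Black".split()
target = "Available in a dark, cool black for lots of attitude"
dec_in, dec_tgt = teacher_forcing_pair(target)
model = Seq2Seq(src, target.split())
print(model.greedy_decode(src))  # arbitrary words: the weights are untrained
```

Training would fit the weights so that, given `dec_in`, the decoder predicts `dec_tgt` one token at a time. At generation time, `greedy_decode` feeds each predicted word back in as the next input.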