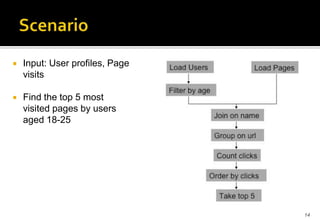

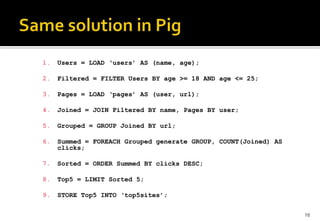

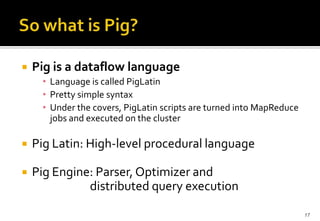

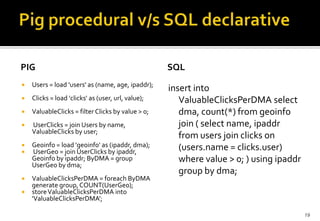

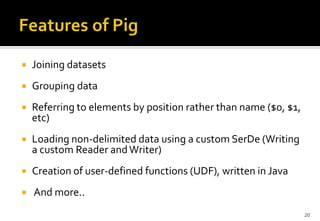

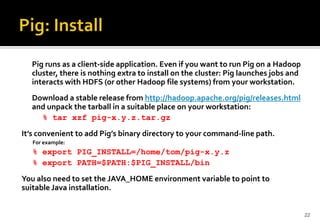

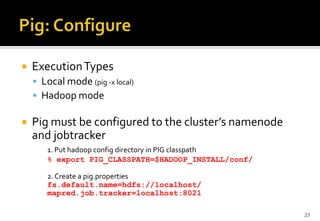

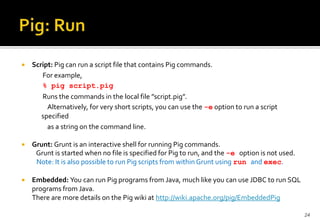

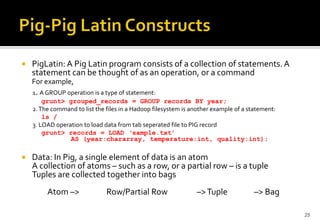

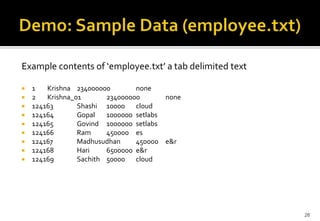

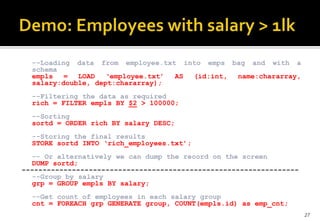

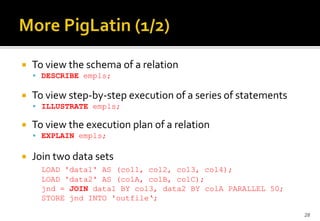

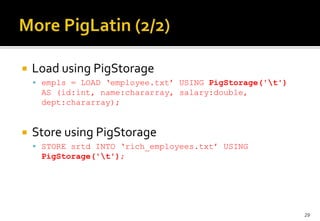

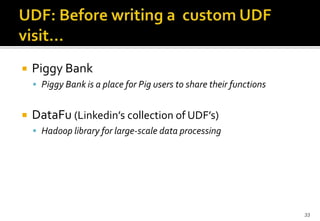

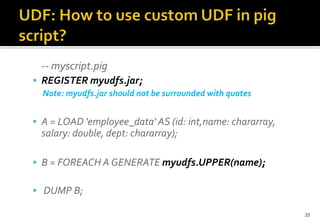

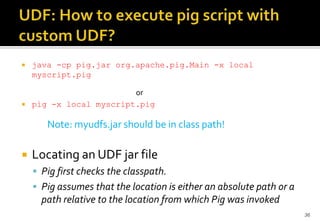

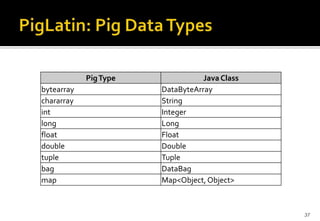

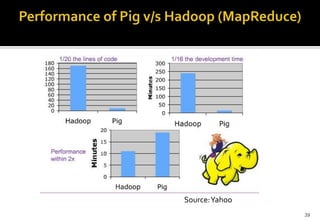

This document provides an overview of Apache Pig and its scripting language, Pig Latin, for querying large datasets. It discusses why Pig was created in response to the limitations of SQL for big data, how Pig scripts are written using Pig Latin's simple syntax, and how Pig Latin scripts are compiled into MapReduce jobs and executed on Hadoop clusters. Advanced topics include user-defined functions (UDFs) for custom data processing in Pig Latin and sharing those functions through Piggy Bank.
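To make the "compiled into MapReduce jobs" idea concrete, here is a simplified Python model of how a `GROUP ... BY` followed by `COUNT` splits into a map phase, a shuffle, and a reduce phase. It is an illustrative sketch, not Pig's actual compiler. The relation and field names (`visits`, `user`, `url`) and the helper functions (`map_phase`, `shuffle`, `reduce_phase`, `run_group_count`) are hypothetical. Pig's real planner does more than this, for example using a combiner for algebraic functions such as `COUNT`.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Illustrative Pig Latin script being modeled (names are hypothetical):
#   visits  = LOAD 'visits' AS (user:chararray, url:chararray);
#   grouped = GROUP visits BY url;
#   counts  = FOREACH grouped GENERATE group, COUNT(visits);

Record = Tuple[str, str]  # (user, url)


def map_phase(records: Iterable[Record]) -> List[Tuple[str, Record]]:
    """Emit (key, record) pairs keyed on the GROUP BY field (url)."""
    return [(url, (user, url)) for user, url in records]


def shuffle(pairs: Iterable[Tuple[str, Record]]) -> Dict[str, List[Record]]:
    """Collect all records that share a key, as the framework does between map and reduce."""
    groups: Dict[str, List[Record]] = defaultdict(list)
    for key, record in pairs:
        groups[key].append(record)
    return dict(groups)


def reduce_phase(groups: Dict[str, List[Record]]) -> Dict[str, int]:
    """Apply FOREACH ... GENERATE group, COUNT(visits) to each group's bag of records."""
    return {key: len(bag) for key, bag in groups.items()}


def run_group_count(records: Iterable[Record]) -> Dict[str, int]:
    """Chain the three phases, mirroring one MapReduce job for the script above."""
    return reduce_phase(shuffle(map_phase(records)))


if __name__ == "__main__":
    visits = [("amy", "cnn.com"), ("fred", "bbc.com"), ("amy", "bbc.com")]
    print(sorted(run_group_count(visits).items()))
    # prints [('bbc.com', 2), ('cnn.com', 1)]
```

The point of the model is that the `GROUP BY` key becomes the map output key, so every record for a given `url` reaches the same reducer, where the per-group aggregation runs.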