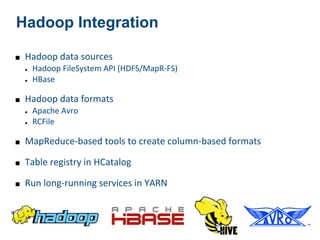

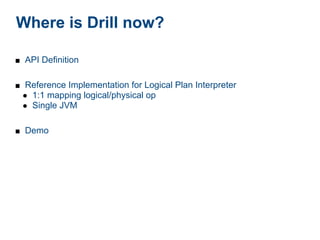

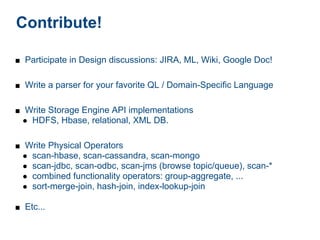

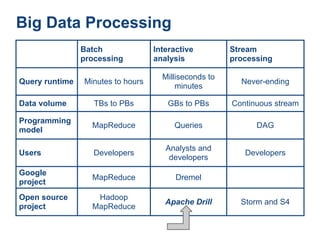

The document discusses Apache Drill, an open-source project designed for low-latency interactive queries and optimized for handling large data sets across various data models. It details its extensible architecture, compatibility with different query languages, and integration with Hadoop ecosystems, while emphasizing its scalability and performance in big data processing. Additionally, it invites contributions and participation from the community for ongoing development and expansion.

![DrQL Example

local-logs = donuts.json:

SELECT

{ ppu,

"id": "0003", typeCount =

"type": "donut",

COUNT(*) OVER PARTITION BY ppu,

"name": "Old Fashioned",

quantity =

"ppu": 0.55,

"sales": 300, SUM(sales) OVER PARTITION BY ppu,

"batters": sales =

{ SUM(ppu*sales) OVER PARTITION BY

"batter": ppu

[ FROM local-logs donuts

{ "id": "1001", "type": "Regular" },

{ "id": "1002", "type": "Chocolate" } WHERE donuts.ppu < 1.00

] ORDER BY dountuts.ppu DESC;

},

"topping":

[

{ "id": "5001", "type": "None" },

{ "id": "5002", "type": "Glazed" },

{ "id": "5003", "type": "Chocolate" },

{ "id": "5004", "type": "Maple" }

]

}](https://image.slidesharecdn.com/pjug-pdx-2013-01-15-130116123210-phpapp02/85/Apache-Drill-PJUG-Jan-15-2013-14-320.jpg)

![Logical Plan Syntax:

Operators & Expressions

query:[

{

op:"sequence",

do:[

{

op: "scan",

memo: "initial_scan",

ref: "donuts",

source: "local-logs",

selection: {data: "activity"}

},

{

op: "transform",

transforms: [

{ ref: "donuts.quanity", expr: "donuts.sales"}

]

},

{

op: "filter",

expr: "donuts.ppu < 1.00"

},

---](https://image.slidesharecdn.com/pjug-pdx-2013-01-15-130116123210-phpapp02/85/Apache-Drill-PJUG-Jan-15-2013-17-320.jpg)

![Logical Streaming Example

0

1

2

3

4

{ @id: <refnum>, op: “window-frame”,

input: <input>,

keys: [ 0

<name>,... 01

], 012

ref: <name>, 123

before: 2, 234

after: here

}](https://image.slidesharecdn.com/pjug-pdx-2013-01-15-130116123210-phpapp02/85/Apache-Drill-PJUG-Jan-15-2013-18-320.jpg)

![Representing a DAG

{ @id: 19, op: "aggregate",

input: 18,

type: <simple|running|repeat>,

keys: [<name>,...],

aggregations: [

{ref: <name>, expr: <aggexpr> },...

]

}](https://image.slidesharecdn.com/pjug-pdx-2013-01-15-130116123210-phpapp02/85/Apache-Drill-PJUG-Jan-15-2013-19-320.jpg)

![Multiple Inputs

{ @id: 25, op: "cogroup",

groupings: [

{ref: 23, expr: “id”}, {ref: 24, expr: “id”}

]

}](https://image.slidesharecdn.com/pjug-pdx-2013-01-15-130116123210-phpapp02/85/Apache-Drill-PJUG-Jan-15-2013-20-320.jpg)