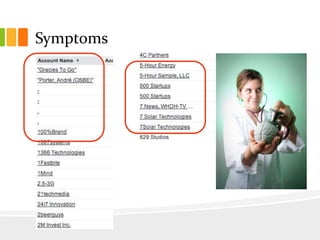

The document outlines a seven-step plan for data cleansing within organizations. It emphasizes strategy, accountability, and automation as the means of maintaining data quality. Each step addresses specific tactics, such as creating validation rules, engaging staff in data ownership, and using automation tools to manage data efficiently. The document also offers practical advice on using reports and dashboards to monitor data hygiene. It recommends helpful apps and describes how to promote a culture of clean data throughout the organization.