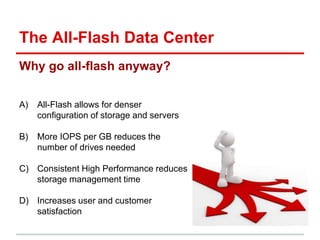

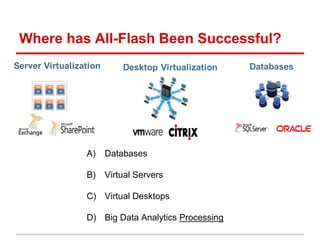

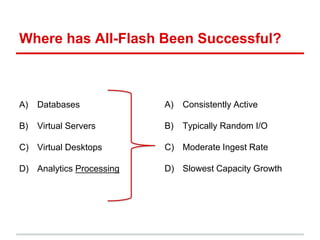

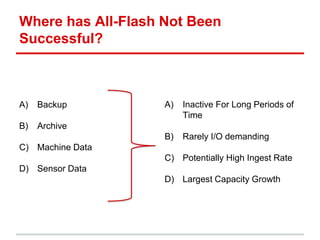

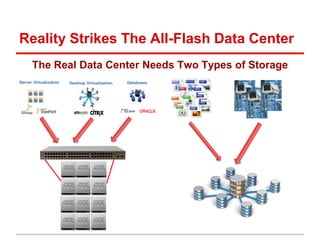

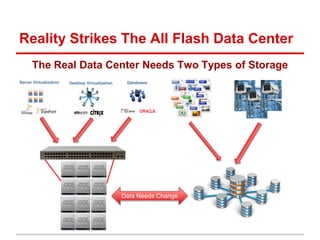

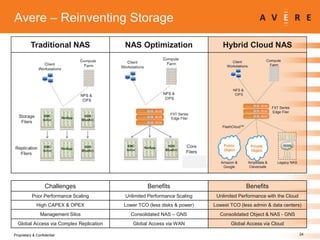

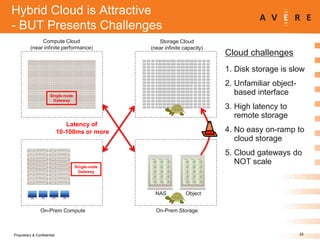

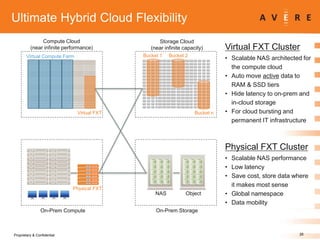

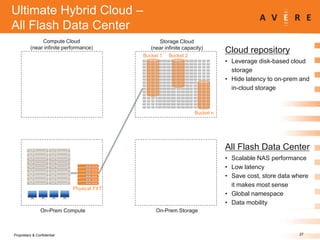

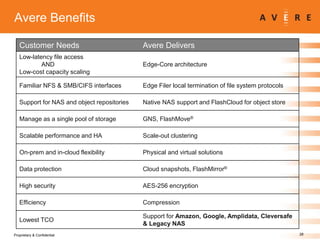

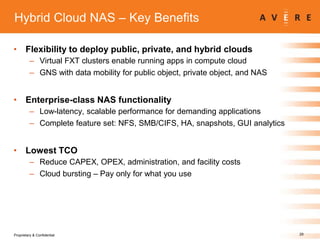

This document presents a webinar on building an all-flash data center with cloud technologies and on the challenges associated with hybrid and all-flash arrays. Key topics include the benefits of all-flash storage, how effective such solutions are across various applications, and strategies for integrating cloud storage with on-premises systems. The webinar also features insights from industry experts and offers guidance on using cloud capabilities to improve data storage performance.