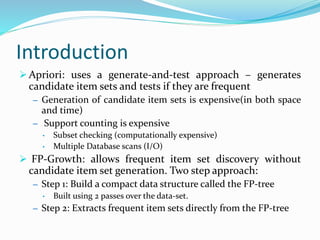

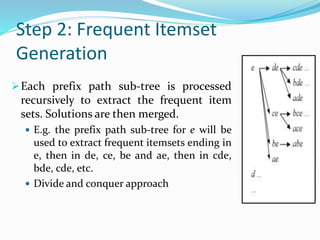

FP-Growth is a frequent itemset mining algorithm that improves on Apriori. It stores the transaction data in a compact prefix tree called an FP-tree, which avoids generating candidate itemsets. The FP-tree is built in two passes over the dataset: the first counts the support of each item, and the second inserts each transaction's frequent items in descending order of support so that transactions share common prefixes. Frequent itemsets are then extracted bottom-up, starting from the least frequent items, by recursively building a smaller conditional FP-tree for each item, which divides the problem into smaller subproblems. As a result, FP-Growth typically discovers frequent itemsets faster than Apriori and with less memory.
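The procedure described above can be sketched in a few dozen lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the names `FPNode`, `build_tree`, `mine`, and `fp_growth` are hypothetical, `min_support` is taken to be an absolute transaction count, and each conditional tree is simply rebuilt from its prefix paths rather than optimized.

```python
from collections import defaultdict


class FPNode:
    """One FP-tree node (hypothetical helper): an item, a count, parent/child links."""

    def __init__(self, item, parent):
        self.item = item
        self.count = 0
        self.parent = parent
        self.children = {}


def build_tree(weighted_transactions, min_support):
    """Build an FP-tree from (items, weight) pairs in two passes."""
    # Pass 1: count the support of every individual item.
    support = defaultdict(int)
    for items, weight in weighted_transactions:
        for item in set(items):
            support[item] += weight
    frequent = {i: s for i, s in support.items() if s >= min_support}

    # Pass 2: insert each transaction, keeping only frequent items and
    # ordering them by descending support so common prefixes are shared.
    root = FPNode(None, None)
    header = defaultdict(list)  # item -> all tree nodes carrying that item
    for items, weight in weighted_transactions:
        path = sorted((i for i in set(items) if i in frequent),
                      key=lambda i: (-frequent[i], i))
        node = root
        for item in path:
            child = node.children.get(item)
            if child is None:
                child = FPNode(item, node)
                node.children[item] = child
                header[item].append(child)
            child.count += weight
            node = child
    return header, frequent


def mine(weighted_transactions, min_support, suffix=frozenset()):
    """Return {itemset: support} for all frequent itemsets that extend `suffix`."""
    header, frequent = build_tree(weighted_transactions, min_support)
    results = {}
    # Bottom-up: visit the least frequent items first.
    for item in sorted(frequent, key=lambda i: (frequent[i], i)):
        itemset = suffix | {item}
        results[itemset] = frequent[item]
        # Conditional pattern base: the prefix path above every node for `item`,
        # weighted by that node's count.
        conditional = []
        for node in header[item]:
            prefix = []
            parent = node.parent
            while parent is not None and parent.item is not None:
                prefix.append(parent.item)
                parent = parent.parent
            if prefix:
                conditional.append((prefix, node.count))
        # Recurse on the smaller subproblem defined by the conditional base.
        results.update(mine(conditional, min_support, itemset))
    return results


def fp_growth(transactions, min_support):
    """Mine all itemsets that occur in at least `min_support` transactions."""
    return mine([(t, 1) for t in transactions], min_support)
```

For example, calling `fp_growth([["bread", "milk"], ["bread", "butter"], ["bread", "milk", "butter"], ["milk"]], 2)` returns a dictionary that maps each frequent itemset (as a `frozenset`) to its support count. No candidate itemsets are generated along the way: every itemset reported comes directly from counts stored in a tree.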