2021 03-02-transformer interpretability

•Download as PPTX, PDF•

0 likes•864 views

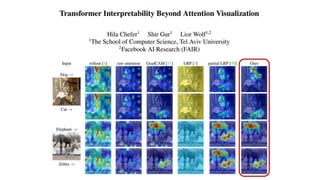

- The document proposes a novel method for computing relevancy in Transformer networks. Instead of averaging attention maps across layers or relying on simplistic assumptions, it propagates relevance through the attention maps layer by layer.
- It introduces a relevance propagation method based on Deep Taylor Decomposition, including a rule for non-parametric operations, to backpropagate relevance separately in each layer through attention, skip connections, and other operations.
- Experiments show that the proposed layer-wise relevance propagation method identifies relevant image regions better than existing methods. It also performs better in perturbation tests, where input pixels are gradually masked out.

- 2. Abstract
  - Existing methods either rely on the obtained attention maps or employ heuristic propagation along the attention graph.
  - We propose a novel way to compute relevancy for Transformer networks.

- 3. Introduction: problems with previous work
  1. Attention is usually visualized for a single attention layer.
  2. Combining multiple layers by simple averaging blurs the signal. It also ignores the different roles of the layers (deeper layers are more semantic).
  3. The rollout method is an alternative. However, we show that it relies on simplistic assumptions, and as a result irrelevant tokens often become highlighted.

- 4. Rollout: attention rollout and attention flow (information ratio, information capacity)
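Slide 4 refers to attention rollout, the baseline the paper compares against. Below is a minimal plain-Python sketch of rollout as it is commonly formulated. It averages attention over heads, mixes it 50/50 with the identity to model the residual connection, and multiplies the results across layers. The function names and the convention that the first list element is the layer closest to the input are our own assumptions.

```python
def matmul(a, b):
    """Multiply two matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def attention_rollout(layer_attentions):
    """Attention rollout over a list of head-averaged s x s attention maps.

    layer_attentions[0] is assumed to be the layer closest to the input.
    """
    s = len(layer_attentions[0])
    identity = [[1.0 if i == j else 0.0 for j in range(s)] for i in range(s)]
    rollout = identity
    for attn in layer_attentions:
        # Model the residual connection by mixing attention with the identity.
        mixed = [[0.5 * attn[i][j] + 0.5 * identity[i][j] for j in range(s)] for i in range(s)]
        # Renormalize rows so each row stays a distribution over tokens.
        mixed = [[v / sum(row) for v in row] for row in mixed]
        # Deeper layers are multiplied from the left.
        rollout = matmul(mixed, rollout)
    return rollout


uniform = [[0.5, 0.5], [0.5, 0.5]]
print(attention_rollout([uniform, uniform]))  # [[0.625, 0.375], [0.375, 0.625]]
```

Because each mixed matrix is row-stochastic, the product is too. Rollout therefore redistributes attention across layers but never uses the gradient or class information. This is the limitation the slides point to.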

- 6. Methods. Notation: $C$: number of classes; $x^{(n)}$: input of layer $L^{(n)}$ ($x^{(1)}$: network output, $x^{(N)}$: network input); $L^{(n)}_i(X, Y)$: layer operation on two tensors $X$ and $Y$. Relevance is propagated using the Deep Taylor Decomposition formulation.
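For reference, the generic Deep Taylor Decomposition rule can be written with the notation above as follows. This is a reconstruction based on our reading of the paper rather than text recovered from the slide, and $\mathcal{G}$ is just a name for the propagation function:

```latex
R^{(n)}_j = \mathcal{G}\big(X, Y, R^{(n-1)}\big)
          = \sum_i X_j \,\frac{\partial L^{(n)}_i(X, Y)}{\partial X_j}\,\frac{R^{(n-1)}_i}{L^{(n)}_i(X, Y)}
```

Consider a bias-free layer that is linear in $X$. There, the terms $X_j \, \partial L^{(n)}_i / \partial X_j$ summed over $j$ equal $L^{(n)}_i$, so the total relevance is conserved from one layer to the next.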

- 8. Non-parametric relevance propagation: skip connection $L^{(n)}(u, v) = u + v$; matrix multiplication $L^{(n)}(u, v) = uv$
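Below is a minimal sketch of how the generic rule splits relevance across the two inputs of a skip connection $u + v$. Elementwise addition has $\partial L_i / \partial u_j = 1$ only when $i = j$, so the sum collapses to a single term. The `normalize_pair` step follows the normalization we understand the paper to apply to binary operators: rescale each input's relevance so the totals match the incoming relevance, split in proportion to their absolute sums. Treat it as an assumption. The small stabilizer `eps` is a common LRP convention, not something stated on the slide.

```python
def _stabilize(z, eps=1e-9):
    """Push a denominator away from zero while keeping its sign."""
    return z + eps if z >= 0 else z - eps


def relevance_skip_connection(u, v, relevance_out):
    """Split the relevance of L(u, v) = u + v between u and v.

    Uses R_j = sum_i X_j * dL_i/dX_j * R_i / L_i; for elementwise addition only
    the i == j term is non-zero, giving R_j = X_j * R_j_out / (u_j + v_j).
    """
    r_u, r_v = [], []
    for ui, vi, ri in zip(u, v, relevance_out):
        z = _stabilize(ui + vi)
        r_u.append(ui * ri / z)
        r_v.append(vi * ri / z)
    return r_u, r_v


def normalize_pair(r_u, r_v, relevance_out):
    """Rescale R^u and R^v so their combined total equals the incoming relevance,
    split in proportion to |sum R^u| and |sum R^v| (assumed normalization)."""
    su, sv, total = sum(r_u), sum(r_v), sum(relevance_out)
    denom = abs(su) + abs(sv)
    if denom == 0:
        return [0.0] * len(r_u), [0.0] * len(r_v)
    u_bar = [r * abs(su) / denom * total / _stabilize(su) for r in r_u]
    v_bar = [r * abs(sv) / denom * total / _stabilize(sv) for r in r_v]
    return u_bar, v_bar


ru, rv = relevance_skip_connection([1.0, 3.0], [1.0, 1.0], [1.0, 2.0])
print(ru, rv)  # approximately [0.5, 1.5] and [0.5, 0.5]; the totals add up to 3
```

For addition, the two shares already sum to the incoming relevance. For a matrix multiplication $uv$, which is linear in each operand separately, each operand's relevance sums to the full incoming relevance. Together they then carry twice the total, which is why a normalization step like the one above becomes necessary.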

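- 9. Non-parametric relevance propagation (figure: the attention output $v$ and the skip input $u$ are summed as $u + v$; the incoming relevance $R^{(n-1)}$ is split into $R^u$ and $R^v$)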

- 10. Non-parametric relevance propagation

- 11. Non-parametric relevance propagation

- 12. Relevance and gradient diffusion. $A^{(b)}$: attention map of block $b$; $\nabla A^{(b)}$: its gradients; $R^{(n)}$: relevance; $C \in \mathbb{R}^{s \times s}$: the final relevancy map; $\mathbb{E}_h$: mean across the "heads" dimension; $\odot$: Hadamard product.
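Using the symbols defined on slide 12, the aggregation can be sketched as follows. We assume the paper's rule $\bar{A}^{(b)} = I + \mathbb{E}_h\big[(\nabla A^{(b)} \odot R^{(n_b)})^+\big]$ and $C = \bar{A}^{(1)} \cdot \bar{A}^{(2)} \cdots \bar{A}^{(B)}$. The gradients and relevances are taken as given per-head $s \times s$ matrices; in practice they would come from autograd and the relevance propagation above. The helper names are hypothetical.

```python
def identity(s):
    return [[1.0 if i == j else 0.0 for j in range(s)] for i in range(s)]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def block_map(grads, rels):
    """A_bar = I + E_h[(grad_A ⊙ R)^+] for one block.

    grads and rels are lists over heads of s x s matrices.
    """
    heads, s = len(grads), len(grads[0])
    a_bar = identity(s)
    for g, r in zip(grads, rels):
        for i in range(s):
            for j in range(s):
                # Hadamard product, keep only the positive part, average over heads.
                a_bar[i][j] += max(g[i][j] * r[i][j], 0.0) / heads
    return a_bar


def relevancy_map(blocks):
    """C = A_bar(1) · A_bar(2) · ... · A_bar(B); blocks is a list of (grads, rels)."""
    s = len(blocks[0][0][0])
    c = identity(s)
    for grads, rels in blocks:
        c = matmul(c, block_map(grads, rels))
    return c


g = [[1.0, -1.0], [2.0, 0.0]]
r = [[1.0, 1.0], [1.0, 1.0]]
print(relevancy_map([([g], [r]), ([g], [r])]))  # [[4.0, 0.0], [6.0, 1.0]]
```

In a ViT-style classifier, one would typically read the row of `C` that corresponds to the [CLS] token to get one relevance score per image patch. Keeping only the positive part of $\nabla A \odot R$ is what makes the map class-specific, in contrast to plain rollout.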

- 13. Experiments

- 14. Experiments

- 15. Experiments: First, a pre-trained network is used to extract visualizations for the ImageNet validation set. Second, we gradually mask out the pixels of the input image and measure the network's mean top-1 accuracy.
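The two-step perturbation test on slide 15 can be sketched as below. As we understand the paper, it reports two variants. In positive perturbation, the most relevant pixels are removed first, and a lower area under the accuracy curve suggests a more faithful explanation. In negative perturbation, the least relevant pixels are removed first, and a higher area is better. The toy model, the zero masking value, and the flat pixel lists are illustrative assumptions.

```python
def perturbation_curve(samples, model, fractions, most_relevant_first=True):
    """Top-1 accuracy after masking a growing fraction of pixels.

    samples: list of (pixels, relevance, label); pixels and relevance are flat lists.
    model: callable mapping a pixel list to a predicted label (hypothetical stand-in).
    """
    accuracies = []
    for frac in fractions:
        correct = 0
        for pixels, relevance, label in samples:
            k = int(round(frac * len(pixels)))
            order = sorted(range(len(pixels)), key=lambda i: relevance[i],
                           reverse=most_relevant_first)
            masked = list(pixels)
            for i in order[:k]:
                masked[i] = 0.0  # mask by zeroing; the masking value is an assumption
            correct += int(model(masked) == label)
        accuracies.append(correct / len(samples))
    return accuracies


def area_under_curve(xs, ys):
    """Trapezoidal area under a piecewise-linear curve."""
    return sum((xs[i + 1] - xs[i]) * (ys[i] + ys[i + 1]) / 2 for i in range(len(xs) - 1))


def toy_model(pixels):
    # Hypothetical classifier: predicts class 1 when enough "signal" remains.
    return int(sum(pixels) >= 1.5)


samples = [([1.0, 1.0, 0.0, 0.0], [0.9, 0.8, 0.1, 0.2], 1)]
fractions = [0.0, 0.25, 0.5]
pos = perturbation_curve(samples, toy_model, fractions, most_relevant_first=True)
neg = perturbation_curve(samples, toy_model, fractions, most_relevant_first=False)
print(pos, neg)  # [1.0, 0.0, 0.0] [1.0, 1.0, 1.0]
print(area_under_curve(fractions, pos), area_under_curve(fractions, neg))  # 0.125 0.5
```

Here the relevance scores point at the pixels that actually drive the prediction. As a result, accuracy collapses quickly under positive perturbation and stays intact under negative perturbation, which is the pattern a faithful explanation should produce.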

- 16. Experiments

- 17. Conclusion: how to combine LRP with rollout