2016 bigdata - projects list

IEEE PROJECTS, M.TECH PROJECTS,

Recommended

OpenNebulaConf 2016 - MICHAL - flexible infrastructure accounting framework b...

This talk will introduce MICHAL, a flexible accounting framework with data visualization capabilities that we at CESNET developed for our infrastructure. The framework is able to gather data from multiple sources, OpenNebula being one of them, process it, and present the result in the form of charts. MICHAL isn't bound to a single platform and can be easily extended to support accounting of multiple parts of the infrastructure. As part of the presentation, we will discuss our data gathering techniques, MICHAL's design and functionality, the currently available data processing modules for IaaS cloud, and plans for future development.

GI2016 ppt shi (big data analytics on the internet)

16. Sächsisches GIS-Forum, Dresden: 4. Oktober, 2016

AAPG Geoscience Technology Workshop 2019

Sharing the challenges faced in processing petrophysical big data from 1000 well logs, how they were resolved, and potential next steps to improve the process.

CAPI the NASS Way | Pam Hird | Federal Mobile Computing Summit | July 9, 2013

The Federal Mobile Computing Summit was held on July 9, 2013 in Washington, DC.

Process documentation research of CAPI uses in VDSA project

Computer Assisted Personal Interviewing (CAPI) provides a huge efficiency gain in household surveys and data management over Paper and Pencil Interview (PAPI). The ICRISAT - VDSA team introduced the CAPI mode of survey in three villages of SAT India in 2014. Objectives: • To assess and document the process adopted in implementing the CAPI mode for household surveys in the VDSA project.

Domain research presentation Final

My final project presentation for Domain research on Land Use statistics as part of course curriculum in Fall 2015 semester.

Team 5: Open Land Use Metadata Harvesting on NextGEOSS

Predictive control for energy aware consolidation in cloud datacenters

+91-9994232214,7806844441, ieeeprojectchennai@gmail.com,

www.projectsieee.com, www.ieee-projects-chennai.com

IEEE PROJECTS 2016-2017

-----------------------------------

Contact:+91-9994232214,+91-7806844441

Email: ieeeprojectchennai@gmail.com

Experience Big Data Analytics use cases ranging from cancer research to IoT a...

Nowadays, successful Big Data initiatives rely on the ability to act fast and to cope with the variety of data and models, like structured and unstructured data from sensors, social media or databases. In this break-out-session, we will showcase how PRIMEFLEX for Hadoop, a powerful and scalable analytics platform, can help business oriented users and citizen data scientists to collect, transform, analyze and even leverage artificial intelligence for Big Data analysis. Alexander Kaffenberger, Senior Business Developer – Big Data, Category Management EMEIA, Fujitsu

Unify Line of Business Data with SAP Digital Boardroom

In this sample use case, you can see the power and potential of unifying your business data using SAP Digital Boardroom to gain meaningful insight. Learn more at http://www.sap.com/digital-boardroom

With SAP Digital Boardroom, you’re able to:

• Connect to Cloud data

• Leverage SAP business networks such as Hybris, Fieldglass, Ariba, and SuccessFactors

• Make faster decisions on live data

• Gain actionable insights

• Collaborate seamlessly in real-time

Field Data Collecting, Processing and Sharing: Using web Service Technologies

Collecting, distributing and analyzing field data is a crucial part of any geospatial study. Field data collection tools and methods have developed significantly thanks to advances in technologies such as Global Navigation Satellite Systems (GNSS) and the development of smartphones. Accurate field data collection is also necessary for broad spatial data analysis and proper decision making. The development of Web technologies has made it possible to share data and information effectively. This study develops a framework based on Geospatial Semantic Web technologies for disseminating and processing field data. Experimental results from an implemented prototype show that the proposed framework makes it possible to visualize and process field data in any context. The system is capable of distributing and processing field data through a web application. Moreover, the study demonstrates the importance and capabilities of web services for spatial data gathering and processing. The system has been developed using Free and Open Source Software (FOSS) packages such as ZOO-Project and Open Data Kit, which enables users to further improve or deploy the system for a variety of studies.

Data Warehousing and Business Intelligence Project on Smart Agriculture and M...

Implemented a Data Warehouse on smart agriculture to solve various Business Intelligence queries. Integrated multiple datasets from 3 different data sources including both structured and unstructured data.

Tools used:

> SQL Server Integration Services for ETL

> SQL Server Management Studio for Database

> SQL Server Analysis Services for building the Schema

> Tableau and PowerBI for Visualization

> R for data preprocessing

> LaTeX for documentation

Video Presentation: https://www.youtube.com/watch?v=0oIlLQcyPdM

Devconf 17 metrics collection using open-source tools is easy

Data is the core of everything we program: auto-scaling logic, billing, disaster recovery, security, proprietary logic and much more.

It's the basics. Like letters.

Following that, data pipelines allow the logic of the code to do something important.

Every site, engine and client needs to expose data for its own uses: logs, counters for flows, events, raw values for alarms and reports.

A data pipeline sounds quite simple to handle, but some still struggle to collect the data that their programs and users require. If it is indeed basic, we probably have the best solutions out there.

Let's organize such requirements for collection, reporting, log analysis and more. Can we define whether we need all of those outputs? How do we align the requirements with the solutions?

In this session we will raise interesting challenges around metrics collection. I will present a few of the open source alternatives (ELK, graphite, collectd, statsd, filebeat and hawkular), and we will discuss the solutions we chose in the oVirt project to fit our needs.

Leveraging big data to maximize value from rail and power infrastructure assets.

Chijioke “CJ” Ejimuda

To intelligently design and optimally operate infrastructure assets, a combination of big data batch and streaming execution models is essential before useful insights can be generated. This presentation focuses on how these models could be applied throughout the life cycle of the Design, Build, Finance, Operate and Maintenance phases of rail and power system infrastructures to maximize their value.

Analysis of Crime Big Data using MapReduce

Analyzed Crime Big data of Washington DC to solve the following business queries:

> Which hour has the highest crime count?

> Which shift has the highest crime count?

> Year wise crime count

> Hour wise crime count

> Crime count by an offense

> Average of Shift wise crime count

The data was initially stored in MySQL and then moved to HDFS using Sqoop, from where four MapReduce operations were performed using Java in the Eclipse IDE. The outputs of the queries were then moved to HBase using Sqoop. Two more MapReduce operations were done using Pig, the output of which was also moved to HBase using Sqoop. All the outputs were then moved to the local system and visualized using RStudio and Tableau.

Tools used:

> MySQL, HDFS and HBase to store the data

> Sqoop to move the data from one database to another

> Java (Eclipse IDE) and Pig to run the MapReduce queries

> RStudio for data pre-processing and visualization

> Tableau for visualization

> LaTeX for Documentation
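
The hour-wise crime count query above can be sketched in plain Python to show the map, shuffle and reduce steps. This is only an illustration, not the project's Java or Pig code: the record layout, the sample rows and all function names are assumptions, since the description does not give the dataset's schema.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical records: (report timestamp, shift, offense). The real dataset's
# column names and formats are not given in the description.
RECORDS = [
    ("2016-03-01 23:15:00", "MIDNIGHT", "THEFT"),
    ("2016-03-02 08:40:00", "DAY", "BURGLARY"),
    ("2016-03-02 23:05:00", "MIDNIGHT", "ROBBERY"),
    ("2016-03-03 17:30:00", "EVENING", "THEFT"),
]

def map_hour(record):
    """Map step: emit (hour, 1) for one crime record."""
    timestamp, _shift, _offense = record
    hour = datetime.strptime(timestamp, "%Y-%m-%d %H:%M:%S").hour
    yield hour, 1

def shuffle(pairs):
    """Group emitted values by key, as the framework's shuffle phase would."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped

def reduce_count(key, values):
    """Reduce step: sum the counts for one key."""
    return key, sum(values)

def hour_wise_counts(records):
    """Run map, shuffle and reduce in-process and return {hour: count}."""
    pairs = (pair for record in records for pair in map_hour(record))
    return dict(reduce_count(k, v) for k, v in shuffle(pairs).items())

counts = hour_wise_counts(RECORDS)
# The hour with the highest crime count answers the first query in the list.
busiest_hour = max(counts, key=counts.get)
```

Swapping the map function to emit the shift or the offense as the key would give the shift-wise and offense-wise counts in the same way.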

Data Sources

Slides from 'Data Sources' talk at San Luis Obispo GIS Users Group meeting June 8th, 2010 - given in conjunction with 3 other individuals.

An Energy Efficient Data Transmission and Aggregation of WSN using Data Proce...

https://www.irjet.net/archives/V4/i3/IRJET-V4I3642.pdf

Performance evaluation of Map-reduce jar pig hive and spark with machine lear...

Big data is one of the biggest challenges, as we need systems with huge processing power and good algorithms to make decisions. We need a Hadoop environment with Pig, Hive, machine learning and other Hadoop ecosystem components. The data comes from industries, from the many devices and sensors around us, and from social media sites. According to McKinsey, there will be a shortage of 15000000 big data professionals by the end of 2020. There are many technologies that address big data storage and processing, such as Apache Hadoop, Apache Spark, Apache Kafka, and many more. Here we analyse the processing speed for 4 GB of data on CloudxLab using Hadoop MapReduce with varying numbers of mappers and reducers, Pig scripts, Hive queries, and a Spark environment, along with machine learning technology. From the results we can say that machine learning with Hadoop enhances processing performance, as does Spark; that Spark is better than Hadoop MapReduce, Pig and Hive; and that Spark with Hive and machine learning gives the best performance compared with Pig, Hive and the Hadoop MapReduce jar.

2004-09-12 Data and Tools for Web-Based Monitoring and Analysis

Similar to 2016 bigdata - projects list

On Traffic-Aware Partition and Aggregation in Map Reduce for Big Data Applica...

The MapReduce programming model simplifies large-scale data processing on commodity clusters by exploiting parallel map tasks and reduce tasks. Although many efforts have been made to improve the performance of MapReduce jobs, they ignore the network traffic generated in the shuffle phase, which plays a critical role in performance enhancement. Traditionally, a hash function is used to partition intermediate data among reduce tasks, which, however, is not traffic-efficient because network topology and the data size associated with each key are not taken into consideration. In this paper, we study how to reduce network traffic cost for a MapReduce job by designing a novel intermediate data partition scheme. Furthermore, we jointly consider the aggregator placement problem, where each aggregator can reduce merged traffic from multiple map tasks. A decomposition-based distributed algorithm is proposed to deal with the large-scale optimization problem for big data applications, and an online algorithm is also designed to adjust data partition and aggregation in a dynamic manner. Finally, extensive simulation results demonstrate that our proposals can significantly reduce network traffic cost in both offline and online cases.
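
To make the baseline concrete, the sketch below shows the traditional hash partitioning that the abstract criticises, not the paper's proposed scheme. The key names, byte sizes and function names are hypothetical; the point is only that the reducer index depends on the key's hash and ignores how much data each key carries.

```python
import zlib
from collections import Counter

def hash_partition(key: str, num_reducers: int) -> int:
    """Baseline partitioner: reducer index depends only on the key's hash."""
    # crc32 is used instead of built-in hash() so results are stable across runs.
    return zlib.crc32(key.encode("utf-8")) % num_reducers

def reducer_load(key_sizes: dict, num_reducers: int) -> Counter:
    """Total intermediate bytes each reducer receives under hash partitioning."""
    load = Counter({r: 0 for r in range(num_reducers)})
    for key, size in key_sizes.items():
        load[hash_partition(key, num_reducers)] += size
    return load

# Hypothetical intermediate data sizes (bytes) per key; one key is heavily skewed.
KEY_SIZES = {"alpha": 900_000, "beta": 10_000, "gamma": 12_000, "delta": 8_000}

load = reducer_load(KEY_SIZES, num_reducers=2)
for reducer, size in sorted(load.items()):
    print(f"reducer {reducer}: {size} bytes")
```

Whichever reducer receives the large key ends up with at least 900,000 of the 930,000 bytes, regardless of where that reducer sits in the network. A traffic-aware scheme would take per-key sizes and topology into account when assigning keys.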

Introduction of GIS & its Applications With R-APDRP Project

This project covers the electrical network from the source through the distribution network up to consumer premises. The study area is Loni, in Ghaziabad District in the Indian state of Uttar Pradesh. In the first step of the project, the image is digitized in “AutoCAD Map” software and the base map is prepared in DWG file format. Vector data is delivered in GIS (shapefile) format. The base map is prepared for the survey. There are two types of survey: network survey and consumer survey. Field survey data is integrated using ArcGIS 9.3, followed by thematic mapping and analysis of the study area.

Smart4RES - Data science for renewable energy prediction

Recording at https://youtu.be/kn8X6kIfo6I

The prediction of Renewable Energy Source (RES) production is a worldwide challenge for Smart Grids. In this webinar, you will learn next-generation solutions proposed by the European Project Smart4RES:

· Future power system applications based on RES forecasting,

· Innovative weather and RES forecasting products to increase performance by 10-20%.

Fast Range Aggregate Queries for Big Data Analysis

https://www.irjet.net/archives/V4/i3/IRJET-V4I3590.pdf

SURVEY ON BIG DATA PROCESSING USING HADOOP, MAP REDUCE

We are in the age of big data, which involves the collection of large datasets. Managing and processing large datasets is difficult with existing traditional database systems. Hadoop and MapReduce have become among the most powerful and popular tools for big data processing. Hadoop MapReduce, a powerful programming model, is used for analyzing large sets of data with parallelization, fault tolerance and load balancing; it is also elastic, scalable and efficient. MapReduce is combined with the cloud to form a framework for storage, processing and analysis of massive machine maintenance data in a cloud computing environment.

IRJET - Evaluating and Comparing the Two Variation with Current Scheduling Al...

https://www.irjet.net/archives/V6/i6/IRJET-V6I6453.pdf

Unstructured Datasets Analysis: Thesaurus Model

Mankind has stored more than 295 billion gigabytes (or 295 exabytes) of data since 1986, according to a report by the University of Southern California. Storing and monitoring this data around the clock in widely distributed environments is a huge task for global service organizations. These datasets require high processing power, which traditional databases cannot offer because the data is stored in an unstructured format. Although one can use the MapReduce paradigm to solve this problem with Java-based Hadoop, it cannot provide maximum functionality. These drawbacks can be overcome using Hadoop streaming techniques, which allow users to define non-Java executables for processing these datasets. This paper proposes a THESAURUS model which allows a faster and easier version of business analysis.

A Big-Data Process Consigned Geographically by Employing Mapreduce Frame Work

https://www.irjet.net/archives/V4/i8/IRJET-V4I8311.pdf

Automatic Parameter Tuning for Databases and Big Data Systems

Database and big data analytics systems such as Hadoop and Spark have a large number of configuration parameters that control memory distribution, I/O optimization, parallelism, and compression. Improper parameter settings can cause significant performance degradation and stability issues. However, regular users and even expert administrators struggle to understand and tune them to achieve good performance. In this tutorial, we review existing approaches on automatic parameter tuning for databases, Hadoop, and Spark, which we classify into six categories: rule-based, cost modeling, simulation-based, experiment-driven, machine learning, and adaptive tuning. We describe the foundations of different automatic parameter tuning algorithms and present pros and cons of each approach. We also highlight real-world applications and systems and identify research challenges for handling cloud services, resource heterogeneity, and real-time analytics

Managing Big data using Hadoop Map Reduce in Telecom Domain

MapReduce is a programming model for analysing and processing massive data sets. Apache Hadoop is an efficient framework and the most popular implementation of the MapReduce model. Hadoop’s success has motivated research interest and has led to various modifications and extensions to the framework. This paper explores the challenges faced when handling big data in different areas such as data storage, analytics, online processing, and privacy and security. It also discusses possible solutions for the telecom domain using a Hadoop MapReduce implementation.

IRJET- A Review on K-Means++ Clustering Algorithm and Cloud Computing wit...

https://www.irjet.net/archives/V6/i5/IRJET-V6I51092.pdf

NETWORK TRAFFIC ANALYSIS: HADOOP PIG VS TYPICAL MAPREDUCE

Big data analysis has become much popular in the present day scenario and the manipulation of big data has gained the keen attention of researchers in the field of data analytics. Analysis of big data is currently considered as an integral part of many computational and statistical departments. As a result, novel approaches in data analysis are evolving on a daily basis. Thousands of transaction requests are handled and processed every day by different websites associated with e-commerce, e-banking, e-shopping carts etc. The network traffic and weblog analysis comes to play a crucial role in such situations where Hadoop can be suggested as an efficient solution for processing the Netflow data collected from switches as well as website access-logs during fixed intervals.

IRJET - A Prognosis Approach for Stock Market Prediction based on Term Streak...

https://irjet.net/archives/V7/i3/IRJET-V7I3454.pdf

IJET-V2I6P25

Today’s era is generally regarded as the era of data: in every field of computing, a huge amount of data is generated. Society is increasingly dependent on computers, so a large amount of data is generated every second, in structured, unstructured or semi-structured format. This huge amount of data is generally referred to as big data, and analyzing it is one of the biggest challenges today. Hadoop is an open-source framework that allows big data to be stored and processed in a distributed environment across clusters of computers using simple programming models. It is designed to scale up from single servers to thousands of machines, each offering local computation and storage, and it generally follows horizontal processing. MapReduce programs generally run on the Hadoop framework and process large amounts of structured and unstructured data. This paper describes different join strategies used in MapReduce programming to combine the data of two files in the Hadoop framework and also discusses the associated skew problem.
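
One common way to combine two files in MapReduce is a reduce-side join, sketched below in plain Python. The abstract does not say which strategies the paper covers, so this is an assumption chosen for illustration; the sample records, tags and function names are hypothetical as well.

```python
from collections import defaultdict

# Hypothetical inputs: two "files" that share a customer id as the join key.
CUSTOMERS = [(1, "Asha"), (2, "Ravi"), (3, "Meena")]
ORDERS = [(1, "laptop"), (1, "mouse"), (3, "phone")]

def map_tagged(records, tag):
    """Map step: emit (join_key, (tag, value)) so the reducer can tell sources apart."""
    for key, value in records:
        yield key, (tag, value)

def reduce_join(key, tagged_values):
    """Reduce step: pair every left value with every right value for one key."""
    left = [v for tag, v in tagged_values if tag == "L"]
    right = [v for tag, v in tagged_values if tag == "R"]
    return [(key, l, r) for l in left for r in right]

def reduce_side_join(left_records, right_records):
    """Inner join of two keyed record lists using map, shuffle and reduce."""
    grouped = defaultdict(list)  # stands in for the shuffle phase
    for key, tagged in map_tagged(left_records, "L"):
        grouped[key].append(tagged)
    for key, tagged in map_tagged(right_records, "R"):
        grouped[key].append(tagged)
    joined = []
    for key in sorted(grouped):
        joined.extend(reduce_join(key, grouped[key]))
    return joined

joined = reduce_side_join(CUSTOMERS, ORDERS)
```

Because every value for a given key is sent to the same reducer, a key with far more records than the others concentrates work on one reducer, which is the skew problem the abstract mentions.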

IRJET - A Research on Eloquent Salvation and Productive Outsourcing of Massiv...

https://irjet.net/archives/V7/i3/IRJET-V7I3258.pdf

GIS-Based Design for Effective Smart Grid Strategies

An accurate, up-to-date model of a utility’s distribution network is the backbone of Smart Grid technologies. But a Schneider Electric survey shows that 74% of utilities are concerned about the readiness of their network model to support Smart Grid applications. This paper presents a quantitative comparison of a Geographic Information System (GIS)–based graphic work design system vs. a CAD-based tool, demonstrating how the GIS-based design approach is better able to keep up with the continuous changes in a dynamic electrical distribution network.

More from MSR PROJECTS

Electronics engineering- Embedded system projects

MSR PROJECTS ( Now as a MSR EDUSOFT PVT LTD),

#503,Annapurna Block, beside mytrivanam, Adhithya Enclave, Ameerpet, HYD-38.

E-mail: msrprojectshyd@gmail.com,

Web: www.msrprojectshyd.com , facebook.com/msrprojects ,

Ring on: 040 66334142, +91 9581464142.

Branches: Hyderabad ( Ameerpet | Dilsuknagar) | Kurnool

2016 bigdata - projects list

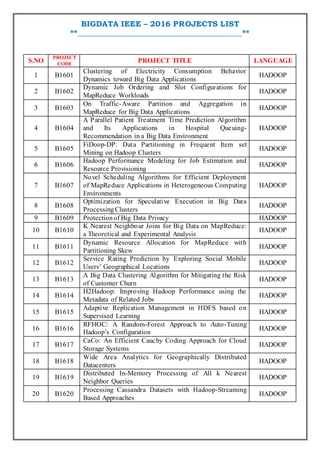

BIGDATA IEEE – 2016 PROJECTS LIST

| S.NO | PROJECT CODE | PROJECT TITLE | LANGUAGE |
|------|--------------|---------------|----------|
| 1 | B1601 | Clustering of Electricity Consumption Behavior Dynamics toward Big Data Applications | HADOOP |
| 2 | B1602 | Dynamic Job Ordering and Slot Configurations for MapReduce Workloads | HADOOP |
| 3 | B1603 | On Traffic-Aware Partition and Aggregation in MapReduce for Big Data Applications | HADOOP |
| 4 | B1604 | A Parallel Patient Treatment Time Prediction Algorithm and Its Applications in Hospital Queuing-Recommendation in a Big Data Environment | HADOOP |
| 5 | B1605 | FiDoop-DP: Data Partitioning in Frequent Itemset Mining on Hadoop Clusters | HADOOP |
| 6 | B1606 | Hadoop Performance Modeling for Job Estimation and Resource Provisioning | HADOOP |
| 7 | B1607 | Novel Scheduling Algorithms for Efficient Deployment of MapReduce Applications in Heterogeneous Computing Environments | HADOOP |
| 8 | B1608 | Optimization for Speculative Execution in Big Data Processing Clusters | HADOOP |
| 9 | B1609 | Protection of Big Data Privacy | HADOOP |
| 10 | B1610 | K Nearest Neighbour Joins for Big Data on MapReduce: a Theoretical and Experimental Analysis | HADOOP |
| 11 | B1611 | Dynamic Resource Allocation for MapReduce with Partitioning Skew | HADOOP |
| 12 | B1612 | Service Rating Prediction by Exploring Social Mobile Users’ Geographical Locations | HADOOP |
| 13 | B1613 | A Big Data Clustering Algorithm for Mitigating the Risk of Customer Churn | HADOOP |
| 14 | B1614 | H2Hadoop: Improving Hadoop Performance using the Metadata of Related Jobs | HADOOP |
| 15 | B1615 | Adaptive Replication Management in HDFS based on Supervised Learning | HADOOP |
| 16 | B1616 | RFHOC: A Random-Forest Approach to Auto-Tuning Hadoop’s Configuration | HADOOP |
| 17 | B1617 | CaCo: An Efficient Cauchy Coding Approach for Cloud Storage Systems | HADOOP |
| 18 | B1618 | Wide Area Analytics for Geographically Distributed Datacenters | HADOOP |
| 19 | B1619 | Distributed In-Memory Processing of All k Nearest Neighbor Queries | HADOOP |
| 20 | B1620 | Processing Cassandra Datasets with Hadoop-Streaming Based Approaches | HADOOP |