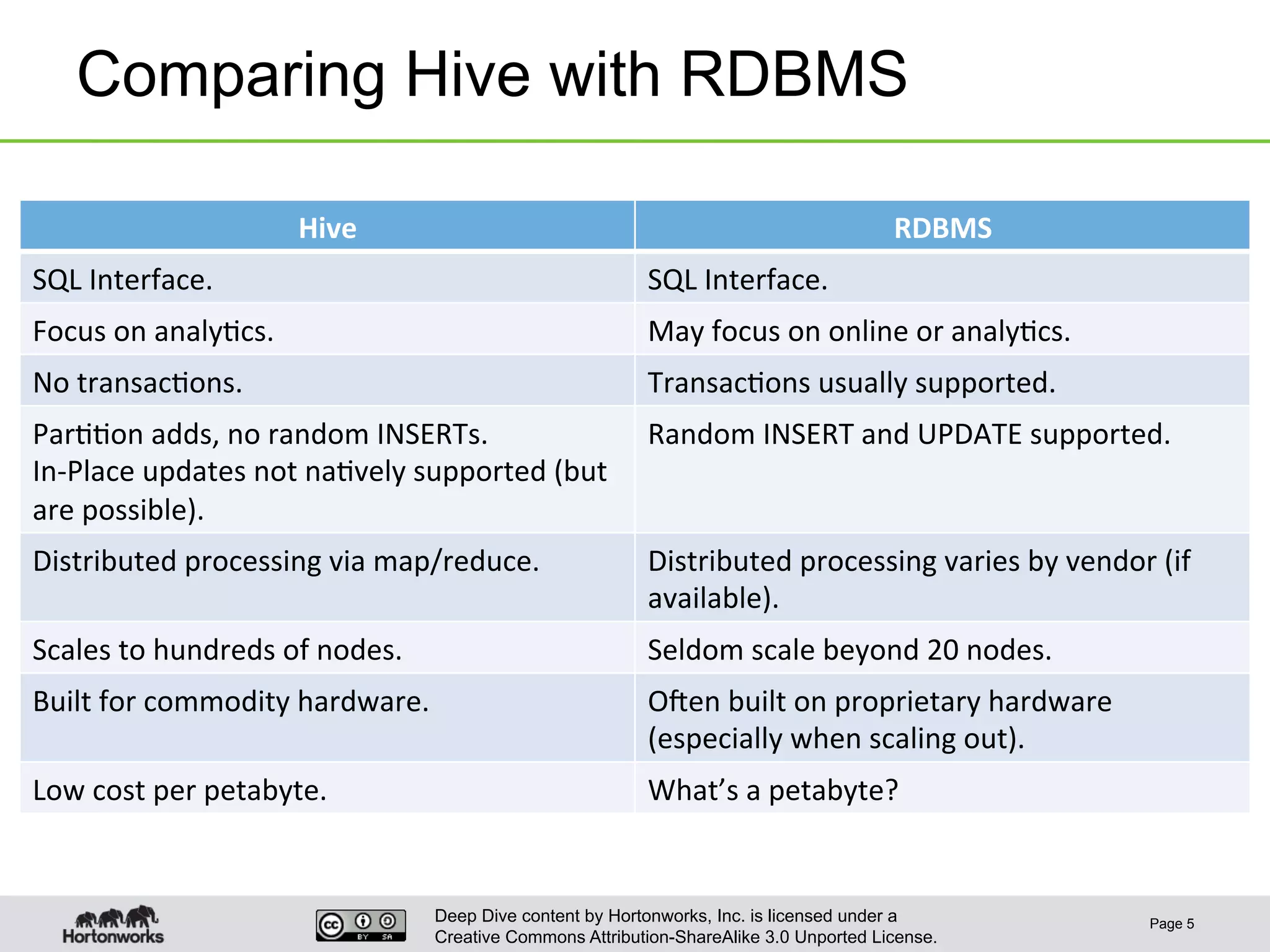

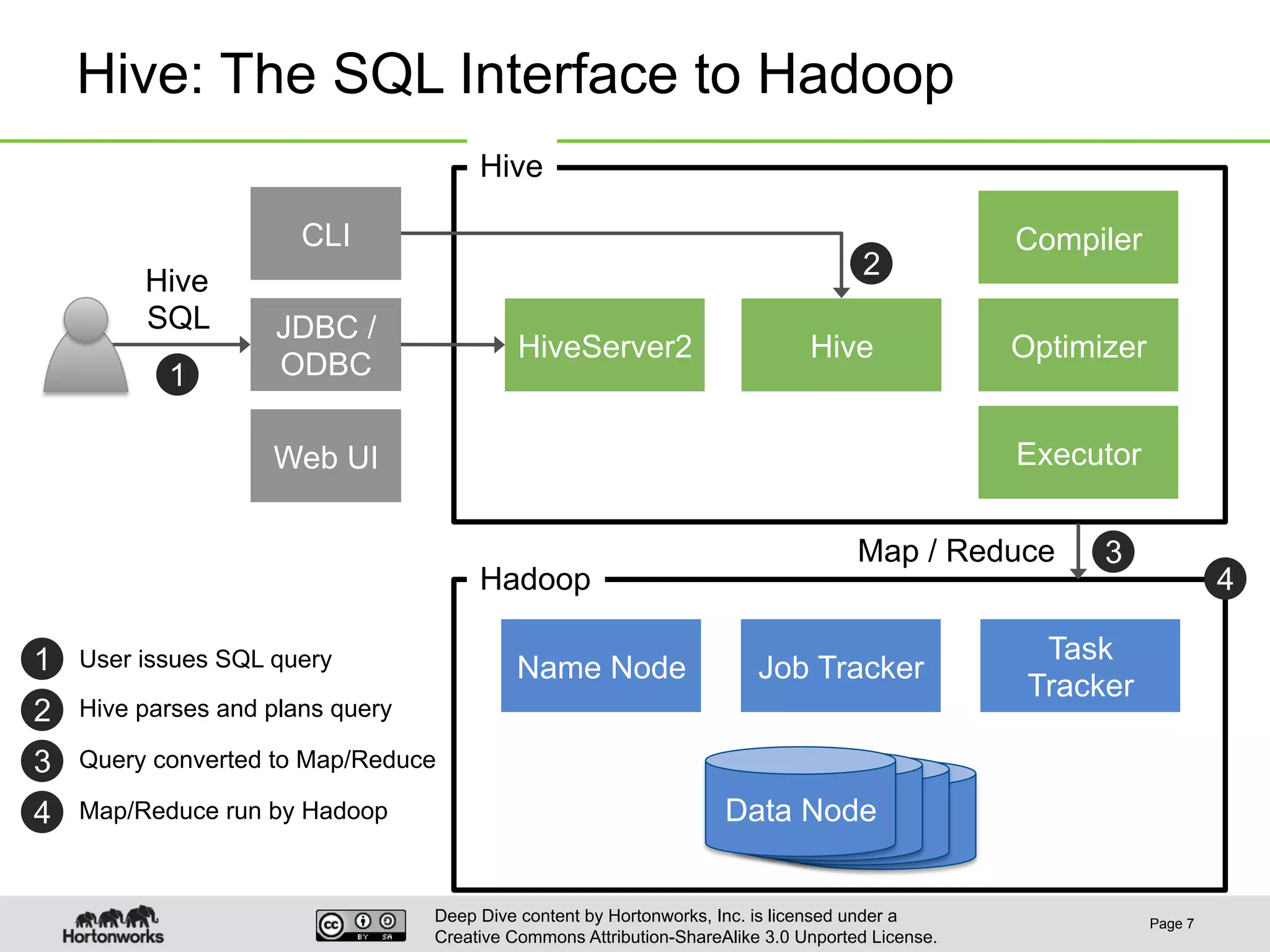

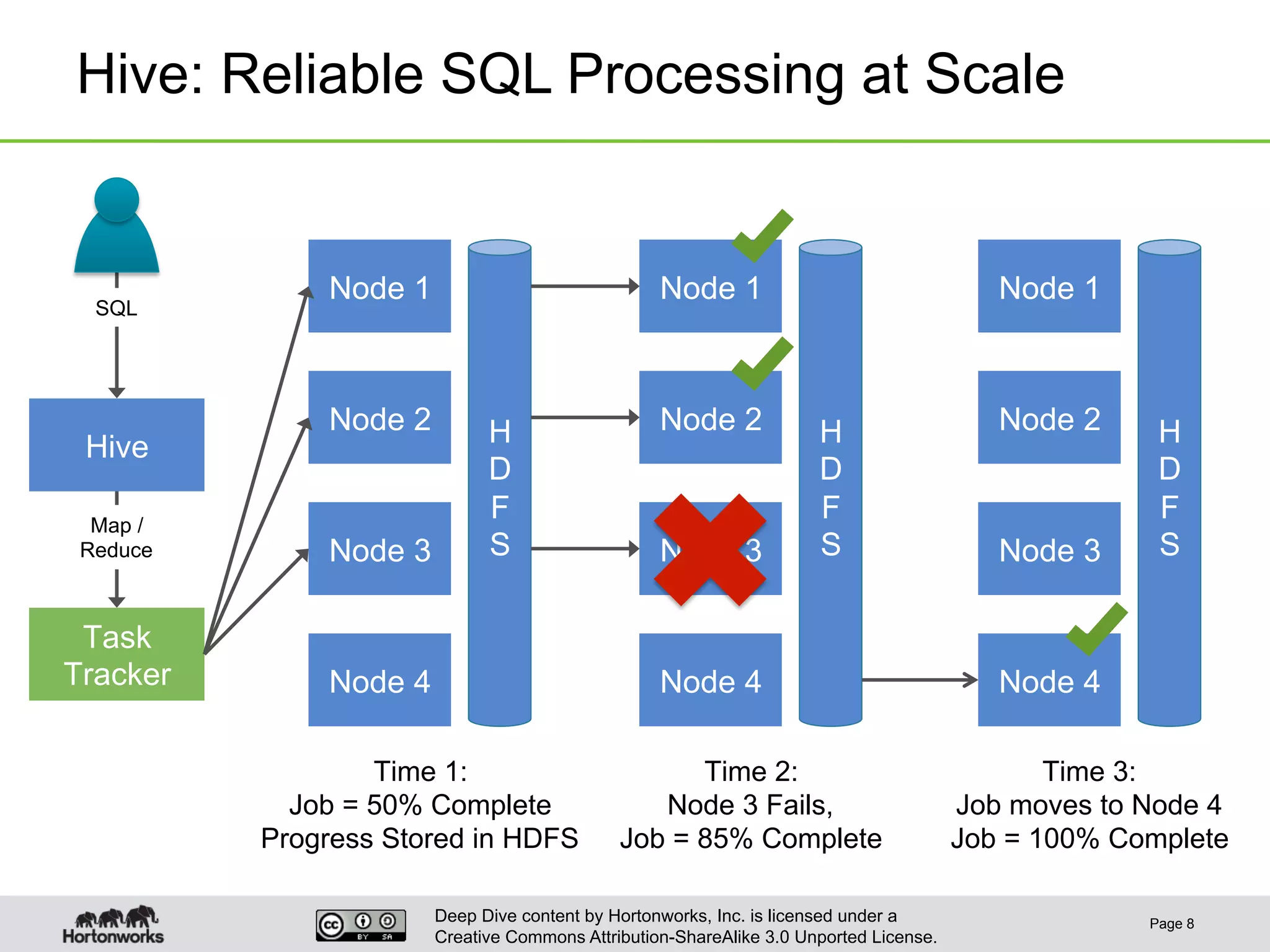

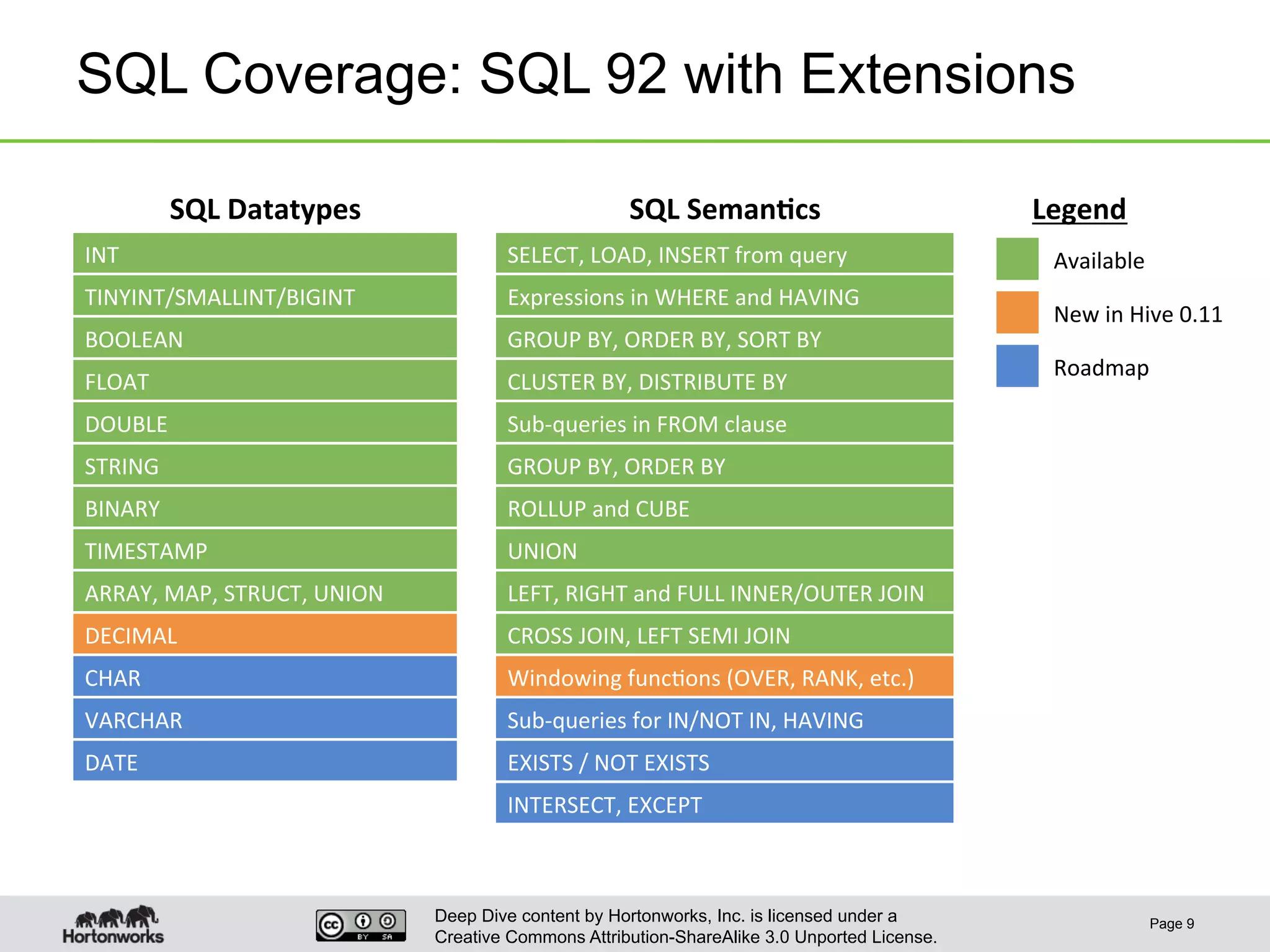

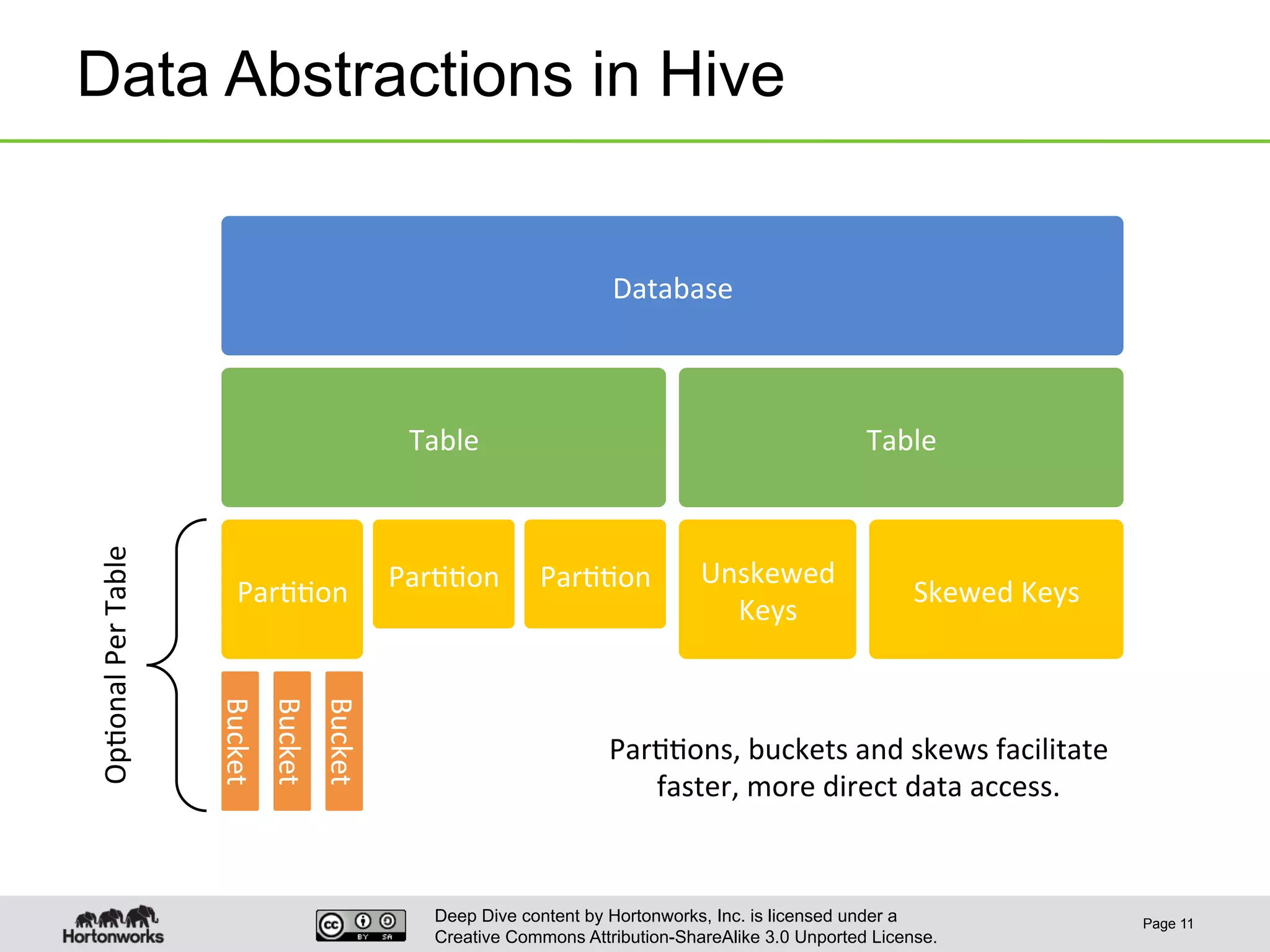

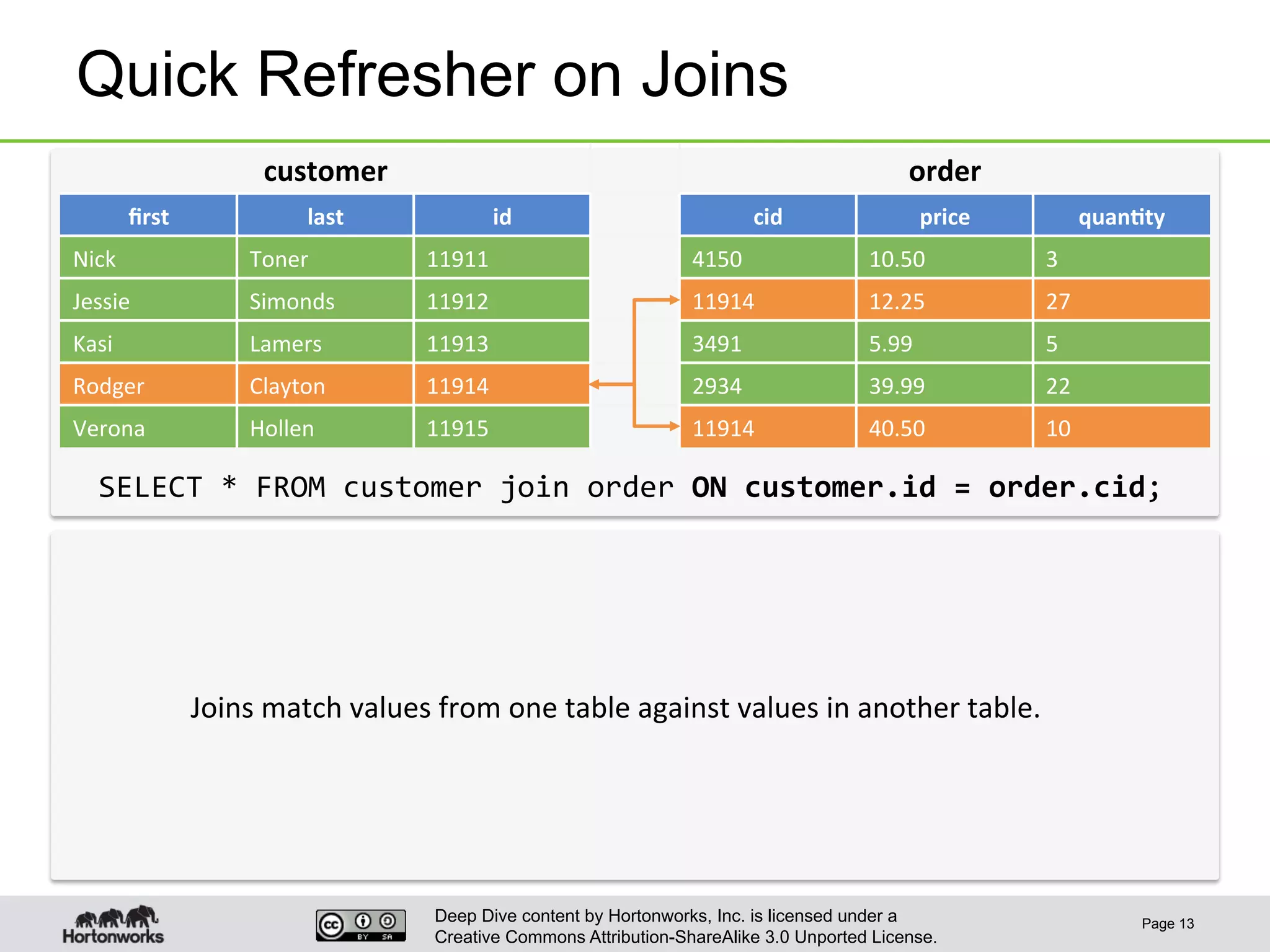

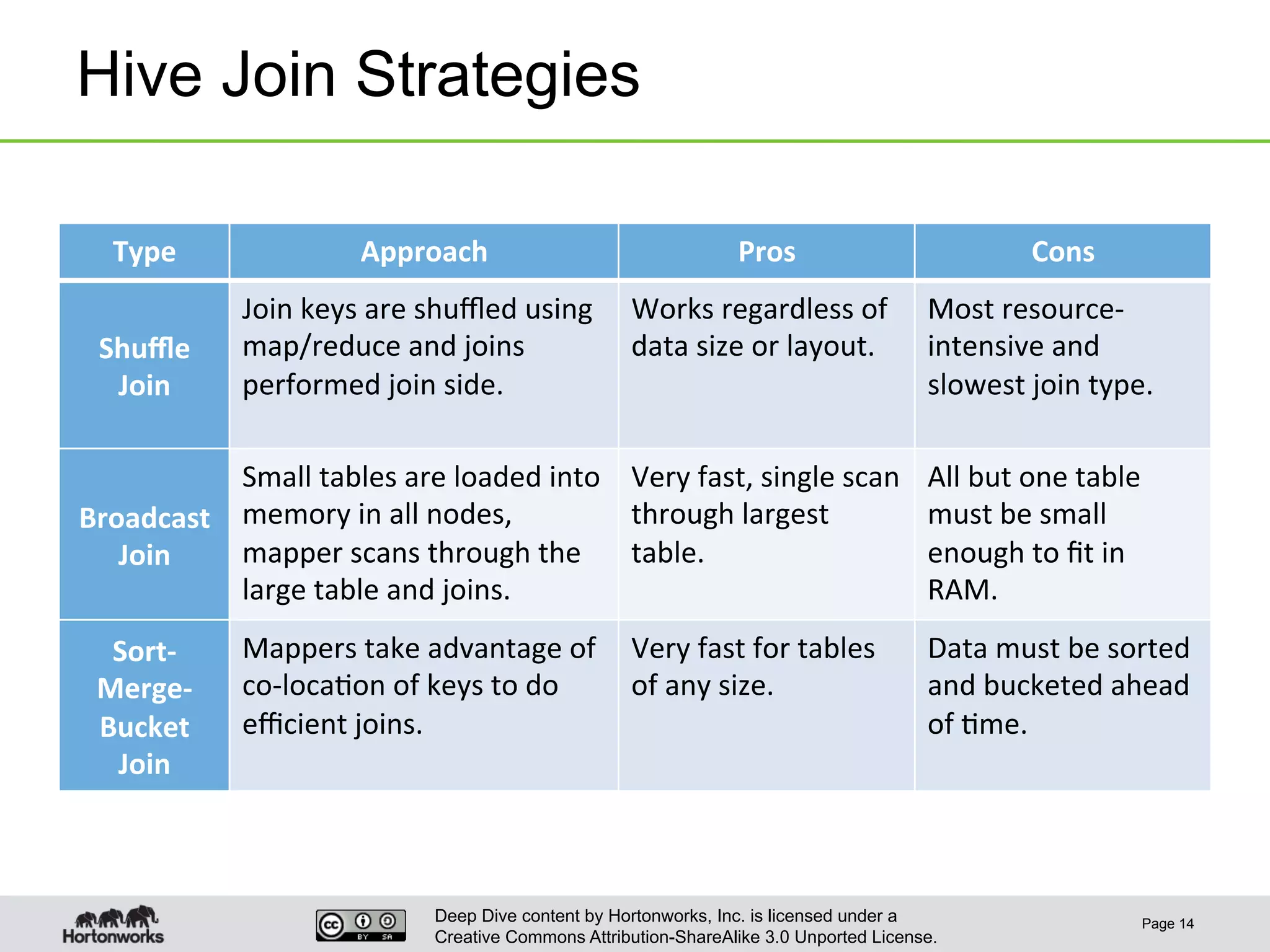

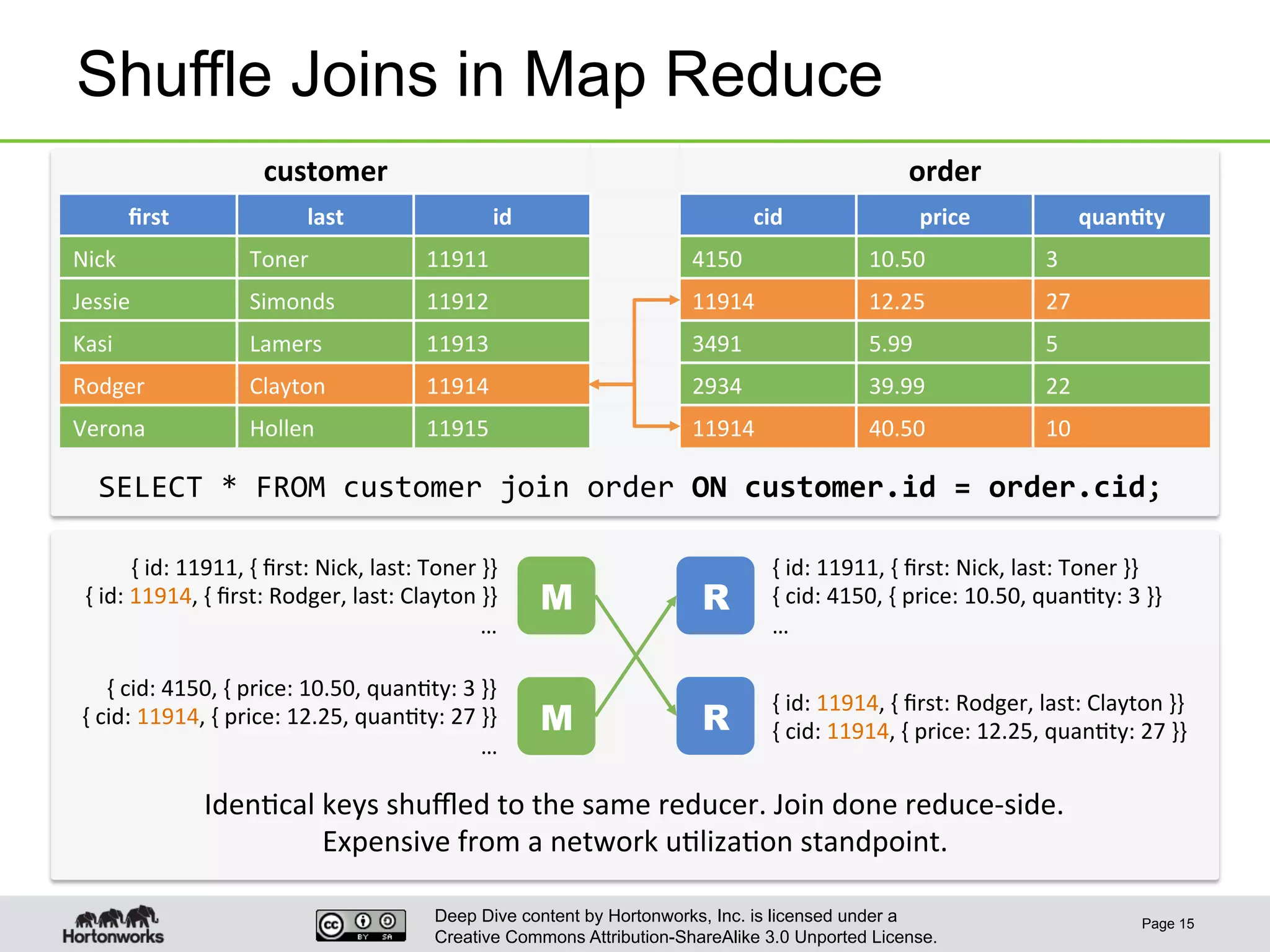

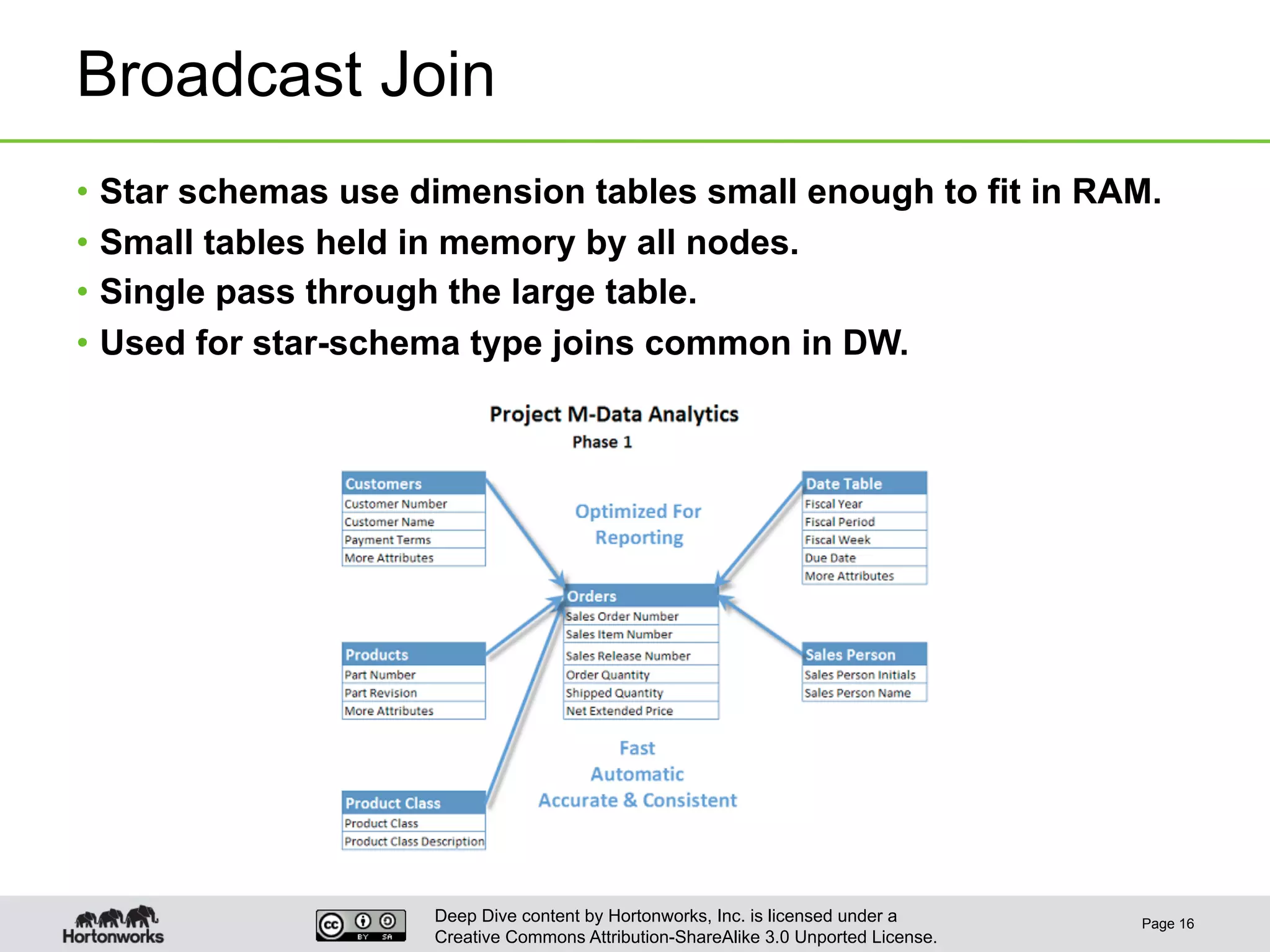

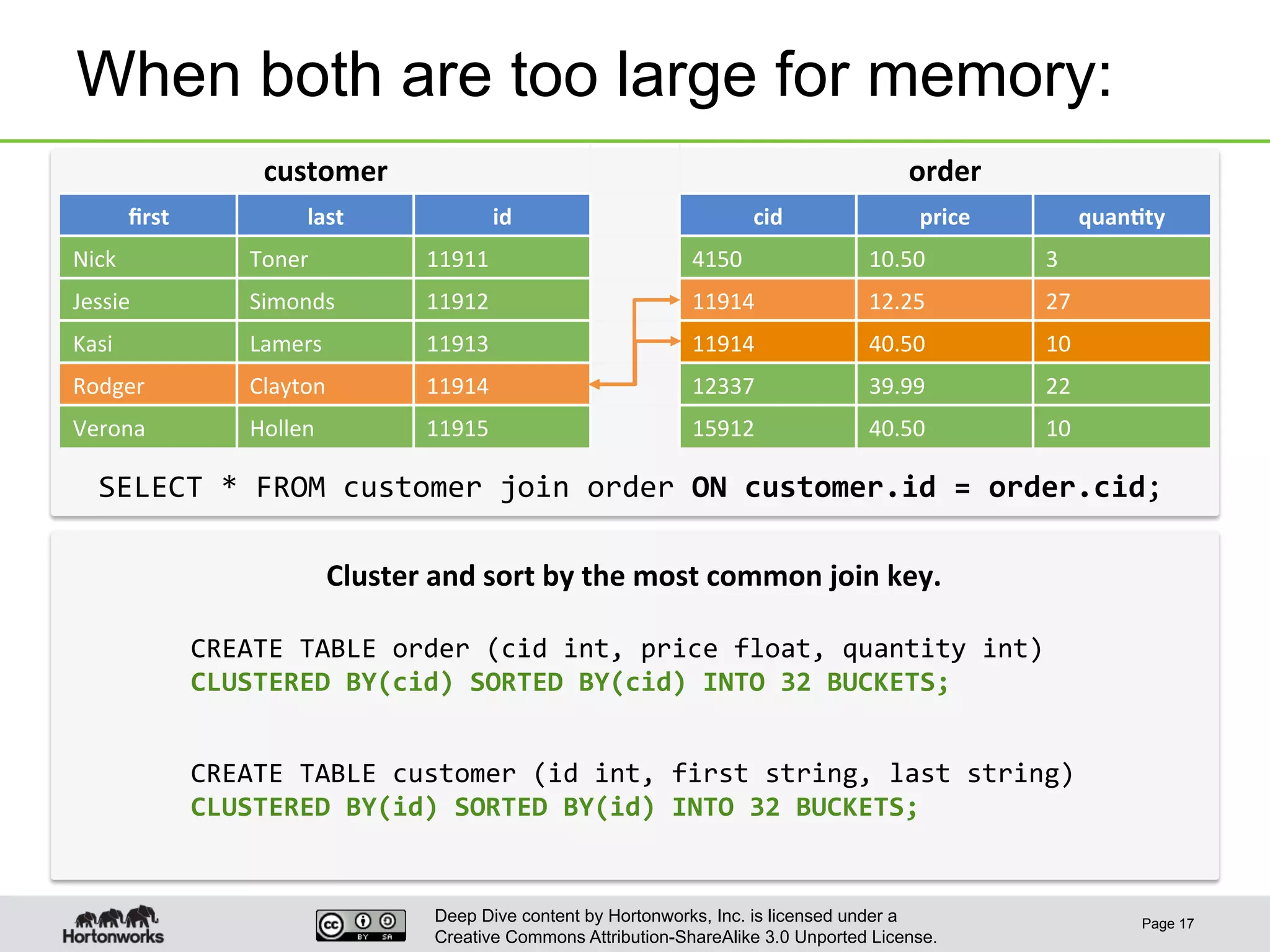

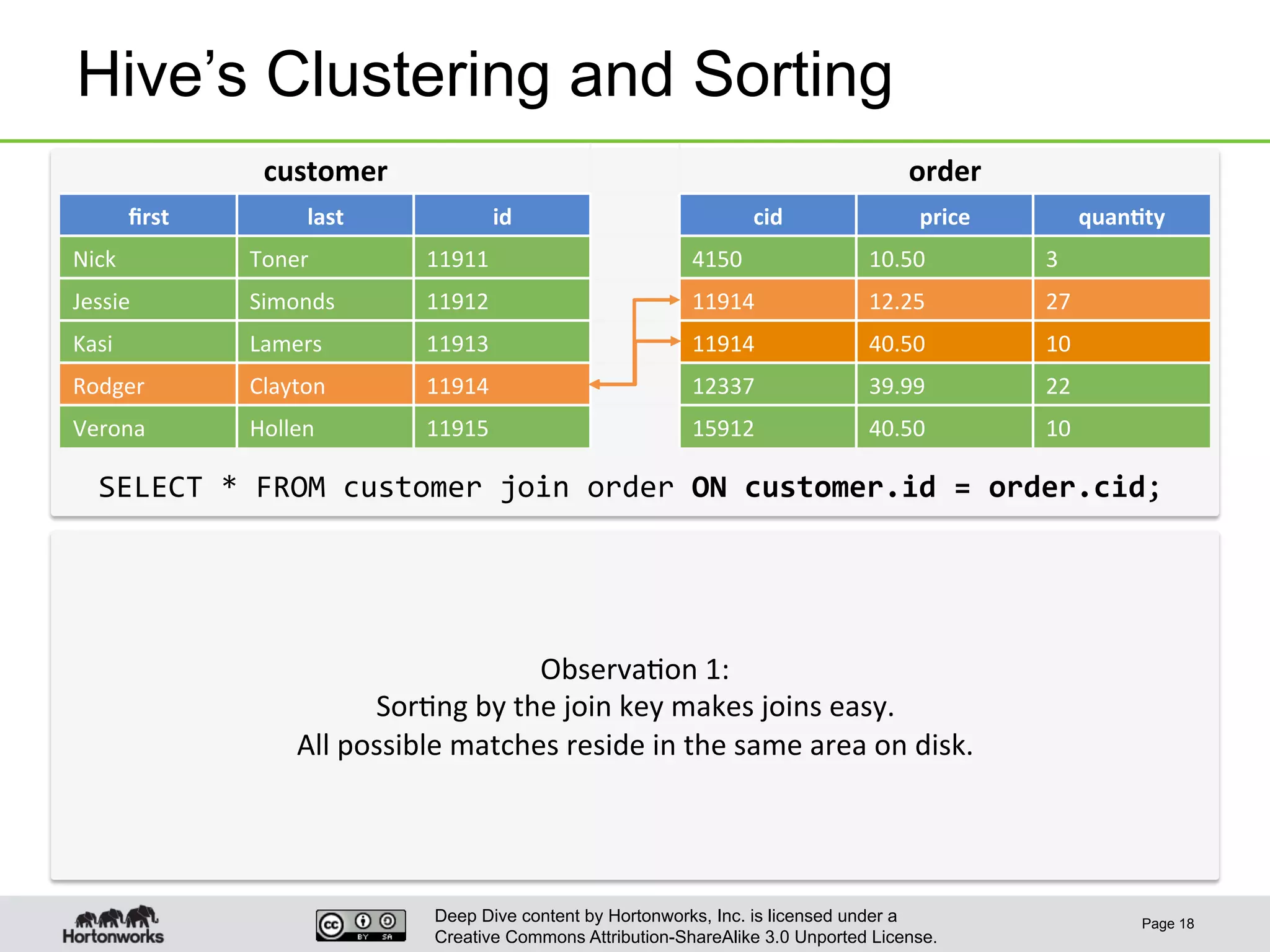

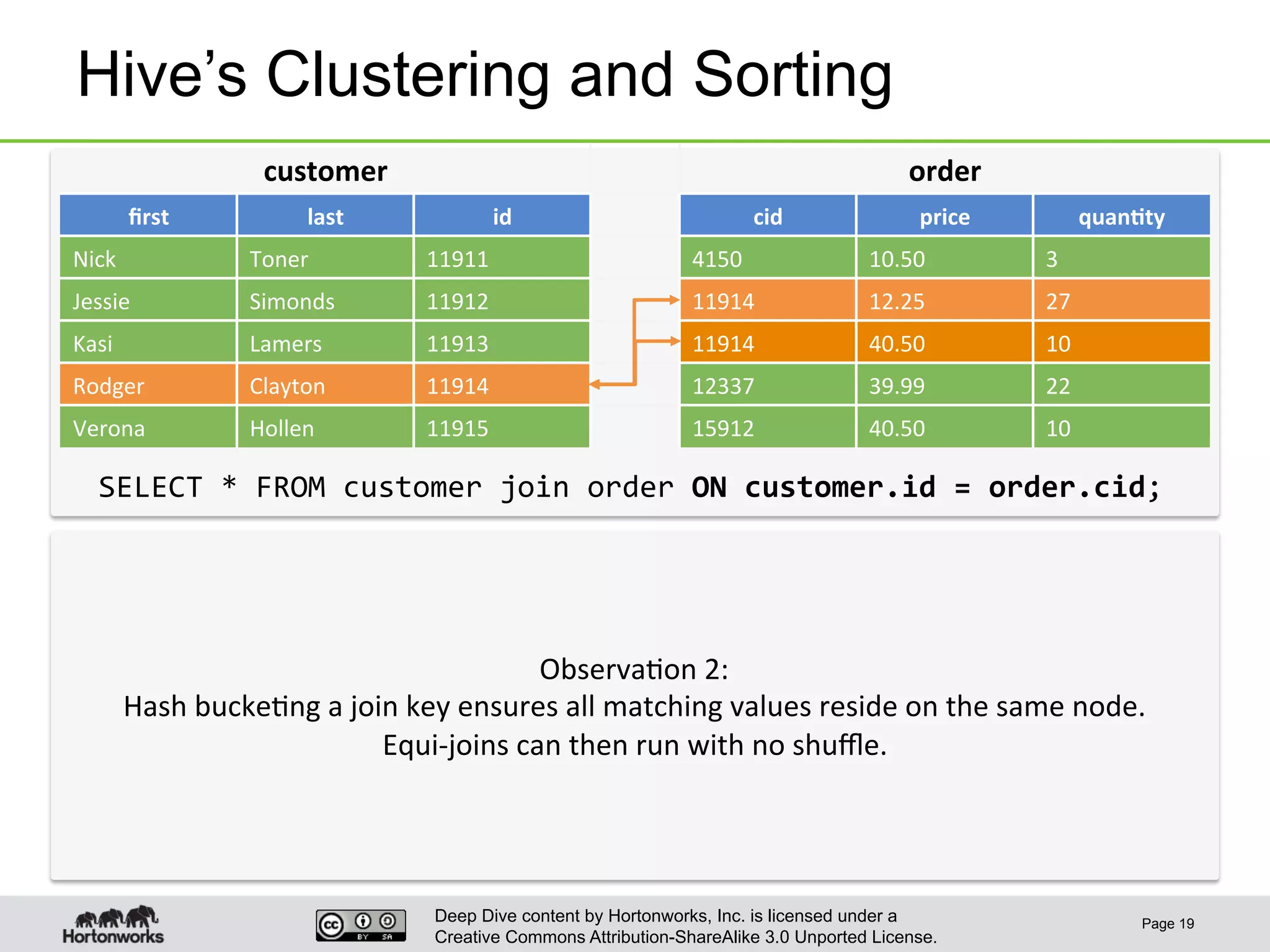

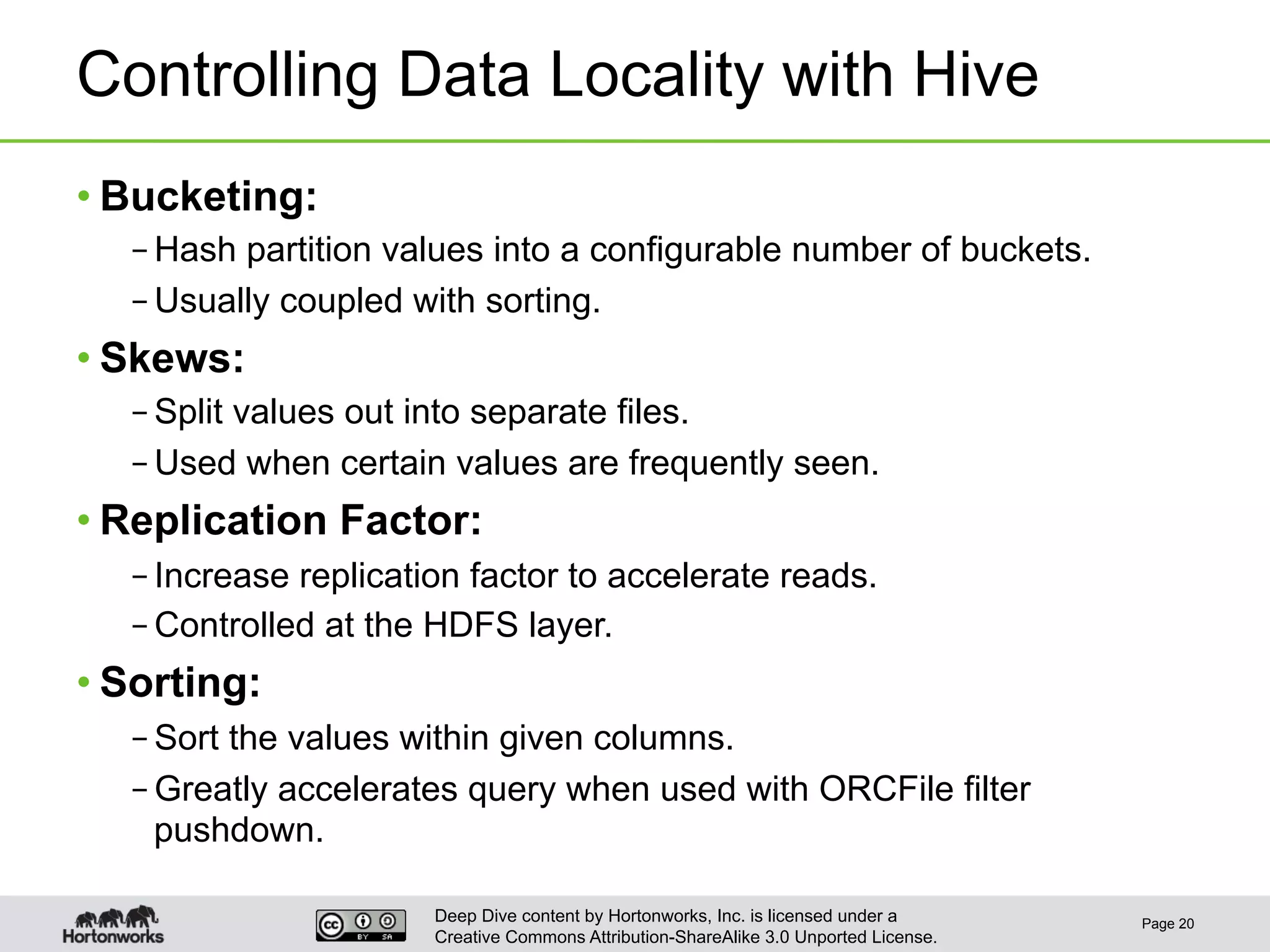

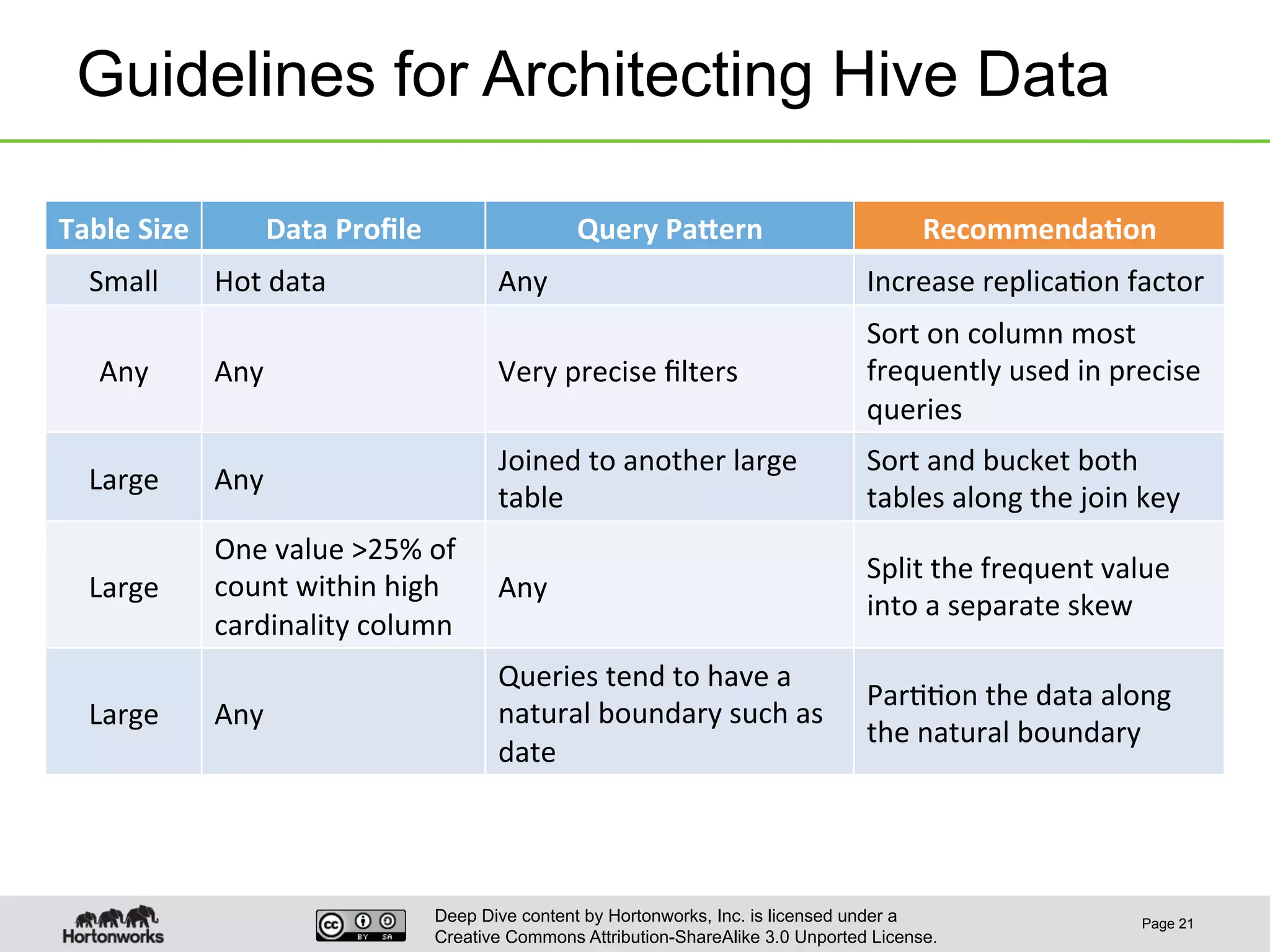

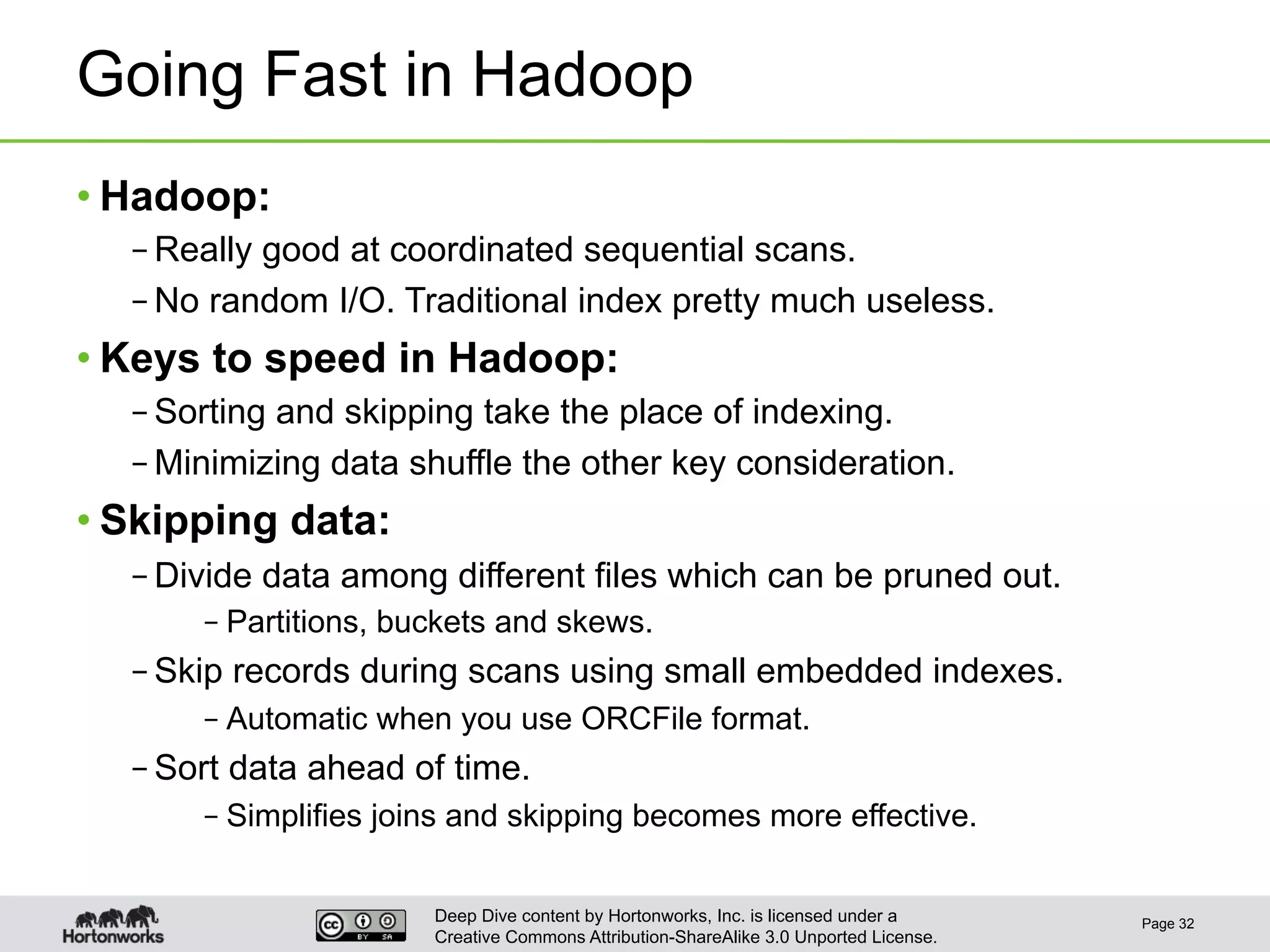

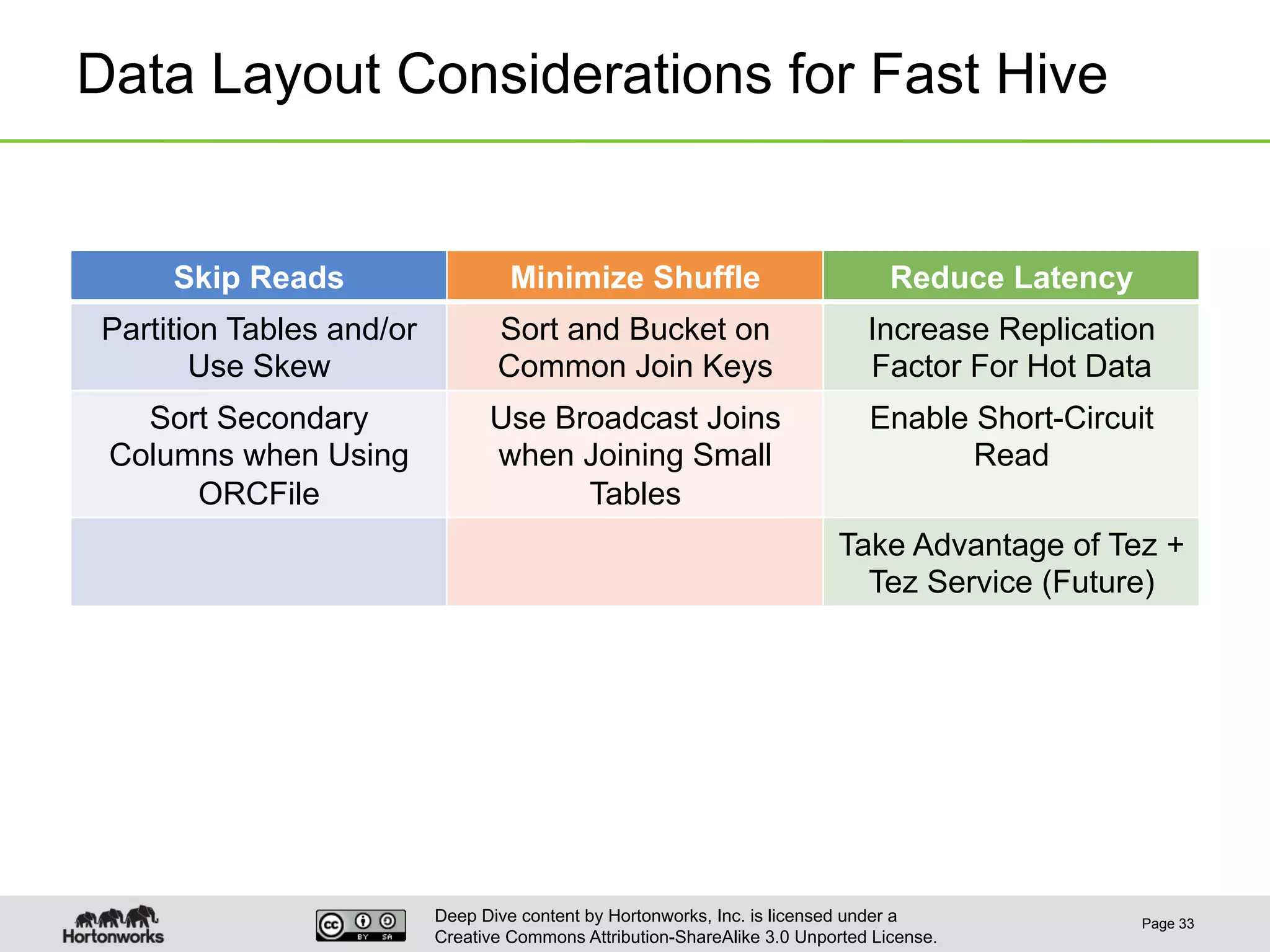

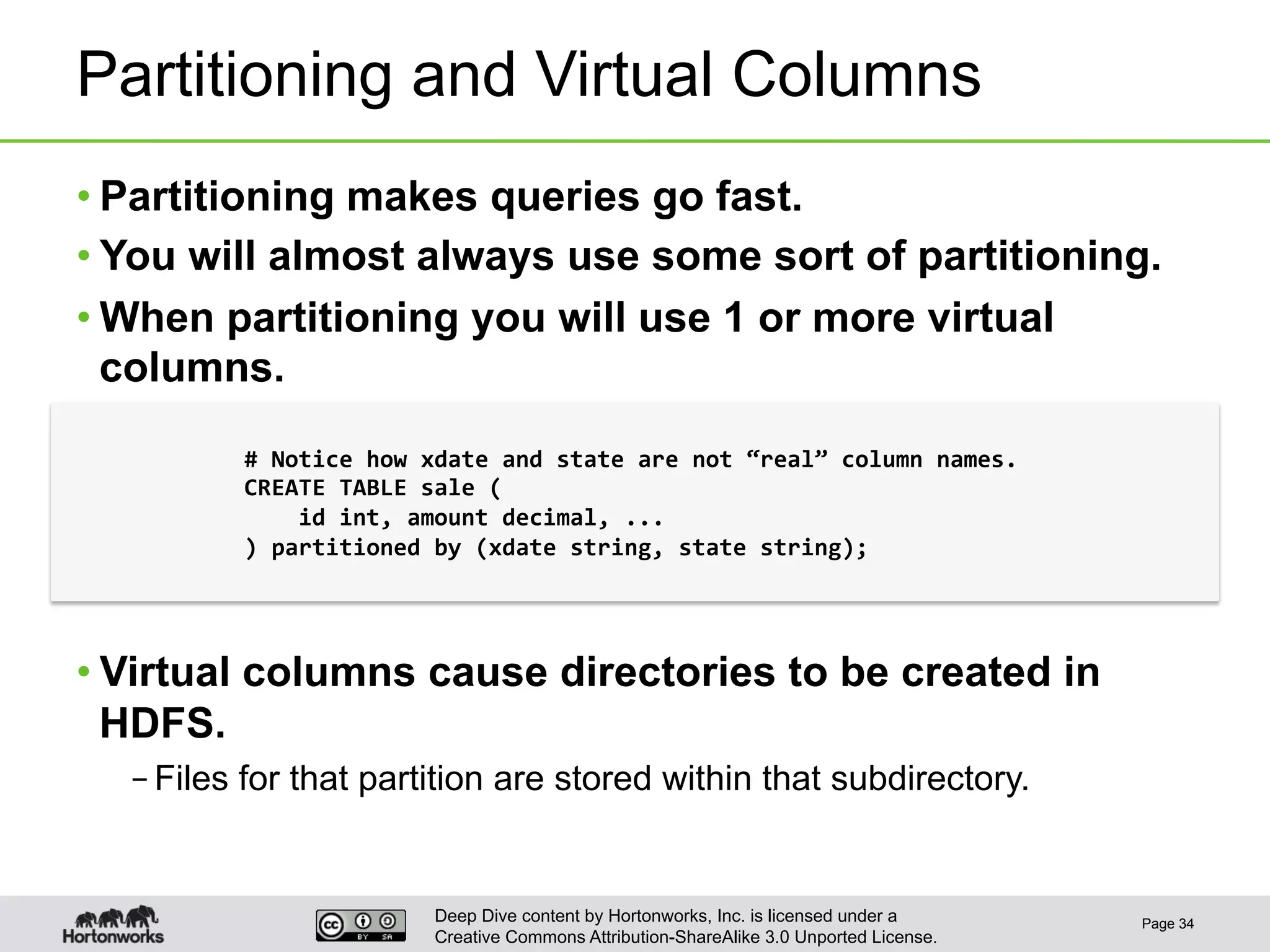

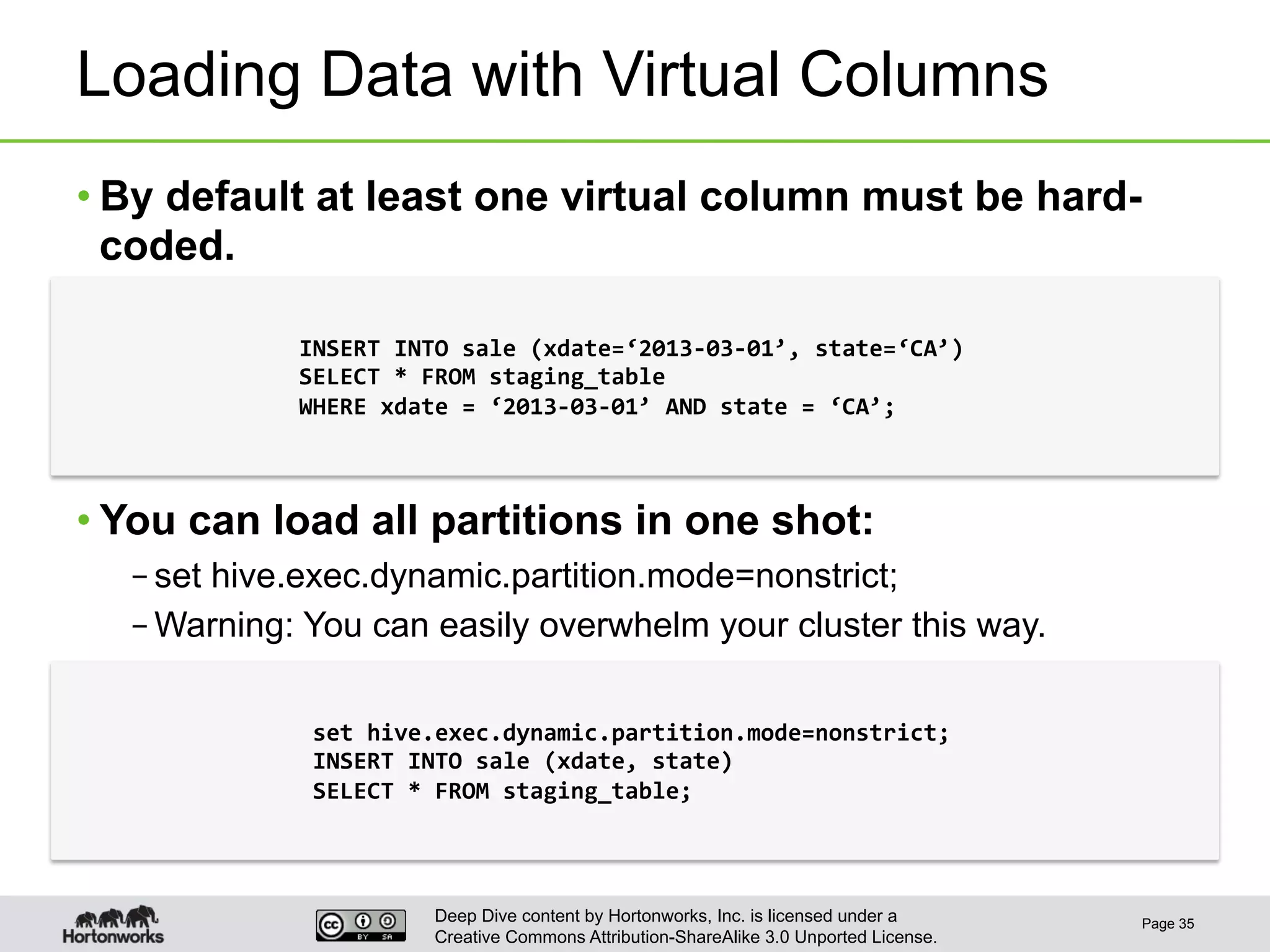

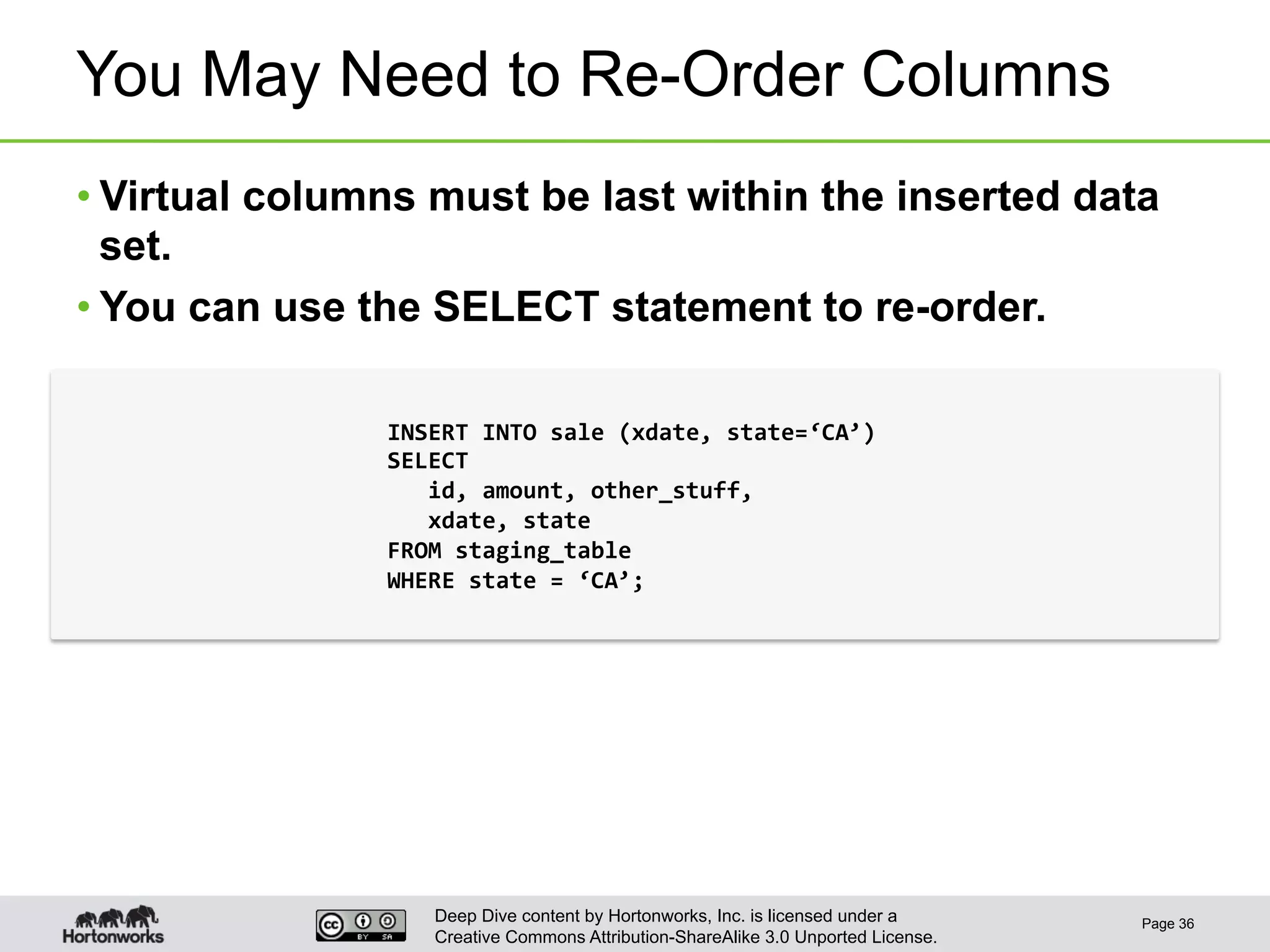

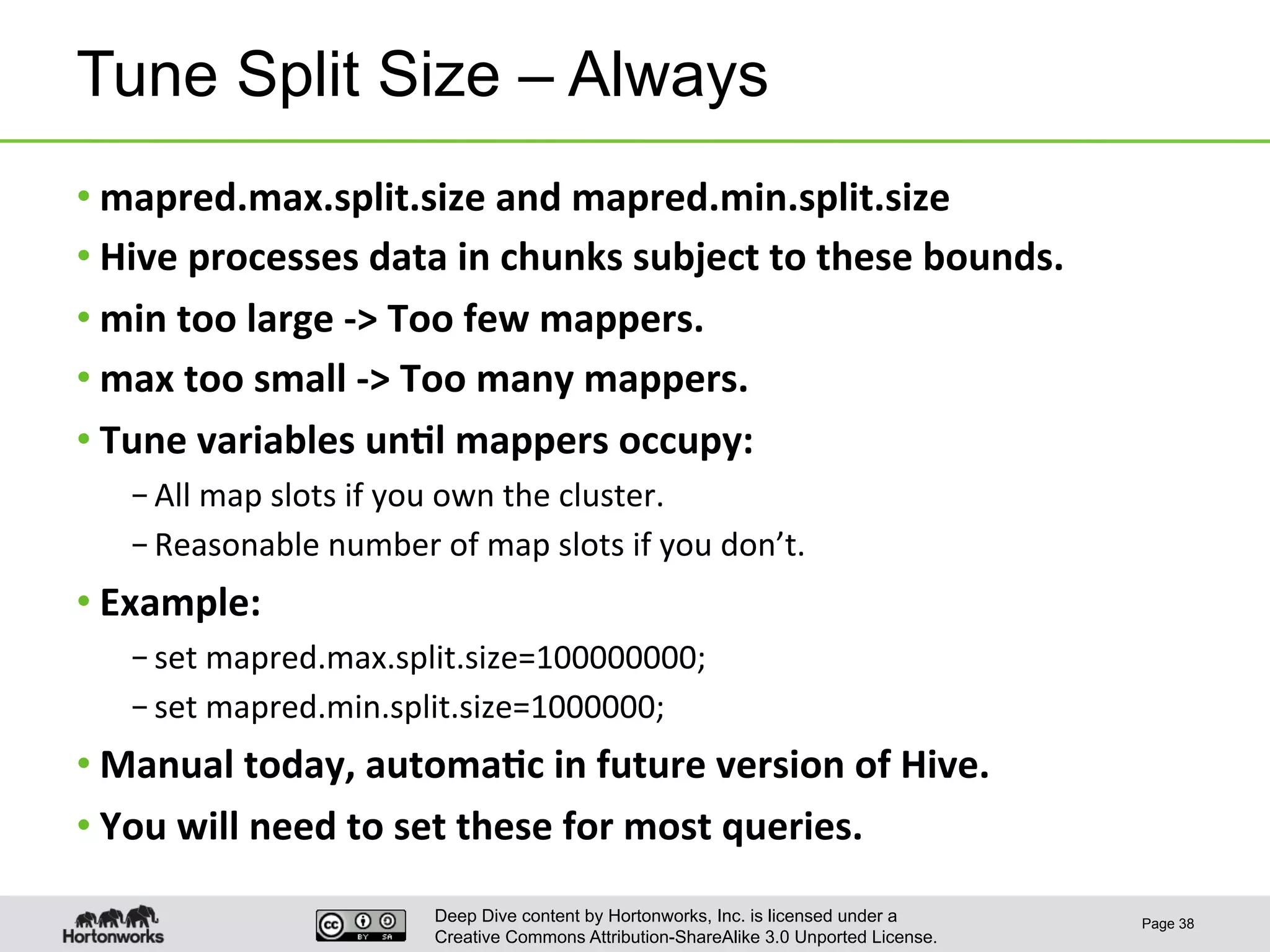

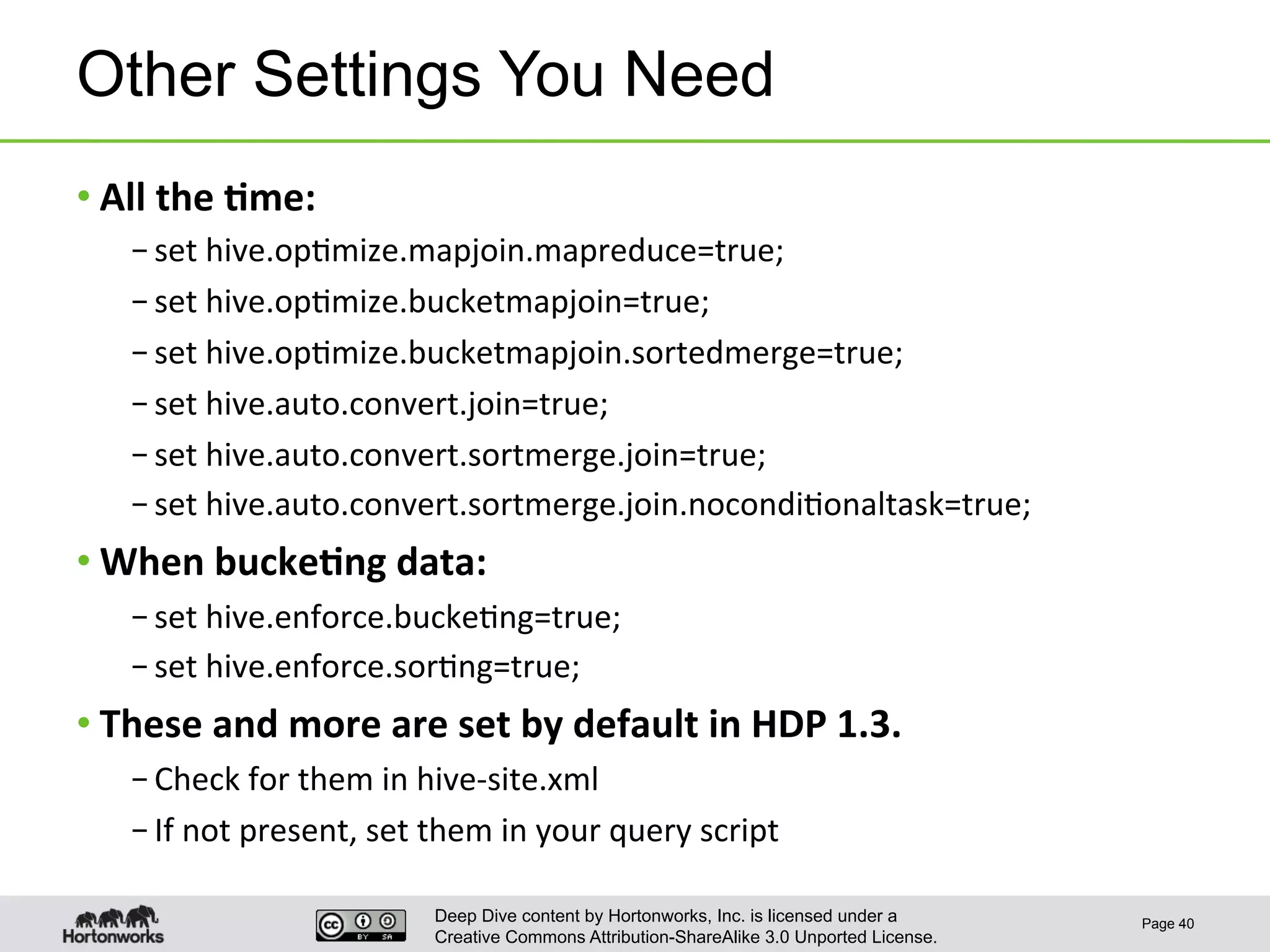

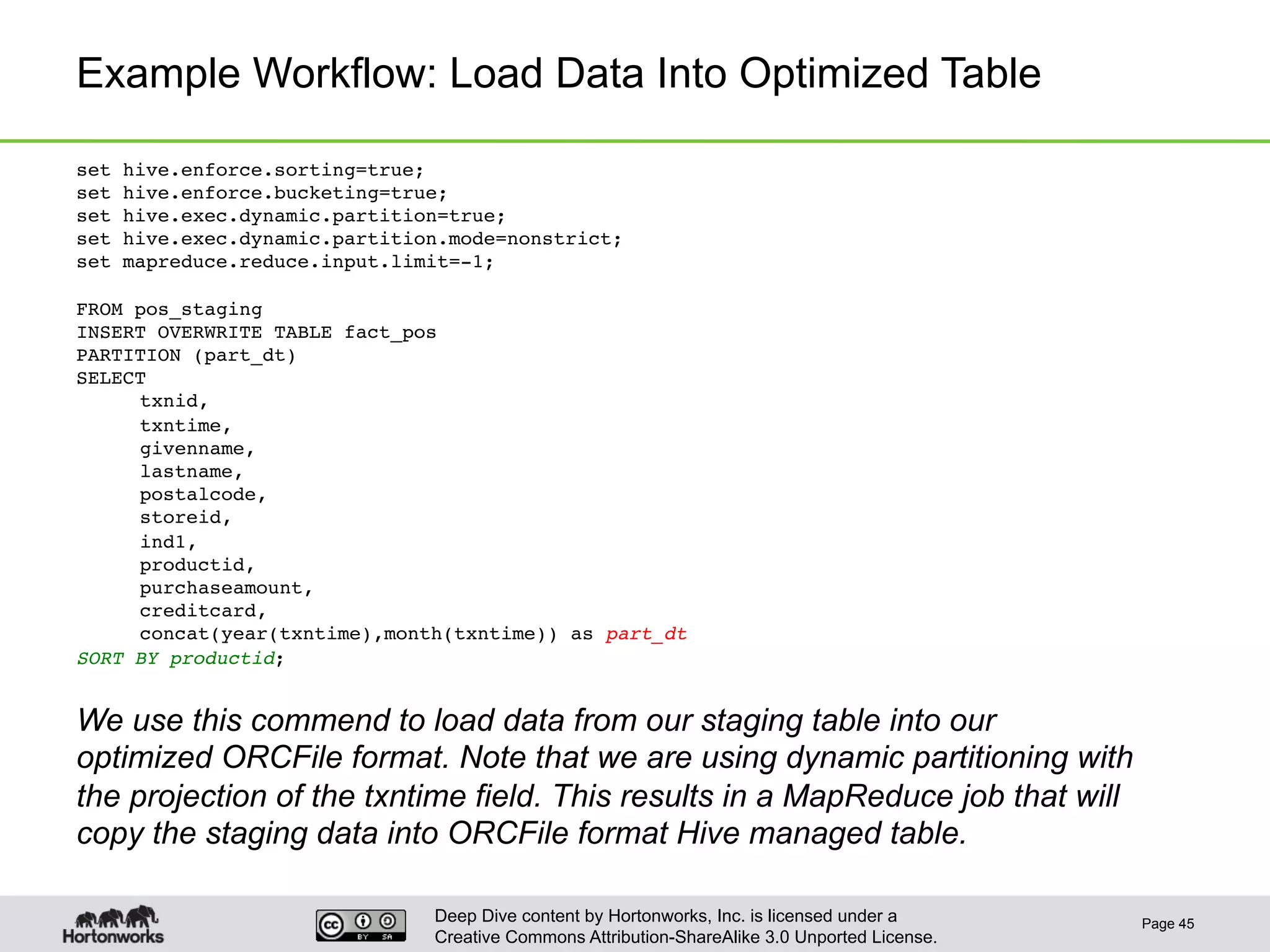

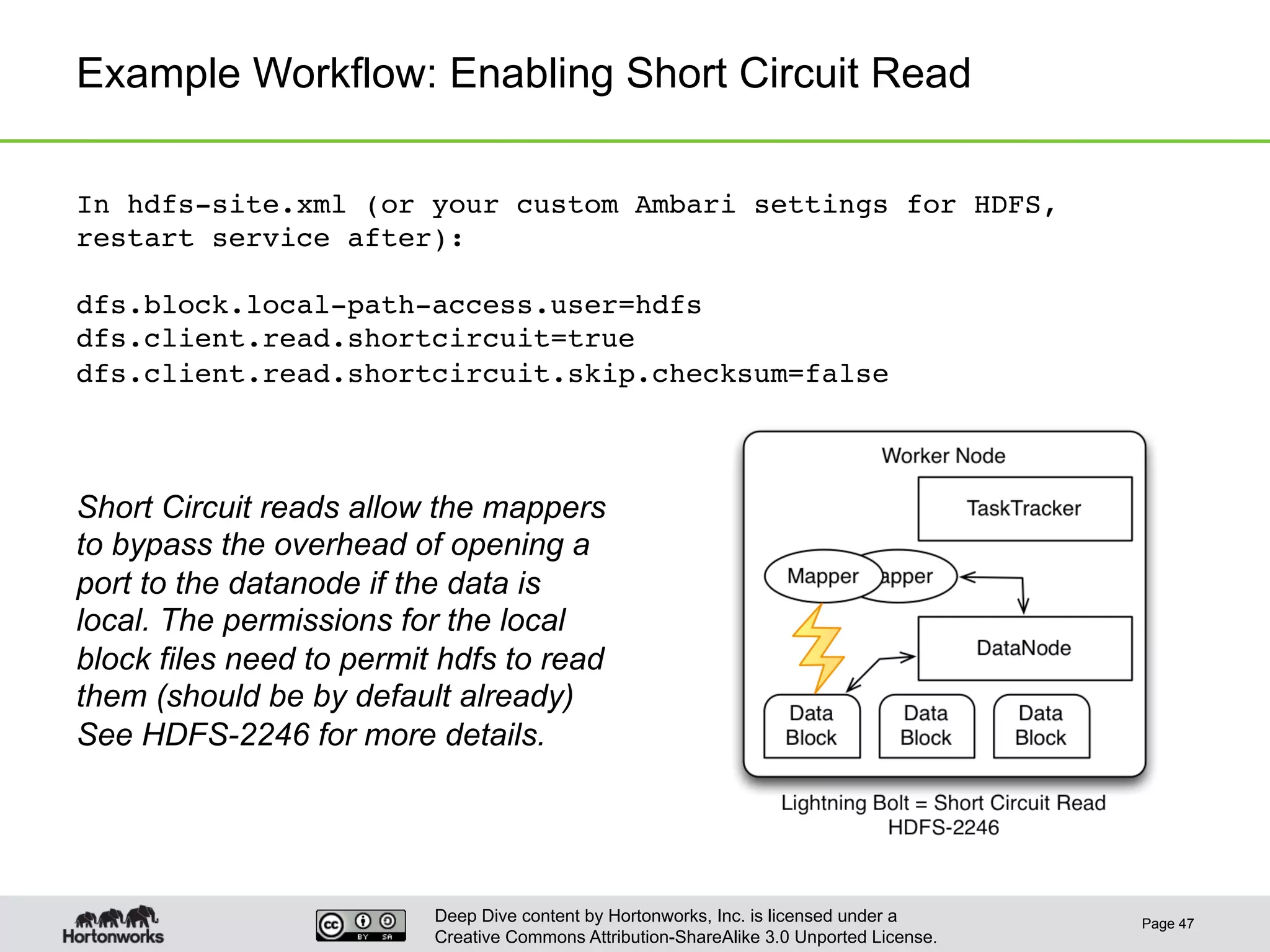

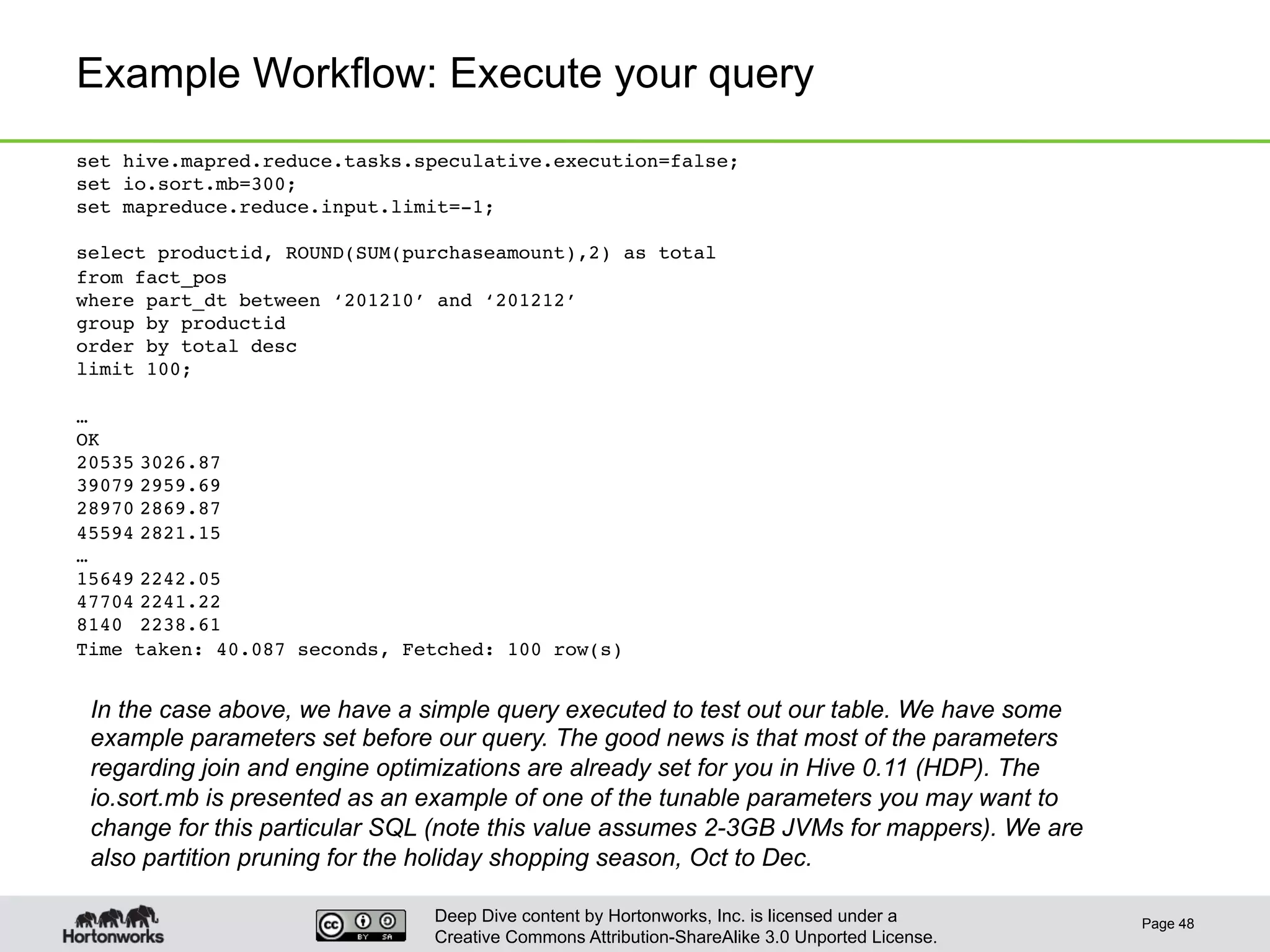

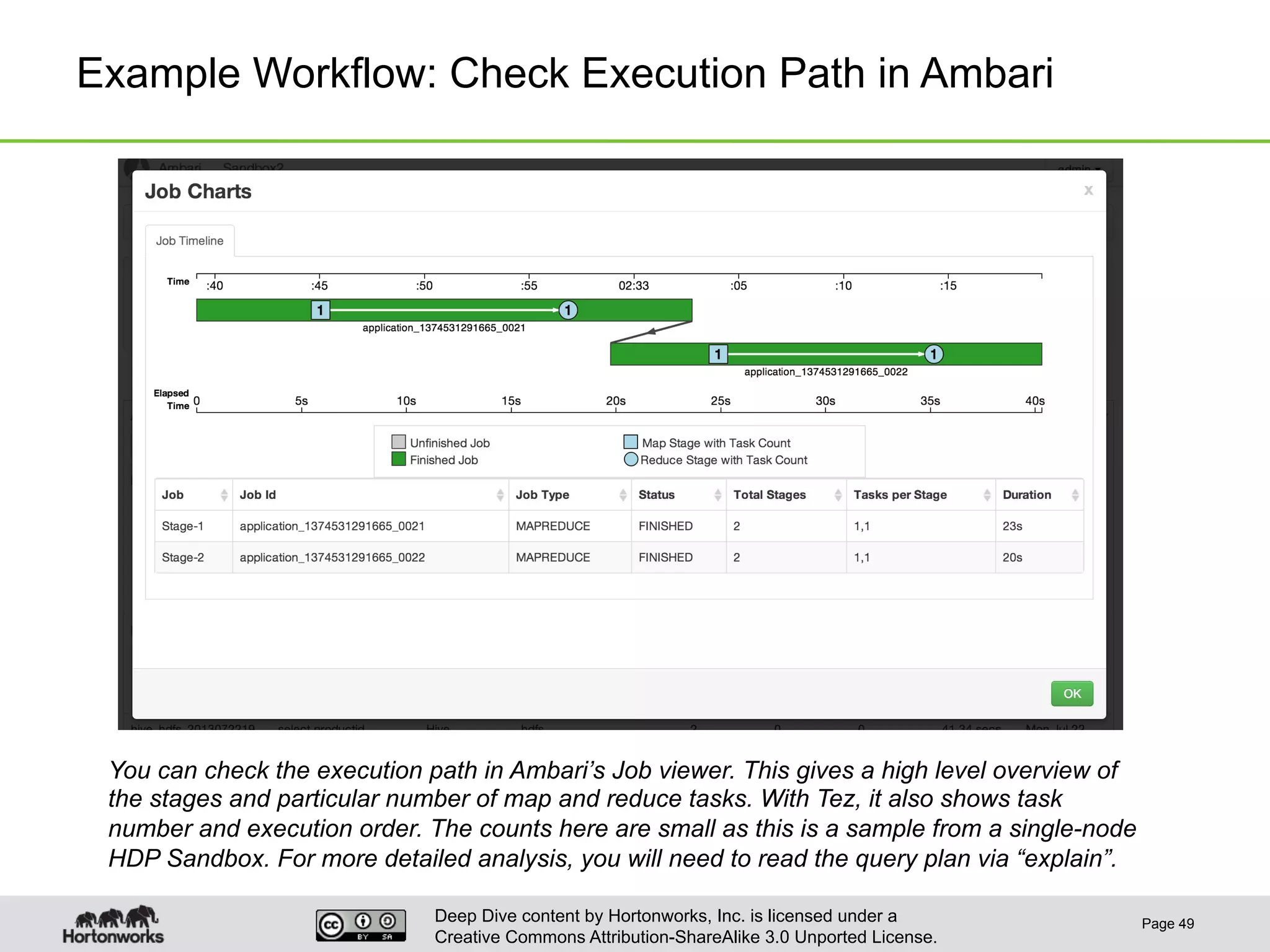

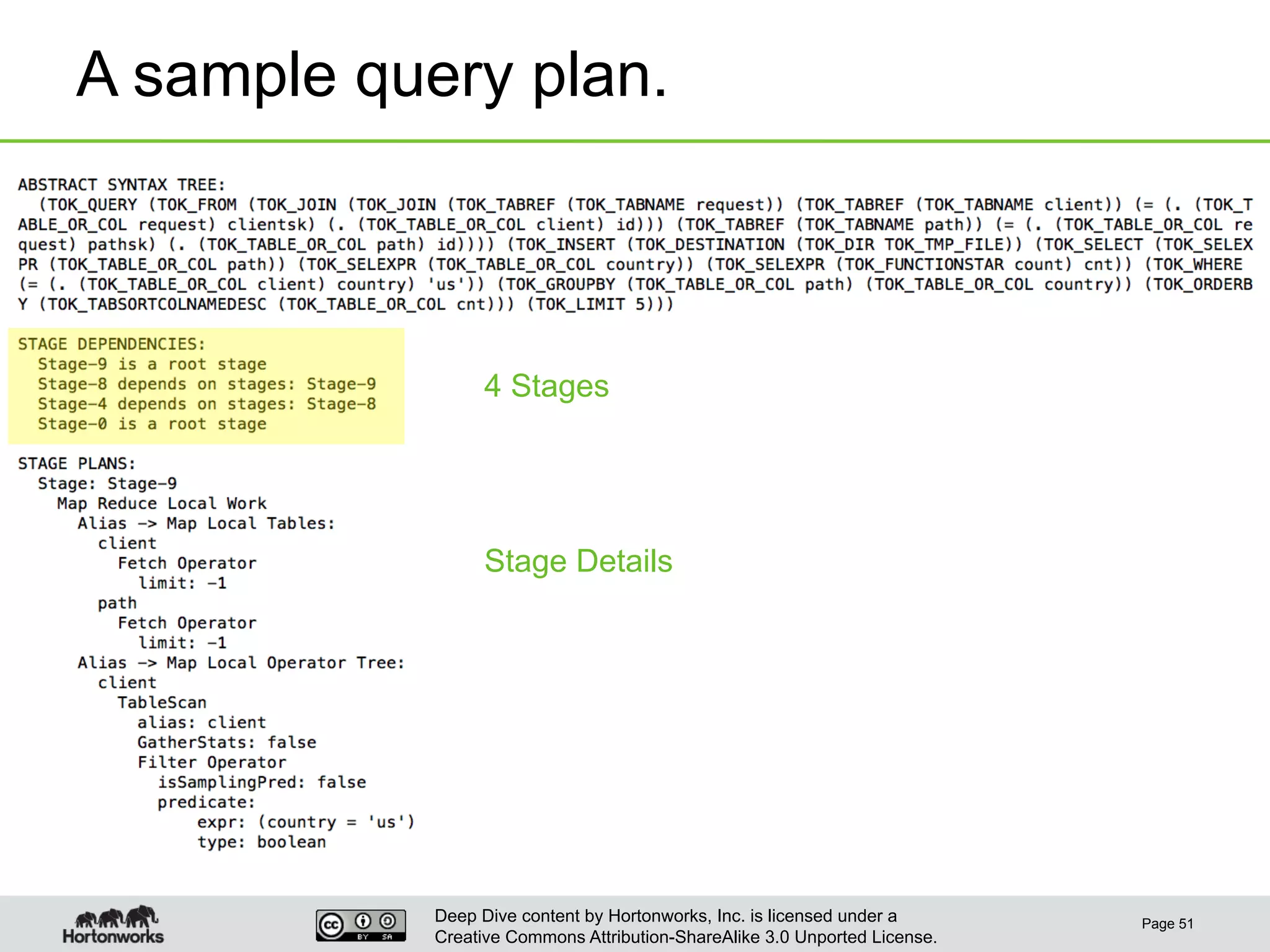

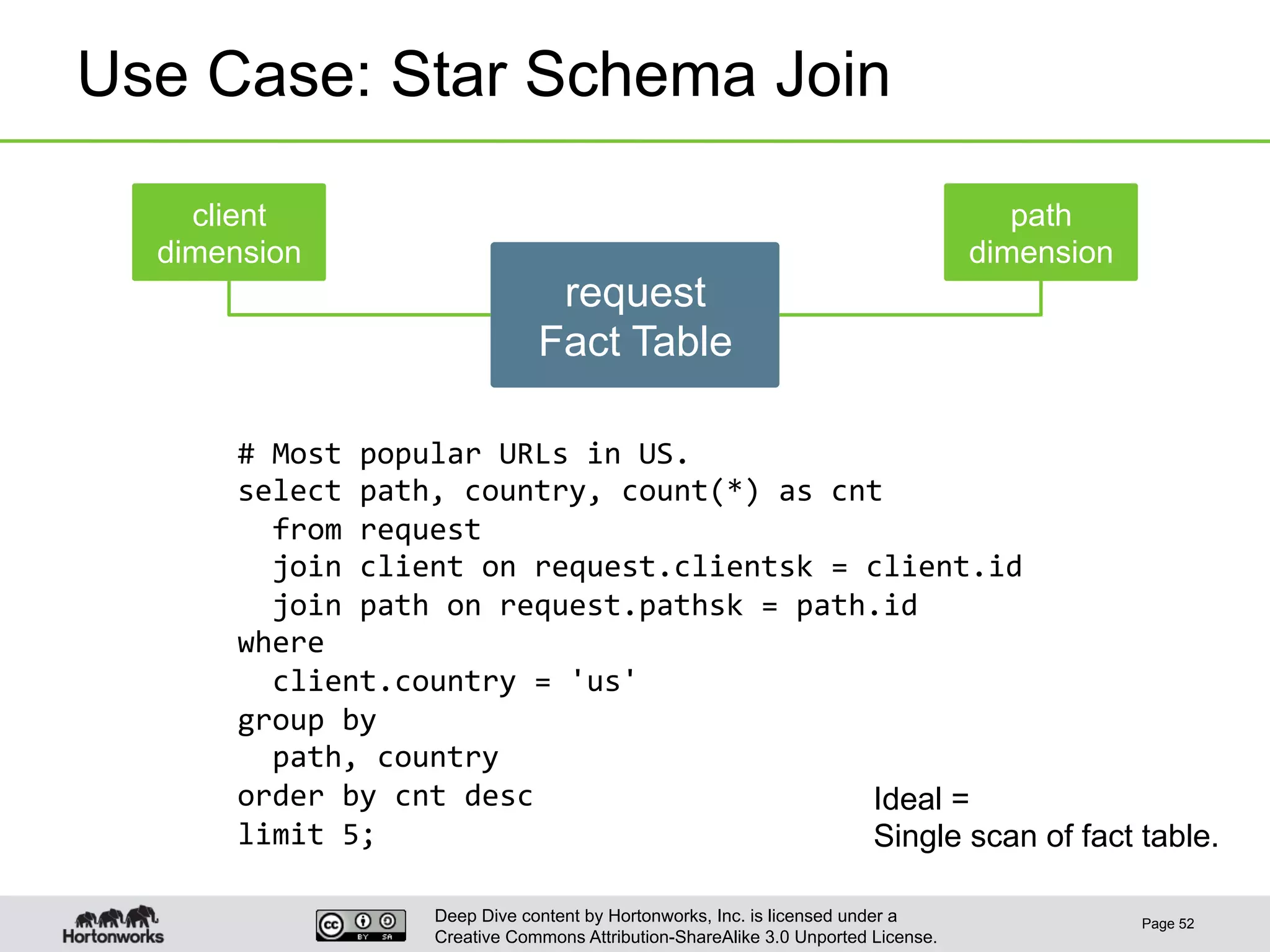

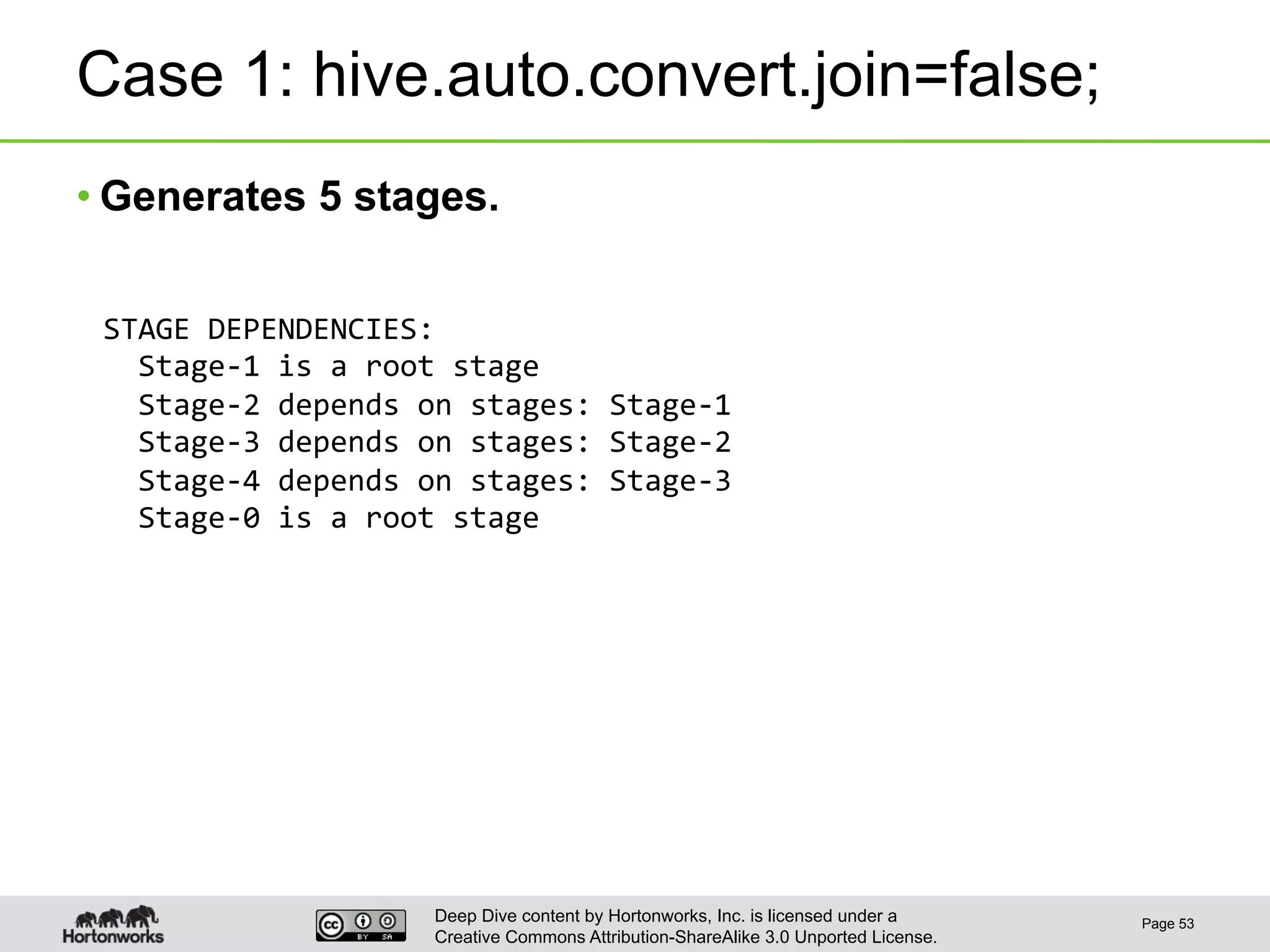

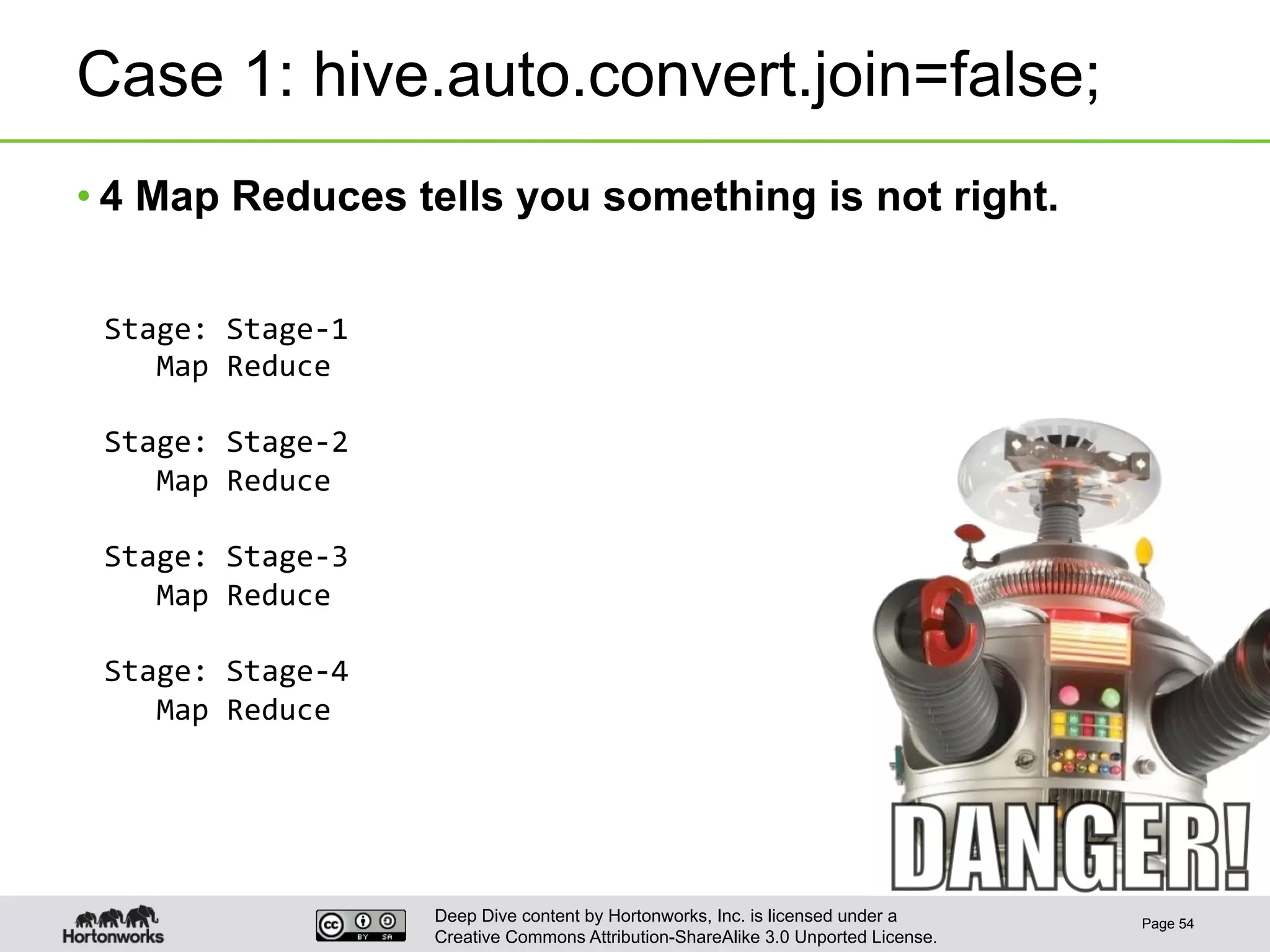

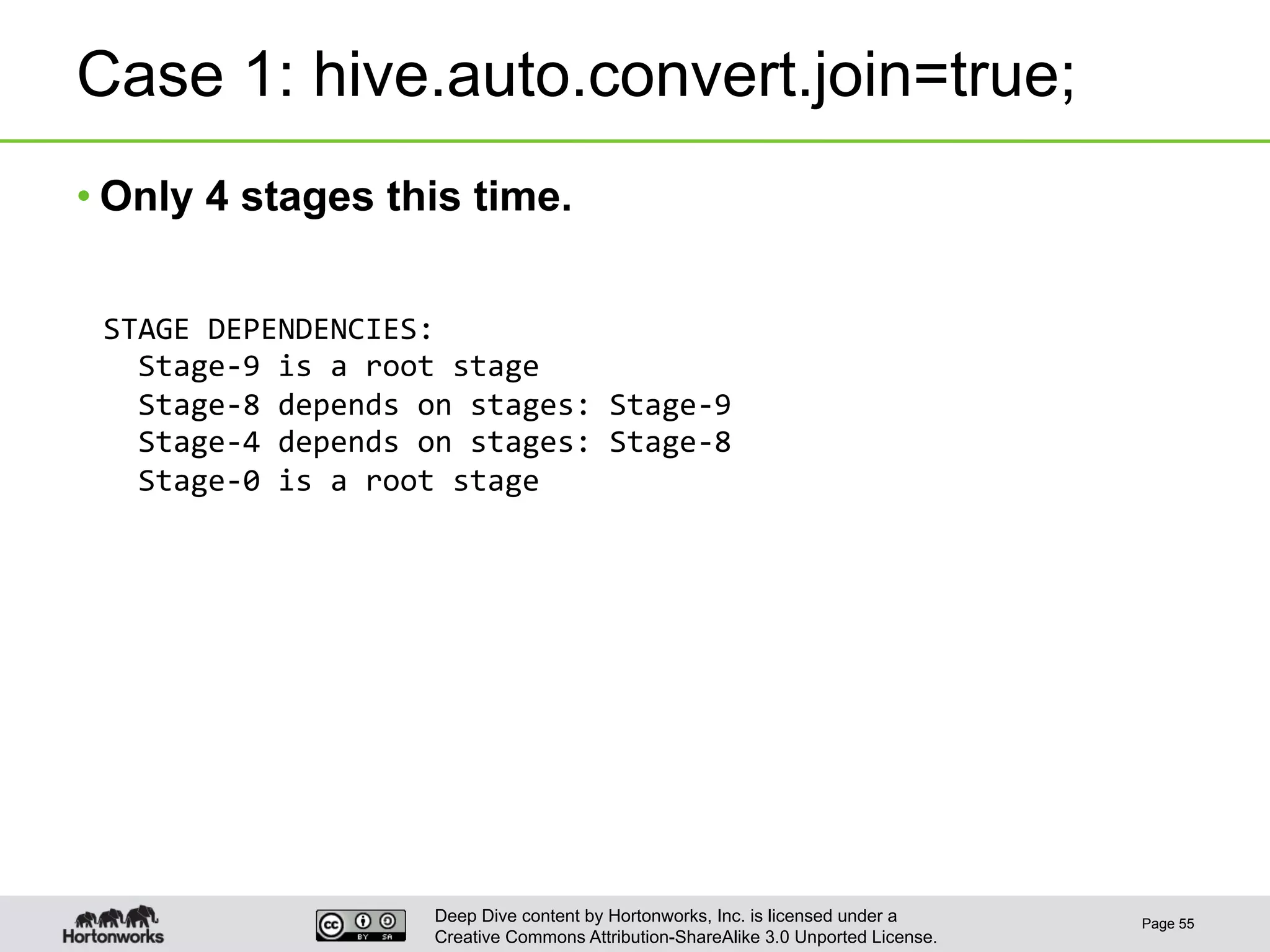

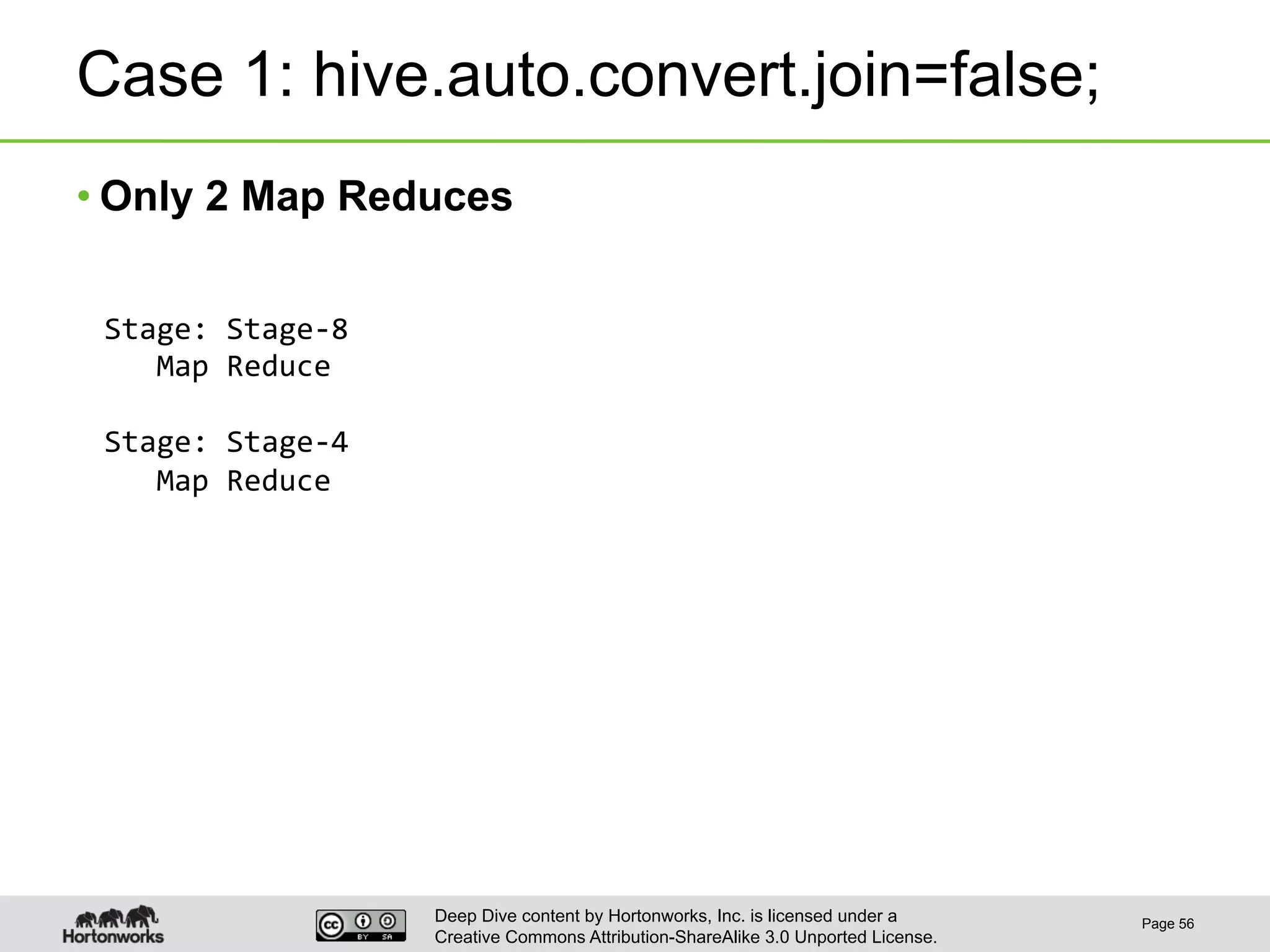

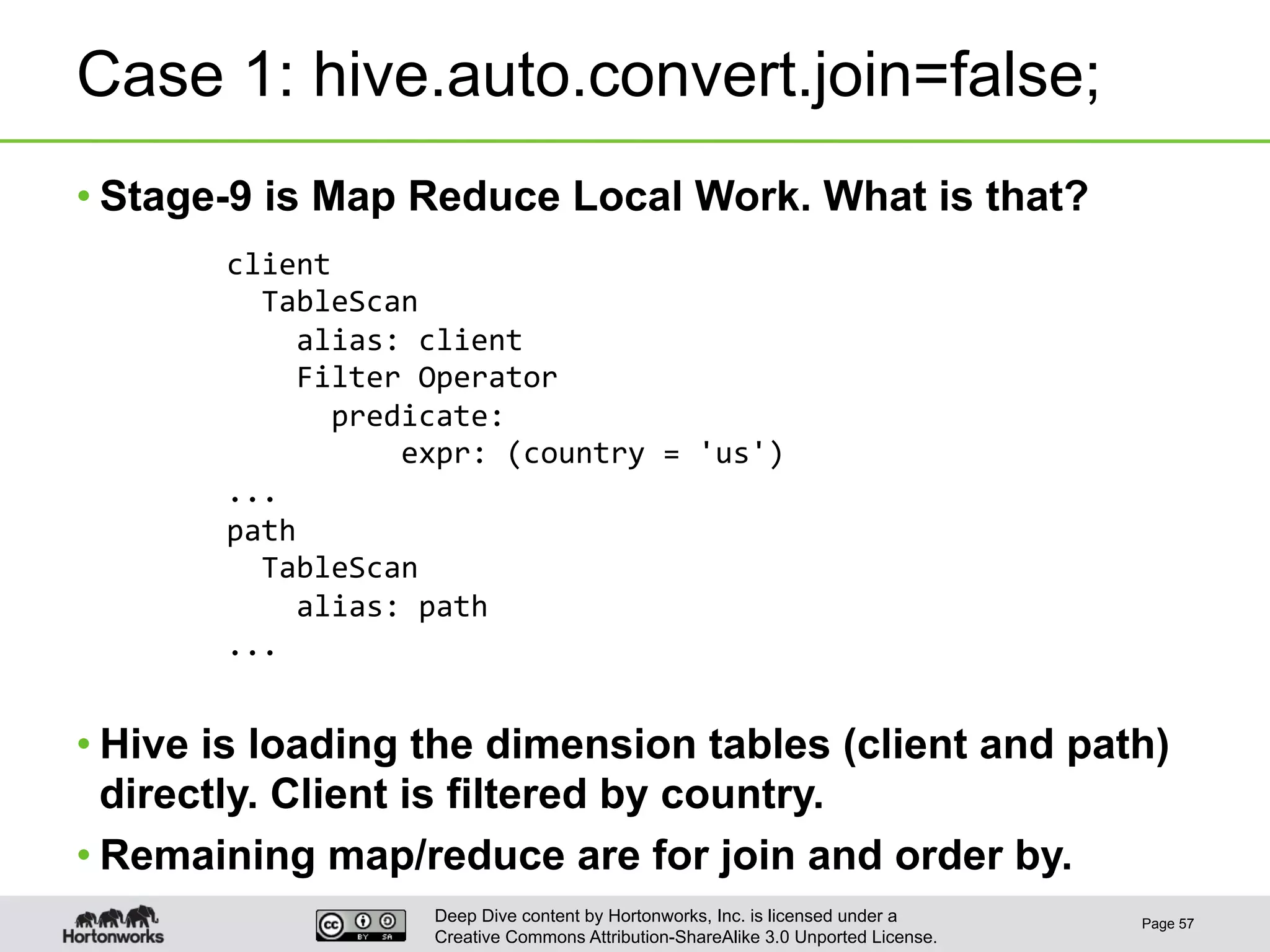

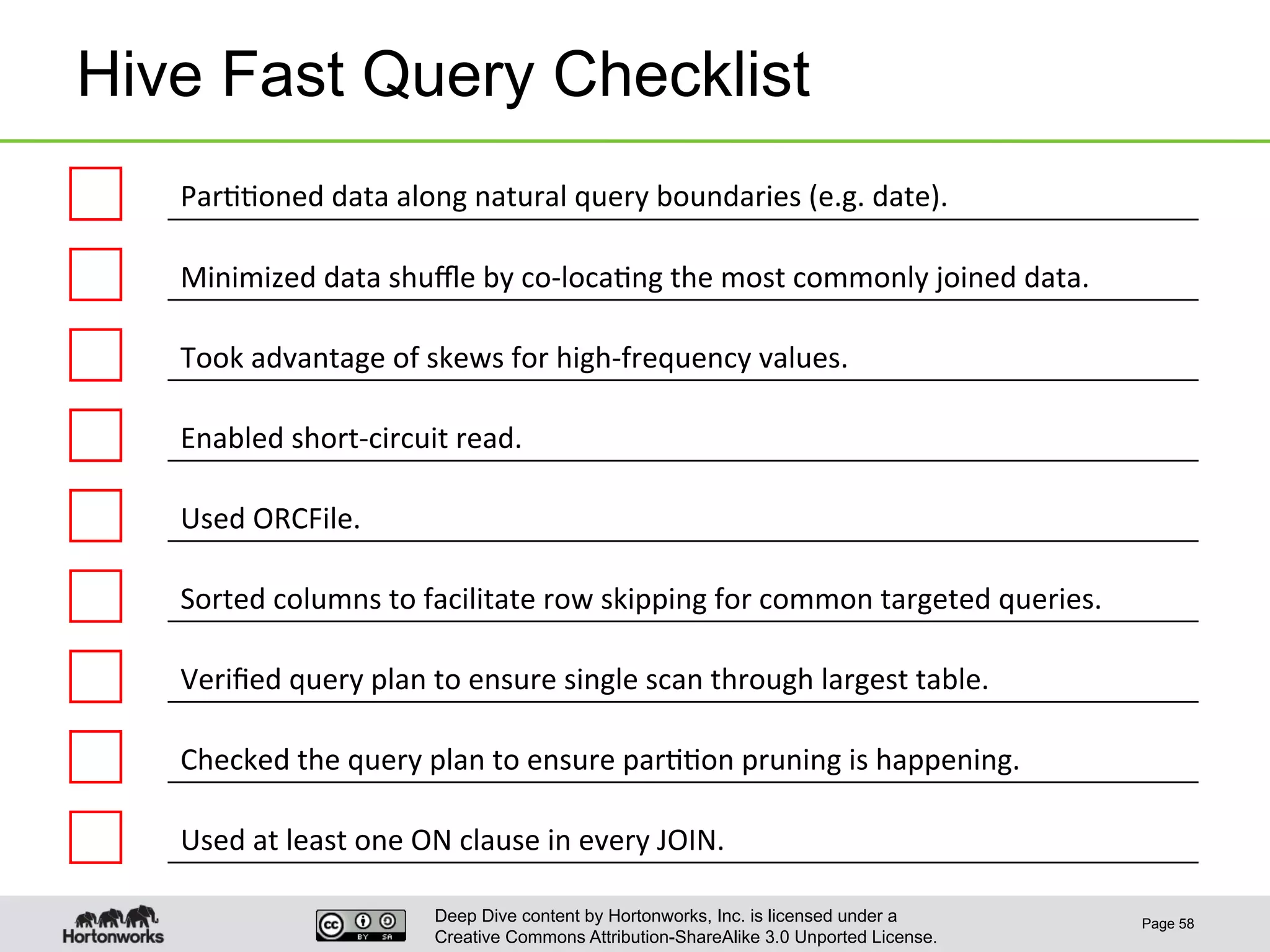

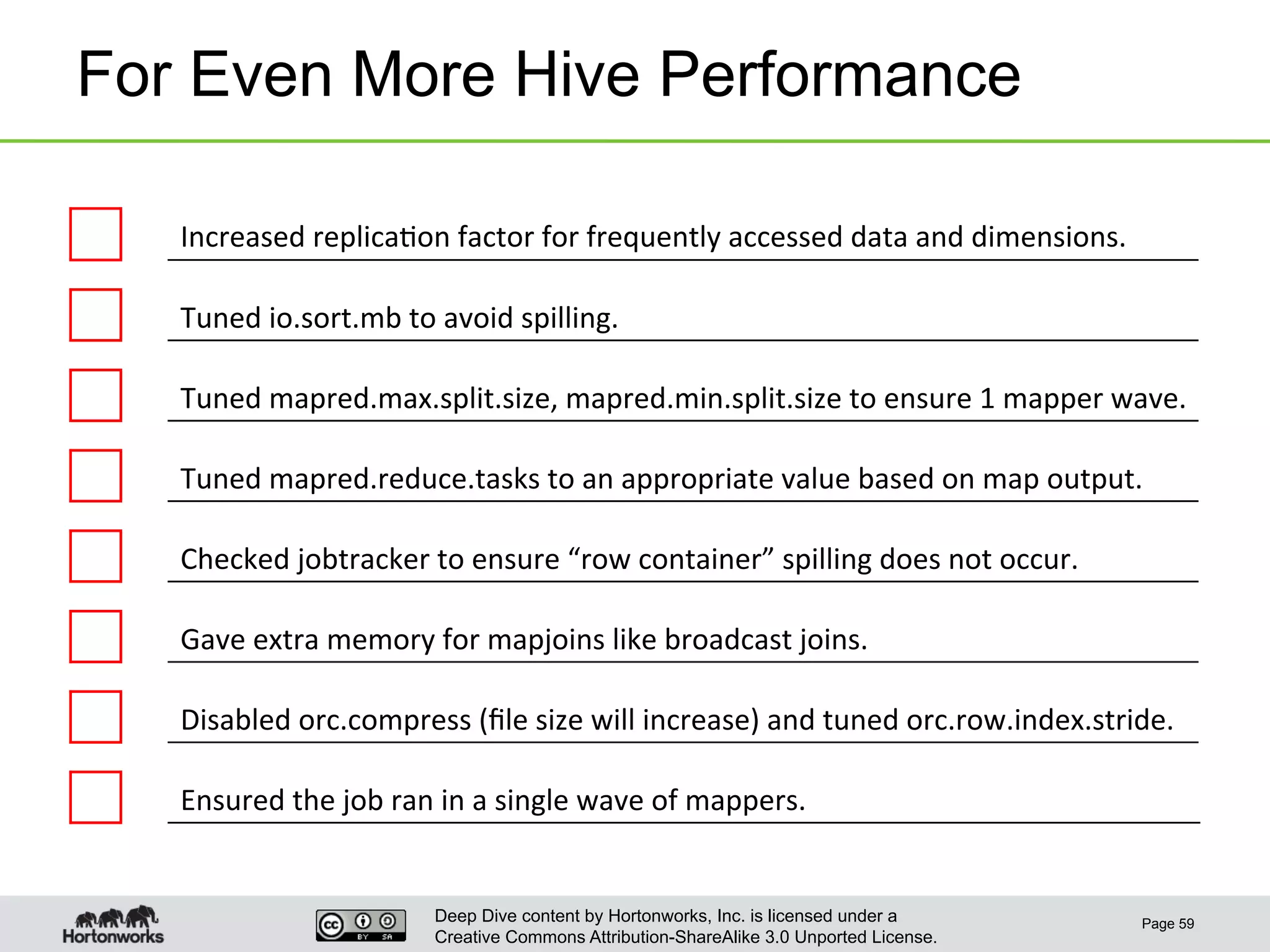

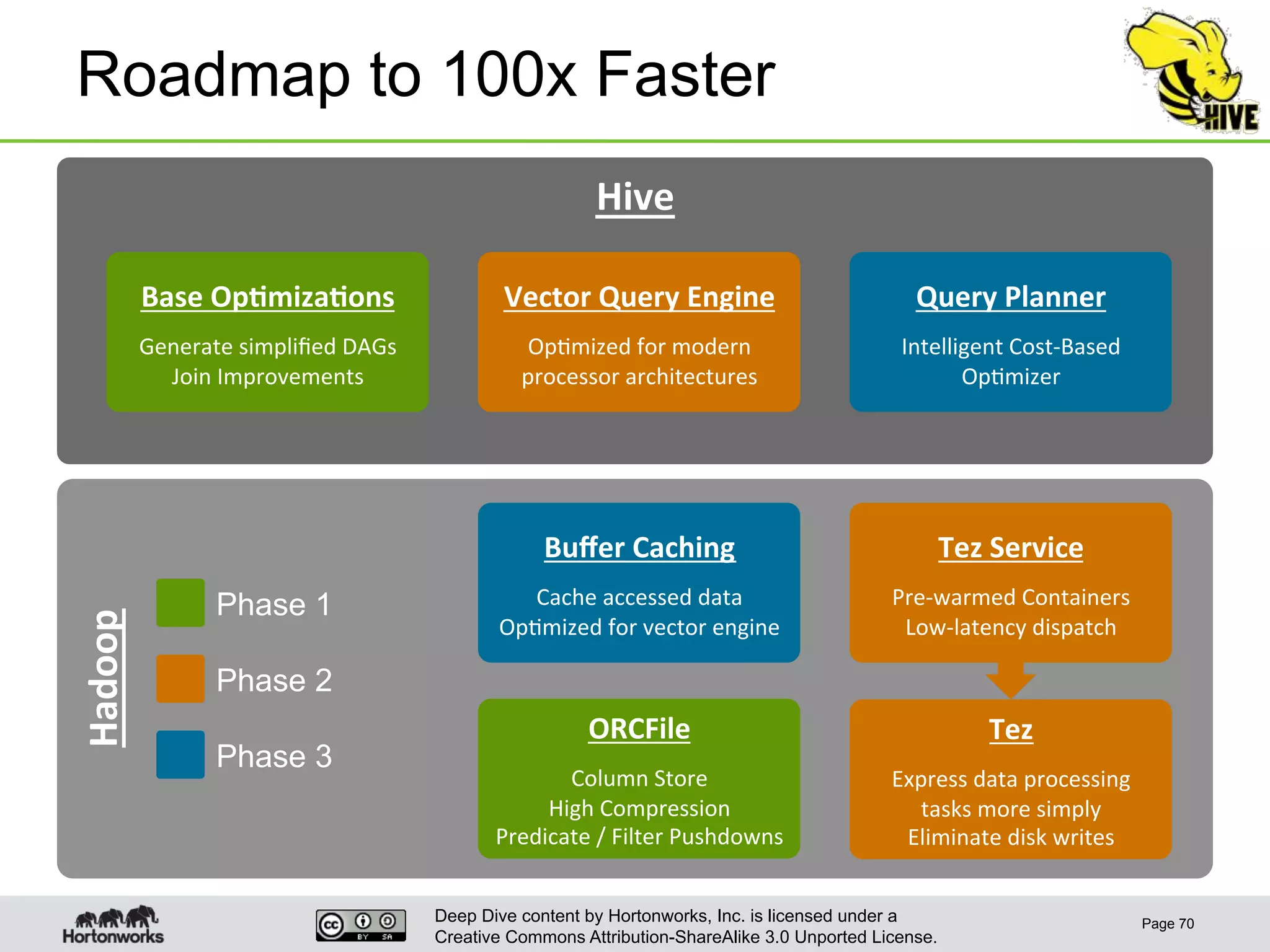

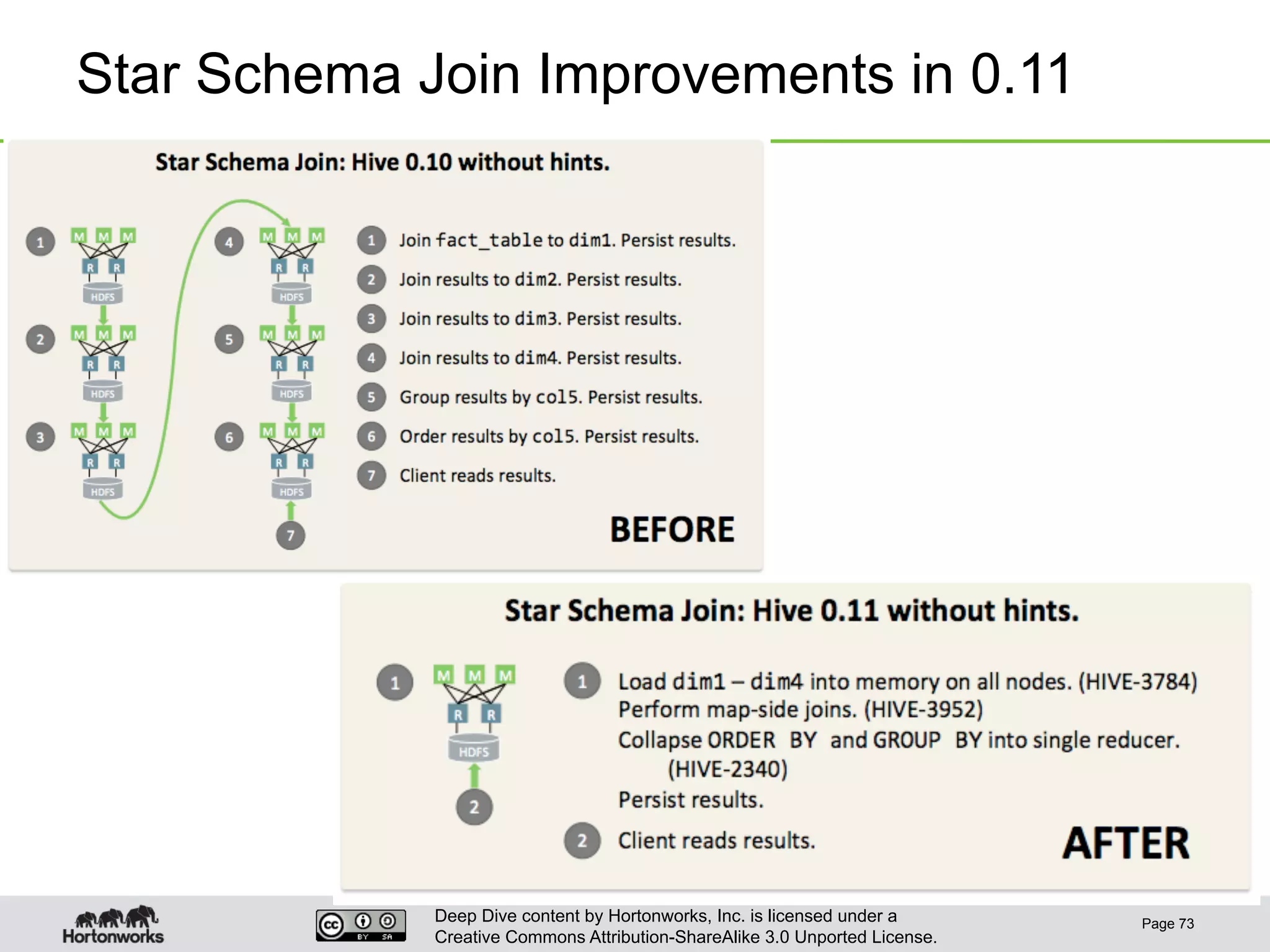

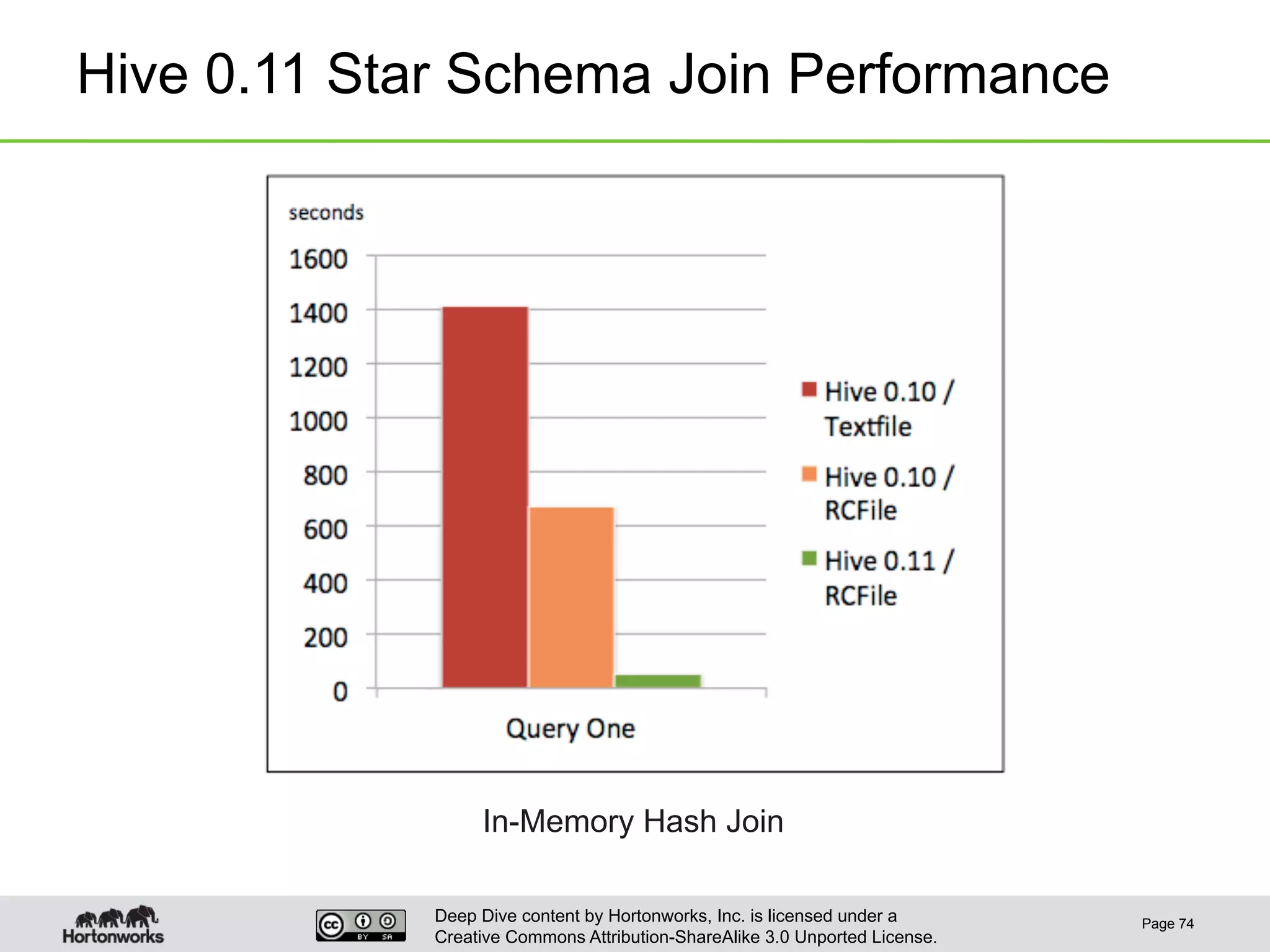

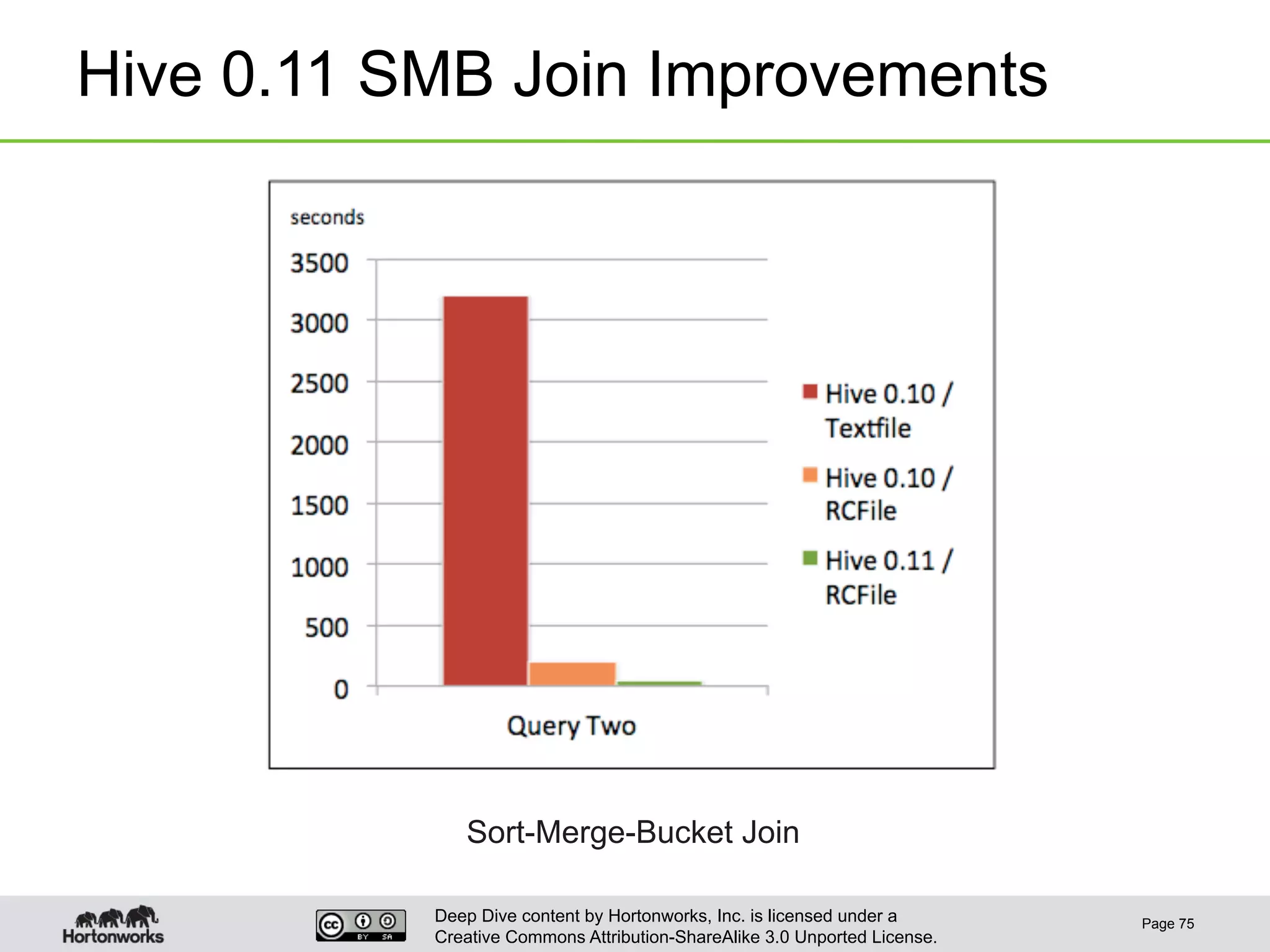

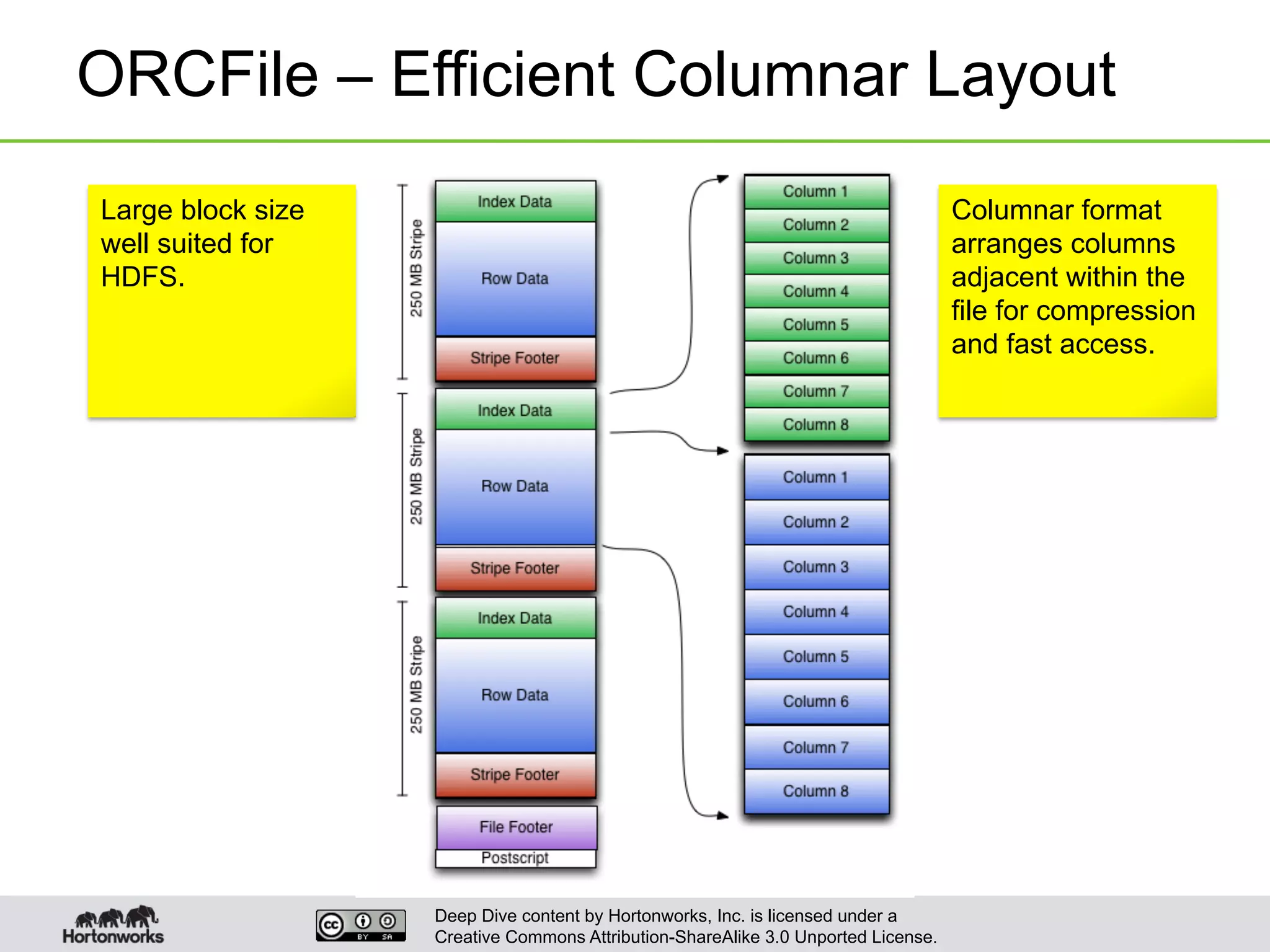

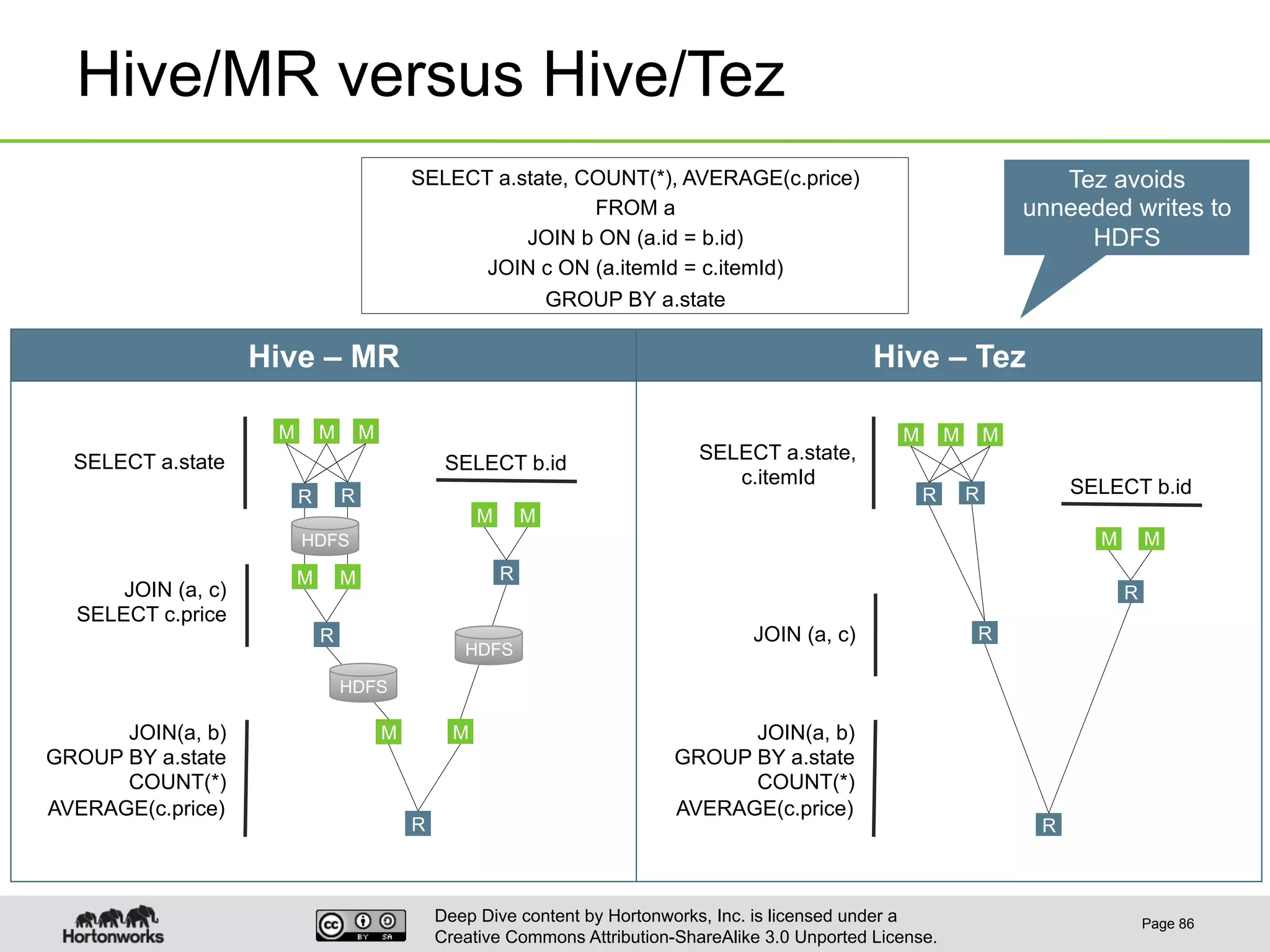

This document gives an overview of Hive and its performance capabilities. It covers Hive's SQL interface for querying large datasets stored in Hadoop, its architecture, which compiles SQL queries into MapReduce jobs, and the SQL features it supports. It also covers techniques for optimizing Hive performance, including data abstractions such as partitions, buckets, and skews. Finally, it describes Hive's join strategies (shuffle joins, broadcast joins, and sort-merge bucket joins) and explains how shuffle joins are implemented in MapReduce.
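To make the shuffle join concrete, here is a minimal in-memory sketch of the general reduce-side join pattern in plain Python. It is not Hive's actual implementation: the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `shuffle_join`), the `"L"`/`"R"` tags, and the sample `customers` and `orders` tables are all hypothetical, chosen only for illustration. The idea is that mappers tag each row with its source table and emit it under the join key, the shuffle groups rows by key, and reducers pair up rows from the two sides.

```python
from collections import defaultdict
from itertools import product


def map_phase(rows, tag, key_index):
    """Emit (join_key, (tag, row)) pairs, tagging each row with its source table."""
    for row in rows:
        yield row[key_index], (tag, row)


def shuffle_phase(pairs):
    """Group mapper output by join key, standing in for the framework's shuffle step."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """For each key, pair every left row with every right row (inner join)."""
    for key in sorted(groups):
        left = [row for tag, row in groups[key] if tag == "L"]
        right = [row for tag, row in groups[key] if tag == "R"]
        for left_row, right_row in product(left, right):
            yield left_row + right_row


def shuffle_join(left_rows, right_rows, left_key=0, right_key=0):
    """Run the three phases in sequence over two in-memory tables."""
    pairs = list(map_phase(left_rows, "L", left_key))
    pairs += list(map_phase(right_rows, "R", right_key))
    return list(reduce_phase(shuffle_phase(pairs)))


# Hypothetical sample data: (customer_id, name) and (customer_id, item).
customers = [(1, "alice"), (2, "bob"), (3, "carol")]
orders = [(1, "book"), (1, "pen"), (3, "lamp"), (4, "desk")]

result = shuffle_join(customers, orders)
for row in result:
    print(row)
```

In this sketch every row of both tables passes through the shuffle, which is why a shuffle join works for inputs of any size but tends to be the most expensive strategy. A broadcast join avoids that cost when one table is small enough to be held in memory by each mapper.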