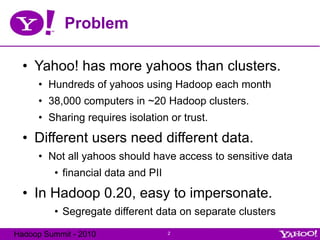

- Yahoo had hundreds of users sharing about 38,000 computers across roughly 20 Hadoop clusters, so it needed to secure those clusters: isolate each user's data and prevent one user from impersonating another.

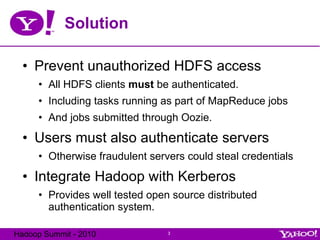

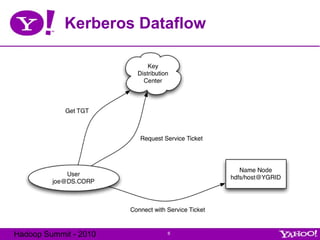

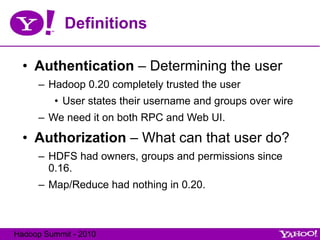

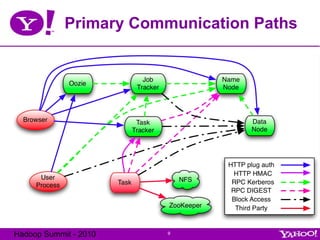

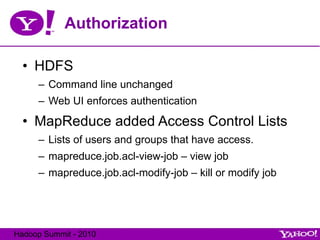

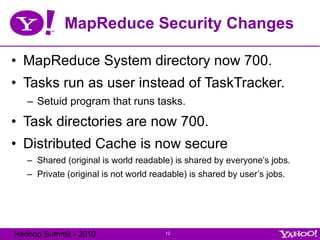

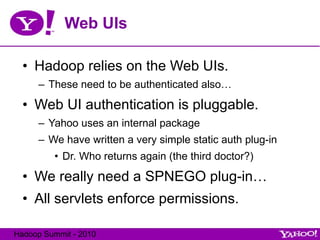

- The solution added Kerberos-based authentication to Hadoop and integrated it with HDFS, MapReduce, and other components. Authorization was added through access control lists.

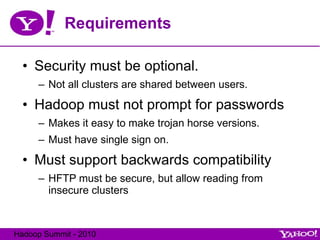

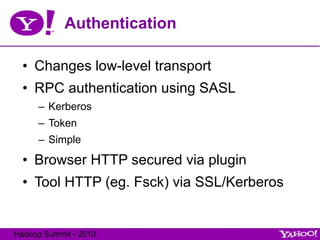

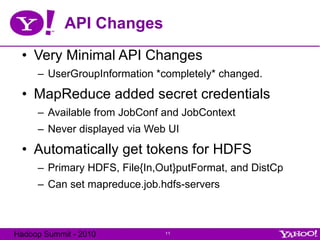

- The changes were designed to be largely transparent to users and backward compatible: a user signs in once with Kerberos and keeps access from then on, while the various communication paths and APIs are secured. This allows clusters to be shared securely while keeping options for non-shared clusters.
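
To make the access-control-list idea from the summary concrete, here is a minimal Python sketch of an ACL check. It is a toy model, not Hadoop's code: the class and method names are hypothetical, and it assumes the user's identity and group membership have already been established by authentication. The string format (comma-separated users, a space, then comma-separated groups, with `*` meaning everyone) follows the convention Hadoop uses for ACL settings, but treat the parsing details here as an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessControlList:
    """Toy ACL: a set of allowed users, a set of allowed groups, or everyone."""

    users: frozenset
    groups: frozenset
    allow_all: bool = False

    @classmethod
    def parse(cls, spec: str) -> "AccessControlList":
        # "*" grants access to every authenticated user.
        if spec.strip() == "*":
            return cls(frozenset(), frozenset(), allow_all=True)
        # Everything before the first space lists users; the rest lists groups.
        # A leading space therefore means "no named users, groups only".
        user_part, _, group_part = spec.partition(" ")
        users = frozenset(u for u in user_part.split(",") if u)
        groups = frozenset(g for g in group_part.strip().split(",") if g)
        return cls(users, groups)

    def is_allowed(self, user: str, user_groups=()) -> bool:
        # Authorization only: the caller is assumed to be authenticated already.
        if self.allow_all:
            return True
        return user in self.users or not self.groups.isdisjoint(user_groups)


acl = AccessControlList.parse("alice,bob analysts")
print(acl.is_allowed("alice"))                  # named user
print(acl.is_allowed("carol", ["analysts"]))    # member of an allowed group
print(acl.is_allowed("mallory", ["interns"]))   # neither
```

The split between the two steps is the point of the sketch: authentication answers "who is this?", and the ACL only answers "is this identity permitted?".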

![Hadoop Security Hadoop Summit 2010 Owen O’Malley [email_address] Yahoo’s Hadoop Team](https://image.slidesharecdn.com/1hadoopsecurityindetailshadoopsummit2010-100630134441-phpapp01/75/Hadoop-Security-in-Detail__HadoopSummit2010-1-2048.jpg)

![Questions? Questions should be sent to: common/hdfs/mapreduce-user@hadoop.apache.org Security holes should be sent to: [email_address] Available from http://developer.yahoo.com/hadoop/distribution/ Also a VM with Hadoop cluster with security Thanks!](https://image.slidesharecdn.com/1hadoopsecurityindetailshadoopsummit2010-100630134441-phpapp01/85/Hadoop-Security-in-Detail__HadoopSummit2010-17-320.jpg)