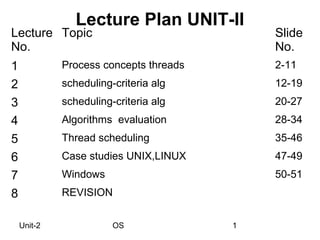

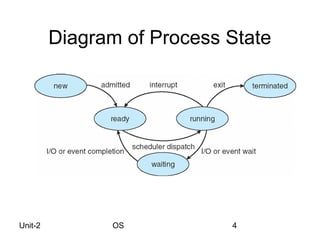

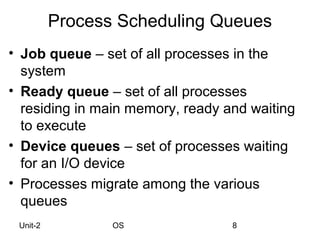

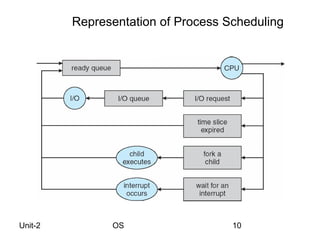

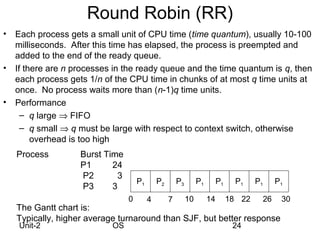

This lecture covers process and thread concepts in operating systems, including scheduling criteria and algorithms. It introduces key process concepts such as process state, the process control block (PCB), and CPU scheduling, and explains the common scheduling algorithms: first-come, first-served (FCFS), shortest-job-first (SJF), priority, and round-robin (RR). It also summarizes process scheduling queues and the producer-consumer problem, and briefly covers methods for evaluating scheduling algorithms: deterministic modeling, queueing models, and simulation.
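As a small illustration of deterministic modeling, the sketch below computes the average waiting time of one fixed workload under FCFS and under non-preemptive SJF. It assumes every process arrives at time 0. The function names (`avg_wait_in_order`, `avg_wait_fcfs`, `avg_wait_sjf`) and the burst times in the usage note are illustrative choices, not taken from the lecture.

```c
#include <stdlib.h>
#include <string.h>

/* Average waiting time when the bursts run back to back in the given order.
 * Assumes every process arrives at time 0 (an assumption of this sketch). */
static double avg_wait_in_order(const int *burst, int n)
{
    long total_wait = 0;   /* sum of the individual waiting times */
    long clock = 0;        /* time at which the next process starts */
    for (int i = 0; i < n; i++) {
        total_wait += clock;   /* process i waits until the CPU is free */
        clock += burst[i];
    }
    return n > 0 ? (double)total_wait / n : 0.0;
}

/* FCFS: run the processes in arrival (array) order. */
double avg_wait_fcfs(const int *burst, int n)
{
    return avg_wait_in_order(burst, n);
}

/* Ascending comparison of two ints for qsort. */
static int cmp_int(const void *a, const void *b)
{
    int x = *(const int *)a, y = *(const int *)b;
    return (x > y) - (x < y);
}

/* Non-preemptive SJF: run the shortest burst first. */
double avg_wait_sjf(const int *burst, int n)
{
    if (n <= 0)
        return 0.0;
    int *sorted = malloc((size_t)n * sizeof *sorted);
    if (sorted == NULL)
        return -1.0;   /* allocation failure */
    memcpy(sorted, burst, (size_t)n * sizeof *sorted);
    qsort(sorted, (size_t)n, sizeof *sorted, cmp_int);
    double avg = avg_wait_in_order(sorted, n);
    free(sorted);
    return avg;
}
```

For hypothetical bursts of 24, 3, and 3 time units, FCFS gives waiting times of 0, 24, and 27 (average 17), while SJF reorders the work to 3, 3, 24 and gives 0, 3, and 6 (average 3).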

![Bounded-Buffer – Shared-Memory Solution
• Shared data
#define BUFFER_SIZE 10
typedef struct {
    ...
} item;
item buffer[BUFFER_SIZE];
int in = 0;
int out = 0;
• Solution is correct, but can only use BUFFER_SIZE-1 elements
Unit-2 OS 13](https://image.slidesharecdn.com/osppt2unit-120825095051-phpapp02/85/OS-Process-and-Thread-Concepts-13-320.jpg)
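The BUFFER_SIZE-1 limit follows from how the empty and full states are told apart using only `in` and `out`. The sketch below spells out both tests. The helper names (`ring_is_empty`, `ring_is_full`, `ring_count`) are hypothetical and do not appear on the slides.

```c
#include <stdbool.h>

#define BUFFER_SIZE 10

/* Empty: the next slot to read is also the next slot to write. */
bool ring_is_empty(int in, int out)
{
    return in == out;
}

/* Full: advancing `in` once more would make it equal to `out`,
 * which would be indistinguishable from the empty state. */
bool ring_is_full(int in, int out)
{
    return (in + 1) % BUFFER_SIZE == out;
}

/* Number of items currently stored, in the range 0 .. BUFFER_SIZE-1. */
int ring_count(int in, int out)
{
    return (in - out + BUFFER_SIZE) % BUFFER_SIZE;
}
```

Because `in == out` is reserved for "empty", one slot always stays unused: with `in = 9` and `out = 0` the buffer already counts as full while holding nine items.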

![Bounded-Buffer – Producer
while (true) {
    /* Produce an item */
    while (((in + 1) % BUFFER_SIZE) == out)
        ; /* do nothing -- no free buffers */
    buffer[in] = item;
    in = (in + 1) % BUFFER_SIZE;
}](https://image.slidesharecdn.com/osppt2unit-120825095051-phpapp02/85/OS-Process-and-Thread-Concepts-14-320.jpg)

![Bounded Buffer – Consumer
while (true) {
    while (in == out)
        ; // do nothing -- nothing to consume
    // remove an item from the buffer
    item = buffer[out];
    out = (out + 1) % BUFFER_SIZE;
}](https://image.slidesharecdn.com/osppt2unit-120825095051-phpapp02/85/OS-Process-and-Thread-Concepts-15-320.jpg)
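The producer and consumer loops can be exercised without threads by turning each busy-wait into a step that reports failure instead of spinning. This is a minimal single-threaded sketch under stated assumptions: the `int value` payload and the names `try_produce` and `try_consume` are hypothetical, and a concurrent version would additionally need synchronization or atomic accesses to `in` and `out`.

```c
#include <stdbool.h>

#define BUFFER_SIZE 10

typedef struct {
    int value;   /* placeholder payload; the slides leave `item` unspecified */
} item;

static item buffer[BUFFER_SIZE];
static int in = 0;    /* next free slot, advanced only by the producer */
static int out = 0;   /* next full slot, advanced only by the consumer */

/* Producer step: returns false instead of spinning when no slot is free. */
bool try_produce(item next_produced)
{
    if ((in + 1) % BUFFER_SIZE == out)
        return false;
    buffer[in] = next_produced;
    in = (in + 1) % BUFFER_SIZE;
    return true;
}

/* Consumer step: returns false instead of spinning when nothing is stored. */
bool try_consume(item *next_consumed)
{
    if (in == out)
        return false;
    *next_consumed = buffer[out];
    out = (out + 1) % BUFFER_SIZE;
    return true;
}
```

Starting from an empty buffer, nine calls to `try_produce` succeed and the tenth fails, and items come back from `try_consume` in the order they were produced.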

![Pthread Scheduling API
#include <pthread.h>
#include <stdio.h>
#define NUM_THREADS 5
void *runner(void *param); /* thread start routine */
int main(int argc, char *argv[])
{
    int i;
    pthread_t tid[NUM_THREADS];
    pthread_attr_t attr;
    /* get the default attributes */
    pthread_attr_init(&attr);
    /* set the contention scope to PROCESS or SYSTEM */
    pthread_attr_setscope(&attr, PTHREAD_SCOPE_SYSTEM);
    /* set the scheduling policy - FIFO, RR, or OTHER */
    pthread_attr_setschedpolicy(&attr, SCHED_OTHER);
    /* create the threads */
    for (i = 0; i < NUM_THREADS; i++)
        pthread_create(&tid[i], &attr, runner, NULL);](https://image.slidesharecdn.com/osppt2unit-120825095051-phpapp02/85/OS-Process-and-Thread-Concepts-36-320.jpg)

![Pthread Scheduling API
    /* now join on each thread */
    for (i = 0; i < NUM_THREADS; i++)
        pthread_join(tid[i], NULL);
}
/* Each thread will begin control in this function */
void *runner(void *param)
{
    printf("I am a thread\n");
    pthread_exit(0);
}](https://image.slidesharecdn.com/osppt2unit-120825095051-phpapp02/85/OS-Process-and-Thread-Concepts-37-320.jpg)
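The slide code ignores the return values of the attribute calls, although a platform may reject a requested contention scope. The sketch below shows one way to check the result and read back the scope actually stored in the attribute object. The helper names `scope_name` and `set_and_get_scope` are hypothetical; the pthread calls are the standard POSIX ones.

```c
#include <pthread.h>
#include <stddef.h>

/* Human-readable name for a contention-scope constant. */
const char *scope_name(int scope)
{
    if (scope == PTHREAD_SCOPE_PROCESS)
        return "PTHREAD_SCOPE_PROCESS";
    if (scope == PTHREAD_SCOPE_SYSTEM)
        return "PTHREAD_SCOPE_SYSTEM";
    return "unknown";
}

/* Request a contention scope, then read back what the attribute object holds.
 * Returns 0 on success or the error number reported by the pthread call. */
int set_and_get_scope(pthread_attr_t *attr, int requested, int *actual)
{
    int err = pthread_attr_setscope(attr, requested);
    if (err != 0)
        return err;   /* e.g. ENOTSUP if the platform lacks this scope */
    return pthread_attr_getscope(attr, actual);
}
```

A caller would initialize `attr` with `pthread_attr_init`, call `set_and_get_scope(&attr, PTHREAD_SCOPE_SYSTEM, &scope)`, and create threads only if it returns 0. On Linux, the manual page documents that only `PTHREAD_SCOPE_SYSTEM` is supported, so requesting `PTHREAD_SCOPE_PROCESS` is expected to fail there.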