BIAS AND CHANCE AND CONFOUNDING

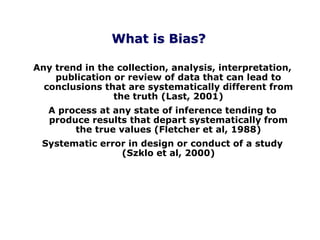

- 1. What is Bias? Any trend in the collection, analysis, interpretation, publication or review of data that can lead to conclusions that are systematically different from the truth (Last, 2001). A process at any stage of inference tending to produce results that depart systematically from the true values (Fletcher et al, 1988). Systematic error in design or conduct of a study (Szklo et al, 2000).

- 2. Bias is systematic error. Errors can be differential (systematic) or non-differential (random). Random error: use of an invalid outcome measure that equally misclassifies cases and controls. Differential error: use of an invalid measure that misclassifies cases in one direction and controls in another. The term 'bias' should be reserved for differential or systematic error.

- 3. Chance vs Bias. Chance is caused by random error; bias is caused by systematic error. Errors from chance will cancel each other out in the long run (large sample size); errors from bias will not cancel each other out whatever the sample size. Chance leads to imprecise results; bias leads to inaccurate results.
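The contrast between chance and bias can be illustrated with a small simulation. This is a minimal sketch, assuming a hypothetical true value of 100, Gaussian measurement noise with a standard deviation of 10, and a made-up systematic offset of +5. None of these numbers come from the slides.

```python
import random
import statistics

TRUE_VALUE = 100.0  # hypothetical true quantity being measured
NOISE_SD = 10.0     # assumed size of the random (chance) error
OFFSET = 5.0        # assumed size of the systematic error (bias)


def mean_error(n, offset, seed=0):
    """Return the mean of n noisy measurements minus the true value."""
    rng = random.Random(seed)
    values = [TRUE_VALUE + offset + rng.gauss(0.0, NOISE_SD) for _ in range(n)]
    return statistics.fmean(values) - TRUE_VALUE


for n in (10, 1_000, 100_000):
    unbiased = mean_error(n, 0.0)
    biased = mean_error(n, OFFSET)
    print(f"n={n:>6}  chance only: {unbiased:+.3f}  chance + bias: {biased:+.3f}")
```

As the sample grows, the chance-only error shrinks towards zero, while the error of the biased measurement settles near the assumed offset rather than disappearing.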

- 4. Types of Bias. Selection bias: unrepresentative nature of sample. Information (misclassification) bias: errors in measurement of exposure or disease. Confounding bias: distortion of the exposure-disease relation by some other factor. Types of bias are not mutually exclusive (effect modification is not bias). This classification is by Miettinen OS in the 1970s; see for example Miettinen & Cook, 1981.

- 5. Selection Bias. Selective differences between comparison groups that impact on the relationship between exposure and outcome. Usually results from comparison groups not coming from the same study base and not being representative of the populations they come from.

- 10. Selection Bias Examples. Selective survival (Neyman's) bias.

- 11. Selection Bias Examples. Case-control study: controls have less potential for exposure than cases. Outcome = brain tumour; exposure = overhead high voltage power lines. Cases chosen from a province-wide cancer registry; controls chosen from rural areas. Systematic differences between cases and controls.

- 12. Case-Control Studies: Potential Bias (Schulz & Grimes, 2002).

- 13. Selection Bias Examples. Cohort study: differential loss to follow-up. Especially problematic in cohort studies. Subjects in a follow-up study of multiple sclerosis may differentially drop out due to disease severity (differential attrition selection bias).

- 14. Selection Bias Examples. Self-selection bias: you want to determine the prevalence of HIV infection; you ask for volunteers for testing; you find no HIV. Is it correct to conclude that there is no HIV in this location?

- 15. Selection Bias Examples. Healthy worker effect: another form of self-selection bias. A “self-screening” process: people who are unhealthy “screen” themselves out of the active worker population. Example: course of recovery from low back injuries in 25-45 year olds; data captured on workers’ compensation records; but prior to identifying subjects for study, self-selection has already taken place.

- 16. Selection Bias Examples. Diagnostic or workup bias: also occurs before subjects are identified for study. Diagnoses (case selection) may be influenced by the physician’s knowledge of exposure. Example: a case-control study in which the outcome is pulmonary disease and the exposure is smoking; a radiologist aware of the patient’s smoking status when reading the x-ray may look more carefully for abnormalities and differentially select cases. Legitimate for clinical decisions, inconvenient for research.

- 17. Types of Bias. Selection bias: unrepresentative nature of sample. **Information (misclassification) bias**: errors in measurement of exposure or disease. Confounding bias: distortion of the exposure-disease relation by some other factor. Types of bias are not mutually exclusive (effect modification is not bias).

- 18. Information / Measurement / Misclassification Bias. The method of gathering information is inappropriate and yields systematic errors in measurement of exposures or outcomes. If misclassification of exposure (or disease) is unrelated to disease (or exposure), the misclassification is non-differential. If misclassification of exposure (or disease) is related to disease (or exposure), the misclassification is differential. Misclassification distorts the true strength of association.
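The difference between non-differential and differential misclassification can be shown with expected counts in a 2x2 table. This is a minimal sketch with hypothetical counts and hypothetical sensitivity/specificity values for exposure measurement; the helper names `odds_ratio`, `misclassify` and `observed_or` are illustrative and not taken from the slides.

```python
def odds_ratio(a, b, c, d):
    """Odds ratio: a, b = exposed/unexposed cases; c, d = exposed/unexposed controls."""
    return (a * d) / (b * c)


def misclassify(exposed, unexposed, sensitivity, specificity):
    """Expected observed (exposed, unexposed) counts under imperfect exposure measurement."""
    observed_exposed = exposed * sensitivity + unexposed * (1 - specificity)
    observed_unexposed = exposed * (1 - sensitivity) + unexposed * specificity
    return observed_exposed, observed_unexposed


# Hypothetical true exposure status (exposed, unexposed); true OR = 4.
TRUE_CASES = (200, 100)
TRUE_CONTROLS = (100, 200)


def observed_or(case_sensitivity, control_sensitivity, specificity=0.9):
    """Odds ratio computed from the misclassified tables."""
    a, b = misclassify(*TRUE_CASES, case_sensitivity, specificity)
    c, d = misclassify(*TRUE_CONTROLS, control_sensitivity, specificity)
    return odds_ratio(a, b, c, d)


true_or = odds_ratio(*TRUE_CASES, *TRUE_CONTROLS)
nondifferential_or = observed_or(0.8, 0.8)   # same error rates in cases and controls
differential_or = observed_or(0.95, 0.7)     # cases recall exposure better than controls

print(f"true OR:             {true_or:.2f}")
print(f"non-differential OR: {nondifferential_or:.2f}")
print(f"differential OR:     {differential_or:.2f}")
```

With these assumed values, equal error rates in both groups pull the odds ratio towards 1, while better recall among cases pushes it above the true value. In general, differential misclassification can distort the estimate in either direction.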

- 19. Information / Measurement / Misclassification Bias. Recall bias: those exposed have a greater sensitivity for recalling exposure (reduced specificity). Especially important in case-control studies: when exposure history is obtained retrospectively, cases may more closely scrutinize their past history looking for ways to explain their illness, while controls, not feeling a burden of disease, may examine their past history less closely. Example: those who develop a cold are more likely to identify the exposure than those who do not (differential misclassification). Case: “Yes, I was sneezed on.” Control: “No, can’t remember any sneezing.”

- 20. Information / Measurement / Misclassification Bias. Reporting bias: individuals with severe disease tend to have complete records, and therefore more complete information about exposures, so a greater association is found. Individuals who are aware of being participants in a study behave differently (Hawthorne effect).

- 21. Controlling for Information Bias. Blinding: prevents investigators and interviewers from knowing the case/control or exposed/non-exposed status of a given participant. Form of survey: mail may impose less “white coat tension” than a phone or face-to-face interview. Questionnaire: use multiple questions that ask for the same information, which acts as a built-in double-check. Accuracy: multiple checks in medical records; gathering diagnosis data from multiple sources.

- 22. Types of Bias. Selection bias: unrepresentative nature of sample. Information (misclassification) bias: errors in measurement of exposure or disease. **Confounding bias**: distortion of the exposure-disease relation by some other factor. Types of bias are not mutually exclusive (effect modification is not bias).


- 24. Confounding. A third factor which is related to both exposure and outcome, and which accounts for some or all of the observed relationship between the two. The confounder is not a result of the exposure. E.g., association between child’s birth rank (exposure) and Down syndrome (outcome): is mother’s age a confounder? E.g., association between mother’s age (exposure) and Down syndrome (outcome): is birth rank a confounder?

- 25. Confounding. Imagine you try to repeat, in another sample, a positive finding of a birth order association in Down syndrome or of an association of coffee drinking with CHD. Would you be able to replicate it? If not, why? Imagine you have included only non-smokers in a study and examined the association of alcohol with lung cancer. Would you find an association? Imagine you have stratified your dataset for smoking status in the alcohol-lung cancer association study. Would the odds ratios differ in the two strata? Imagine you have tried to adjust your alcohol association for smoking status (in a statistical model). Would you see an association?

- 26. Confounding. Imagine you try to repeat, in another sample, a positive finding of a birth order association in Down syndrome or of an association of coffee drinking with CHD. Would you be able to replicate it? If not, why? You would not necessarily be able to replicate the original finding, because it was a spurious association due to confounding. In another sample where all mothers are below 30 years of age, there would be no association with birth order. In another sample in which there are few smokers, the coffee association with CHD would not be replicated.

- 27. Confounding. Imagine you have included only non-smokers in a study and examined the association of alcohol with lung cancer. Would you find an association? No, because the first study was confounded: the association with alcohol was actually due to smoking. By restricting the study to non-smokers, we have found the truth. Restriction is one way of preventing confounding at the time of study design.

- 28. Confounding. Imagine you have stratified your dataset for smoking status in the alcohol-lung cancer association study. Would the odds ratios differ in the two strata? The alcohol association would yield a similar odds ratio in both strata, and it would be close to unity. In confounding, the stratum-specific odds ratios should be similar to each other and differ from the crude odds ratio by at least 15%. Stratification is one way of identifying confounding at the time of analysis. If the stratum-specific odds ratios differ from each other, then this is not confounding but effect modification.
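The stratification check described on this slide can be sketched with made-up numbers. The counts below are hypothetical and chosen so that alcohol has no association with lung cancer within either smoking stratum while the crude table suggests a strong one; the 15% threshold is the one quoted on the slide.

```python
def odds_ratio(a, b, c, d):
    """Odds ratio: a, b = exposed/unexposed cases; c, d = exposed/unexposed controls."""
    return (a * d) / (b * c)


# Hypothetical counts per stratum:
# (drinker cases, non-drinker cases, drinker controls, non-drinker controls)
strata = {
    "smokers": (80, 20, 40, 10),
    "non-smokers": (10, 40, 40, 160),
}

# The crude table simply adds the strata together cell by cell.
crude_table = tuple(sum(cells) for cells in zip(*strata.values()))
crude_or = odds_ratio(*crude_table)
stratum_ors = {name: odds_ratio(*table) for name, table in strata.items()}

print(f"crude OR: {crude_or:.2f}")
for name, value in stratum_ors.items():
    change = abs(crude_or - value) / value
    print(f"{name}: OR = {value:.2f}, crude differs by {change:.0%}")

# Similar stratum-specific ORs that differ from the crude OR suggest confounding.
suggests_confounding = all(
    abs(crude_or - value) / value >= 0.15 for value in stratum_ors.values()
)
```

Here both stratum-specific odds ratios equal 1 while the crude odds ratio is above 3, which is the pattern the slide attributes to confounding by smoking.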

- 29. Confounding. Imagine you have tried to adjust your alcohol association for smoking status (in a statistical model). Would you see an association? If smoking is included in the statistical model, the alcohol association would lose its statistical significance. Adjustment by multivariable modelling is another method to identify confounders at the time of data analysis.

- 30. Confounding. For confounding to occur, the confounders should be differentially represented in the comparison groups. Randomisation is an attempt to evenly distribute potential (unknown) confounders in study groups. It does not guarantee control of confounding. Matching is another way of achieving the same. It ensures equal representation of subjects with known confounders in study groups. It has to be coupled with matched analysis. Restriction for potential confounders in design also prevents confounding but causes loss of statistical power (instead, stratified analysis may be tried).

- 31. Confounding. Randomisation, matching and restriction can be tried at the time of designing a study to reduce the risk of confounding. At the time of analysis, stratification and multivariable (adjusted) analysis can achieve the same. It is preferable to try something at the time of designing the study.

- 32. Effect of randomisation on outcome of trials in acute pain (Bandolier Bias Guide).

- 33. Confounding. If each case is matched with a same-age control, there will be no association (OR for old age = 2.6, P = 0.0001).

- 35. Cases of Down Syndrome by Birth Order and Maternal Age (EPIET). [Figure: cases per 100,000 (axis 0 to 1000) by birth order (1 to 5) and maternal age group (< 20, 20-24, 25-29, 30-34, 35-39, 40+).] If each case is matched with a same-age control, there will be no association. If analysis is repeated after stratification by age, there will be no association with birth order.

- 36. Confounding or Effect Modification. [Diagram: birth weight, leukaemia, sex.] Can sex be responsible for the birth weight association in leukaemia? Is it correlated with birth weight? Is it correlated with leukaemia independently of birth weight? Is it on the causal pathway? Can it be associated with leukaemia even if birth weight is low? Is sex distribution uneven in comparison groups?

- 37. Confounding or Effect Modification. Does the birth weight association differ in strength according to sex? [Diagram: birth weight and leukaemia, shown overall and separately for boys and girls; odds ratios shown: OR = 1.8, OR = 0.9, OR = 1.5.]

- 38. Effect Modification. In an association study, if the strength of the association varies over different categories of a third variable, this is called effect modification. The third variable is changing the effect of the exposure. The effect modifier may be sex, age, an environmental exposure or a genetic effect. Effect modification is similar to interaction in statistics. There is no adjustment for effect modification. Once it is detected, stratified analysis can be used to obtain stratum-specific odds ratios.

- 39. Effect modifier: belongs to nature; different effects in different strata; simple; useful; increases knowledge of biological mechanism; allows targeting of public health action. Confounding factor: belongs to study; adjusted OR/RR different from crude OR/RR; distortion of effect; creates confusion in data; prevent (design); control (analysis).

- 40. HOW TO CONTROL FOR CONFOUNDERS? IN STUDY DESIGN: RESTRICTION of subjects according to potential confounders (i.e. simply exclude subjects with the confounder from the study); RANDOM ALLOCATION of subjects to study groups to attempt to even out unknown confounders; MATCHING subjects on the potential confounder, thus assuring even distribution among study groups.

- 41. HOW TO CONTROL FOR CONFOUNDERS? IN DATA ANALYSIS: STRATIFIED ANALYSIS using the Mantel-Haenszel method to adjust for confounders; IMPLEMENT A MATCHED DESIGN after you have collected data (frequency or group); RESTRICTION is still possible at the analysis stage, but it means throwing away data; MODEL FITTING using regression techniques.
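The Mantel-Haenszel method named on this slide pools the stratum tables into a single adjusted odds ratio. This is a minimal sketch of the standard Mantel-Haenszel odds ratio estimator, applied to hypothetical alcohol and lung cancer counts stratified by smoking; the data and the function name `mantel_haenszel_or` are illustrative only.

```python
def odds_ratio(a, b, c, d):
    """Odds ratio: a, b = exposed/unexposed cases; c, d = exposed/unexposed controls."""
    return (a * d) / (b * c)


def mantel_haenszel_or(tables):
    """Mantel-Haenszel pooled OR: sum(a*d/n) / sum(b*c/n) over the strata."""
    numerator = sum(a * d / (a + b + c + d) for a, b, c, d in tables)
    denominator = sum(b * c / (a + b + c + d) for a, b, c, d in tables)
    return numerator / denominator


# Hypothetical counts per smoking stratum:
# (drinker cases, non-drinker cases, drinker controls, non-drinker controls)
tables = [
    (80, 20, 38, 10),   # smokers
    (10, 40, 42, 160),  # non-smokers
]

# Crude table: strata collapsed cell by cell, ignoring smoking.
crude_or = odds_ratio(*(sum(cells) for cells in zip(*tables)))
mh_or = mantel_haenszel_or(tables)

print(f"crude OR:             {crude_or:.2f}")
print(f"Mantel-Haenszel OR:   {mh_or:.2f}")
```

With these made-up counts the crude odds ratio is above 3, while the smoking-adjusted Mantel-Haenszel estimate is close to 1. A full analysis would also report a confidence interval and check that the stratum-specific odds ratios are homogeneous before pooling.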

- 42. WILL ROGERS' PHENOMENON. Assume that you are tabulating survival for patients with a certain type of tumour. You separately track survival of patients whose cancer has metastasized and survival of patients whose cancer remains localized. As you would expect, average survival is longer for the patients without metastases. Now a fancier scanner becomes available, making it possible to detect metastases earlier. What happens to the survival of patients in the two groups? The group of patients without metastases is now smaller. The patients who are removed from the group are those with small metastases that could not have been detected without the new technology. These patients tend to die sooner than the patients without detectable metastases. By taking away these patients, the average survival of the patients remaining in the "no metastases" group will improve. What about the other group? The group of patients with metastases is now larger. The additional patients, however, are those with small metastases. These patients tend to live longer than patients with larger metastases. Thus the average survival of all patients in the "with metastases" group will improve. Changing the diagnostic method paradoxically increased the average survival of both groups! This paradox is called the Will Rogers phenomenon after a quote from the humorist Will Rogers ("When the Okies left Oklahoma and moved to California, they raised the average intelligence in both states"). See also Festenstein, 1985.
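The stage-migration arithmetic behind the Will Rogers phenomenon can be checked with a toy example. This is a minimal sketch with invented survival times in months; the patient values and group sizes are assumptions for illustration only.

```python
from statistics import mean

# Hypothetical survival times (months) under the old scanner.
no_metastases_old = [60, 58, 55, 30, 28]  # last two have small, undetected metastases
metastases_old = [12, 10, 8]

# The new scanner detects the small metastases, so those patients change group.
migrated = [30, 28]
no_metastases_new = [t for t in no_metastases_old if t not in migrated]
metastases_new = metastases_old + migrated

print("no metastases:  ", mean(no_metastases_old), "->", round(mean(no_metastases_new), 1))
print("with metastases:", mean(metastases_old), "->", mean(metastases_new))

# No individual patient lives longer; only the group labels changed.
overall_old = mean(no_metastases_old + metastases_old)
overall_new = mean(no_metastases_new + metastases_new)
```

Average survival rises in both groups, yet the overall average across all patients is unchanged, because reclassification moved patients between groups without altering anyone's outcome.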

- 43. Cause-and-Effect Relationship (Grimes & Schulz, 2002).