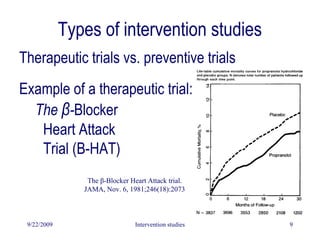

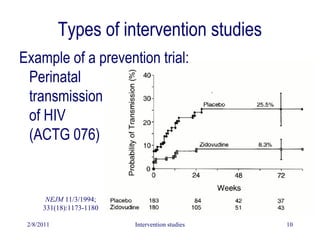

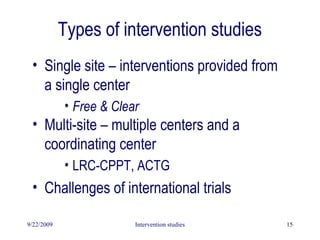

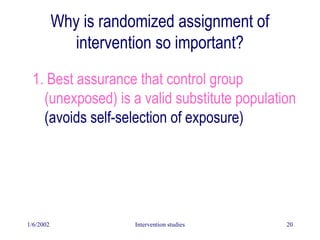

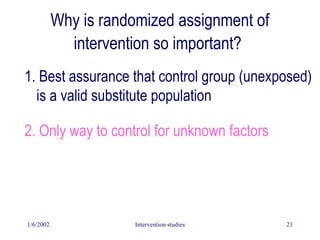

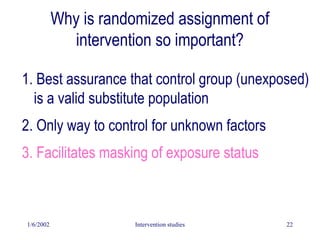

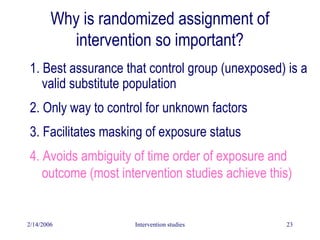

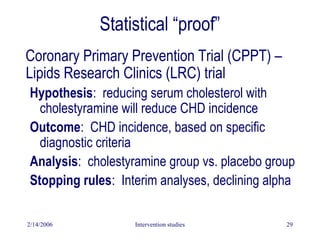

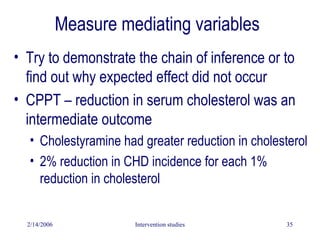

This document discusses intervention studies and randomized controlled trials. It begins by defining causality using the counterfactual model and comparing exposed and unexposed groups. It then describes the importance of randomization in intervention studies, noting that randomization helps ensure the unexposed group is a valid control, controls for unknown confounders, facilitates blinding, and provides a foundation for statistical tests. The document also covers types of intervention studies, issues such as compliance, analysis approaches such as intention-to-treat, and ethical considerations.
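As a rough illustration of two ideas named in the summary, randomization and intention-to-treat analysis, the sketch below assigns participants to arms at random and then compares outcome risk by assigned arm (intention-to-treat) versus by the arm actually received. The function names (`randomize`, `risk`, `risk_difference`), the record fields, and the eight-person dataset are all hypothetical and invented for this example; none of them come from the slides.

```python
import random


def randomize(participant_ids, seed=0):
    """Simple randomization: each participant is independently assigned to an arm."""
    rng = random.Random(seed)  # seeded so the allocation is reproducible
    return {pid: rng.choice(["treatment", "control"]) for pid in participant_ids}


def risk(records, arm, key):
    """Proportion of participants with the outcome among those whose `key` equals `arm`."""
    group = [r for r in records if r[key] == arm]
    return sum(r["outcome"] for r in group) / len(group)


def risk_difference(records, key):
    """Risk in the treatment arm minus risk in the control arm, grouping by `key`."""
    return risk(records, "treatment", key) - risk(records, "control", key)


# Hypothetical trial data (illustrative only). One participant assigned to
# treatment did not comply and effectively received the control condition.
records = [
    {"assigned": "treatment", "received": "treatment", "outcome": 0},
    {"assigned": "treatment", "received": "treatment", "outcome": 0},
    {"assigned": "treatment", "received": "treatment", "outcome": 1},
    {"assigned": "treatment", "received": "control", "outcome": 1},
    {"assigned": "control", "received": "control", "outcome": 1},
    {"assigned": "control", "received": "control", "outcome": 1},
    {"assigned": "control", "received": "control", "outcome": 0},
    {"assigned": "control", "received": "control", "outcome": 1},
]

# Intention-to-treat: analyze by the arm assigned at randomization.
itt_rd = risk_difference(records, "assigned")

# As-treated: analyze by the arm actually received (no longer protected by randomization).
as_treated_rd = risk_difference(records, "received")

if __name__ == "__main__":
    print("Allocation:", randomize(range(1, 9), seed=42))
    print("ITT risk difference:", itt_rd)
    print("As-treated risk difference:", as_treated_rd)
```

In this made-up dataset the two analyses give different estimates because of the single non-compliant participant. Grouping by assignment keeps the comparison that randomization created, which is the usual argument for intention-to-treat.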