This document summarizes the key points from a workshop on project evaluations. The workshop covered:

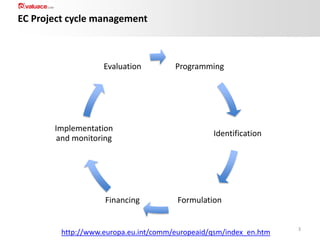

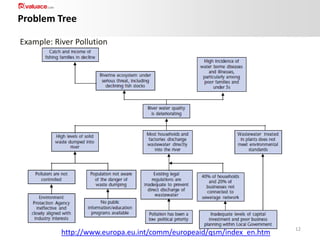

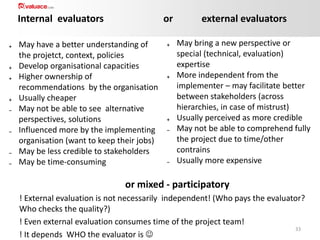

1) An introduction to project evaluations and the project cycle.

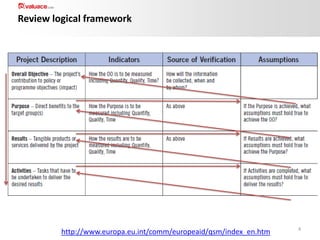

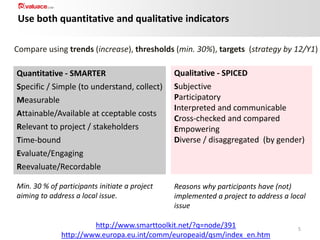

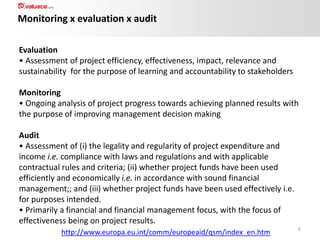

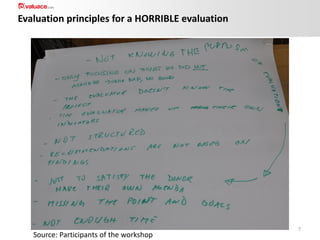

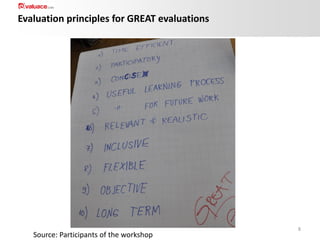

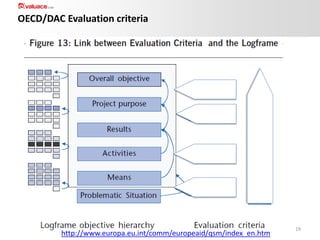

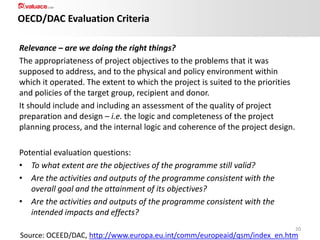

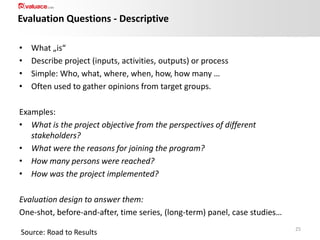

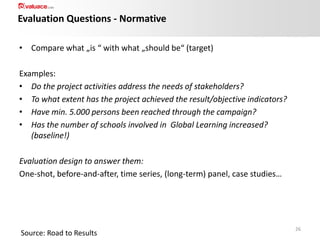

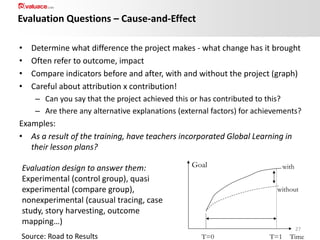

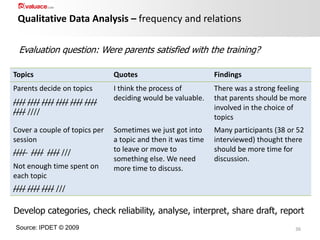

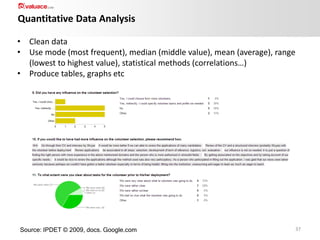

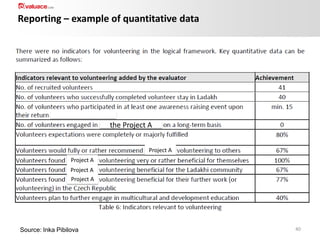

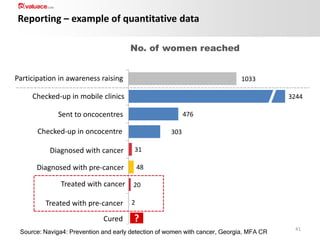

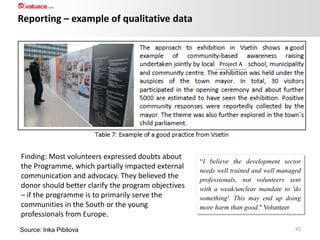

2) Discussion of evaluation criteria such as relevance, effectiveness, efficiency, impact, and sustainability. The session also covered quantitative and qualitative indicators.

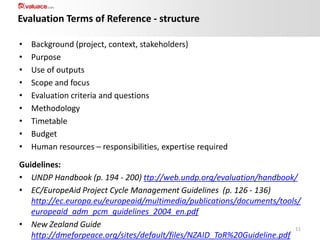

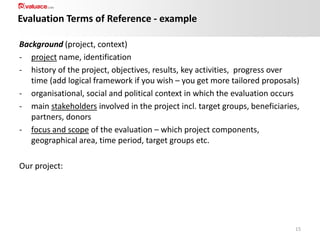

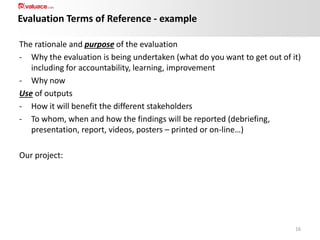

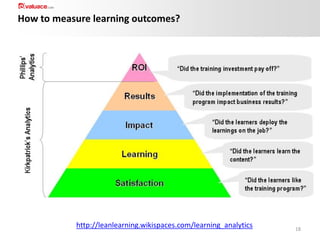

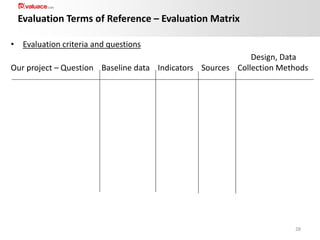

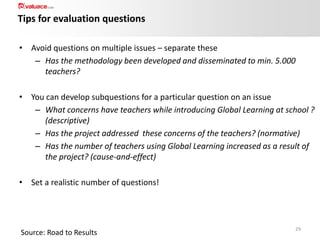

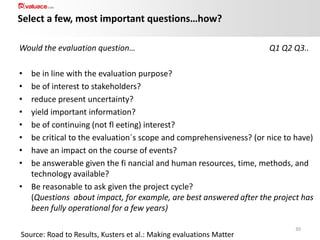

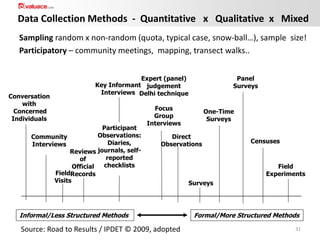

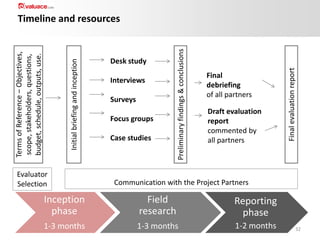

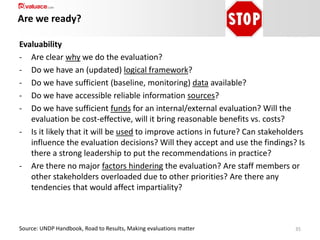

3) Methods for collecting data, developing evaluation questions, and analyzing qualitative data. The session also provided key points for drafting terms of reference (ToR) for evaluations.